l88bastard

Yea I want to know too if this thing is worth it? Like no one has one in here?

Per RentaZon, mine's not getting delivered until the 20th... which is forever away :-(

HDR is about the small highlights, not full screen brightness. We're talking about the sun in a first-person game having the same kind of intensity as in the real world being the target in HDR content. If HDR was mastered correctly in the media you're viewing then any text should not be presented any brighter than it would normally be in SDR.

[HDR] Star Wars: The Rise of Skywalker 4K Blu-ray HDR Analysis - HDTVTest

This is being posted in relation to the C9, E9 and CX OLEDs and HDR display tech in general, not trying to continue arguments about any AW55 value, limitations, timeliness. It is what it is.

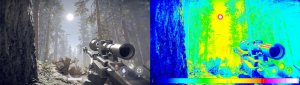

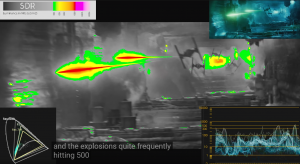

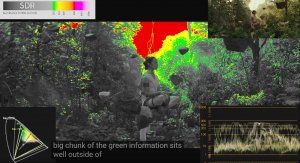

This HDR temperature mapping is pretty cool to watch. The scene content is broken down as follows:

under 100 nits = grayscale

100 nits+ = pure green

200 nits = yellow

400 nits = orange

800 nits = red

1,600 nits = pink

4,000 nits+ = pure white
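For anyone who wants to play with the legend, here is a rough Python sketch of it as a lookup. It assumes each listed value is the lower bound of a color band (the real overlay probably blends between colors), and the names `THRESHOLDS`, `COLORS` and `heatmap_band` are made up for illustration.

```python
from bisect import bisect_right

# Lower bounds (in nits) of each band after grayscale, taken from the legend.
# Assumption: each value starts a band; the real overlay may blend smoothly.
THRESHOLDS = [100, 200, 400, 800, 1600, 4000]
COLORS = ["grayscale", "green", "yellow", "orange", "red", "pink", "white"]


def heatmap_band(nits: float) -> str:
    """Return the legend color for a pixel luminance given in nits."""
    # bisect_right counts how many thresholds are <= nits, which is the band index.
    return COLORS[bisect_right(THRESHOLDS, nits)]


if __name__ == "__main__":
    for sample in (50, 150, 1000, 10000):
        print(sample, "nits ->", heatmap_band(sample))
```

So a 1,000 nit glint lands in the red band and the 10,000 nit sun is pure white.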

Yes, movies are mastered at 10,000 nits, but UHD discs are mostly mastered at HDR 1000, with some at HDR 4000 and only a few at HDR 10,000 so far, since there isn't any consumer hardware capable of showing it yet.

I'd think mapping HDR 10,000 to 1,000 would be easier. Mapping 10,000 or 1,000 to 750 - 800 nit sounds like it would be trickier. I'll have to read up on that more.

Going from 150 - 200 - 300 nit of color highlights, detail in color in bright areas, and from colored light sources in scenes to 500, 600, 700, and 800 nit on an OLED would be well worth it to me.

HDR is attempting to show more realism in scenes. We are still nowhere near reality at 10,000nit HDR but it is much more realistic than SDR. The Dolby public test I quoted was using 20,000nits.

https://www.theverge.com/2014/1/6/5276934/dolby-vision-the-future-of-tv-is-really-really-bright

"The problem is that the human eye is used to seeing a much wider range in real life. The sun at noon is about 1.6 billion nits, for example, while starlight comes in at a mere .0001 nits; the highlights of sun reflecting off a car can be hundreds of times brighter than the vehicle’s hood. The human eye can see it all, but when using contemporary technology that same range of brightness can’t be accurately reproduced. You can have rich details in the blacks or the highlights, but not both.

So Dolby basically threw current reference standards away. "Our scientists and engineers said, ‘Okay, what if we don’t have the shackles of technology that’s not going to be here [in the future],’" Griffis says, "and we could design for the real target — which is the human eye?" To start the process, the company took a theatrical digital cinema projector and focused the entire image down onto a 21-inch LCD panel, turning it into a jury-rigged display made up of 20,000 nit pixels. Subjects were shown a series of pictures with highlights like the sun, and then given the option to toggle between varying levels of brightness. Dolby found that users wanted those highlights to be many hundreds of times brighter than what normal TVs can offer: the more like real life, the better."

------------------------------------

HDR Color temperature map based on HDR 10,000 in a game.

You can see that the brighter parts of the scene are mostly 100nit, 150nit until you get to the sun (10,000nit), the sun's corona and the glint of the sun off of the rifle's scope and barrel which are in the 1,000nit range and a little higher on the brightest part of the scope's glint to 4000nit. I'd expect everything above the SDR range in the scene would be tone mapped down in relation to the peak colors (peak color brightnesses or luminances) the OLED or FALD LCD are capable of. That is, rather than scaling the whole scene down in direct ratio and making the SDR range darker which would look horrible.
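To make the difference between the two approaches concrete, here is a toy Python sketch. It is not how LG or any TV actually tone maps; it just assumes a 100 nit knee (the SDR range), an 800 nit display peak, and a simple exponential roll-off for everything above the knee. All names and numbers are my own assumptions.

```python
import math

SDR_KNEE_NITS = 100.0       # assumed top of the SDR range, left untouched
DISPLAY_PEAK_NITS = 800.0   # assumed OLED peak for small highlights
CONTENT_PEAK_NITS = 10000.0 # HDR 10,000 content


def tone_map(nits: float) -> float:
    """Keep the SDR range 1:1 and roll highlights off toward the display peak."""
    if nits <= SDR_KNEE_NITS:
        return nits
    headroom = DISPLAY_PEAK_NITS - SDR_KNEE_NITS
    # Exponential roll-off: slope 1 at the knee, approaching the peak asymptotically.
    return SDR_KNEE_NITS + headroom * (1.0 - math.exp(-(nits - SDR_KNEE_NITS) / headroom))


def scale_linear(nits: float) -> float:
    """The 'horrible' alternative: scale the whole scene down in direct ratio."""
    return nits * DISPLAY_PEAK_NITS / CONTENT_PEAK_NITS


if __name__ == "__main__":
    for sample in (100, 150, 1000, 4000, 10000):
        print(f"{sample:>6} nits -> rolled off {tone_map(sample):7.1f}, "
              f"linear {scale_linear(sample):7.1f}")
```

With straight scaling, a 100 nit SDR-level pixel drops to 8 nits, which is why the whole scene would look far too dark. With the roll-off, everything up to 100 nits stays put and only the sun, corona and glints get squeezed under the 800 nit ceiling.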

--------------------------------------

"Different" would be a more apt description. BFI will improve motion clarity, G-Sync will help with smoothness, tearing and input lag. I would recommend BFI for fast paced shooters where you can maintain high fps at all times and G-Sync for anything else.

My Club3D adapter is scheduled for delivery this Friday but my TV is nowhere to be seen. Did anyone order from Best Buy recently and get an ETA that's soon?

When did you order the Club3D adapter? And did you order from Amazon?

Really? Yesterday I checked and it said they won't have it in stock until July 18 or something?

Yesterday (Sunday). Yes, Amazon.

And you ordered yesterday too?

Got mine in today and it's perfect, other than aggressive dimming while web browsing. Anyone know a fix for that?

Are you talking about the TV or the Club3D adapter?

I used one. See my earlier post. Remember that the CX48 also doesn't support DSC. Also, my biggest problem was the constant signal loss, having to pull power from the adapter to get it back. I also bought a new $50 HDMI 2.1 cable (1.5 m) to make sure that wasn't causing the problem. Same thing... I've sent it back to the supplier.

They posted their findings, but it seems to completely contradict another guy's findings on AVS with his C9 using that adapter, who has no issues with it. The AVS guy is even using it with a 1080 Ti, which isn't even capable of DSC.

Well that is good news in theory then. So with DSC, DP 1.4 can output 4K/120Hz 4:4:4 or RGB at 10-bit HDR. Then the adapter converts it into a non-DSC HDMI 2.1 output, which should allow the same 4K/120Hz 4:4:4 or RGB at 10-bit HDR. Technically that would require 40.1 Gbps bandwidth, but I'd assume LG wasn't stupid enough to forget that .1 Gbps, which would mean kicking down to 8-bit color. But crazier stuff has happened...

Why has no one tested this yet? Must I do everything?

Yeah, you tested it on a CX. I'm talking about the C9 in a CX thread.

Well, it doesn't work (I tested it, see my earlier post). I also got a response from Club3D:

"Which means you can do 4K120Hz at 8Bits when you want to do full 444"

Full RGB did work btw...

Mine is on the way too, but I had to get a US postal forwarding address to get it to Australia, so it's still going to be a few weeks for me.

Yes, the CX will support all the bells and whistles, just like the C9 will, when HDMI 2.1 cards arrive. Until then we're all just using hacks to get it.

Found out the issue. Current-gen GPUs (2080 Ti) can do RGB or 4K 120 Hz / 144 Hz HDR 10-bit YCbCr422. Not the latter with 444.

No, with the adapter on a C9 we should be able to get full 4K @ 120Hz 4:4:4 with HDR. I'm hoping, anyway. That's if the NVIDIA control panel will allow 4:4:4 10-bit (I've heard rumours that it won't). We shall see soon.

So with the adapter on a C9, you can only do 4K @ 120Hz 4:2:2?

Sorry, should have clarified: my 48CX.

Not true. Club 3D says the input to the adapter (DisplayPort 1.4) accepts a Display Stream Compression (DSC) signal, which the RTX series can do. So the bandwidth for 4K/120Hz 4:4:4 or RGB at 10-bit HDR should be there on both the input (DSC DP 1.4) and output (HDMI 2.1 without DSC) ends of the adapter.

Yes, because you are limited to DisplayPort 1.4's bandwidth of 32.4 Gbps with the adapter.
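A quick sanity check of that DisplayPort 1.4 limit, as a rough Python sketch. Assumptions: the 32.4 Gbps figure is the raw HBR3 link rate, 8b/10b coding leaves 80% of it for video, no DSC is in use, and the 4K 120 Hz timing is the CVT-RBv2 one (3920 x 2287 total pixels, my assumption). The function and constant names are made up for illustration.

```python
# Assumed CVT-RBv2 totals for 3840x2160 @ 120 Hz (active + blanking).
PIXEL_CLOCK_HZ = 3920 * 2287 * 120

DP14_RAW_GBPS = 32.4                         # 4 lanes x 8.1 Gbps (HBR3)
DP14_PAYLOAD_GBPS = DP14_RAW_GBPS * 8 / 10   # after 8b/10b coding overhead

# Bits per pixel for the formats discussed in the thread.
FORMATS = {
    "RGB / 4:4:4 8-bit": 24,
    "YCbCr 4:2:2 10-bit": 20,
    "RGB / 4:4:4 10-bit": 30,
}


def required_gbps(bits_per_pixel: int) -> float:
    """Uncompressed video data rate in Gbps at the assumed 4K 120 Hz timing."""
    return PIXEL_CLOCK_HZ * bits_per_pixel / 1e9


def fits_dp14(bits_per_pixel: int) -> bool:
    """True if the format fits in DP 1.4 without DSC."""
    return required_gbps(bits_per_pixel) <= DP14_PAYLOAD_GBPS


if __name__ == "__main__":
    print(f"DP 1.4 usable payload: {DP14_PAYLOAD_GBPS:.2f} Gbps")
    for name, bpp in FORMATS.items():
        verdict = "fits" if fits_dp14(bpp) else "needs DSC"
        print(f"{name}: {required_gbps(bpp):.2f} Gbps -> {verdict}")
```

Under those assumptions, 8-bit RGB and 10-bit 4:2:2 squeeze in at 4K 120 Hz, while 10-bit 4:4:4 does not unless DSC is used on the DisplayPort side.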

The 48CX does not support DSC. AVS confirmed this by looking at the EDID.

Neither does the C9, so I don't know how all of this is going to pan out when the adapter arrives.

The C9 supports 48 Gbps, so you compress over DisplayPort to the adapter, and the adapter decompresses and passes to HDMI.

This BFI vs. G-Sync mess is getting a bit annoying...

I get that they technically can't work together, but BFI shouldn't really be necessary in the first place. We should already be getting 'perfect' motion resolution as available

Agreed that it's good news that the adapter can do DSC decoding.

I'm also wondering if the 40.1 Gbps number is correct. 4K @ 120hz 4:4:4 10-bit requires 32.274 Gbps data rate under CVT-RBv2. If the adapter is using HDMI 2.1's encoding scheme (16b/18b), then the bandwidth required is 36.3 Gbps, which should be within spec of both the C9 and CX. Alternatively, if the adapter is using HDMI 2.0's encoding scheme (8b/10b), then it requires 40.3 Gbps, which the CX can't handle. The 40.1 Gbps number implies that it's using HDMI 2.0's encoding, although that seems weird to me as these data rates far exceed HDMI 2.0's limits. Or am I forgetting something in my calculations?
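Here is the arithmetic behind those numbers as a small Python sketch, in case anyone wants to check it. It assumes the CVT-RBv2 totals for 3840x2160 @ 120 Hz are 3920 x 2287 pixels (active plus blanking); the function names are made up for illustration.

```python
# Assumed CVT-RBv2 totals for 3840x2160 @ 120 Hz (active + blanking).
H_TOTAL = 3920
V_TOTAL = 2287
REFRESH_HZ = 120


def data_rate_gbps(bits_per_pixel: int) -> float:
    """Uncompressed video data rate in Gbps, blanking included."""
    pixel_clock_hz = H_TOTAL * V_TOTAL * REFRESH_HZ
    return pixel_clock_hz * bits_per_pixel / 1e9


def link_rate_gbps(data_gbps: float, payload_bits: int, coded_bits: int) -> float:
    """Link bandwidth needed once line-coding overhead is added."""
    return data_gbps * coded_bits / payload_bits


if __name__ == "__main__":
    rate = data_rate_gbps(30)  # 10 bits x 3 channels for 4:4:4 or RGB
    print(f"data rate:         {rate:.3f} Gbps")
    print(f"16b/18b (HDMI 2.1): {link_rate_gbps(rate, 16, 18):.1f} Gbps")
    print(f"8b/10b (HDMI 2.0):  {link_rate_gbps(rate, 8, 10):.1f} Gbps")
```

With those timings the data rate comes out to 32.274 Gbps, about 36.3 Gbps with 16b/18b coding and about 40.3 Gbps with 8b/10b, matching the figures above.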

Since others are commenting on games I thought I would add that I just loaded up Shadow of the Tomb Raider from 2018, oh I just remembered the full name of the game, The Way Home and ...... WOW ....... wow ..... wow .............

First of all, an incredible game thus far, I'm only into it maybe 5 mins but the blacks, the colors, the contrast. Game playing is on a totally new level that I have never experienced before. I've never seen anything like this before.

I am almost positive that game studios use these LG OLEDs to master and/or test out their progress in whatever games they are designing. This C9 is incredible. I can only imagine what Cyberpunk 2077 will look like with OLED, HDR, 4K @ 120Hz on the new NVIDIA 3080 Ti this fall, a short 3 or 4 months away.

For me personally, this is a revolutionary step in gaming. Everything is aligning perfectly. Software and hardware meet, again at a historic point in time. Honestly, this might be another Glide 3D / Quake GL moment for me to see everything come together this Fall as I've mentioned.

You're saying there's ~25% of additional bandwidth consumed for other things on top of the encoding overhead? I haven't seen any of this in the specs. Do you have any material that sheds more light on this?

Yes, I think you aren't taking into account that about 25% of the bandwidth is reserved for other things. The transport bit rate isn't the same as the data rate.

SDR should fit, but from what I've gathered HDR will not. HDR supposedly adds a 10% overhead to your total bandwidth. Some sources even say 20%.