NVIDIA GeForce GTX 580 Video Card Review @ [H]

- Thread starter FrgMstr

- Start date

Kyle & Co., as usual, another fantastic review from the top of the tech food chain.

I've already pulled the trigger on a GTX 580, having a "gut" feeling it would be worth it, and your excellent review served to justify my decision.

Now, as long as there are no problems with any games/apps and drivers, we'll all be set to go, hopefully solidly future-proof for quite some time.

BloodyIron

2[H]4U

- Joined

- Jul 11, 2005

- Messages

- 3,439

Am I the only one noticing nVidia's incremental improvements here? I haven't been astounded since the 8800 generation.

My eyes are on AMD.

piscian18

[H]F Junkie

- Joined

- Jul 26, 2005

- Messages

- 11,020

Am I the only one noticing nVidia's incremental improvements here? I haven't been astounded since the 8800 generation.

My eyes are on AMD.

This.

The GTX 580 is on par with a 5870 2GB card, but it's $200 more... heee, no.

The cheapest GTX 580 is $559 on Newegg; the review says $500. Blegh.

The cheapest HD 5870 2GB is $339.

I disagree in general with the review, but this in particular bothers me.

firas

2[H]4U

- Joined

- Oct 29, 2006

- Messages

- 2,458

great review as always, thanks Kyle.

Brent_Justice

Moderator

- Joined

- Apr 17, 2000

- Messages

- 17,755

Zarathustra[H] said: I'm a little bit disappointed - however - that the [H] reviewed this card without overclocking it...

Time factor, I would have loved to as well.

Great review. As always, truly unique compared to what other sites offer.

I love the real gameplay performance.

However, I did feel that something was missing: a performance comparison with morphological AA on.

I know Nvidia doesn't support it, and that's why this comparison seems important to me.

I have an 8800 GTS 512 and I'm in the market for a new GPU. Right now, AMD with its morphological AA seems to be a clear winner for me: it has less performance impact and equal quality. So when I choose between two GPUs, this is an important aspect. I'd like to see a current AMD GPU using MLAA compared to the closest Nvidia has to offer image-quality-wise, in order to see if MLAA provides enough of a performance boost to tip the balance.

I'd like to see how MLAA changes the balance when making a choice in getting a new card.

Awesome review as usual, Kyle and Brent! This card is def a beast! I cannot wait for the review that pits the 580 against the 6970 when they are released. I am def burning a hole in my pocket to upgrade, but I will wait for the review of the new cards that AMD/ATI will be offering. Looks like a win/win situation for the consumer in the choice of upper-echelon cards for the holidays!

Yakk

Supreme [H]ardness

- Joined

- Nov 5, 2010

- Messages

- 5,810

Am I the only one noticing nVidia's incremental improvements here? I haven't been astounded since the 8800 generation.

My eyes are on AMD.

Nope, I agree too. The review was excellent, the card itself.... meh... it's what it should've been more than 1 year ago. It's good, maybe not fantastic anymore, but at least nvidia got it to work like it was supposed to...

I now use 3 monitors every day for work & some play. So I'm personally not going back to SLI after an extended stint of heat, noise, and issues a couple years back. All to use the same screens I'm using now.

Y.

Brent_Justice

Moderator

- Joined

- Apr 17, 2000

- Messages

- 17,755

A bit surprised it wasn't compared to the 5970 instead of the 5870, considering the 5970 is AMD's top end and the 580 is nVidia's.

The 5870 2GB is AMD's top-end SINGLE-GPU card. For the launch, we felt it best to compare the top-end single GPUs, and at the time this evaluation was put together the Eyefinity6 card was the price competition to the 580.

I am working on a 5970 vs. 580 followup today.

Brent_Justice

Moderator

- Joined

- Apr 17, 2000

- Messages

- 17,755

Anything but a clear performance win for 580 vs 6970 would be a big fail for Nvidia, seeing how 580 is much larger, and probably will consume more power.

GTX 580 is really not impressive at all. Yes, it is much better than 480, but it's only 20-30% faster than a card that is one year (!!!) older, waaaaay smaller, consumes much less, and is 40 % cheaper.

To me it is, because it is near silent. Use a GTX 480 for a while, then put in a GTX 580 and you'll find 20% faster performance than the GTX 480, and performance you can't hear screaming at you. It's a big improvement.

AceGoober

Live! Laug[H]! Overclock!

- Joined

- Jun 25, 2003

- Messages

- 25,558

Big Thanks! goes out to the [H] Crew for putting out another well laid-out review on such short notice.

The performance numbers fall in line with what I imagined they would be in real-world usage, not with nVidia's hype or what was voiced in the forums. With that said, I've asked my 2 relatives to hold off purchasing the GTX 580 (to their dismay) and wait for the AMD 5900 series to be released. They both want to go with single-card solutions for the games they play, and waiting for the 5900 series to arrive on the scene is a better suggestion than just pulling the trigger on the latest and greatest now and possibly regretting the purchase later.

Better to be armed with fact-based information than to hop into the fray based on rumor, I always say.

byusinger84

Gawd

- Joined

- Feb 1, 2008

- Messages

- 807

I think I'll stick with my 5870. Right now it's not being taxed much at all on my 1920x1200 monitor, and $299 vs $499 for a ~20%-30% boost in performance at this present time isn't worth it in my book. I've never had problems with my ATI/AMD card and will stick with it until it starts to slow down.

With all that said, as always, great review. Nvidia really has a winner here on the performance side of things. Definitely what Fermi should have been. Hopefully when AMD releases their 6970 it'll be in the $350-399 range. If it can be within a few percent of the GTX 580, I'd call that a success.

All in all, a success for nVidia. Time for AMD to answer big.
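For anyone who wants to put rough numbers on that trade-off, here is a minimal Python sketch using only the figures quoted in the post above ($299 vs. $499, and a 20% to 30% performance gain). The function names are made up for illustration, and the performance gain is the poster's estimate, not a measured result.

```python
def price_premium(base_price, new_price):
    """Fractional extra cost of the new card over the base card."""
    return (new_price - base_price) / base_price


def relative_perf_per_dollar(base_price, new_price, perf_gain):
    """Performance per dollar of the new card relative to the base card.

    perf_gain is fractional (0.30 means 30% faster). A result below 1.0
    means the new card delivers less performance per dollar.
    """
    return (1.0 + perf_gain) / (new_price / base_price)


# Prices and gain range as quoted in the post (assumed, not verified).
BASE_PRICE, NEW_PRICE = 299, 499

premium = price_premium(BASE_PRICE, NEW_PRICE)
print(f"Price premium: {premium:.0%}")
for gain in (0.20, 0.30):
    ratio = relative_perf_per_dollar(BASE_PRICE, NEW_PRICE, gain)
    print(f"{gain:.0%} faster -> {ratio:.2f}x the performance per dollar")
```

Under those assumptions the premium works out to roughly two thirds, so even at the top of the quoted range the faster card gives less performance per dollar. It says nothing about whether the extra frames matter at a given resolution.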

byusinger84

Gawd

- Joined

- Feb 1, 2008

- Messages

- 807

Big Thanks! goes out to the [H] Crew for putting out another well laid-out review on such short notice.

The performance numbers fall in line with what I imagined they would be in real-world usage, not with nVidia's hype or what was voiced in the forums. With that said, I've asked my 2 relatives to hold off purchasing the GTX 580 (to their dismay) and wait for the AMD 5900 series to be released. They both want to go with single-card solutions for the games they play, and waiting for the 5900 series to arrive on the scene is a better suggestion than just pulling the trigger on the latest and greatest now and possibly regretting the purchase later.

Better to be armed with fact-based information than to hop into the fray based on rumor, I always say.

You mean 6900

The GTX 580 is on par with a 5870 2GB card, but it's $200 more... heee, no.

The cheapest GTX 580 is $559 on Newegg; the review says $500. Blegh.

The cheapest HD 5870 2GB is $339.

I disagree in general with the review, but this in particular bothers me.

How do you get that the 580 is on par with the 5870?

Azdougness

n00b

- Joined

- Aug 22, 2010

- Messages

- 9

Great review. It missed a couple of non-critical elements, but like you said, there are 20 other review sites to find them on.

Kyle,

Living in Arizona, heat has always been a major issue for me, to the point that during the winter my Q6600 goes to 4GHz, while during the summer it goes down to 3GHz with the voltage set under factory. Video cards have less room to play with in this field, which is why a "real world" sample of what the heat effects are is very important to me. In the next 2-3 months I will be overhauling my video card setup from single GPU to Xfire/SLI. I would be extremely appreciative if you would be able to replicate this test on the AMD 69XX series cards (and maybe even include the 5870s for good measure).

Can we also get a follow-up with a little more detail about the voltage regulator on this card, like others have expressed interest in? It would be damaging to the reputation of the card if it couldn't overclock (cue watercooling solutions), even if it is a monster at stock specs. Also, do we know if Nvidia made the hardware controllable through drivers? This will have a direct impact on the longevity of the card, in my opinion, if you cannot disable this feature.

Brent_Justice

Moderator

- Joined

- Apr 17, 2000

- Messages

- 17,755

Will it overclock or will the chip that down clocks it kick in? Also if I were to keep my card for a long time, will future stressful games make that chip kick in? Is there a hardware or software indicator that it has enabled the limiter? Sorry if I missed it in the article; was excitedly reading.

Say if I turn AA on in a future title and the fps drops to 20, is it going to down clock? Or a game like Mafia II where PhysX made some Nvidia cards cry (if I remember correctly; don't own that title). I know you all can't look into crystal balls, but I was just wondering if it kicked in on a per-title basis or just under stress in general. Maybe what I'm really trying to ask is what the definition of stress is to Nvidia.

Thx for the great review as always. Now to see which of the nephews wants to buy (2) 460's.

These are good questions; I'm not really sure how that is going to work out. I can say that in the games I tested, I never experienced that happening, and I pushed them to insane levels to find the highest playable settings, well beyond what was playable. It is something we will keep a lookout for. What I'm most concerned about is how it works with overclocking: whether overclocking will push the card to its thermal limit, thus kicking in the hardware. But the thermal limit of the chip is 97C, so I'd think it would have to get really high. I kinda look at the monitoring like it is targeted more at OCCT and Furmark specifically, heh. We'll see.

Kyle, is there a chance to get a review of whether cooling is adversely affected if the new vapor chamber cooler is put in a 90-degree position, like in an RV02 or FT02? I remember there being a problem with this in some of the earlier ATI cards.

NV stated orientation is not an issue.

Simply put... a RAW, naked, and true-to-the-bone review.

WYSIWYG.

Amazing review, guys. Can't wait for the updated review with the 5970 in there.

Question: any chance you guys can do a CrossFire review of the 6870 & 6850? Or has that been done?

It's on the table.

It seems that, contrary to rumors, nothing was stripped from the GF100 core; it was just reorganized and optimized. Texture units did increase, but only to 64 instead of the 128 most people were hoping for.

Any info on yields? If they are good, the 480 and 470 should die soon. I'm also curious about an eventual GTX 570: would it have the same SPs as the 480 or the 470?

You should know by now: never trust rumors. The components-being-removed rumor was laughable. I couldn't get over how many people actually believed it.

Great review. As always, truly unique compared to what other sites offer.

I love the real gameplay performance.

However, I did feel that something was missing: a performance comparison with morphological AA on.

I know Nvidia doesn't support it, and that's why this comparison seems important to me.

I have an 8800 GTS 512 and I'm in the market for a new GPU. Right now, AMD with its morphological AA seems to be a clear winner for me: it has less performance impact and equal quality. So when I choose between two GPUs, this is an important aspect. I'd like to see a current AMD GPU using MLAA compared to the closest Nvidia has to offer image-quality-wise, in order to see if MLAA provides enough of a performance boost to tip the balance.

I'd like to see how MLAA changes the balance when making a choice in getting a new card.

MLAA has not been qualified on Radeon HD 5xxx series yet. Therefore, I could not test it. I was not going to use a hacked driver. When MLAA comes to the Radeon HD 5xxx series, we will use it too.

Chris_B

Supreme [H]ardness

- Joined

- May 29, 2001

- Messages

- 5,361

The 5870 2GB is AMD's top-end SINGLE-GPU card. For the launch, we felt it best to compare the top-end single GPUs, and at the time this evaluation was put together the Eyefinity6 card was the price competition to the 580.

I am working on a 5970 vs. 580 followup today.

Why exactly does single-GPU card vs. dual-GPU card matter all of a sudden? It wasn't that long ago that the mentality seemed to be to compare their high end to the competition's high end, number of GPUs be damned. Now it seems price and number of GPUs also factor in. A bit of a strange philosophy when you consider AMD's strategy for the high end is multi-GPU cards.

piscian18

[H]F Junkie

- Joined

- Jul 26, 2005

- Messages

- 11,020

How do you get that the 580 is on par with the 5870?

It states that in the conclusion, 5 lines from the bottom or so.

It states that in the conclusion, 5 lines from the bottom or so.

I think you missed this part from the conclusion. The 5870 held up well, but the 580 is definitely faster by a good margin:

"In all games however, the GeForce GTX 580 surpassed the Radeon HD 5870. We experienced the GeForce GTX 580 to be about 30% better performing than the Radeon HD 5870 on average. Once again, Medal of Honor showed the greatest performance difference, with the GTX 580 being 42% faster than the Radeon HD 5870."

MLAA has not been qualified on Radeon HD 5xxx series yet. Therefore, I could not test it. I was not going to use a hacked driver. When MLAA comes to the Radeon HD 5xxx series, we will use it too.

I thought MLAA was already available; my bad.

Anyway, nice to see you are going to take this into consideration for the future. After the review [H] made of MLAA, it seemed to me that this is a feature that could impact real-world performance. Maybe it could allow a slower GPU to match a faster one performance-wise without sacrificing IQ. This is potentially a deciding factor, and I only trust [H] to give us the info we need to determine how important this could be.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,917

Why exactly does single-GPU card vs. dual-GPU card matter all of a sudden? It wasn't that long ago that the mentality seemed to be to compare their high end to the competition's high end, number of GPUs be damned. Now it seems price and number of GPUs also factor in. A bit of a strange philosophy when you consider AMD's strategy for the high end is multi-GPU cards.

Because there are a lot of compromises and drawbacks with dual-GPU solutions. If two different systems, one with a dual GPU (one or two cards doesn't matter) and one with a single GPU, perform the same, then the single GPU is going to be preferable.

We discussed this in more detail on page six, if you cared to read it, but in essence it comes down to the following:

- CF/SLI solutions have real compatibility issues requiring carefully tested driver profiles that are not updated even close to often enough. CF is particularly bad, but SLI is bad too.

- CF/SLI solutions suffer from increased input lag and micro stuttering in AFR modes.

- SFR modes don't have microstutter or input lag issues, but also don't scale as well performance wise.

Unless all of the issues above can be solved (especially the first), one powerful single-GPU card will always be better than a multi-GPU solution.

The only time I would consider multi GPU right now is in cases where the fastest single GPU solution isn't fast enough.

Why exactly does single-GPU card vs. dual-GPU card matter all of a sudden? It wasn't that long ago that the mentality seemed to be to compare their high end to the competition's high end, number of GPUs be damned. Now it seems price and number of GPUs also factor in. A bit of a strange philosophy when you consider AMD's strategy for the high end is multi-GPU cards.

The number of GPUs is very important. There are significant downsides to going to a dual-GPU setup. These include driver compatibility, game compatibility, and, for many, microstutter. Until CF/SLI are as seamless as a single-GPU solution, you need to make the distinction. I, for one, would take a single-GPU solution over a dual solution any day because of the lower hassle.

The 5870 2GB is AMD's top-end SINGLE-GPU card. For the launch, we felt it best to compare the top-end single GPUs, and at the time this evaluation was put together the Eyefinity6 card was the price competition to the 580.

Have you thought about including some price/performance comparisons, like you do for heatsinks for example?

To me it is, because it is near silent. Use a GTX 480 for a while, then put in a GTX 580 and you'll find 20% faster performance than the GTX 480, and performance you can't hear screaming at you. It's a big improvement.

It's much quieter than 480, but nowhere near silent during load.

These are good questions; I'm not really sure how that is going to work out. I can say that in the games I tested, I never experienced that happening, and I pushed them to insane levels to find the highest playable settings, well beyond what was playable. It is something we will keep a lookout for. What I'm most concerned about is how it works with overclocking: whether overclocking will push the card to its thermal limit, thus kicking in the hardware. But the thermal limit of the chip is 97C, so I'd think it would have to get really high. I kinda look at the monitoring like it is targeted more at OCCT and Furmark specifically, heh. We'll see.

From Guru3D:

The new advanced power monitoring management function is well ... disappointing. If the ICs were there as overprotection for drawing too much power it would have been fine. But it was designed and implemented to detect specific applications such as Furmark and then throttle down the GPU. We really dislike the fact that ODMs like NVIDIA try to dictate how we as a consumer or press should stress the tested hardware. NVIDIA's defense here is that ATI has been doing this on R5000/6000 as well, yet we think the difference is that ATI does not enable it on stress tests, yet is simply a common safety feature when you go way beyond specs. We have not seen ATI cards clock down with Furmark as of recently, unless we clocked say the memory too high after which it clocked down as safety feature. No matter how you look at it or try to explain it, this is going to be a sore topic for now and in the future. There are however many ways to bypass this feature and I expect that any decent reviewer will do so. Much like any protection, if one application does not work, we'll move on to the next one.

I think you missed this part from the conclusion. The 5870 held up well, but the 580 is definitely faster by a good margin:

"In all games however, the GeForce GTX 580 surpassed the Radeon HD 5870. We experienced the GeForce GTX 580 to be about 30% better performing than the Radeon HD 5870 on average. Once again, Medal of Honor showed the greatest performance difference, with the GTX 580 being 42% faster than the Radeon HD 5870."

And in F1 2010 it's a whole 3% faster. And the last line on the F1 page is... funny, for lack of a better word: "There is no questioning that the GeForce GTX 580 is the fastest video card for this game right now." With numbers like this: 32/43/39.4 vs 32/43/38.2. Yes, technically it is true, but come on, really?

And of course it's faster, considering all that 1 year, size, consumption...thing.
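The 3% figure can be checked with a quick calculation. This is a minimal Python sketch, assuming the slash-separated triples are minimum/maximum/average frame rates with the GTX 580 listed first (the post does not label them); the function name is just for illustration.

```python
def percent_faster(a, b):
    """How much faster a is than b, in percent of b."""
    return (a - b) / b * 100.0


# Third value of each triple, assumed to be the average frame rate.
GTX_580_AVG = 39.4
HD_5870_AVG = 38.2

lead = percent_faster(GTX_580_AVG, HD_5870_AVG)
print(f"Average frame rate lead: {lead:.1f}%")
```

The first two values of each triple are identical, so under that assumption the entire difference comes from the averages.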

The 5870 2GB is AMD's top-end SINGLE-GPU card. For the launch, we felt it best to compare the top-end single GPUs, and at the time this evaluation was put together the Eyefinity6 card was the price competition to the 580.

I am working on a 5970 vs. 580 followup today.

Hi, Brent

Will you be doing a follow-up on a GTX 460 SLI vs. GTX 580? I would like to see how this would turn out as well, since 460 in SLI performs equal to or better than the GTX 480.

firas

2[H]4U

- Joined

- Oct 29, 2006

- Messages

- 2,458

Time factor, I would have loved to as well.

Adding the overclocking and the SLI performance at any time would be very much appreciated. Sure, there are 20 other sites, like Kyle said, but the difference in frame counts and performance percentages between your review and the couple of other reviews out there at this moment is not small, and I prefer to trust [H].

GoldenTiger

Fully [H]

- Joined

- Dec 2, 2004

- Messages

- 29,723

Why exactly does single-GPU card vs. dual-GPU card matter all of a sudden? It wasn't that long ago that the mentality seemed to be to compare their high end to the competition's high end, number of GPUs be damned. Now it seems price and number of GPUs also factor in. A bit of a strange philosophy when you consider AMD's strategy for the high end is multi-GPU cards.

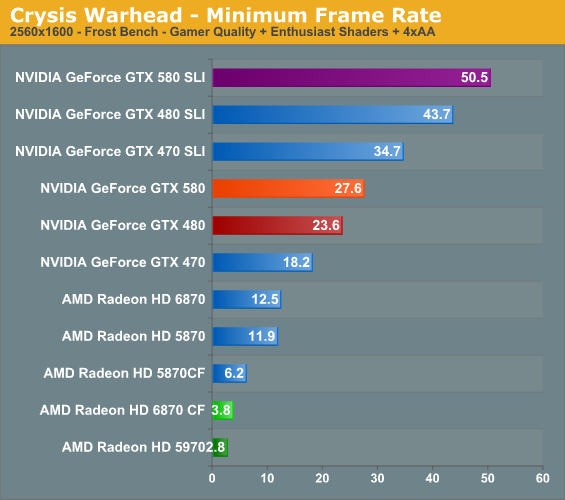

Microstutter, sometimes-lower min fps than even a single card, dependency on driver profiles, and input lag plus extra heat/noise are all major cons to dual-GPU/card solutions.

This is not an issue...

From Guru3D:

The new advanced power monitoring management function is well ... disappointing. If the ICs were there as overprotection for drawing too much power it would have been fine. But it was designed and implemented to detect specific applications such as Furmark and then throttle down the GPU. We really dislike the fact that ODMs like NVIDIA try to dictate how we as a consumer or press should stress the tested hardware. NVIDIA's defense here is that ATI has been doing this on R5000/6000 as well, yet we think the difference is that ATI does not enable it on stress tests, yet is simply a common safety feature when you go way beyond specs. We have not seen ATI cards clock down with Furmark as of recently, unless we clocked say the memory too high after which it clocked down as safety feature. No matter how you look at it or try to explain it, this is going to be a sore topic for now and in the future. There are however many ways to bypass this feature and I expect that any decent reviewer will do so. Much like any protection, if one application does not work, we'll move on to the next one.

...proven by this:

These are good questions; I'm not really sure how that is going to work out. I can say that in the games I tested, I never experienced that happening, and I pushed them to insane levels to find the highest playable settings, well beyond what was playable. It is something we will keep a lookout for. What I'm most concerned about is how it works with overclocking: whether overclocking will push the card to its thermal limit, thus kicking in the hardware. But the thermal limit of the chip is 97C, so I'd think it would have to get really high. I kinda look at the monitoring like it is targeted more at OCCT and Furmark specifically, heh. We'll see.

It's not going to down-clock if the thermal mark is 97C which, unless you're not paying attention and don't turn up the fans, is not a level of heat you're ever going to hit.

While I was concerned about this feature as well, it seems that through Brent's testing, that alone has confirmed that the card is not going to down-clock itself in any normal gaming scenario. I think nVidia is a little smarter than that, and manufactured their hardware properly, as to not have something like that happen, which they know would ruin both the capabilities of their card, and their reputation, as it would be ridiculous.

So, I don't think it's even a concern, wouldn't you say, Brent?

The 5870 2GB is AMD's top-end SINGLE-GPU card. For the launch, we felt it best to compare the top-end single GPUs, and at the time this evaluation was put together the Eyefinity6 card was the price competition to the 580.

I am working on a 5970 vs. 580 followup today.

This is why we love [H]

Gnomepatrol

Limp Gawd

- Joined

- Jan 14, 2010

- Messages

- 263

I think nvidia did a great job. Thumbs up. Can't wait to see if they do a dual-GPU version of the GF110.

I think nvidia did a great job. Thumbs up. Can't wait to see if they do a dual-GPU version of the GF110.

If they're pricing these at $500 I'd hate to see the cost of a 580 flavor dual GPU card.

I'm honestly not very impressed by this card. Definitely a big improvement over the 480, and a much needed one at that, but it still doesn't support nVidia Surround with a single card. I'll stick to my 460 SLI setup, which is faster and still cost less than the 580's MSRP.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,917

Time factor, I would have loved to as well.

Did they only give you a limited amount of time with the board, or was it more of a time-to-publish kind of thing?

Any chance we'll get a follow-up?

I would love to see a re-do of your recent SLI write-up, with the latest SLI and CX profiles and the GTX580!

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,917

If they're pricing these at $500 I'd hate to see the cost of a 580 flavor dual GPU card.

It would probably be more of a 560 or 570 flavor dual card. I think putting two of these on one card right now is just too much from a power perspective, even with the current optimizations...

if only Nvidia could go back in time and release the GTX 580 as the 1st gen Fermi card then everyone would be drooling...but the whole 480 fiasco has left them behind the 8 ball and always in catch up mode...the GTX 580 is only going to be king for 2 weeks as they now are 1 product cycle behind AMD...hopefully they can catch up next refresh but the 580 is too late for people to really take notice

as the [H] article alluded to the real Fermi card to get will be the 28nm refresh part...Fermi will only get better with each successive generation and the 28nm card could really be a revolutionary product

This is not an issue...

Guru3D quote

...proven by this:

Brent's quote

What is not an issue, and how is the latter disproving the former?
