Can you take a clearer picture at 8x zoom? That is the only way to be certain, short of taking the back housing off.

I will do my best to do so tomorrow, as I am currently not at home.

Is everyone's panel Oct. 2016 or have they made any 2017 versions yet?

I'm starting to wonder if the 120Hz 4K OLED might actually see the light of day sooner rather than later. hmm....

Crisis somewhat averted.

I took a look through a handheld 30x microscope.

Snowdog is correct - the red stripes are staggered between columns. I think this is the cause of the unusual pattern along the diagonals that I can see.

I was pretty sure you guys had the same screen; getting two suppliers for an identically spec'ed but different OLED at this point seems very unlikely.

Could you have a look at a checkerboard like I mention here:

https://hardforum.com/threads/oled-4k-30-60-hz-dell-up3017q.1929730/page-4#post-1042972527

It should reveal a bit more info.

It might be that the base pattern is BGR (rare) instead of RGB, or that those red pairs are acting as one pixel.

Through the 30x microscope:

Each pixel is lit up as BGR. The R appears slightly higher than the corresponding B and G.

Good stuff.

If your microscope isn't reversing the image, and this is a BGR screen, that could cause fringing in default ClearType, which IIRC is set up for RGB, but I think there is a setting for BGR.

Go into the ClearType tuner. I don't think it exposes the BGR setting directly, but you get it by picking the example which looks best on your monitor.

You're right again. It is reversed.

Ordering aside, my ClearType tuner has 5 pages worth of samples, and I have no idea what adjustments each page is making. I don't think all of them are for pixel ordering.

Looks like they redesigned the prototype. 60 Hz now instead of 120 Hz, but at least they put DP 1.2 and HDMI 2.0 on it. Got it for $2,997 with military discount. Hopefully the input lag is low!

Agreed. That review was terrible. No testing whatsoever - basically gave us no useful information about the monitor.

Not to mention it's about a 33 ms response time, not instant like he claimed.

I'm confused. Could you explain where that 33 ms response time figure comes from?

Vega actually tested the numbers. Also, I had the Dell OLED and its responsiveness felt identical to my LG C6.

Just so I'm clear, are we talking about input lag here or actual pixel response time? If it's the latter, when Dell is quoting a 0.1 ms response time for this monitor, even if they're misquoting it a hundredfold, that should still mean only a 10 ms response time at worst, shouldn't it?

Plus, this is part of what's confusing me: a 33 ms response time translates to a 30 Hz refresh rate. Perhaps this is just my ignorance showing here but, when we know the monitor has a 60 Hz refresh rate, how could it have a pixel response time that's the equivalent of 30 Hz? In other words, how could each pixel be making only a single transition within the space of time in which it actually changes state twice?

I feel I must be missing something.
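The arithmetic behind that question can be checked with a quick sketch. The function names here are made up for illustration, and the only inputs are the figures quoted in the thread (60 Hz, 33 ms, 0.1 ms). It just converts between a refresh rate and the time per refresh; it says nothing about whether the 33 ms figure is input lag or pixel response.

```python
def frame_time_ms(refresh_hz: float) -> float:
    """Time between refreshes, in milliseconds, at a given refresh rate."""
    return 1000.0 / refresh_hz


def equivalent_hz(interval_ms: float) -> float:
    """Refresh rate whose frame time equals the given interval."""
    return 1000.0 / interval_ms


# A 60 Hz panel refreshes roughly every 16.7 ms.
print(round(frame_time_ms(60), 1))

# A 33 ms interval lines up with roughly 30 Hz, i.e. about two 60 Hz frames.
print(round(equivalent_hz(33), 1))
print(round(33 / frame_time_ms(60), 2))

# Dell's quoted 0.1 ms, overstated a hundredfold, is still only about 10 ms.
print(round(0.1 * 100, 1))
```

So a 33 ms delay is about two full refreshes at 60 Hz, which fits a processing delay before the image appears much better than it fits the time a pixel needs to change state.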

He's talking about input lag.

If I get this monitor and a beefy graphics card like a 1080 Ti, adjust my settings so that I am consistently getting >60fps, and enable vsync, will I get a smooth gaming experience without tearing, similar to what I am getting now with FreeSync? Thanks in advance for any input!!

Yeah, motion should be super smooth, especially if you enable 60hz strobing (assuming you're not super sensitive to the flicker). The problem with vsync, though, is that it adds noticeable input lag in most game engines. There are a few exceptions, like Frostbite (which most EA games use), which are pretty good about minimizing input lag with vsync enabled, especially if used in conjunction with the game's built-in frame limiting command. Others, like Far Cry's Dunia engine, add a stupid amount of input lag with vsync. Sometimes you can reduce the lag by adjusting Nvidia's "max pre-rendered frames" setting.

Man...I hate false information. The 60hz strobe mode on this sucker is UNBEARABLE.

I haven't seen it personally, but I'm assuming they wouldn't have made it a feature if it were unbearable to everybody.

Yeah, but every other manufacturer refuses to do 60hz single strobe. They either force double strobing or simply limit the strobing refresh rate to a minimum of 85hz, like what's found in ULMB-enabled monitors. It may not be bad for everybody, but it sure is bad for ALMOST everybody if every other manufacturer is going to avoid it like the plague.

I don't think professional reviewers would bury it. The double image "issue" at 120 Hz doesn't mean much for the professional audience as the flicker isn't noticeable there. Remember we are talking about OLED here, which naturally will destroy LCD on almost all metrics.

You have not seen it so you have no idea what the hell you are talking about. And yet you continue on with your mis-information, are you trolling? Feature??? Dude the shit and I mean SHIT is so bad on 60hz strobe that I immediately mouthed the words "FUCK YOU DELL" when I tested it.

I'd love to buy one of these but it's really hard to part with $3500 when it only takes a 60hz input and I'm accustomed to 144hz.

I currently use Trinitron and Diamondtron monitors, so my 60hz strobed (actually, scanned) experience is based on that. And 60hz looks good on those for most content outside of text on white backgrounds. Street Fighter V with motion blur disabled looks ridiculously good.... IN MY EXPERIENCE, SO CHILL THE EFF OUT!

And from what I've read, the input lag isn't any better than LG's TVs. So at that point it gets harder to justify.

When you guys say 60Hz strobe, is that equivalent to the flicker you would get on a 60Hz CRT monitor?

If so, then I'd agree that it's unbearable. Especially on light colored backgrounds and ESPECIALLY when you catch it in your peripheral vision.

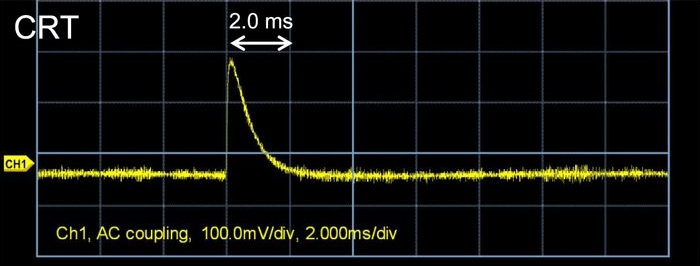

CRT persistence is typically <2ms:I have a hunch as to why 60Hz looks so bad on this monitor. CRTs have a pretty substantial persistence of each of their strobes/scans. I'm pretty sure this is on the order of several milliseconds (at least). So, after each scan/strobe is over, the phosphors on a CRT display stay lit until nearly the time of the next scan/strobe. (In fact, an ideal CRT display should presumably have a persistence time that's equal to the inverse of its optimum refresh rate; i.e., 1/60 seconds for 60Hz.) With the UP3017Q, the response time is 0.1 ms. So, the display can revert back to pure black much more quickly between each strobe. I'm not sure how much time each pixel spends in on vs. off state on this monitor (is it 1/120 seconds on and 1/120 seconds off for 60Hz strobe, or a much shorter "on" time?), but it feels like this super-quick response time would mean that the display spends much more cumulative time in its black state during strobing than a CRT display would, making the strobing that much more visible.

Hmmm. There's quite a bit of difference between 2 ms and 10 ms. With only 2 ms of persistence, the difference between CRT and OLED in terms of amount of flicker should be close to negligible, following my line of reasoning. (Since 60 Hz would give you nearly 17 ms of time between strobes, even a CRT that's decaying in 2 ms should have plenty of time to stay in its "dark state" between two strobes, creating plenty of flicker.)

However, my years of CRT use were during the younger years of my life, when I couldn't really afford very high-end CRT monitors. So, perhaps the CRT displays I'm likely to have experienced were closer to that 10 ms decay time. That would mean the monitor would have stayed on for 60% of the time period between two scans/strobes, meaning quite a high percentage of "on" time. That ratio might be much lower for OLEDs. (Not sure.)

On the other hand, it's coming back to me now that the norm for a "good" CRT monitor refresh rate was actually 85 Hz during the latest years I used them; not 60 Hz. I do believe some of the CRTs I owned (at least in post-college years) were 85 Hz models. That would mean just under 12 ms of time between each scan of the phosphors. With a persistence time of 10 ms or a little less, that does translate to the phosphors being lit nearly all the time between successive refreshes thanks to persistence.
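To put rough numbers on that line of reasoning, here is a small sketch. It assumes a deliberately crude model in which a phosphor is fully lit for its whole persistence time and then fully dark until the next scan; real phosphor decay is gradual, so treat the results as ballpark figures only. The function name is hypothetical, and the 2 ms and 10 ms persistence values are simply the two figures discussed above.

```python
def lit_fraction(persistence_ms: float, refresh_hz: float) -> float:
    """Fraction of each refresh period spent lit, under an on/off step model."""
    period_ms = 1000.0 / refresh_hz
    # A phosphor cannot be lit for more than the whole period.
    return min(persistence_ms / period_ms, 1.0)


# Compare the two persistence figures at the two refresh rates mentioned.
for hz in (60, 85):
    for persistence_ms in (2, 10):
        share = lit_fraction(persistence_ms, hz)
        print(f"{hz} Hz, {persistence_ms} ms persistence: lit {share:.0%} of the time")
```

Under this model, 10 ms of persistence keeps the screen lit for about 60% of each period at 60 Hz and about 85% at 85 Hz, while 2 ms gives only about 12% and 17%. That matches the point above: with short persistence a CRT spends most of each period dark, much like a strobed OLED.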