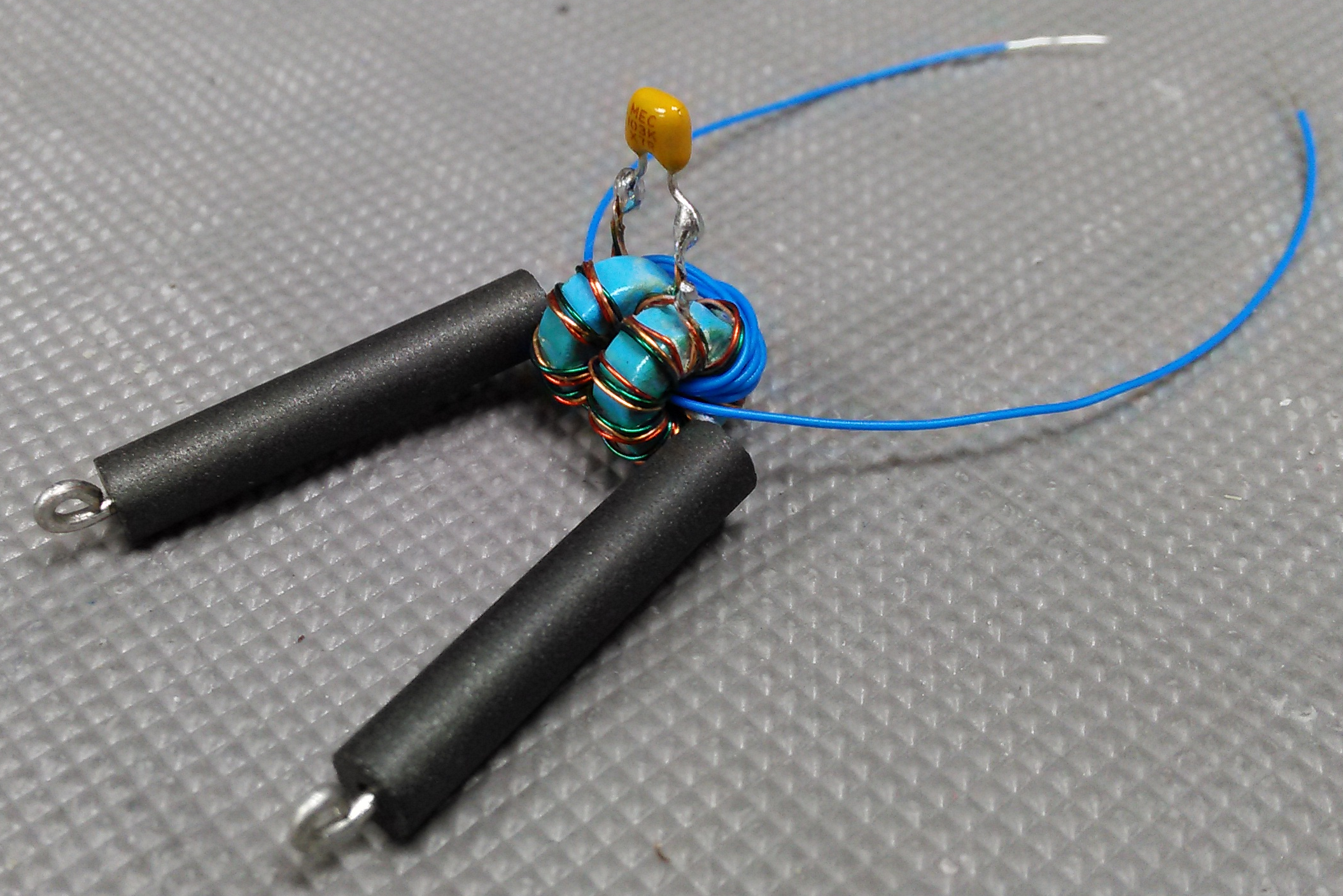

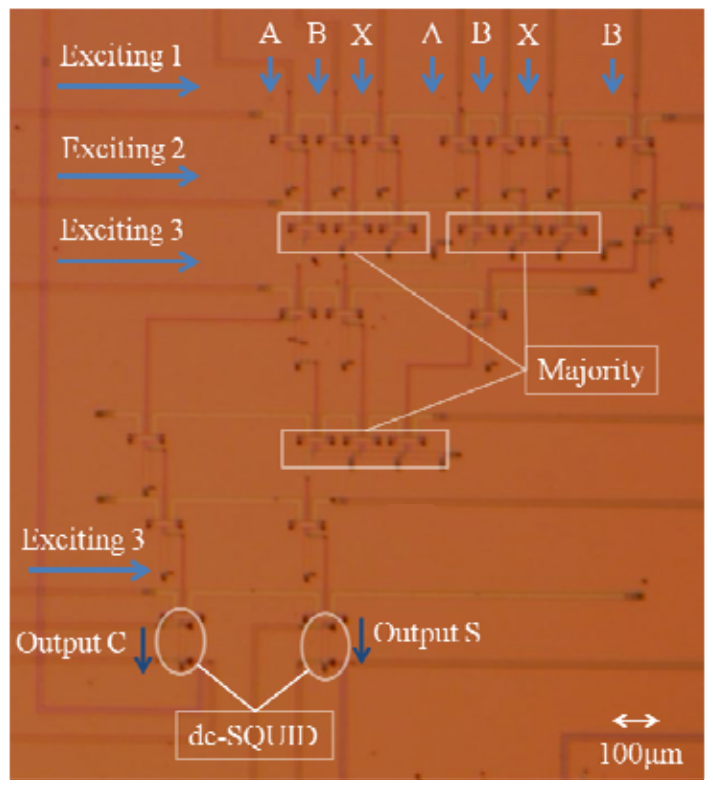

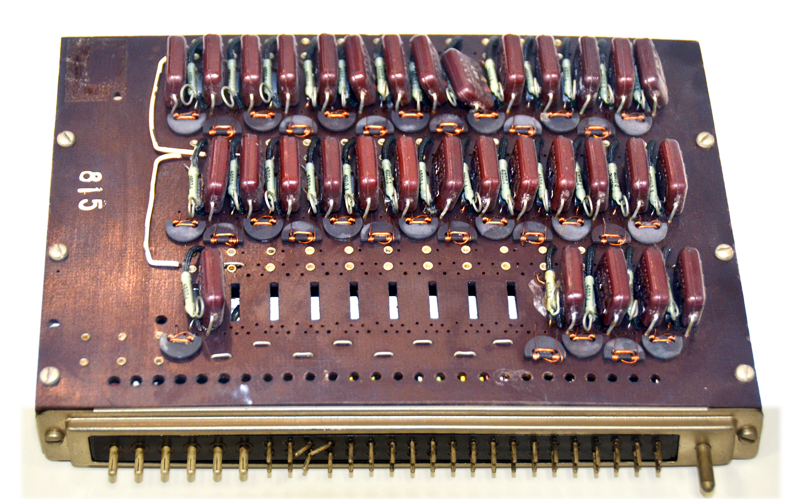

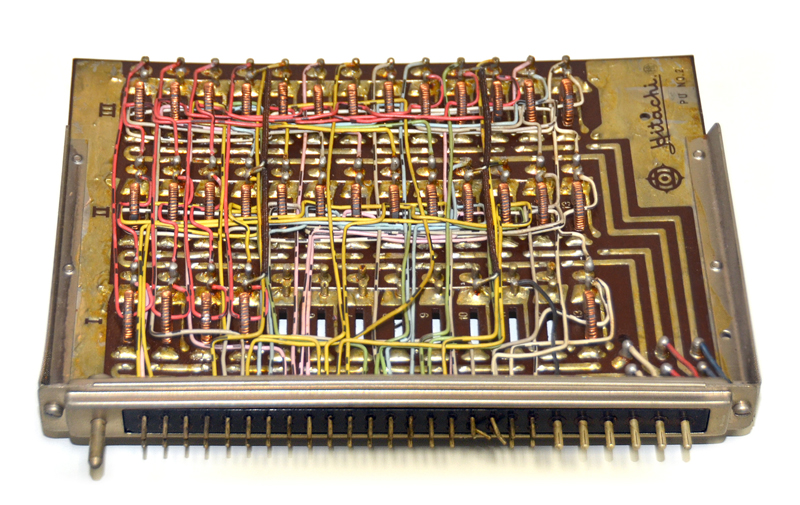

In 1954, Goto Eiichi (Eiichi Goto in English, except he wasn't) developed an oscillating magamp that could perform majority vote logic, amplify the winning state, and hold the result for as long as needed in one of two possible phase locks. All without transistors or tubes, just ordinary capacitors and slightly overdriven inductors. He named this logic device the "Parametron".

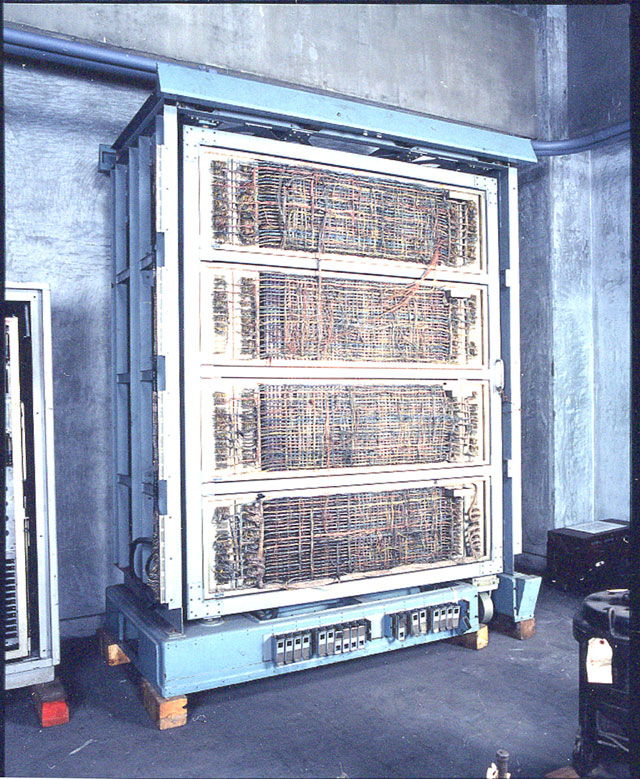

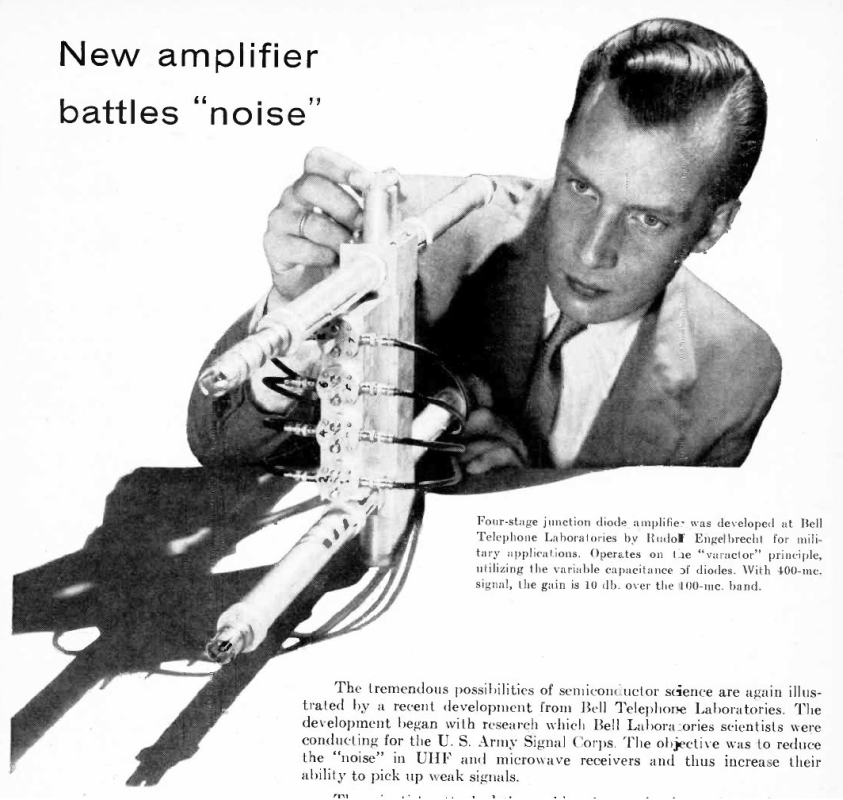

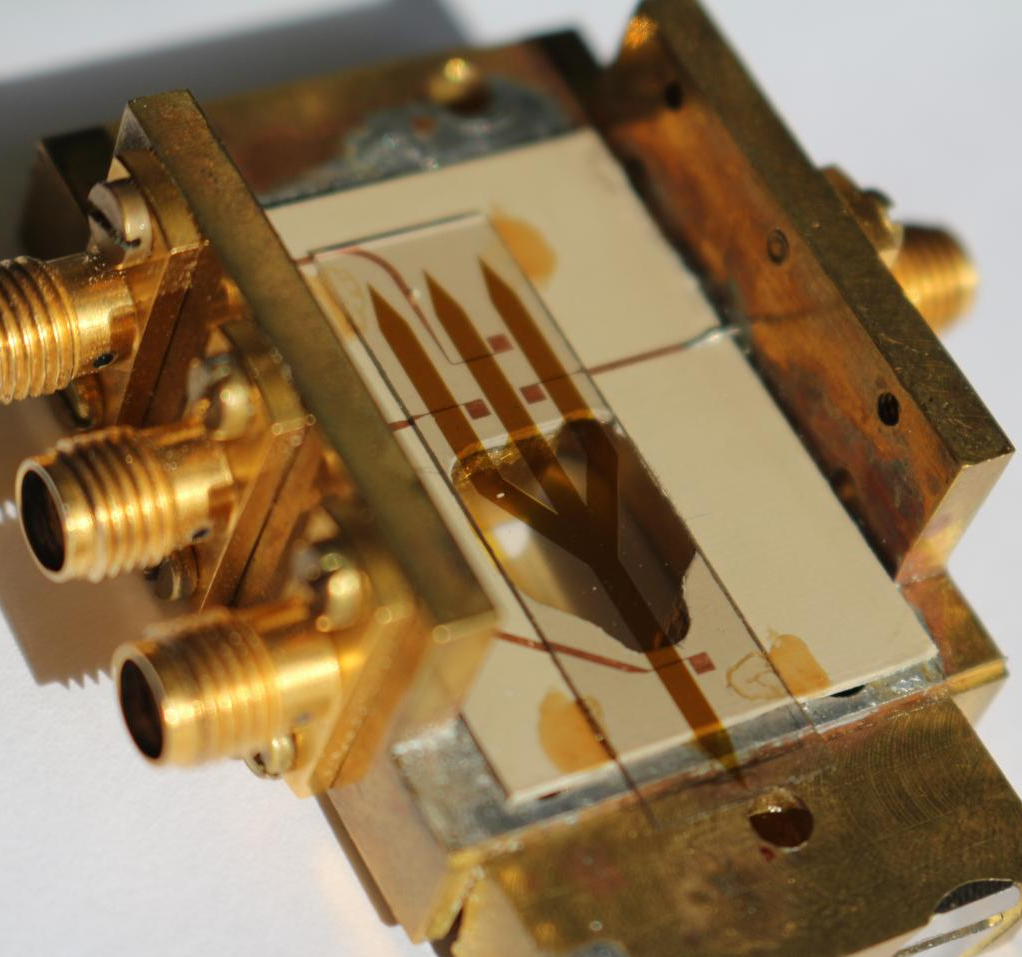

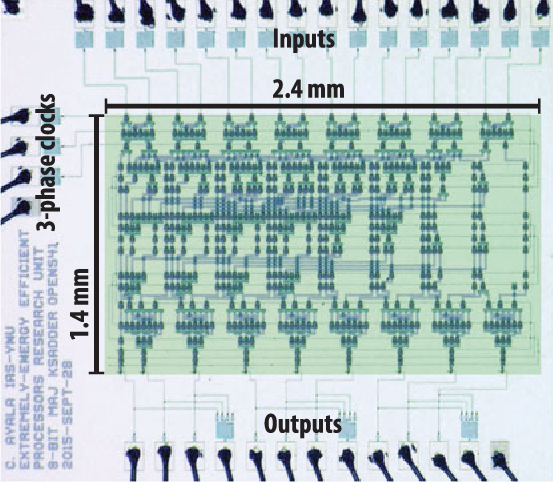

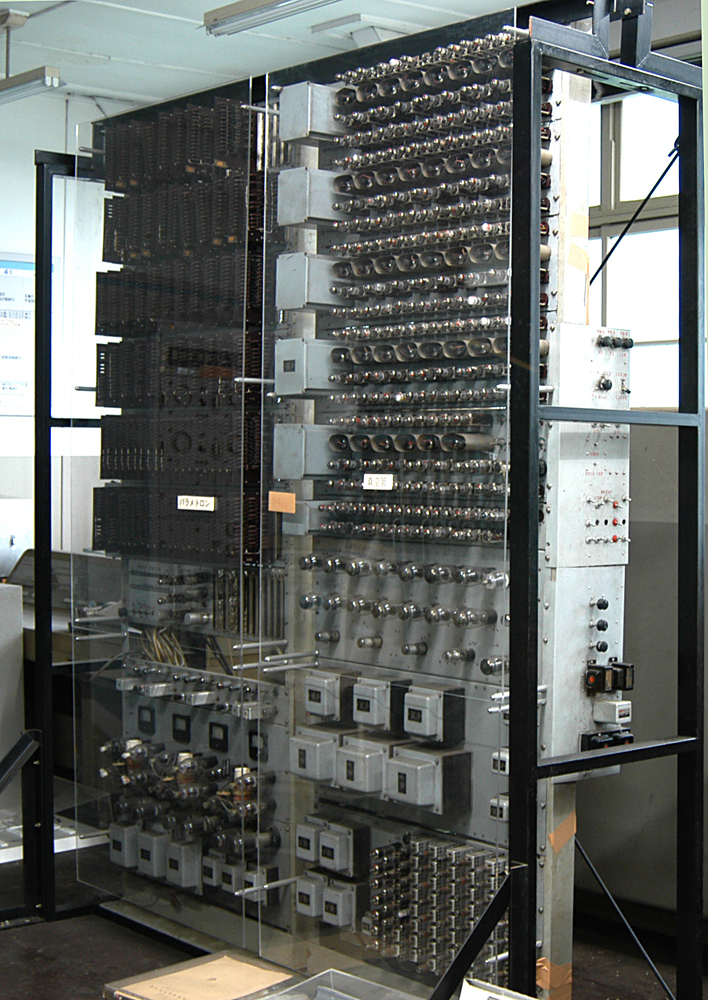

Several early Parametron computers were built, and from the user's perspective they worked just like ordinary computers made of gates. NEC put out a brochure for one in English that clearly shows just how boringly normal these could be if you never looked under the hood. A few tubes were used for convenience to pump the oscillators, but they weren't doing logic with those tubes. An alternator could have provided the same pump waveforms, for all that mattered.

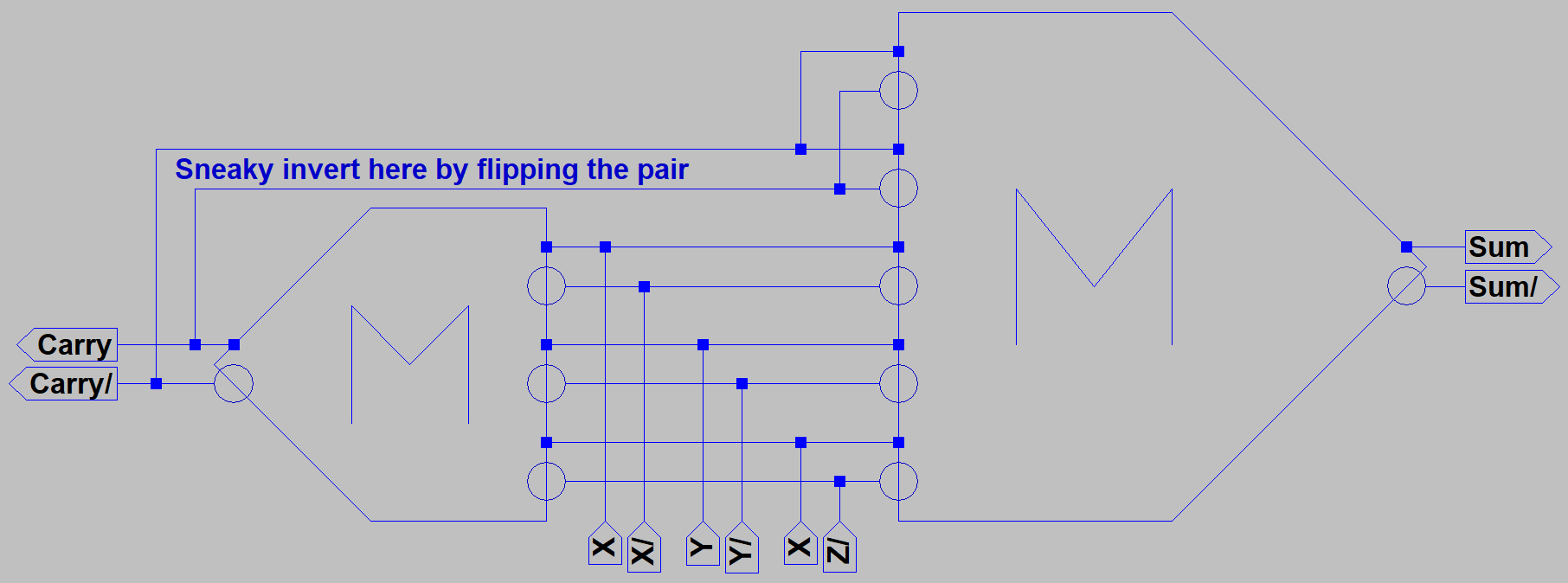

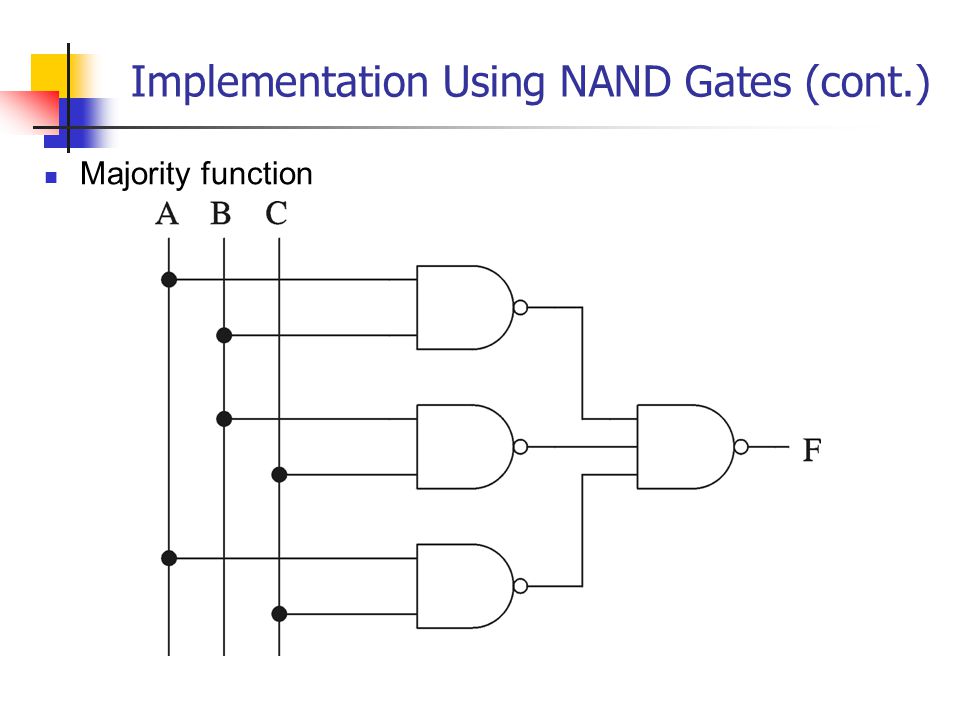

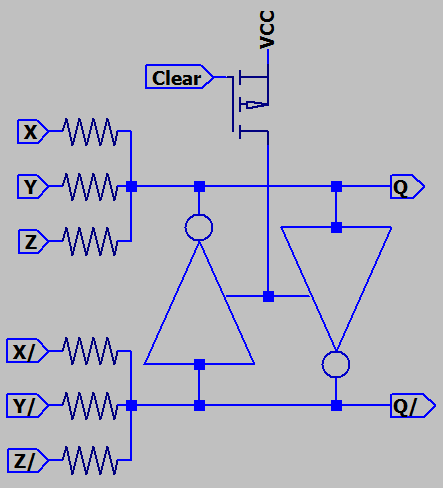

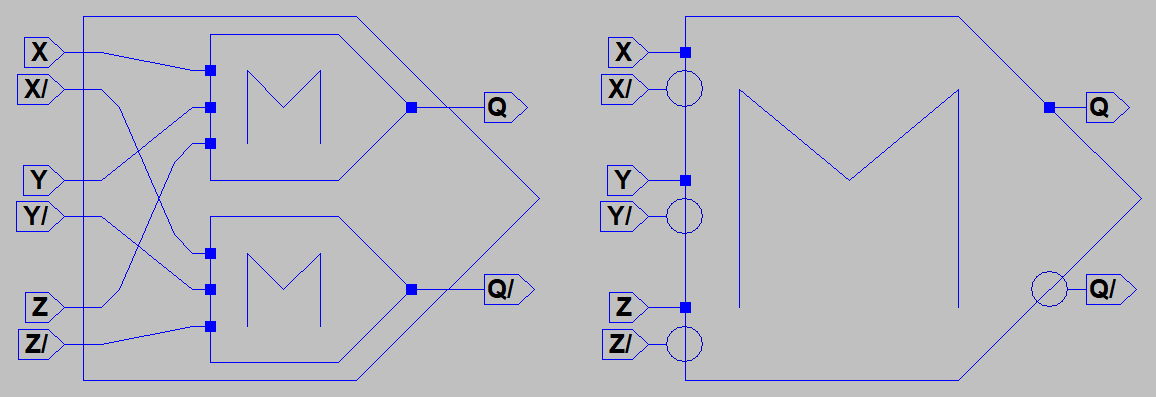

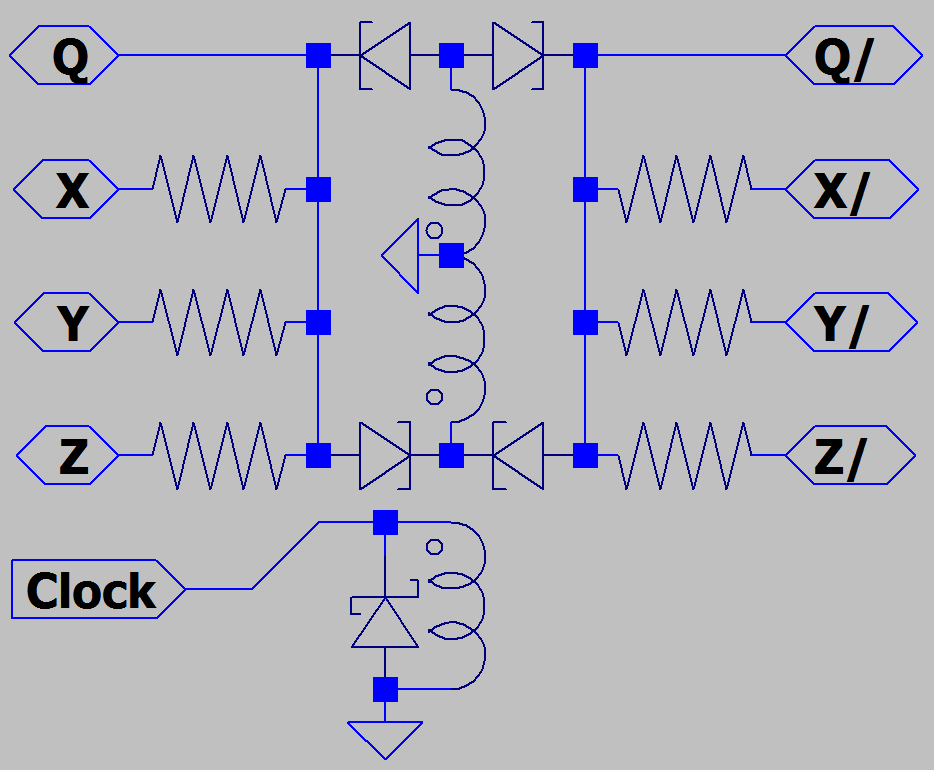

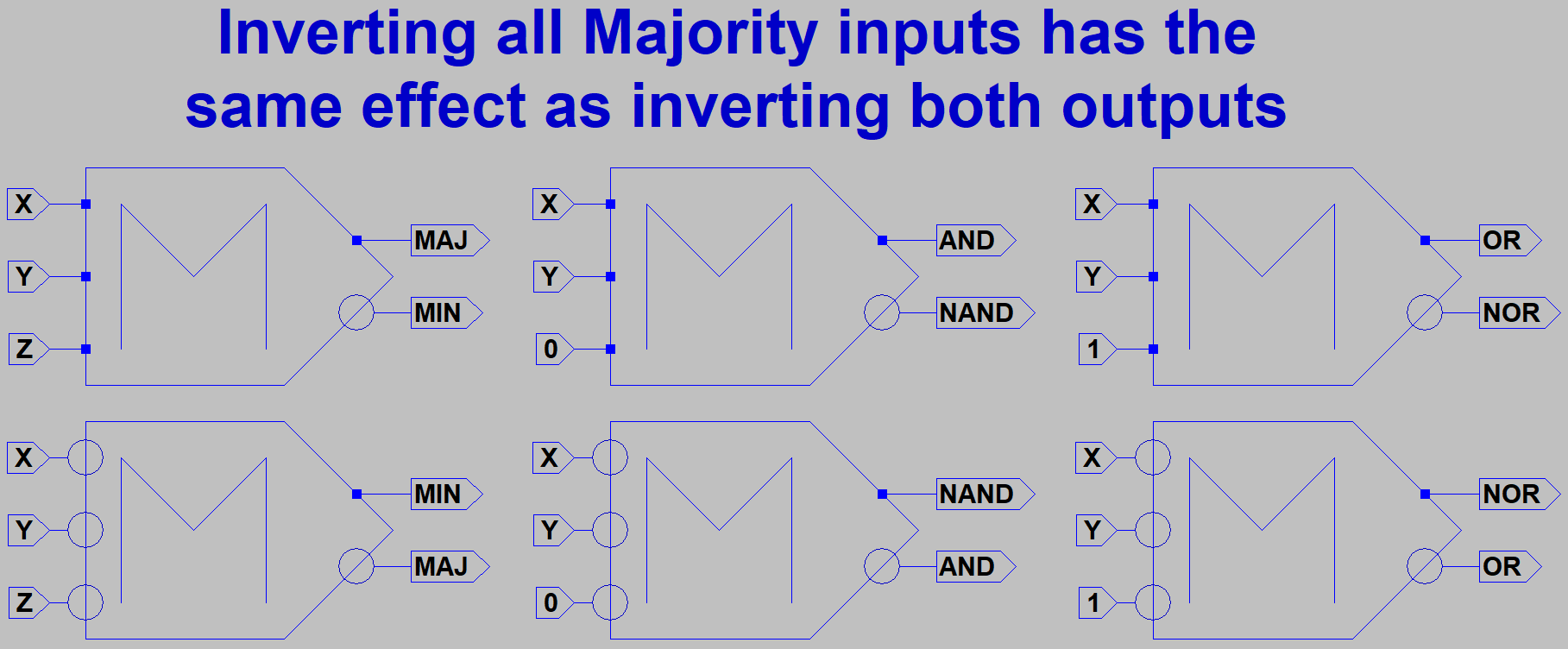

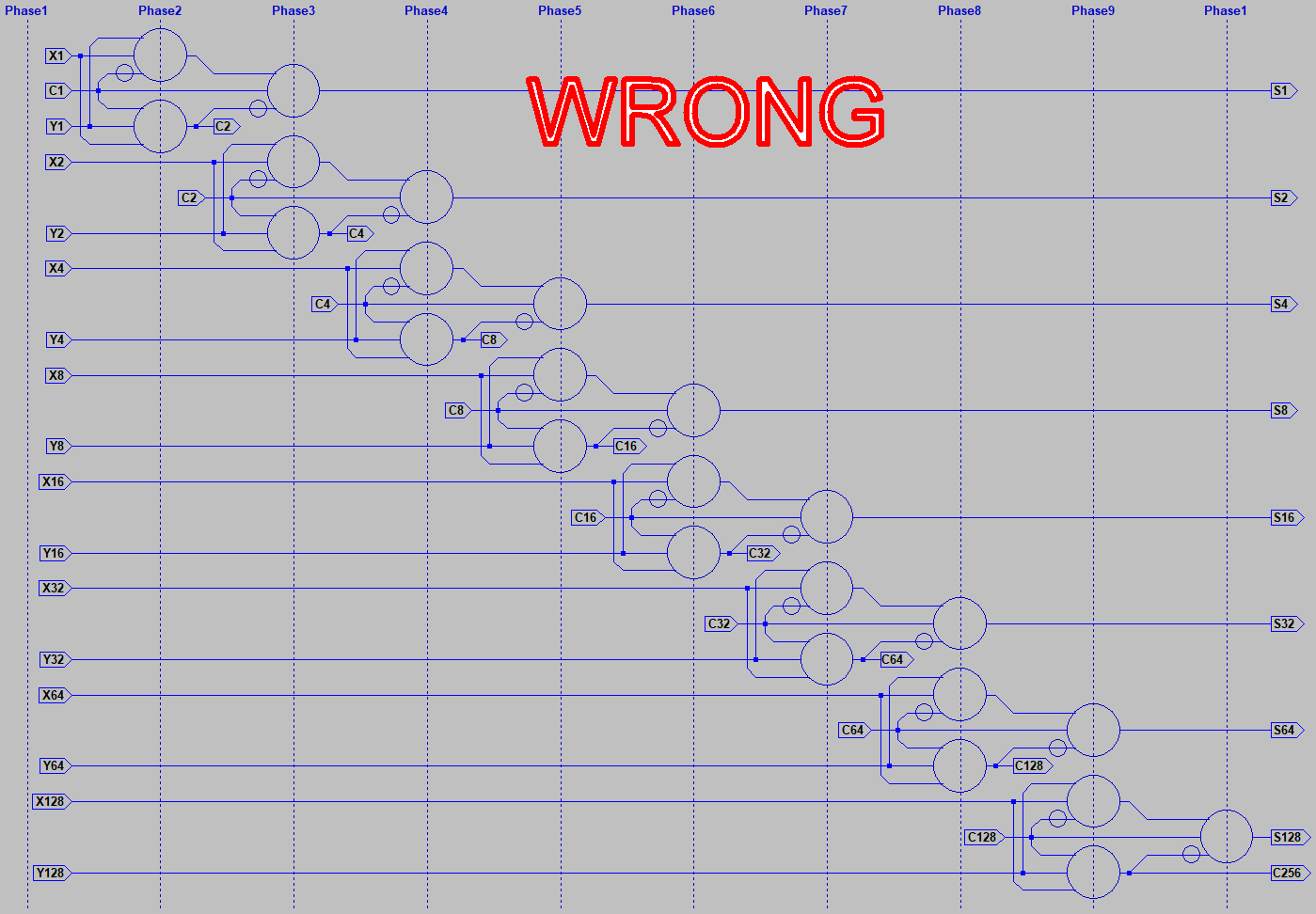

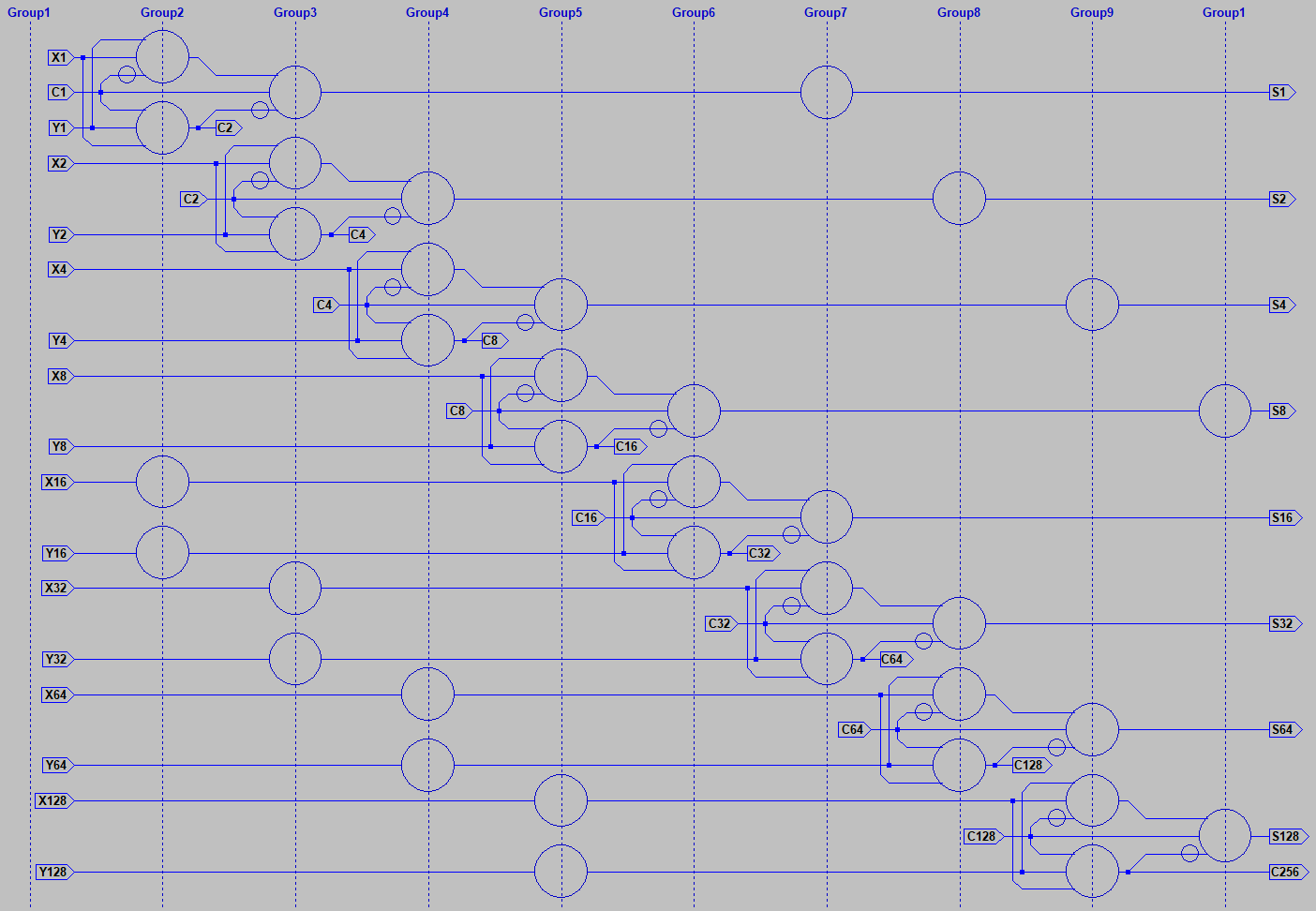

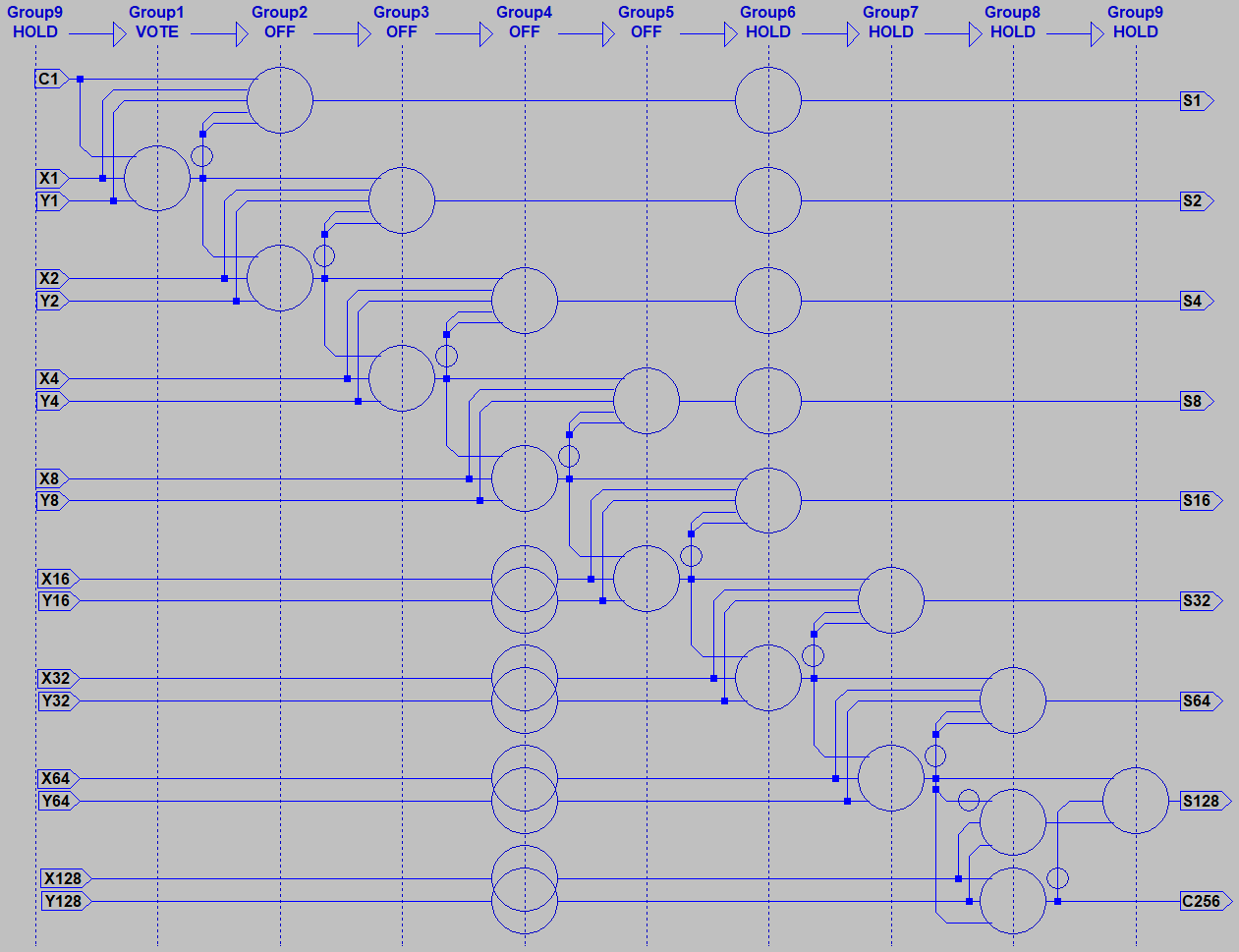

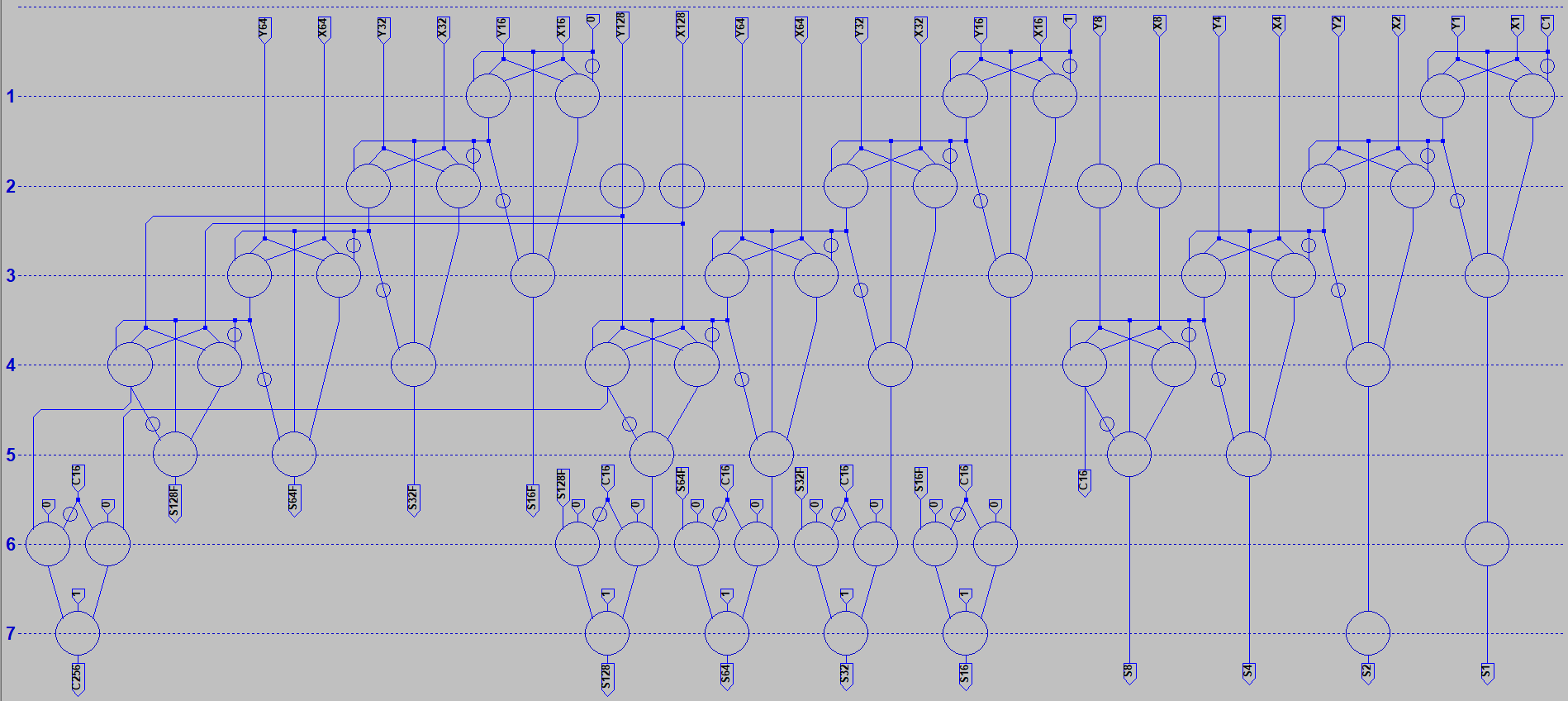

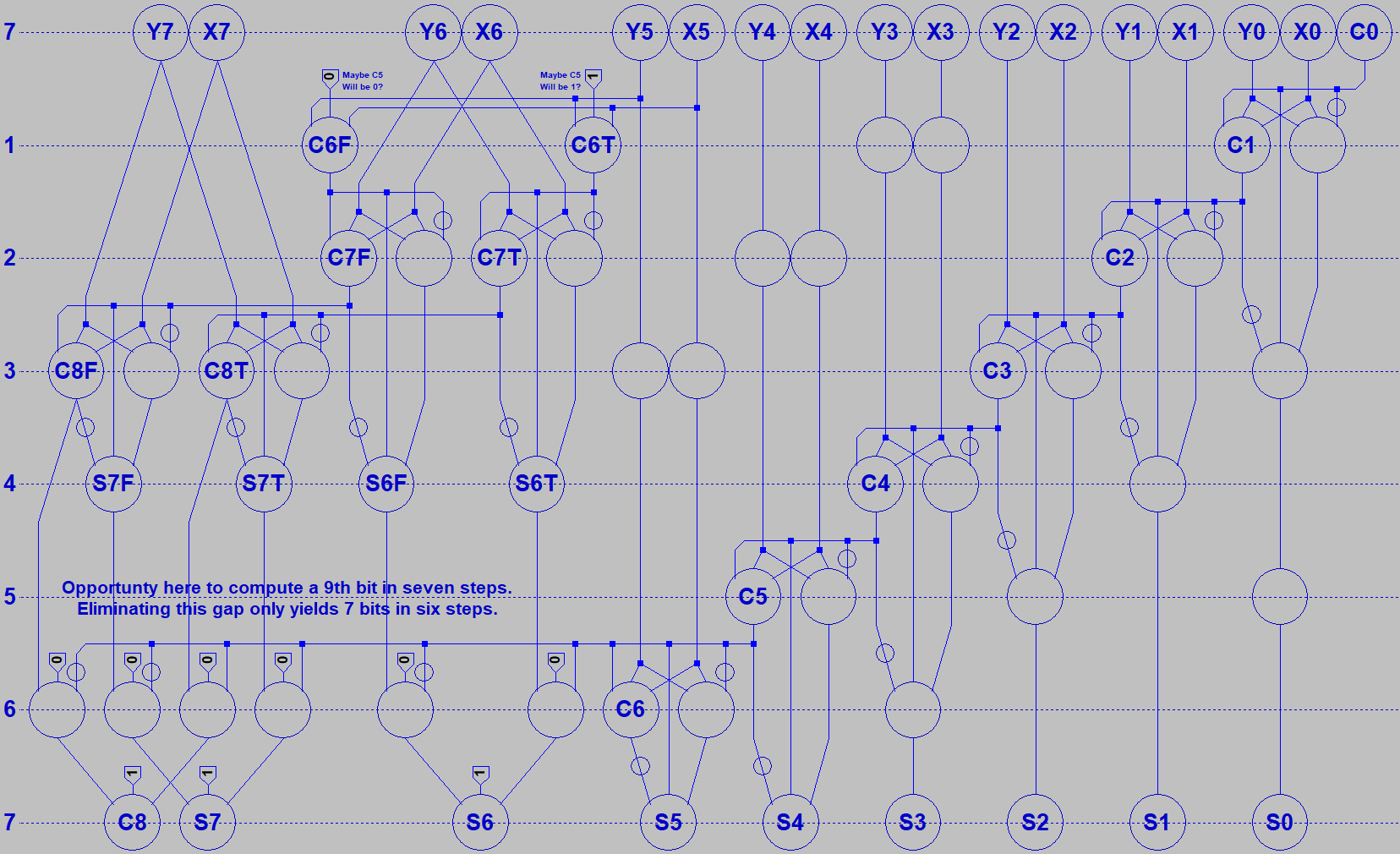

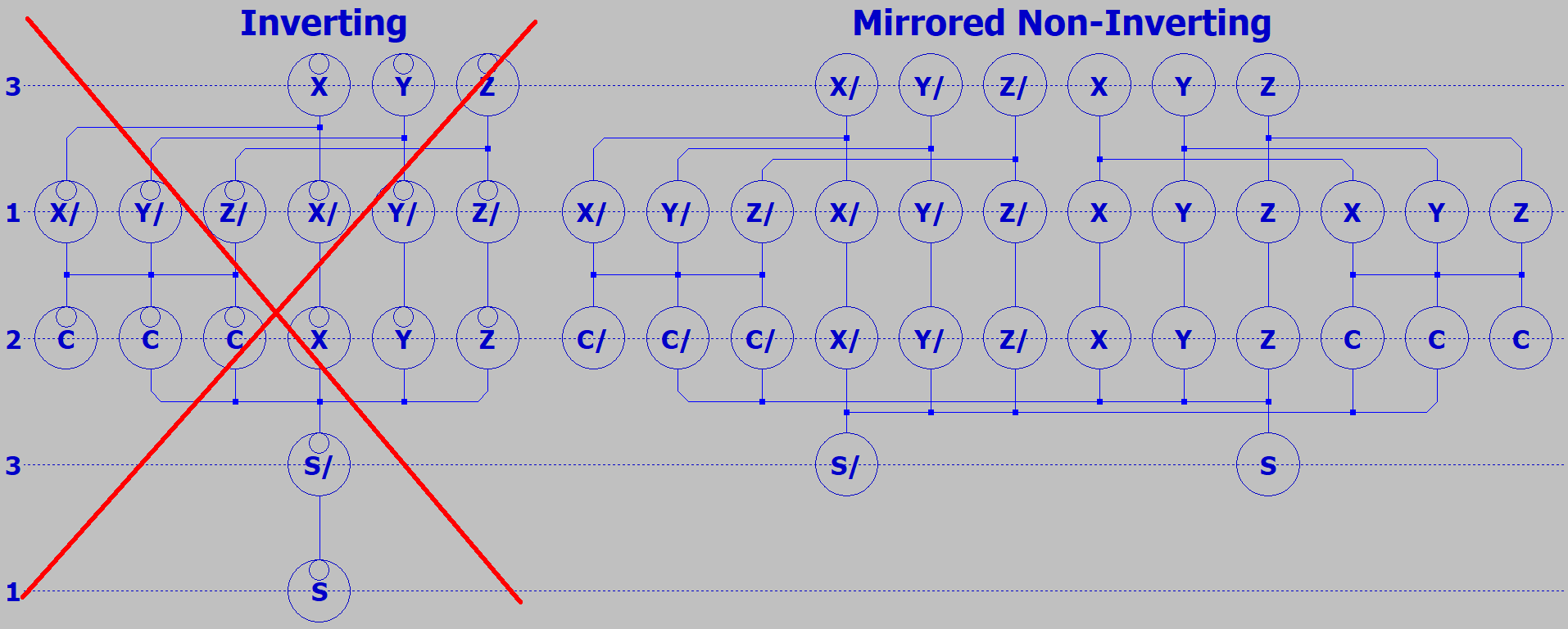

Familiar gates like NAND and NOR have dedicated inputs and outputs. Majority logic is also possible

with gates, but doing so would miss out on several interesting advantages. Majority logic devices were

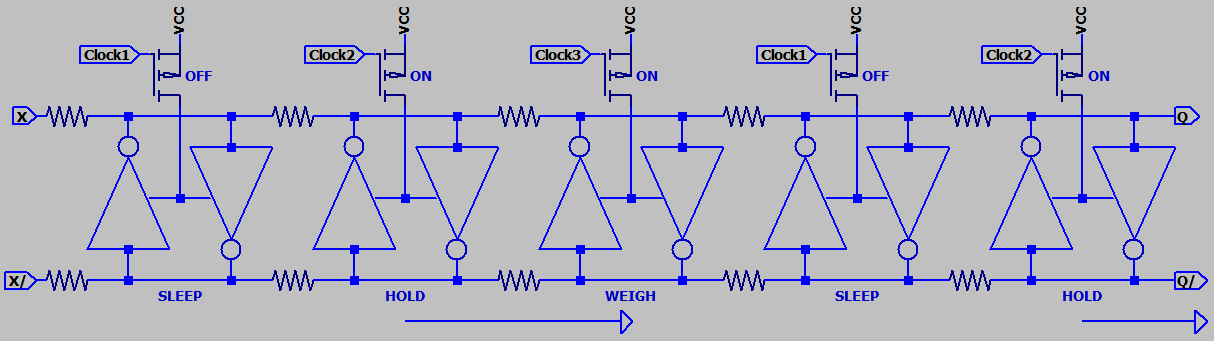

usually symmetrical, having I/Os without direction. Logic would pulled forward by a three phase clock

that powered down selected banks of devices to forget thier stored state and accept new votes. This

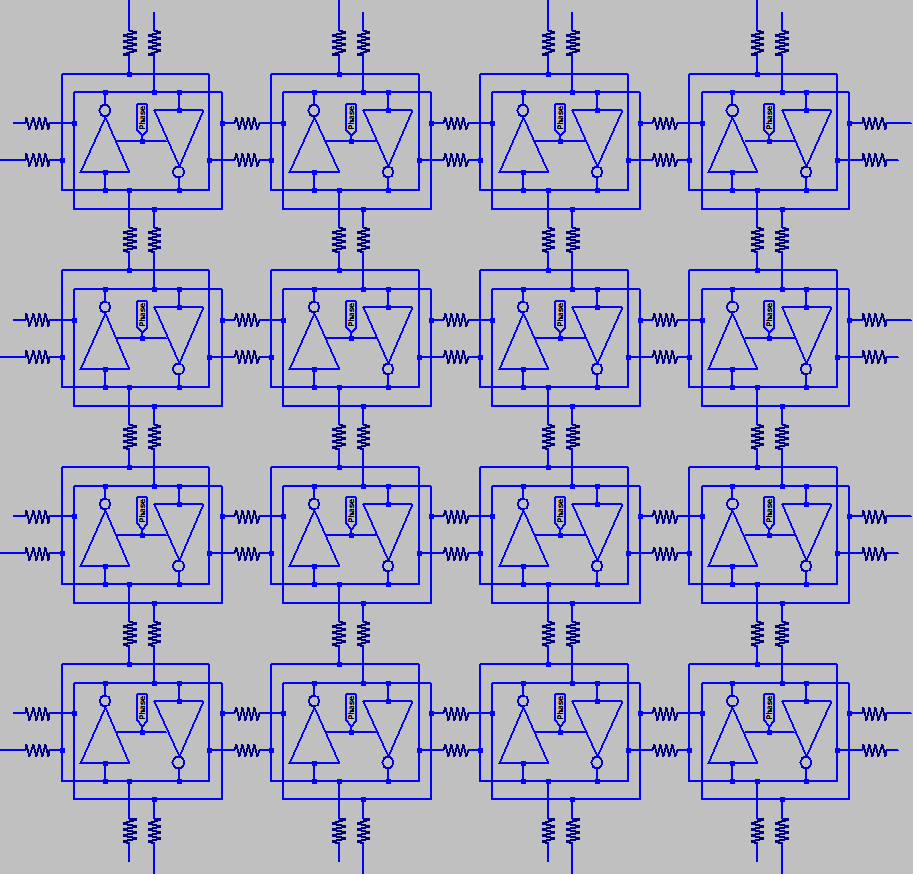

may sound inconvenient, but is powerful important. Because I will show how a programmable array

of such devices can pull logic forward, backward, or sideways...
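For anyone who wants to poke at the clocking idea, here is a toy sketch in Python. It is only my simplified model, not a circuit simulation: the chain layout, the names `tick` and `run`, and the rule "device `i` belongs to bank `i % 3`" are all assumptions made for illustration. On each tick one bank is powered down and forgets, one bank wakes up and latches whatever its still-powered neighbour says, and the third bank holds.

```python
N_PHASES = 3  # three clock phases, one bank of devices per phase

def tick(cells, t, direction=+1):
    """Advance a chain of devices by one clock tick (toy model).

    cells[i] is 0, 1, or None (powered down). Device i sits in bank i % 3.
    direction=+1 steps the clock phases in forward order, -1 in reverse.
    """
    waking = (direction * t) % N_PHASES          # bank that latches a new vote
    sleeping = (waking + direction) % N_PHASES   # bank powered down this tick
    # Power down the sleeping bank so it forgets its stored state.
    new = [None if i % N_PHASES == sleeping else v for i, v in enumerate(cells)]
    for i in range(len(new)):
        if i % N_PHASES != waking:
            continue
        # Directionless I/O: the device listens to both neighbours, but only
        # the one that is still powered has anything to say.
        live = [new[j] for j in (i - 1, i + 1)
                if 0 <= j < len(new) and new[j] is not None]
        # Assumption: with no live vote we leave the device undefined.
        # A real device would settle into a random phase instead.
        new[i] = live[0] if live else None
    return new

def run(cells, ticks, direction=+1):
    """Apply ticks 1..ticks in order and return the final chain state."""
    for t in range(1, ticks + 1):
        cells = tick(cells, t, direction)
    return cells

if __name__ == "__main__":
    print(run([1, None, None, None, None, None, None], 4))       # bit drifts right
    print(run([None, None, None, None, None, None, 1], 2, -1))   # bit drifts left
```

Same devices, same wiring. Only the order of the clock phases decides which way the bit travels.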

So how do we compute with majority logic? Well, majority only makes sense if we start with an odd number of votes, so there will always be an unambiguous winner. You can make a pretty good true random number generator by having no inputs. But for normal Boolean logic we need at least three votes. The majority of (1,X,Y) gives the same answer as OR. The majority of (0,X,Y) gives the same answer as AND. These devices were usually differential, so NAND and NOR were as simple as reversing the pair of output wires, giving us minority logic.
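If you want to check the truth tables yourself, here is a minimal Python sketch. The function names `majority` and `minority` are mine, and bits are modelled as plain 0/1 integers rather than oscillator phases.

```python
from itertools import product

def majority(*votes):
    """Return 1 if more than half of the 0/1 votes are 1, else 0."""
    if len(votes) % 2 == 0:
        raise ValueError("majority needs an odd number of votes")
    return int(sum(votes) > len(votes) // 2)

def minority(*votes):
    """Majority with the output pair swapped, i.e. the inverted result."""
    return 1 - majority(*votes)

if __name__ == "__main__":
    print("X Y | OR AND NOR NAND")
    for x, y in product((0, 1), repeat=2):
        print(x, y, "|",
              majority(1, x, y),   # constant 1 vote -> OR
              majority(0, x, y),   # constant 0 vote -> AND
              minority(1, x, y),   # inverted OR     -> NOR
              minority(0, x, y))   # inverted AND    -> NAND
```

One vote is tied to a constant and the other two are the real inputs, so a single three-input device covers all four functions.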

NAND and NOR are universal gates that can build anything. A minority of three gate replaces both.

NOR gates and nothing but NOR gates took people to the moon. Parametrons havn't quite gotten

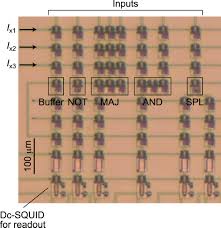

there yet, though they certainly could have. But looking forward, gated logic is making less sense

as transistors become impossibly small. Directionless logic may become the easier trick to pull off.

Majority of three spins or something, way more than one way to skin that cat. Will even show how

majority logic can be done using only light. Not that light is practical yet, just saying its been done.

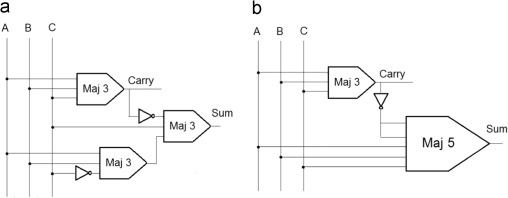

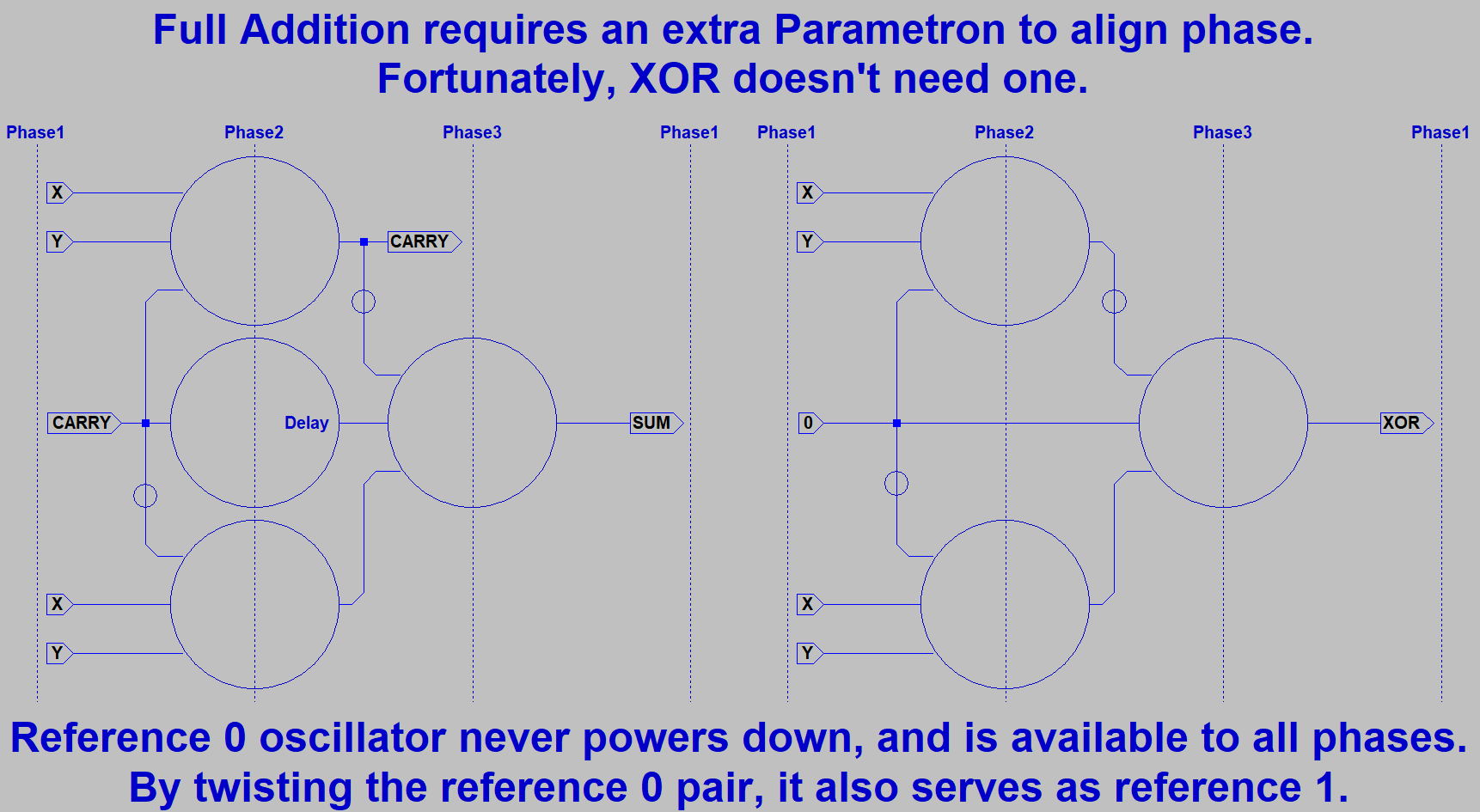

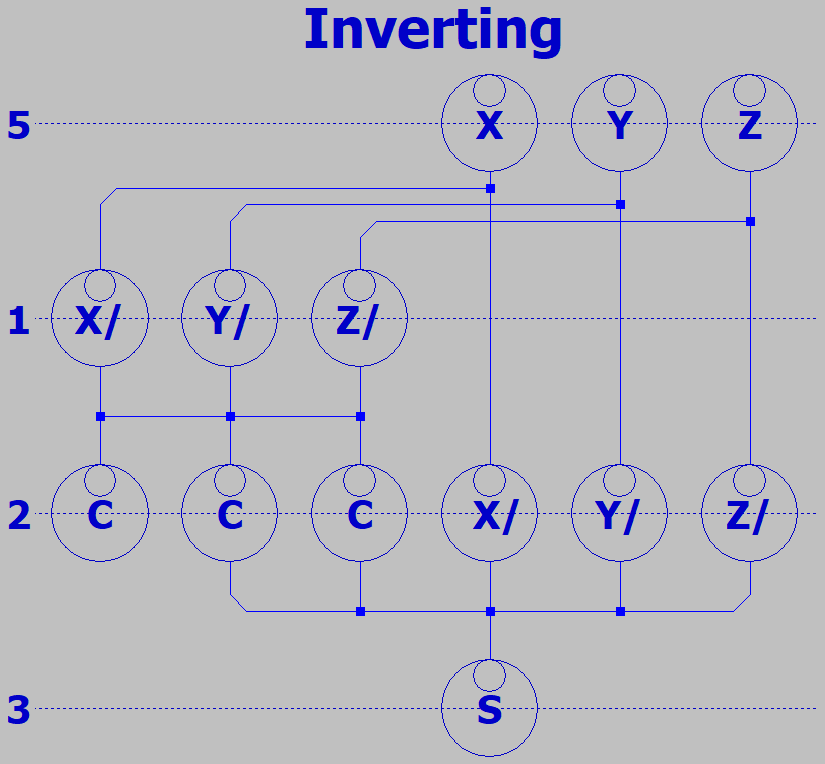

So we got NAND and NOR. Also stores the result like a one bit memory or flipflop. What else can

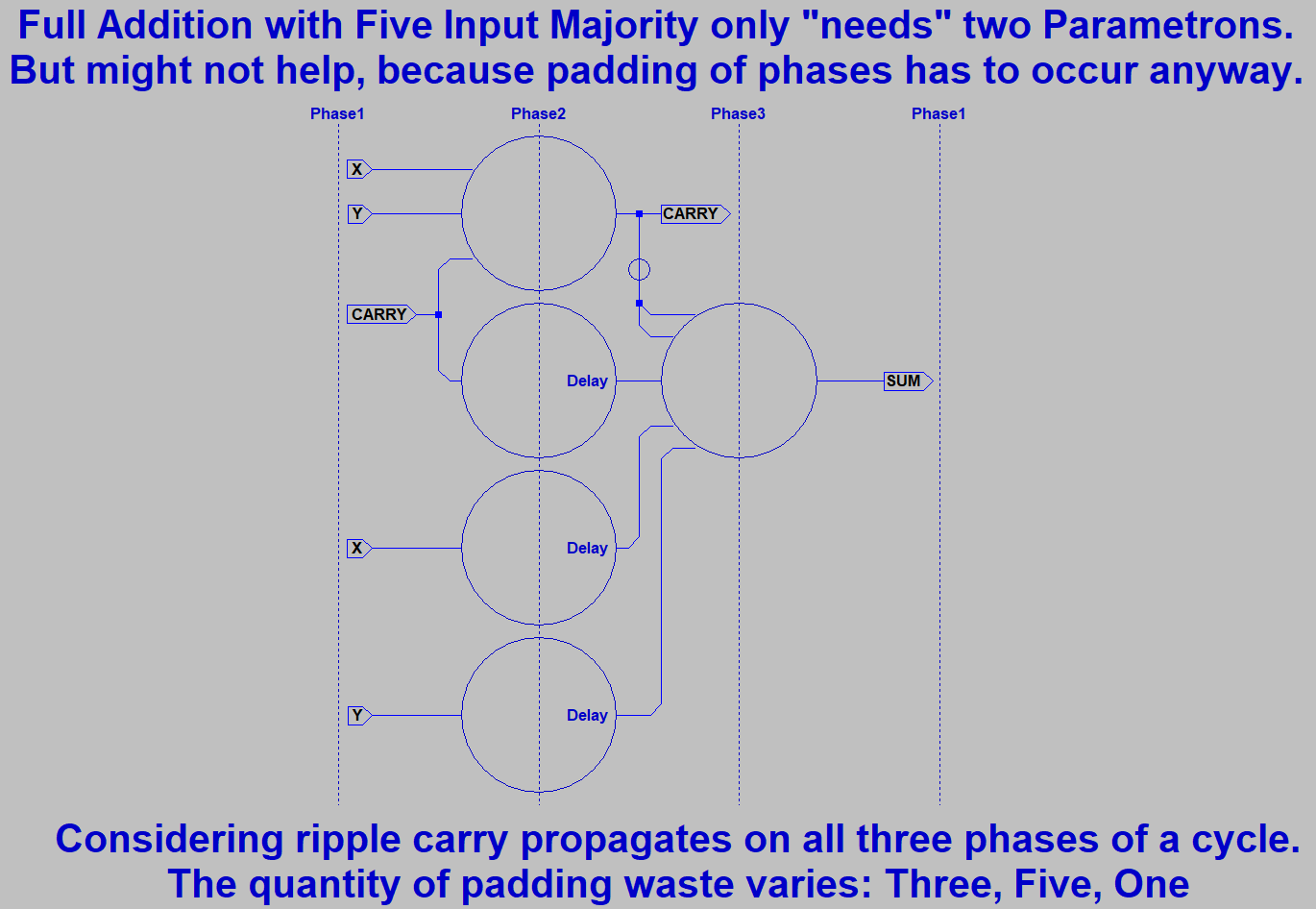

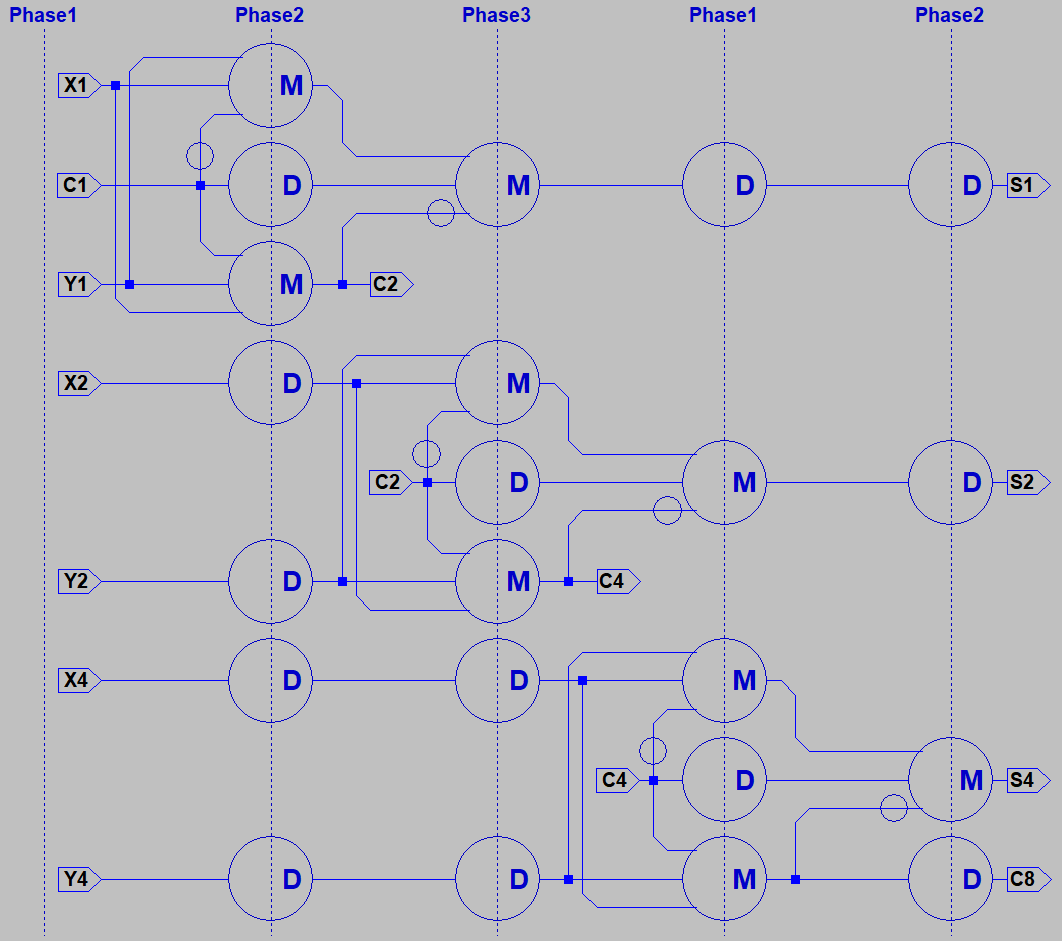

it do? The Majority of (X,Y,Z) gives us CARRY. Cool, so we now have an Arithmetic Logic Unit

that can do a math function or either of two logic functions! Lets complete the ADD function with a

five way vote of (X,Y,Z,NotCarry,NotCarry). Question: How many gates does that usually take???
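Here is the carry and five-way sum vote as a quick Python sketch, so you can confirm it really adds. The names `majority`, `full_adder`, and `ripple_add` are mine, and bits are plain 0/1 integers. For comparison, the textbook gate version of a full adder is commonly drawn with two XORs, two ANDs, and an OR.

```python
def majority(*votes):
    """Return 1 if more than half of the 0/1 votes are 1, else 0."""
    if len(votes) % 2 == 0:
        raise ValueError("majority needs an odd number of votes")
    return int(sum(votes) > len(votes) // 2)

def full_adder(x, y, z):
    """Add three bits using two majority votes; returns (carry, sum)."""
    carry = majority(x, y, z)        # three-way vote gives CARRY
    not_carry = 1 - carry            # differential output, wires swapped
    # NotCarry votes twice, so it outweighs a single dissenting input.
    total = majority(x, y, z, not_carry, not_carry)
    return carry, total

def ripple_add(a, b, width=8):
    """Add two non-negative integers bit by bit, chaining full_adder."""
    carry, result = 0, 0
    for i in range(width):
        carry, s = full_adder((a >> i) & 1, (b >> i) & 1, carry)
        result |= s << i
    return result | (carry << width)

if __name__ == "__main__":
    for x in (0, 1):
        for y in (0, 1):
            for z in (0, 1):
                print(x, y, z, "->", full_adder(x, y, z))
    print(ripple_add(100, 55))
```

Two votes per bit: one three-way for the carry, one five-way for the sum.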

I'll let you google yourselves up to speed on this, while I gather up the promised attachments.


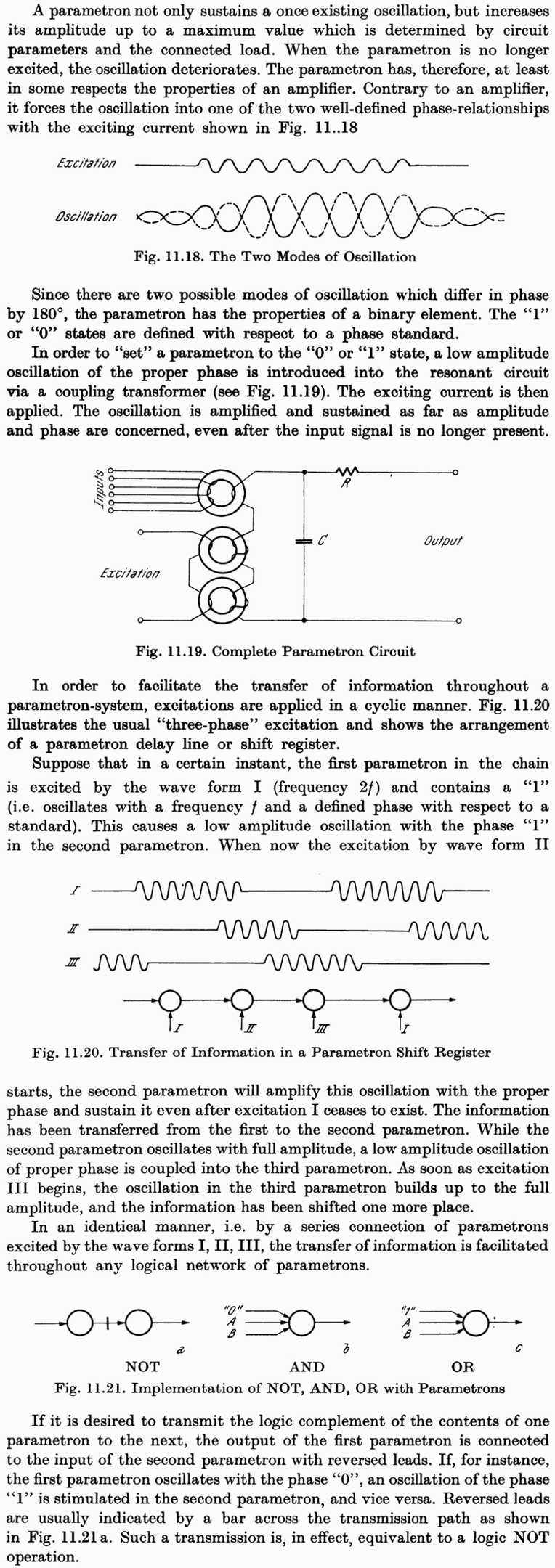


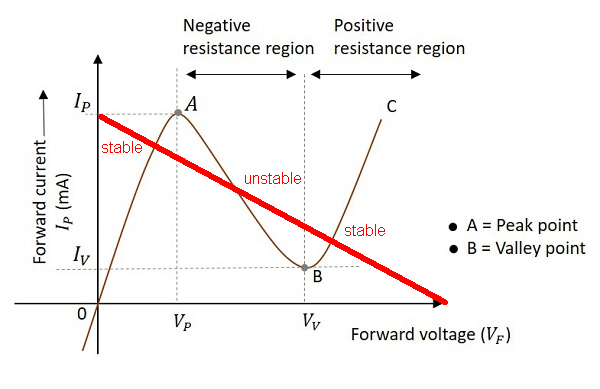
