Since Brent passed on the article, I bring you:

Something I've seen claimed many times on the Internet is that there is a visible difference in color and font quality between AMD and nVidia cards. People claim this even with new monitors, new cards, and 100% digital connections. This seems impossible to me, so I decided to put it to a real, objective test in this article.

TL;DR Version: There is no difference in image quality between nVidia and AMD.

Full Version

Overview of Claims

The claim we are evaluating is that there is a visible difference in 2D quality between AMD and nVidia cards. Numerous times on forums, people have claimed that they see a difference in the colors, the fonts, or both. They are sure they've seen this difference when they've gotten a new card or looked at a different system. Generally the claim is that AMD has "richer" colors and better looking fonts. The claim is also that this is universal: it is a function of the company, not of a specific card, vendor, or monitor.

Additionally, the claim is that this happens on modern systems with all-digital connections. An LCD monitor connected to a video card with a DVI or DP cable is claimed to show the difference. So it isn't a difference in conversion to analog; it is supposedly an actual difference in the cards and the way they handle colors.

Technical Details: Why it Shouldn't be the Case

The problem is that when you learn a bit about how graphics actually work on computers, it all seems to be impossible. There is no logical way this would be correct. The reason is that a digital signal remains the same, no matter how many times it is retransmitted or changed in form, unless something deliberately changes it.

In the case of color on a computer, you first have to understand how computers represent color. All colors are represented using what is called a tristimulus value. This means each color is made up of a red, a green, and a blue component. This is because our eyes perceive those colors and use that information to give our brains the color detail we see.

Since computers are digital devices, each of those three components is stored as a number. In the case of desktop graphics, that is an 8-bit value, from 0 to 255. You may have encountered this before in programs like Photoshop, which have three sliders, one for each color, demarcated in 256 steps, or in HTML code, where you specify colors as #XXYYZZ and each pair of characters is a color value in hexadecimal (FF in hex is equal to 255 in decimal).
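To make the hex notation concrete, here is a minimal Python sketch that splits an HTML-style color string into its three 8-bit values. The function name `parse_hex_color` is just something I made up for illustration.

```python
def parse_hex_color(code):
    """Split an HTML-style #RRGGBB string into 8-bit (R, G, B) integers."""
    digits = code.lstrip("#")
    if len(digits) != 6:
        raise ValueError(f"expected 6 hex digits, got {code!r}")
    # Each pair of hex characters is one channel, 00 (0) through FF (255).
    return tuple(int(digits[i:i + 2], 16) for i in (0, 2, 4))


print(parse_hex_color("#FF8000"))  # (255, 128, 0)
```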

When the computer wants a given color displayed, it sends that tristimulus value to the video card. There the video card looks at it and decides what to do with it based on the lookup table in the video card. By default, the lookup table doesn't do anything; it is a straight line, specifying that the output should be the same as the input. It can be changed by the user in the control panel, or by a program such as a monitor calibration program. So by default, the value the OS hands the video card is the value the video card sends out over the DVI cable.

What this all means is that the monitor should be receiving the same digital data from either kind of card, and thus the image should be the same. So it would seem the claim isn't possible.
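The lookup table idea can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: real cards keep a separate table per channel and may use more than 8 bits internally, and the names `identity_lut`, `gamma_lut`, and `apply_lut` are hypothetical, not any driver's API.

```python
def identity_lut(size=256):
    """Default table: every input level maps to itself (the straight line)."""
    return list(range(size))


def gamma_lut(gamma, size=256):
    """A hypothetical adjusted table, like one a calibration tool might load."""
    top = size - 1
    return [round(top * (level / top) ** gamma) for level in range(size)]


def apply_lut(rgb, lut):
    """Look up each 8-bit channel in the table (one shared table, for brevity)."""
    return tuple(lut[channel] for channel in rgb)


pixel = (200, 120, 40)
print(apply_lut(pixel, identity_lut()))  # unchanged: (200, 120, 40)
print(apply_lut(pixel, gamma_lut(1.2)))  # different values once the table is altered
```

With the identity table, whatever goes in comes out, regardless of who made the card. Only a modified table changes the values.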

The Impartial Observer

For color, we need an impartial observer. Humans are notoriously bad with color perception, and lots of things can change what we perceive a color to be. Fortunately, I happen to have one on hand.

For this test we'll be using the i1Display Pro, aka i1Display 3, aka EODIS3. This is a modern, quality colorimeter that can read color levels with greater precision than the human eye. It is made for calibrating displays, and this particular one is what I use to calibrate mine.

It will be used to measure various color patches. I'll give the same patch set to both the AMD card and the nVidia card and see if there is any difference in measured color. This gives us good, objective results.

Test Setup

For these tests I'll be using my NEC MultiSync 2690WUXi monitor. It is a professional IPS monitor, designed with color accuracy in mind. It actually has internal lookup tables, so it can have correction applied internally, independent of the device feeding it the signal. Measurements will be taken by the aforementioned i1Display Pro. The software I'll be using is NEC's SpectraView II. It is the monitor calibration software for this screen, but it can also generate color patches and report their measured values.

In the nVidia corner I have a GeForce GTX 680 in my desktop, and in the AMD corner I have a Radeon 5850M in my laptop. The difference in power between these cards isn't relevant to this test, since we aren't testing performance, or even 3D, just color and fonts.

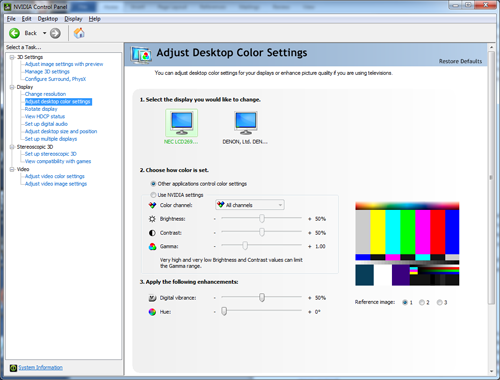

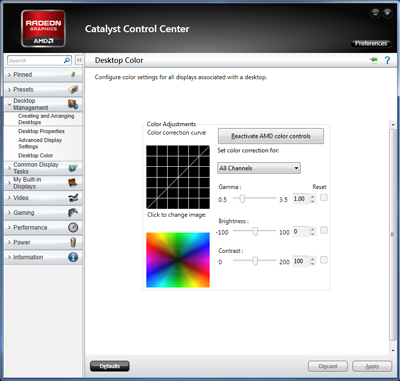

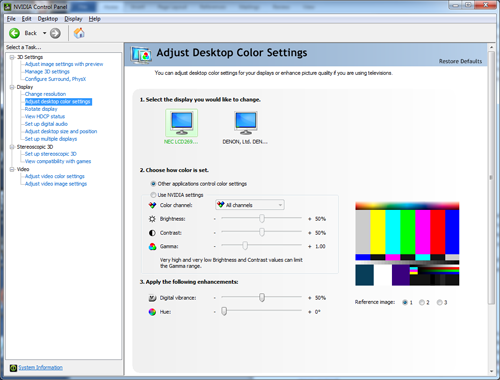

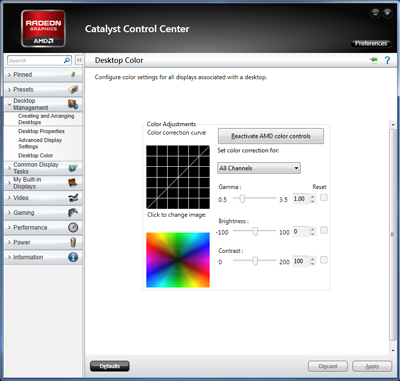

The first step on both systems is to make sure that all onboard color modifications are turned off. We know that the cards can change the color if told to; the question is whether they differ when no changes are applied. That means having brightness, contrast, gamma, digital vibrance, hue, temperature, and all that set to no modification.

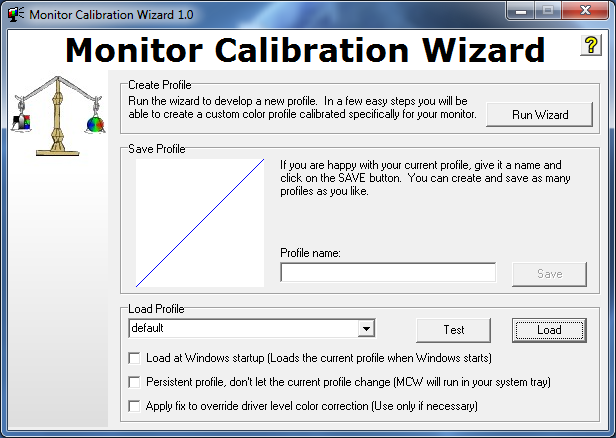

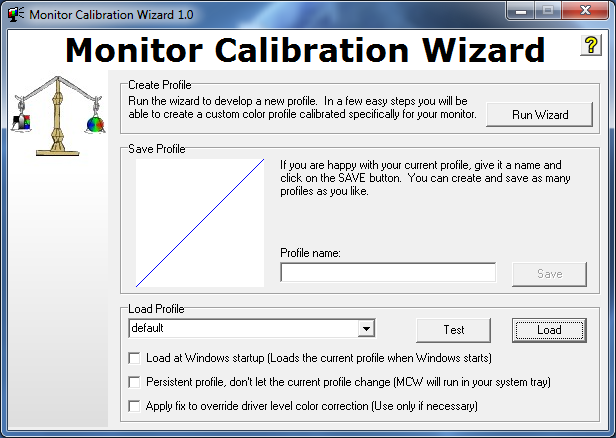

Then I'll use Monitor Calibration Wizard just to make sure the system lookup tables are unaltered, meaning a straight line.

That done, it is time to take some measurements. The monitor is warmed up and calibrated, the room is dark, and the sensor is good to go.

Color Test

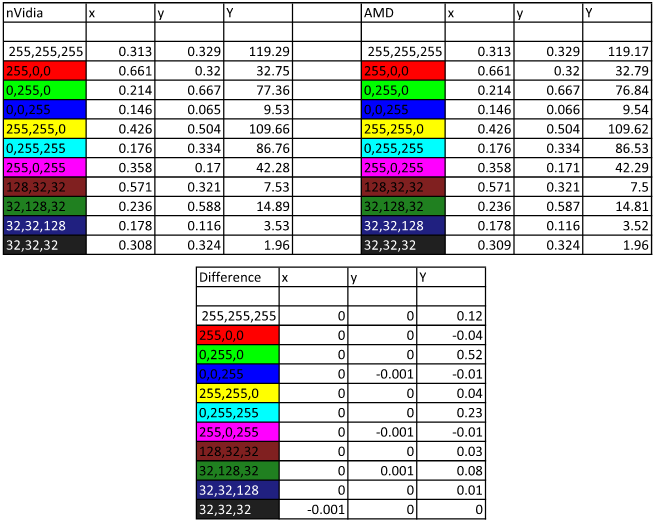

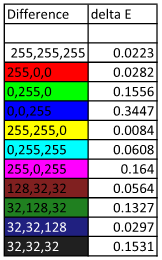

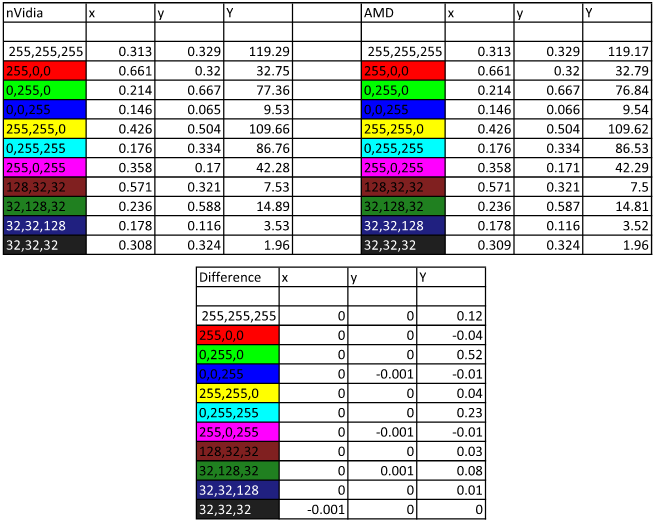

For this test I'll use a variety of color patches: one of each bright primary and secondary color, as well as three pastels and two neutral colors. Colors are measured on the standard CIE chart and expressed in terms of xy for color coordinates and Y for luminance (brightness).

Looking at the charts, you can already see that most colors are dead on with regard to the x and y values, and very close on the luminance (Y) value. In fact, what you see here is as perfect a match as this setup can measure. The meter is not a perfectly accurate device to begin with, and it is also slightly affected by temperature. Also, there are small variations across the screen, and I don't place the meter on precisely the same spot every time I take a reading. A difference of ±0.001 xy is normal, and in fact below the rated error for this device. It is also completely unnoticeable.

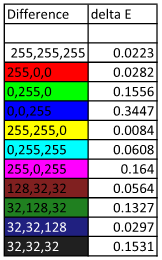

To quantify whether a difference is noticeable, the difference between two measurements can be expressed as a value called delta E, a calculation of how different two colors appear to the human eye. A value under 4 is generally unnoticeable to most observers for most colors. A value of 2 or under is considered acceptable for print. A value of 1 is the lowest perceptible difference for any color; the scale is defined so that 1 corresponds to that threshold.

As we can see, the highest delta E for the readings is 0.34, and most are under 0.2, well below a perceptible level and getting close to the margin of error of the colorimeter. I am quite sure we'd have an even closer match with better equipment and a precise aim on the monitor. The very, very small differences we are seeing are a result of imperfect measurement equipment, not any actual difference.
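For anyone who wants to run this kind of comparison on their own readings, here is a rough Python sketch of how a delta E can be derived from xyY measurements. It uses the CIE76 formula, which is the simplest one. I am not claiming that is the formula SpectraView II uses, since it may well use a newer one such as CIE94 or CIEDE2000. The readings and the white point below are hypothetical numbers chosen for illustration, not values from my charts.

```python
import math


def xyY_to_XYZ(x, y, Y):
    """Convert CIE xyY (chromaticity plus luminance) to CIE XYZ."""
    X = x * Y / y
    Z = (1.0 - x - y) * Y / y
    return X, Y, Z


def XYZ_to_lab(xyz, white_xyz):
    """Convert XYZ to CIE L*a*b* relative to the given white point."""
    delta = 6.0 / 29.0

    def f(t):
        # Cube root above the threshold, linear segment below it.
        if t > delta ** 3:
            return t ** (1.0 / 3.0)
        return t / (3.0 * delta ** 2) + 4.0 / 29.0

    fx, fy, fz = (f(value / white) for value, white in zip(xyz, white_xyz))
    return 116.0 * fy - 16.0, 500.0 * (fx - fy), 200.0 * (fy - fz)


def delta_e_76(lab_1, lab_2):
    """CIE76 delta E: straight-line distance between two L*a*b* colors."""
    return math.dist(lab_1, lab_2)


# Hypothetical readings: a white reference and one gray patch per card.
white = xyY_to_XYZ(0.3127, 0.3290, 140.0)
reading_card_1 = XYZ_to_lab(xyY_to_XYZ(0.3127, 0.3290, 40.0), white)
reading_card_2 = XYZ_to_lab(xyY_to_XYZ(0.3137, 0.3290, 40.1), white)

difference = delta_e_76(reading_card_1, reading_card_2)
print(f"delta E (CIE76): {difference:.2f}")
```

Even with a 0.001 shift in x and a small change in Y, the result for this made-up gray patch stays under 1.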

So as we can see, colors coming from AMD and nVidia cards are dead identical to within the limits of this test, and to within the limits of human perception. This is what we'd expect given the completely digital nature of all the connections: a given input value will produce the same output value, unless something changes it along the way.

If you think you see a difference with your new card, you may want to check your color settings. To be sure, video cards can change color output when you ask them to. However, with everything turned off, they should look completely the same.

Font Test

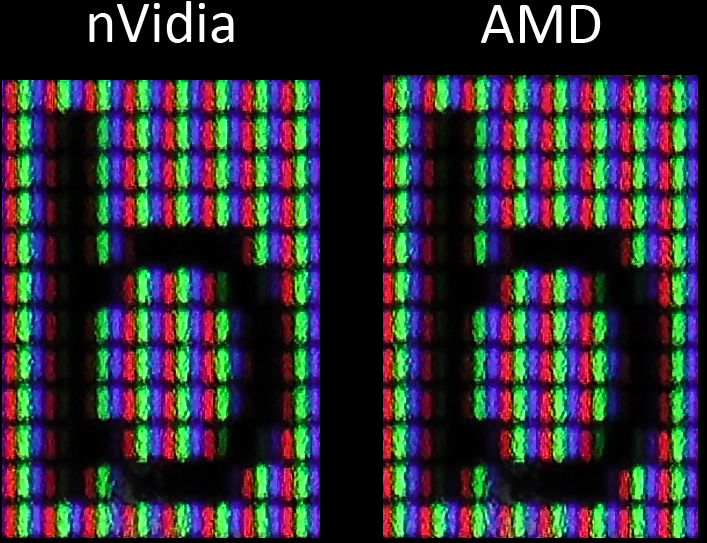

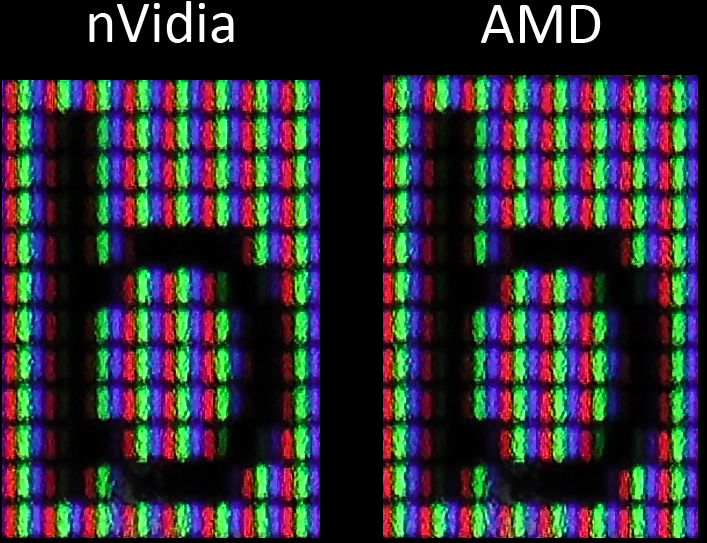

For fonts, there isn't the luxury of a device that can just measure the way they look and give us an objective verdict. However, we can get a good side-by-side comparison using our eyes and a camera. What I've done here is take some extreme closeup pictures of my monitor displaying the same text, in the same font (Calibri), in the same program (Word), from both the GTX 680 and the 5850M. Thus we can get a pretty good look at the actual pixel structure of the text and see if any differences are noticeable. I've attached the full resolution shots so you can have a look at whatever you like, but let's compare a couple of letters. The slight difference in angle is due to me holding the camera.

The thing to notice isn't just the shape of the letter, but the subpixel anti-aliasing. Were there to be a difference, that is where you'd see it. However, as we can see, it is the same in both cases. Subpixel for subpixel, the b from the 12 point font is the same on either card.

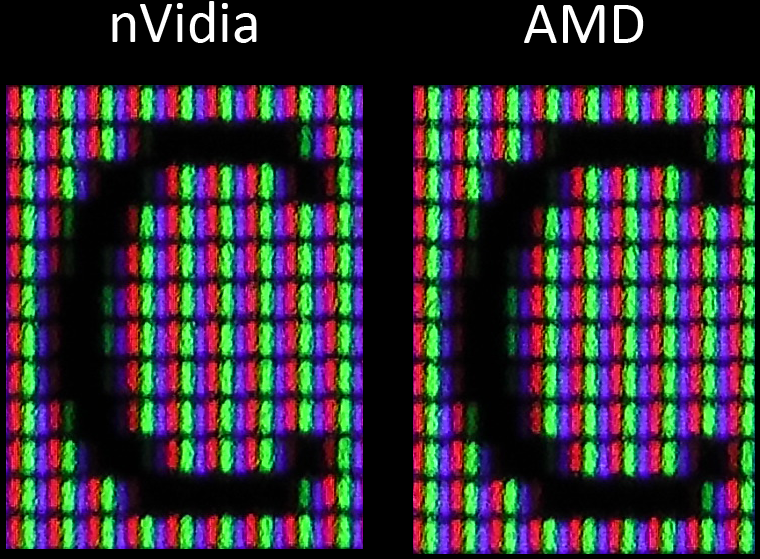

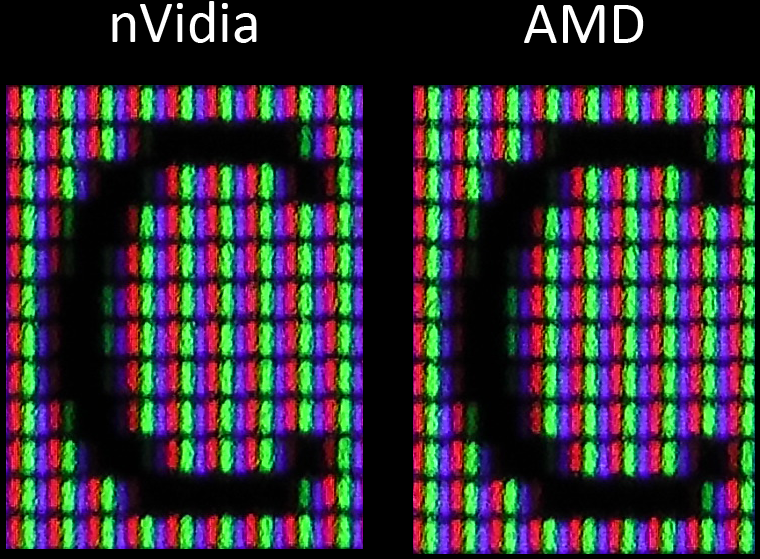

With the 16 point c the result is the same; the anti-aliasing is identical on the subpixel level.

There just isn't any difference to be seen with the characters in the font. Both cards render them the same in every detail.

A possible reason you might see a difference between some systems is that Microsoft actually offers a ClearType tuner. It lets you change how ClearType (the subpixel anti-aliasing) is applied to the fonts to suit your liking. In these pictures it is at the default, but if you have run the tuner, text could look slightly different on your system. When comparing fonts for small details, you need to make sure it is set the same in both cases.
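If you would rather not rely on eyeballing photos, one alternative (not what I did here) is to compare pixel data from same-sized screen captures of the same text on each system. Keep in mind a screenshot only shows what the OS rendered, not what went over the cable. The sketch below is plain Python, the function name is made up, and the tiny crops are hypothetical values standing in for real captures.

```python
def count_subpixel_differences(capture_a, capture_b):
    """Count differing R, G, or B values between two same-sized pixel grids."""
    if len(capture_a) != len(capture_b):
        raise ValueError("captures differ in height")
    differences = 0
    for row_a, row_b in zip(capture_a, capture_b):
        if len(row_a) != len(row_b):
            raise ValueError("captures differ in width")
        for pixel_a, pixel_b in zip(row_a, row_b):
            # Each pixel is an (R, G, B) tuple; compare channel by channel.
            differences += sum(1 for a, b in zip(pixel_a, pixel_b) if a != b)
    return differences


# Hypothetical 1x3 crops of an anti-aliased glyph edge, one per card.
crop_card_1 = [[(255, 255, 255), (231, 180, 120), (0, 0, 0)]]
crop_card_2 = [[(255, 255, 255), (231, 180, 120), (0, 0, 0)]]
print(count_subpixel_differences(crop_card_1, crop_card_2))  # 0 for identical crops
```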

Conclusion

The results were as we'd expect: there is no difference in image quality based on the brand of card you buy. The days of 2D image quality being a function of particular graphics cards are long gone for most people. When CRTs were popular, it mattered, because the quality of the RAMDAC controlled the quality of the analog VGA output. Thus different cards, even with the same chipset, could potentially produce different 2D image quality.

However, LCDs and DVI have changed all that. The signal is fully digital from the OS, to the card, to the monitor, right up until the individual subpixels are finally lit up. This removes any quality differences between card outputs, since they are outputting a completely accurate digital signal. The only time they change anything is when instructed to by a change to their LUTs from the user, OS, application, and so on.

The conclusion is pretty clear: there are many good reasons to buy team green or team red, but 2D image quality is not one of them. They are precisely the same.

Sycraft's Image Quality Showdown
