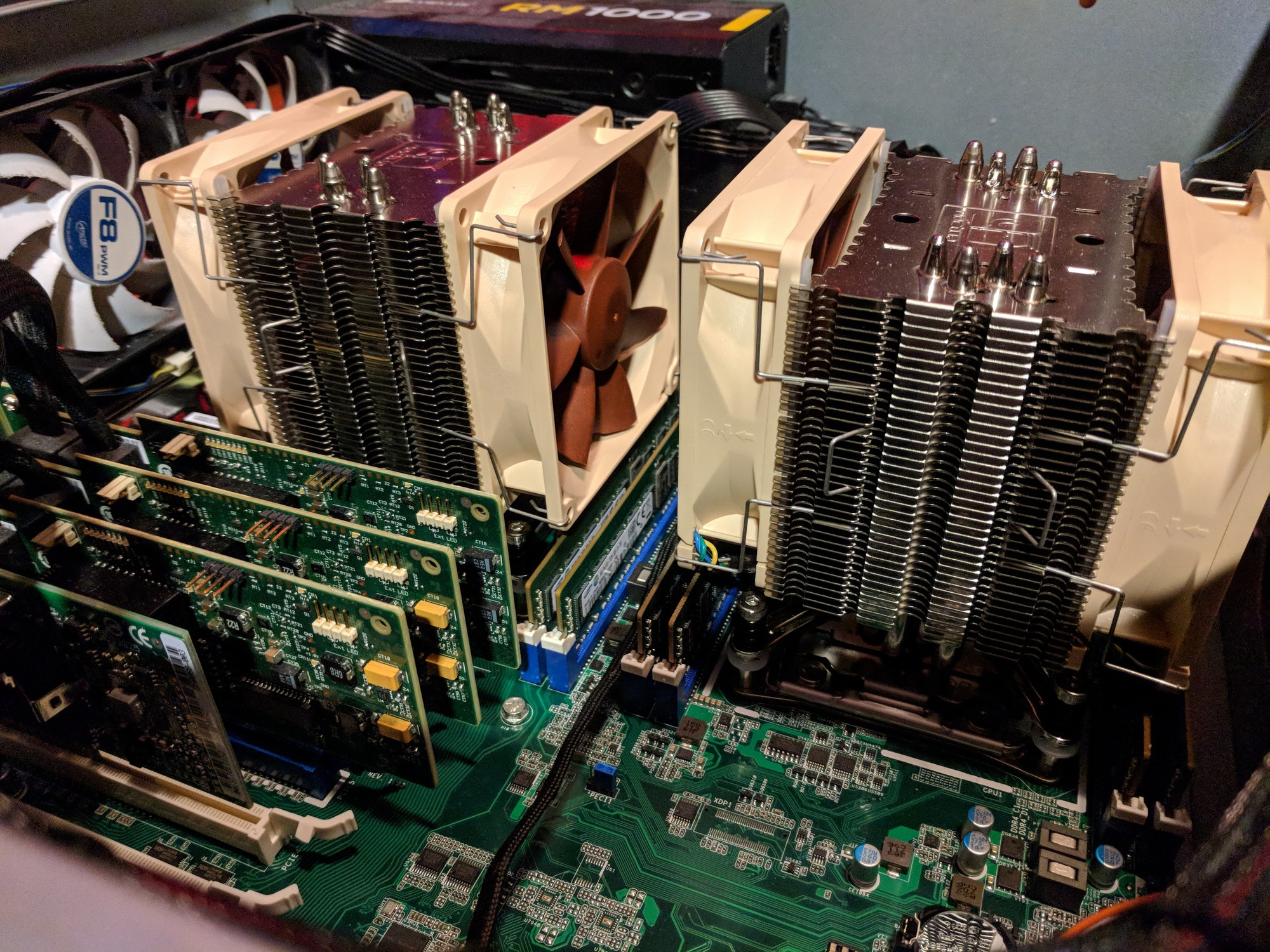

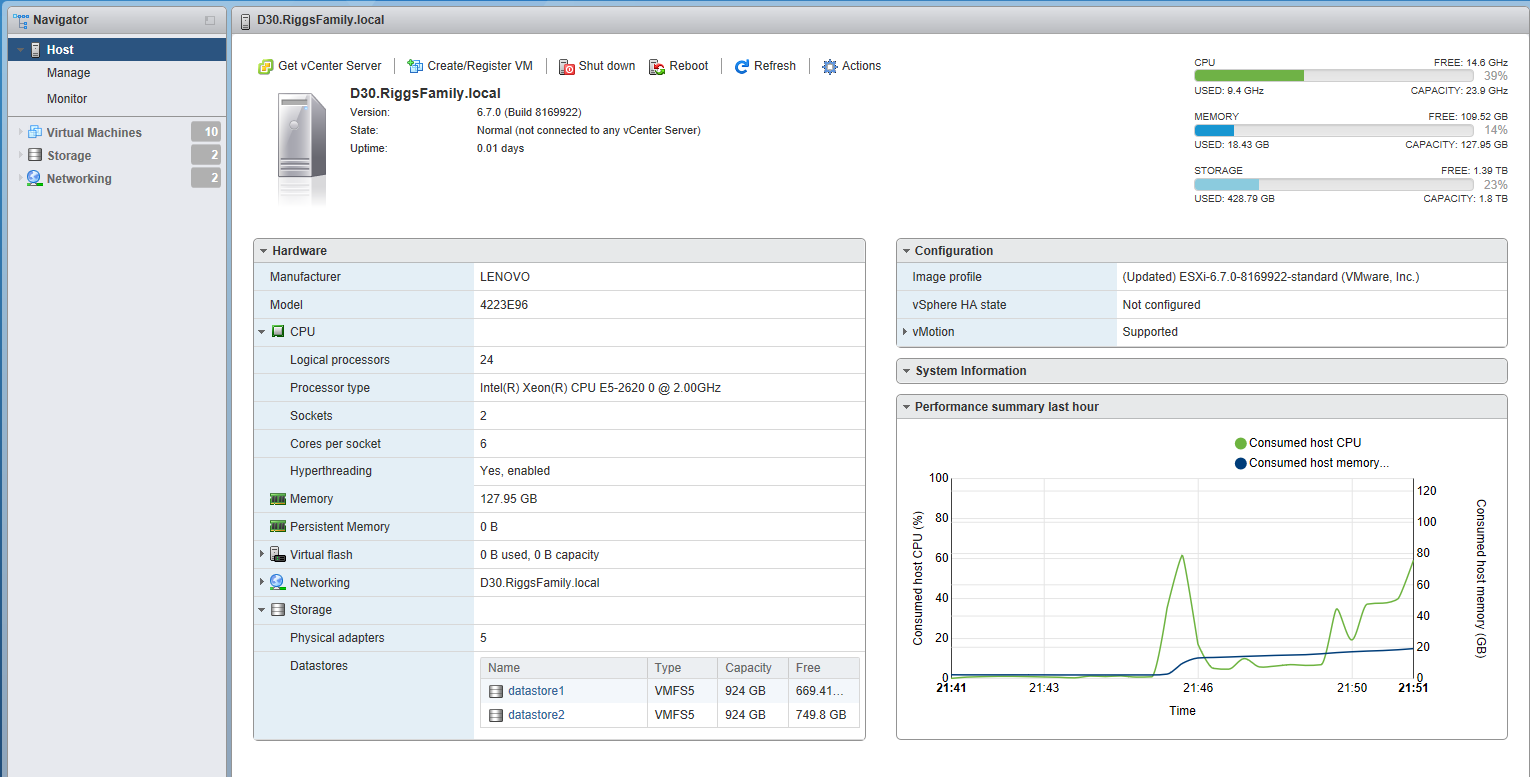

So I got an IBM x3550 M3 from work. I'm not sure if it's dual 8-thread or dual 12-thread, but it will have 192 GB of RAM. I only have three HDD cages right now, so I'll need to order more. I plan on running a 240 GB SSD and two 1 TB Reds in RAID 1 for now. Should have it up and running over the weekend.

Does anyone have any know-how on running ESXi 6.5 on one of these?