elvn

Supreme [H]ardness

- Joined: May 5, 2006

- Messages: 5,307

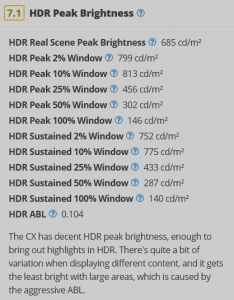

This is being posted in relation to the C9, E9 and CX OLEDs and HDR display tech in general. I'm not trying to continue arguments about the AW55's value, limitations, or timeliness. It is what it is.

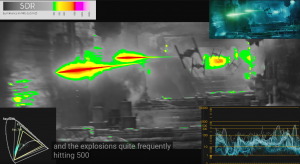

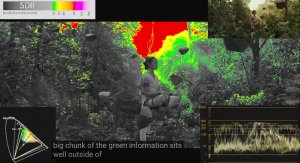

This HDR temperature mapping is pretty cool to watch.

The scene content is broken down as follows:

under 100 nits = grayscale

100 nits+ = pure green

200 nits = yellow

400 nits = orange

800 nits = red

1600 nits = pink

4000 nits+ = pure white

[HDR] Star Wars: The Rise of Skywalker 4K Blu-ray HDR Analysis - HDTVTest
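
For anyone who wants to play with the color key, here is a rough Python sketch of the lookup. It is not how HDTVTest generates the heatmap. The function name `heatmap_band` is made up for illustration, and it assumes each color covers everything from its listed nit value up to the next one, which the key above only implies. The real overlay probably blends between colors rather than using hard steps.

```python
from bisect import bisect_right

# Lower bounds of each color band in nits, copied from the key above.
THRESHOLDS = [100, 200, 400, 800, 1600, 4000]

# One more label than thresholds: the first label covers everything under 100 nits.
LABELS = ["grayscale", "pure green", "yellow", "orange", "red", "pink", "pure white"]


def heatmap_band(nits: float) -> str:
    """Return the color band for a pixel luminance in nits.

    Assumption: a band runs from its own threshold (inclusive) up to the
    next threshold (exclusive). Hard steps only, no blending.
    """
    if nits < 0:
        raise ValueError("luminance in nits cannot be negative")
    # bisect_right counts how many thresholds are <= nits,
    # which is exactly the index of the matching label.
    return LABELS[bisect_right(THRESHOLDS, nits)]


if __name__ == "__main__":
    for level in (50, 100, 350, 1000, 4000):
        print(f"{level} nits -> {heatmap_band(level)}")
```

So a highlight at 1000 nits would land in the red band under these assumptions, and anything at or past 4000 nits would show as pure white.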

