Assembling a high capacity NAS

- Thread starter carlmart

- Start date

No, what he said is that a RAID arrangement belongs in an editing studio, but not quite so on a private server like the one I intend to assemble.

Things get quite expensive if you have to proceed as you recommend.

No, they don't at all. Even if you want to go hardware RAID, you can find OEM LSI-based cards for under $100. There's absolutely no reason not to use some type of redundant disk array when you're using multiple disks for storage; how you accomplish that through hardware and software is up to you.

mvmiller12

[H]ard|Gawd

- Joined

- Aug 7, 2011

- Messages

- 1,520

Heck, I was GIVEN an old Dell PowerEdge R515, and I upgraded the RAID controller in it to a PERC H700 (supporting RAID 6) for $25 from eBay and the Opteron CPUs (it has 2) to the low power, 6 core variants for... $11 from eBay. I replaced the DVD-ROM drive with a small SATA SSD I had sitting around, installed Windows Server 2012 (I had this sitting around as well), plugged both Broadcom NetXtreme NICs up to the switch and away I went, complete with automatic network load balancing.

I'm currently using an external 16TB (5x4TB RAID 5) eSATA array with it on a RocketRAID 644L card while I am saving up to populate the 8 bays connected to the H700 with 8 or 10 TB drives and configure them as a RAID 6. Then I will relegate the external array to backup duty. In the interim, the contents of the array are backed up to an external 8TB USB drive and a few older HDDs that I have attached to different PCs... Not optimal, but it gets the job done until I can get the main array online.

This does NOT have to be terribly expensive at all.

Edit: The point is that you can get server grade hardware that is a little older (but still great for your needs) for not a lot of money. Here is a Dell PowerEdge R515 (the 2 NICS are built-in) with 2 CPUs, 16G RAM, the H700 RAID card, and even 6 HDDs (2x500 GB and 4x2 TB) for $300 with free shipping. Just add your FreeNAS to it, and swap out the drives for whatever you want.

As an eBay Associate, HardForum may earn from qualifying purchases.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,864

Definitely agree. Just be careful when it comes to those 2U storage servers. They need to force a lot of air through a very small space and as a result tend to be VERY loud. Much louder than you'd want in any home setting. If you do go with a used enterprise rack mount server, try to find a 4U or larger unit. They tend to have better airflow and be quieter.

Back when I was testing an HP DL180 G6 I got a great deal on, it had 8x 18k rpm fans in it, and when they spun up it would literally sound like a taxiing jumbo jet. I had it in the basement, but it was annoying to me two stories up in the second floor bedroom at night.

Here is an example (someone else's video)

mvmiller12

[H]ard|Gawd

- Joined

- Aug 7, 2011

- Messages

- 1,520

It's only loud when it powers up, but once it is in Windows, it settles down a lot. It sits about 10 feet away from my workstation, and I can still hear it faintly, but it is not really very noticeable. Can't hear it at all if I am playing anything. YMMV, of course

Edit: Just watched your attached video clip - My Dell sounds about the same at initial power up, but even the fan down noise on that HP is ridiculous! My Dell runs a helluva lot quieter in actual use.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,864

That power up fan noise, though... boy howdy is that impressive!

The problem I had was that HP puts sensors in all of their PCIe cards, and if the server can't read them (for instance, if you install a non HP storage controller) it freaks out and maxes all of the fans just in case something is overheating.

I got that server to run ZFS on it, but at the time there were no affordable non-raid jbod SAS HBA's, so it was either jet engine fans, use hardware RAID or use a hack to get ZFS to run over RAID (not recommended) so I decided against it.

I harvested the dual hexacore Xeon L5640's from it and built my own server in a Norco case with a new old stock Supermicro board instead.

Years later an HP SAS HBA with compatible sensors started becoming affordable on eBay, so I picked up a matching set of used low power quad cores (L5630?) for like $20 and fired it up again.

Last December when I was in place upgrading all of my 12x 4TB WD Red's with 10TB Seagate Enterprise drives, I popped the old WD Red's in that server. It lives on as my remote backup server in a friend's house using ZFS Send/Recv snapshots.

He backs up to my house, I back up to his. We call the arrangement "friendlocation". Much cheaper than colocation

Sorry guys, but your reasoning seems to be flawed when you talk "not expensive".

If I have to spend double the TBs I need to assemble a redundant raid, then it WILL be expensive. Certainly double what I was planning to.

Sorry, but your scary tales do not seem to add up. I SERIOUSLY doubt everybody is using a redundant array for their home server.

The person I asked this question to also has a lot of experience, and is a good friend of mine, so he wouldn't advise anything that could be bad for me, or even risky.

As I am not experienced enough myself I can't say what it is, but something doesn't add up as it should.

Now, if you tell me I should have an additional HDD, same size as the others, so at any sign of a possible problem I can backup that HDD, that is something reasonable.

Two thoughts that I don't think I saw earlier in the thread.

a) You do not want all your drives to be super close; if all of your drives came from the same batch, and there was a firmware or manufacturing issue, chances are they're all going to die at the same time. Although, if you don't care about your data, maybe this risk isn't that important. Sometimes you're going to get a better deal buying everything at once, and you have to take your chances, but if staggering your purchases over a few weeks makes sense, you'll avoid some risk of simultaneous failures.

b) If you end up doing a redundant setup, pay attention to the disk size equality requirements and leave a good amount of space free in case replacement drives have fewer sectors. Even same manufacture drives may vary on how many sectors are in a drive that says the same size on the box. Dealing with that later is a pain, just leave a generous buffer.

mvmiller12

[H]ard|Gawd

- Joined

- Aug 7, 2011

- Messages

- 1,520

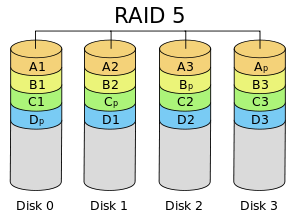

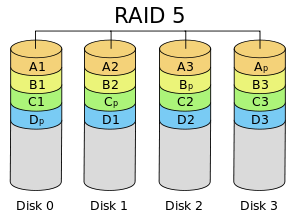

In a RAID 6 configuration you lose the capacity of 2 drives. In an 8-drive array, that is 25% of the capacity. The peace of mind alone is worth the cost of a mere 2 drives. If you're only using 3 or 4 drives, use RAID 5 and lose only the capacity of 1 of them (a 33% and 25% loss, respectively).
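The percentages above follow from dividing the number of parity drives by the total drive count. A minimal sketch of that arithmetic, assuming all drives in the array are the same size (the function name `parity_overhead` is made up for illustration):

```python
def parity_overhead(num_drives: int, parity_drives: int) -> float:
    """Fraction of raw capacity given up to redundancy, assuming equal-size drives."""
    if parity_drives >= num_drives:
        raise ValueError("an array needs more drives than parity drives")
    return parity_drives / num_drives


# The three cases mentioned in the post above.
cases = [
    ("RAID 6, 8 drives", 8, 2),
    ("RAID 5, 3 drives", 3, 1),
    ("RAID 5, 4 drives", 4, 1),
]
for label, drives, parity in cases:
    print(f"{label}: {parity_overhead(drives, parity):.0%} of raw capacity goes to redundancy")
```

The wider the array, the smaller the share of capacity spent on redundancy, which is why the cost argument gets weaker as drives are added.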

I have had 3 major array failures in my 15 years of doing this - 2 of them with catastrophic irrecoverable data loss due to lack of redundancy. All it takes is one drive to flake out even momentarily and all that data is just... gone. It took me twice to learn, YMMV.

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

I SERIOUSLY doubt everybody is using a redundant array for their home server.

Anyone that cares about their data and their ability to access it is using at least mirrored pairs. Most would be doing it with a two-bay NAS appliance, but you can do it on a desktop system just as well.

If you're only using 3 or 4 drives, use RAID 5 and lose only the capacity of 1 of them (a 33% and 25% loss, respectively) .

OK, buying four to use three, using one for a RAID 5 and be protected, that would be similar to what I was proposing. Having an extra HDD for backup.

Is that what you mean?

Sort of, but not really (not trying to be a dick, this is one of those cases where terminology is very important). The RAID 5 is essentially a team of drives that work together and can still function in the event one drive fails. There is not a dedicated "backup" drive.

This Wiki graphic may help https://en.wikipedia.org/wiki/Standard_RAID_levels#RAID_5

This gives you redundancy in the case of a drive failure.

It's also important to note that redundancy is not the same as a backup. For a true backup you would need another device with 30TB minimum of storage to back the RAID 5 up to. It's important to differentiate redundancy and backups because in events like cryptolocker, file deletion, misedits, etc. drive redundancy won't save you. You would need a real backup solution in one of those cases to recover your data.

Spartacus09

[H]ard|Gawd

- Joined

- Apr 21, 2018

- Messages

- 1,930

You could also consider a cloud storage backup such as Amazon S3 Glacier (about $0.004/GB per month, which works out to roughly $120/mo for 30TB) or Backblaze ($6/mo, unlimited), which would give you not only an overall backup but an offsite one. It would be a recurring cost, though.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,864

Honestly, if it's a cost thing, I'd use old cheap used drives in RAID6/RAIDz2 before I'd ever use any drive standalone, no matter how new or cool. You may have to swap a drive and rebuild every now and then, but at least your data is not lost or damaged.

RAID5 is better than nothing, but it is still not recommended. Sure, you have protection against a single drive failure, but when you replace that failed drive, and the array is rebuilding, you have no protection at all, so any additional drive failure means total loss of all data, and any random URE's will result in damaged data during the rebuild. Add to that that the extreme load of a rebuild cycle - which can take many many hours - often causes marginal drives to fail, so this is not a far fetched scenario at all.
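To get a rough feel for the rebuild risk described above, here is a back-of-the-envelope sketch. It assumes unrecoverable read errors (UREs) are independent and occur at the spec-sheet rates often quoted for drives (1 per 10^14 or 1 per 10^15 bits read); those rates, the 4 x 10TB layout, and the function name are assumptions for illustration, and real drives frequently do better than the spec-sheet worst case.

```python
import math


def p_ure_during_rebuild(surviving_drives: int, drive_tb: float, ure_per_bit: float) -> float:
    """Chance of at least one URE while reading every surviving drive end to end.

    Treats errors as independent events (a Poisson approximation).
    """
    bits_read = surviving_drives * drive_tb * 1e12 * 8
    return 1.0 - math.exp(-bits_read * ure_per_bit)


# A 4 x 10TB RAID 5 that lost one drive must read the 3 survivors in full.
for rate in (1e-14, 1e-15):
    p = p_ure_during_rebuild(surviving_drives=3, drive_tb=10, ure_per_bit=rate)
    print(f"URE rate {rate:g} per bit: about {p:.0%} chance of at least one URE")
```

With a second parity drive (RAID 6 / RAIDz2), a single URE during a rebuild can still be corrected, which is the main argument for it on large drives.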

If you are really on a tight budget, look for used drives. 8x older 4TB drives in RAIDz2 will yield 24 useable TB...

The beauty of ZFS is that you don't need to match your drives. You can mix and match drives of the same size with no problem at all.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,864

The question is I would need to have at least 30TB of files, all different, split in three different HDDs.

How is the info organized with RAID 5 using 4 x 10TB HDDs? How am I protected?

With RAID or ZFS RAIDz by default all the storage becomes one large pool. You can create sub-volumes if you want to though.

mvmiller12

[H]ard|Gawd

- Joined

- Aug 7, 2011

- Messages

- 1,520

OK, buying four to use three, using one for a RAID 5 and be protected, that would be similar to what I was proposing. Having an extra HDD for backup.

Is that what you mean?

Like has been said, sort of...

All 4 drives would be in active use, with each drive holding 75% data and 25% redundancy info. If a single drive fails, the array will still work (just slower) with the exact same capacity. Your RAID will tell you that it is "degraded" and in "Critical Condition."

You would replace the defective drive (it will usually tell you which drive failed) with another drive, preferably of the same make/model/capacity, and then the array will "rebuild" itself. Depending on the RAID setup you use, you may have to manually start this process or assign the new drive to the existing array to start the rebuild.

The array is STILL usable while the rebuild is happening, but your PC will likely have a noticeable CPU load on it during the rebuild, which can take a while (depending on the size of the array, even days). The rebuild should not be paused or interrupted: interrupting it means the rebuild will have to start over from scratch.

Edit: Personally, experience has taught me with RAID 5 that if I have a drive failure, I back up everything that is important to a different drive(s) before starting the rebuild, just in case.
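How a RAID 5 array keeps working with one drive missing can be sketched with XOR parity. This is a toy illustration on a single stripe with tiny byte strings, not how any particular controller is implemented; real arrays also rotate the parity block from drive to drive, which is why every drive ends up holding a mix of data and redundancy info.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR a list of equal-length byte blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# One stripe across a 4-drive array: three data blocks plus one parity block.
data = [b"AAAA", b"BBBB", b"CCCC"]
parity = xor_blocks(data)

# Simulate losing the drive that held data[1]. XOR-ing the surviving data
# blocks with the parity block reproduces the missing block.
survivors = [data[0], data[2], parity]
rebuilt = xor_blocks(survivors)
assert rebuilt == data[1]
print("rebuilt block:", rebuilt)
```

A rebuild is this same calculation repeated for every stripe on the array, which is why it reads all the surviving drives in full and takes so long.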

You'd be better off with a Synology or QNAP unless you really want to put the time in to figure out how you want to build this thing and if you want to do a hardware or software raid setup. If you really want to roll your own, you need to decide on what OS you plan on using to figure out the rest of the hardware and software needed. What OS's are you comfortable using and troubleshooting?

First of all I do not want to invest on ready made server. They are all quite expensive.

After my friend gave me the idea of using a computer and FreeNas, that got me interested.

From what I know using the system it's quite straightforward, but I was never considering using RAID.

Having all drives in active use, as apparently seems to be for a RAID system, doesn't sound to me like a very good idea. I would prefer them not run when they are not in use.

And I am not convinced yet that the RAID idea is better than the one I had, having a fourth HDD to move things to when one of the HDD shows signs of imminent failure. You just have to be aware of the signs.

FreeNAS uses ZFS, which is a form of software RAID. RAID = Redundant Array of Inexpensive Disks, with the key word being redundant. You don't get redundancy by having a spare hard drive just sitting around unused waiting for you to move your files off of another drive when it dies.

Synology and QNAP NAS devices are quite inexpensive for what they give you, you pull them out of the box and slap some drives in them and setup your share(s) and you're set. Time is money and they simplify the setup and config to save you time. Rolling your own will take you more time and you'll need to spend it with both the hardware and software configurations of what you end up putting together.

But putting 4 disks into a spare computer and accessing each one individually and hoping if you catch one of the hard drives failing you have time to move all of the data off of it is not "assembling a high capacity NAS", it's pretty much the exact opposite of one.

Spartacus09

[H]ard|Gawd

- Joined

- Apr 21, 2018

- Messages

- 1,930

Sounds like Unraid might be a good option for you. It gives you the spin-down options you want, only uses the disks that it's accessing, still provides redundancy with a parity drive, and is expandable.

mvmiller12

[H]ard|Gawd

- Joined

- Aug 7, 2011

- Messages

- 1,520

Good luck.

HammerSandwich

[H]ard|Gawd

- Joined

- Nov 18, 2004

- Messages

- 1,126

During the past years I have lost more files due to defective HDDs than to defective DVD media.

Yet you don't want to pay for redundancy.

As I am not experienced enough myself I can't say what it is, but something doesn't add up as it should.

Yet you don't like the nearly unanimous advice that experienced users offer, because it increases the cost of your project.

Now, if you tell me I should have an additional HDD, same size as the others, so at any sign of a possible problem I can backup that HDD, that is something reasonable.

And that's far too late to start making your backups.

Storing 30TB still isn't dirt cheap, and you admittedly don't have the expertise to cut corners here. So, your options are to 1) wait, 2) increase your budget, or 3) take the risks of a cheap-as-possible deployment.

What's your time worth? Because recovering or recreating data can take many, many hours. And how much of your life has this thread taken already? Could you afford an extra HD if you'd spent that time some other way?

Sorry if this sounds mean, Carl. It is realistic, however. FACT: your hardware will fail at some point, very possibly before you have time to salvage data that's not backed up. All the advice you've been given aims to handle this scenario with as few negatives as possible.

EniGmA1987

Limp Gawd

- Joined

- May 2, 2017

- Messages

- 429

What was just suggested to me with FreeNAS was one disk per pool and have multiple pools.

I kinda feel like you are just trolling at this point.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,864

I don't know what you mean by trolling. What I'm doing is learning.

Well, your biggest takeaway should be that you can't trust single disks. Eventually they fail. I haven't made this mistake since ~2001 when a hard drive I had started having bad sectors all throughout the whole thing. The disk never outright died, but all the data was ruined on it, as barely a file on it wasn't at least slightly corrupted.

As a follow-on to this, RAID is great to secure the integrity of your data, but it is not a backup. RAID protects you from individual hard drive failures, but if you accidentally format a disk, or delete the wrong file, get ransomware or your house burns down or floods, RAID is not going to save you.

So, you not only need RAID (or some form of it, I'm a huge fan of ZFS myself) but you also need backups, preferably in a different location/building than the primary NAS.

There are many ways you can save on the server hardware. Used Enterprise stuff from eBay is usually fine, as it has usually been cared for and not touched much over its life, unlike consumer parts. Either a DIY server or a used rackmount server with a free open source appliance OS like FreeNAS or Napp-IT are a great way to go.

Even something like a Drobo can be comparatively inexpensive (though somewhat limited)

The disks are not the place to save money. No matter what you do it's going to take some extra disk capacity to get proper redundancy. It's OK to save and get used disks, because if they fail you are protected, but it would be a bad idea to save by skimping on redundancy.

If I were you, I'd go down your FreeNAS route and set up a single 8 disk RAIDz2 pool and use inexpensive used disks.

Mix and match those disks to your heart's content, even different sizes. The only limitation is that the overall pool size is determined by ("Total Number of Disks" - "Redundancy Disks") * "Smallest Disk Size".

(In reality the redundancy is spread among all the disks, but it winds up being 2 disks worth of capacity for RAIDz2)

If you later want to expand the size of the pool, you can either replace the smallest disk in the pool with a larger one, so that the entire pool grows to the multiple of the new smallest disk in the pool, or add a second RAIDz2 VDEV to the pool and the data will spread across them.

The takeaway is this. Until now as a hobbyist you have probably mostly dealt with clients with one or two smallish disks, a lot of them containing easily replaceable data (like something that can be downloaded again from Steam)

When you enter the large storage NAS world you are running up against three new issues:

1.) Data corruption rates are expressed on a per bit level. All else being equal, 30TB worth of standalone disks are about 60x as likely to see corruption compared to a 500GB disk.

2.) The data you are putting on there is likely less easy to replace than a Steam game when everything hits the fan.

3.) Silent corruption is the killer. Most failures are not spectacular dead drive scenarios. With silent corruption you get a flipped bit here or there over time. You'll never know if the data is bad until you go to read it. It really sucks to find out 4 years later that the file you were keeping as a keepsake has become corrupted.
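The "about 60x" figure in point 1 is a plain ratio of capacities: if errors are counted per bit, expected errors scale linearly with the number of bits stored. A small sketch, where the per-bit rate is a made-up placeholder that cancels out of the ratio:

```python
TB = 1e12  # bytes, decimal terabyte
GB = 1e9   # bytes, decimal gigabyte


def expected_bit_errors(capacity_bytes: float, error_rate_per_bit: float) -> float:
    """Expected number of corrupted bits for a given capacity and per-bit error rate."""
    return capacity_bytes * 8 * error_rate_per_bit


rate = 1e-15  # hypothetical per-bit rate; any value gives the same ratio
ratio = expected_bit_errors(30 * TB, rate) / expected_bit_errors(500 * GB, rate)
print(f"30TB vs 500GB: about {ratio:.0f}x the expected corruption")
```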

If you have been on the HardForums for any amount of time, you should know how exceedingly rare it is to get a unanimous opinion on anything around here. I would take this as a sign.

So maybe the problem is solved by assembling a server with, say, multiple individual 3-4TB disks, as then the risk of something happening would multiply a lot less. My problem would be to have all the necessary bays to hold the HDDs.

Sorry, but as you see, I am not still convinced of the RAID arrangement being what I need or want.

From what I have read, the RAID brings another big problem: that if any HDD fails, it has to be replaced with the same brand and model. Considering models come and go, that might be a big issue.

Your option of cutting costs by buying used HDDs is out of the question. HDDs is the only thing I would never buy refurbished or used.

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

Sorry, but as you see, I am not still convinced of the RAID arrangement being what I need or want.

Then you're not convinced that your data is of any importance.

From what I have read, the RAID brings another big problem: that if any HDD fails, it has to be replaced with the same brand and model.

For hardware RAID controllers, this can be true- for software RAID, it isn't at all true. The biggest issue is that mixing and matching drives results in capacity that is used neither by data nor by providing redundancy. Otherwise, use whatever; the quality of the drives and level of redundancy will reflect your priorities.

Then you're not convinced that your data is of any importance.

No, my data is very important. But I don't think you have to go to that level of expense to take care of it.

If you use an early warning system like CrystalDiskInfo and check your disks every week, you can backup the one showing any signs of potential problems in advance.

For hardware RAID controllers, this can be true- for software RAID, it isn't at all true. The biggest issue is that mixing and matching drives results in capacity that is used neither by data nor by providing redundancy. Otherwise, use whatever; the quality of the drives and level of redundancy will reflect your priorities.

Dealing with irreplaceable masters in an editing house is not the same as dealing with files on a home server. Particularly if you watch your system.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,864

Your option of cutting costs by buying used HDDs is out of the question. HDDs is the only thing I would never buy refurbished or used.

For some hardware RAID controllers you need identical drives.

I wouldn't go down that route though.

ZFS does not care what drives you use. They can be different brands, different sizes, different speeds, on different interfaces. It doesn't care.

Your size will be limited by the smallest drive and your performance by the slowest drive, but other than that, you can use any pile of drives you want.

With ZFS, it works like this:

Let's say you are building a RAIDz2 setup with a bunch of random used drives.

You have:

1x 1TB drive

3x 2TB drives

2x 3TB drives

2x 4TB drives

Your total size will be:

("Total number of drives" - "redundancy level") * "Size of smallest disk"

So, (8-2)*1 = 6 TB.

So, this is not very efficient, as the smallest drive holds you back. However, let's say that in a couple of months you find a great deal on a 4TB drive.

You detach the 1TB drive, pop it out, insert the 4TB drive in its place, tell it to rebuild, and once it is done the smallest drive is 2TB, so you now have:

(8-2)*2 = 12 TB.

You never have to copy your data, or move it from disk to disk, or plan what is where. Just that simple, a rebuild is all it takes and more space is available.
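To make the arithmetic above easy to play with, here is a minimal Python sketch of that rule of thumb. The function name `raidz_usable_tb` is made up for illustration, and the result is only the rough estimate from the formula in this post: it ignores filesystem overhead and the TB vs. TiB difference, so a real pool will report somewhat less.

```python
def raidz_usable_tb(drive_sizes_tb, parity):
    """Rough usable capacity of one RAIDz vdev, in TB.

    Rule of thumb from the post:
    (number of drives - redundancy level) * size of smallest drive.
    This is an estimate only; it ignores filesystem overhead.
    """
    if len(drive_sizes_tb) <= parity:
        raise ValueError("need more drives than the parity level")
    return (len(drive_sizes_tb) - parity) * min(drive_sizes_tb)


# The mixed pile of drives from the example: 1x 1TB, 3x 2TB, 2x 3TB, 2x 4TB.
drives = [1, 2, 2, 2, 3, 3, 4, 4]
before = raidz_usable_tb(drives, parity=2)  # RAIDz2 -> parity of 2

# Swap the 1TB drive for a 4TB one; the smallest drive is now 2TB.
drives[drives.index(1)] = 4
after = raidz_usable_tb(drives, parity=2)

print(before, after)  # 6 12
```

Passing `parity=1` or `parity=3` gives the same estimate for RAIDz1 or RAIDz3.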

In other words, it is incredibly flexible if you just want to use a random pile of drives and keep your eyes on the classifieds for replacements.

Of course, you waste the least amount of space if all drives are the same size, and the least amount of performance if they are all the same speed, but other than that it just works.

And then, if you want to, you can even swap in your fancy new 10TB drives over time as the budget allows.

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

If you use an early warning system like CrystalDiskInfo and check your disks every week, you can back up the one showing any signs of potential problems in advance.

Spinning drives can shit the bed at any given moment. Assuming that you're going to get a warning and that you'll be able to take steps when a drive starts showing signs of failure is the surest way to lose data.

No, my data is very important. But I don't think you have to go to that level of expense to take care of it.

If you use an early warning system like CrystalDiskInfo and check your disks every week, you can back up the one showing any signs of potential problems in advance.

What's your budget and how much space do you need? You keep talking about level of expense like this is some expensive endeavor when the most expensive part of this is going to be hard drives. You only need one extra hard drive in the array to give you at least some level of redundancy, and it doesn't bump the cost much at all. And it's certainly better to have it that way than putting a failed drive in the freezer and trying to copy TBs of data off of it before it's gone for good.

I did find having just one extra HDD an acceptable expense, if that was better than my proposal of watching the HDDs to have an advance warning, and did say so here.

But then the additional info was that any extra HDD, not the ones I first assembled the array with but the ones that came after, had to be the same brand and model. Which I think would be impossible to sustain over the years.

OTOH, after I started watching my HDDs, I always had a warning signal from all of them. In only one case I thought I still had a few days and I did not. That will never happen again. But I did have the warning.

But when disks are on a RAID array it seems the catastrophe can be even larger, not smaller. That's what scared me.

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

You can toss two drives into a Windows Storage Spaces mirror. That's software RAID, and you can use any two drives; they'll work independently just the same. This is really not that hard unless you want a lot of space.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,864

This is (sort of) what I did.

I started with a Drobo with 5 WD Green 2TB drives in RAID5 back in 2010. Two of them had previously been single drives in my desktop. 3 of them were new purchases.

I wasn't a huge fan of the Drobo performance, so eventually I sold it and used the AMD FX-8120 I had intended to use for my desktop in 2011 in a new storage server. I set it up with FreeNAS on ZFS. 6 drives in a RAIDz2 configuration, my 5x 2TB WD Greens and a new 3TB WD Green.

Because I was on a budget, I bought the cheapest case I could find with at least six 3.5" bays, the $29 NZXT Source 210 case. You don't need a fancy server case for this stuff (but it does make it easier when it is time to swap drives). I also bought a cheap used IBM M1015 SAS controller (rebranded LSI) on eBay and flashed it to non-RAID mode for best use with ZFS.

Unfortunately I couldn't easily transfer the data from the Drobo, so I had to back it up first, move the drives over to the new case, create the ZFS pool and restore. This would be the last time I'd have to do that.

Because my smallest drives were still 2TB, total available space was 8TB at this point.

In ~2012 one of the 2TB drives suddenly died, so I replaced it with a new 3TB WD Green. 2TB still being the smallest drive, my available space remained unchanged at 8TB.

In 2013, when the 4TB WD Reds hit the market, I decided it was time for an upgrade. I wanted to grow the capacity of my storage server and get more reliable drives, so over the next few months I bought 4TB WD Reds one by one, whenever I had some spare money. With the first 3 of them the total size was unchanged at 8TB because I still had a 2TB drive in there, but once the 4th 4TB drive finished rebuilding, the smallest drive was 3TB and all of a sudden I had 4x3TB = 12TB.

Two more 4TB drives swapped in and all of a sudden all drives are the same size at 4TB, and I wound up with 4x4TB = 16TB
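The growth from 8TB to 16TB described above can be replayed with a short Python sketch. It is only an illustration of the smallest-drive rule: `raidz_usable_tb` and `replay_swaps` are hypothetical helper names, and the swap order (the remaining 2TB drives first, then the two 3TB drives) is an assumption read from the post rather than something it states drive by drive.

```python
def raidz_usable_tb(drive_sizes_tb, parity):
    """Rough estimate: (drives - parity) * smallest drive, in TB."""
    return (len(drive_sizes_tb) - parity) * min(drive_sizes_tb)


def replay_swaps(drives, swaps, parity):
    """Return the estimated usable capacity after each (old, new) swap."""
    drives = list(drives)  # work on a copy
    history = [raidz_usable_tb(drives, parity)]
    for old_size, new_size in swaps:
        drives[drives.index(old_size)] = new_size  # replace one drive
        history.append(raidz_usable_tb(drives, parity))
    return history


start = [2, 2, 2, 2, 2, 3]   # 5x 2TB Greens + 1x 3TB Green in RAIDz2
swaps = [(2, 3)]             # ~2012: dead 2TB replaced with a 3TB
swaps += [(2, 4)] * 4        # 2013: remaining 2TB drives -> 4TB Reds
swaps += [(3, 4)] * 2        # assumed: the two 3TB drives go last

history = replay_swaps(start, swaps, parity=2)
print(history)  # [8, 8, 8, 8, 8, 12, 12, 16]
```

The estimate only jumps when the last drive of the smallest size leaves the vdev, which matches the 8TB, 12TB and 16TB steps in the post.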

I was very happy with my 16TB pool but I wanted more. In 2014 I moved to a new house and decided it was time for a real storage server, not this consumer stuff I had been playing with to date.

I bought a used HP DL180 G6 12 bay 2U server with two hexacore Xeon L5640s and 96GB of RAM from esiso on eBay. I fought with this server for some time. It was just too loud for me, and I couldn't get the fans to behave, so I took the CPUs and RAM out of it and let it sit in storage in my basement. Instead I bought a Norco RPC-4216 server case, an old new stock Supermicro X8DTE server motherboard and a second IBM M1015 SAS controller, and built them into a system.

(At this point I started running it as a VM server, first under FreeNAS and later under Proxmox, but that isn't strictly relevant here)

I saved up for 6 more 4TB WD Reds and added a second RAIDz2 vdev to my pool, for a total of 32TB of storage.

Over the next four years I had to replace 4 of the 12 WD Reds under warranty because they started having read errors. I was thankful I had discovered redundant drives, as I would otherwise have lost my data; I had no way to back all of that data up.

In ~2015 (I think) I started using Crashplan's unlimited plan to back up my files over the internet. This left much to be desired but at least I had a backup.

By late 2017 two things had happened. I was running low on storage space, and Crashplan had decided to exit the consumer backup market.

I decided it was time for an upgrade.

From October 2017 through early January 2018 I ordered 10TB helium-filled Seagate Enterprise drives two at a time, every couple of weeks.

When each pair came in, the drives would get tested with a SMART conveyance test followed by a full badblocks run on each, and upon clean health were swapped one by one into the server.

By January 2018 I thus had a pool of 12x 10TB drives, configured in two RAIDz2 vdevs of six drives each, for a total of 80TB available storage.

I also figured out a way to bring the old HP server back to life without it being too loud, so I ordered a couple of quad core Xeon L5630s for it for $9 each at this point, and loaded it up with the 12x old 4TB drives in one large RAIDz3 pool with 36TB available. This would become my backup server.
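For pools made of more than one vdev, the same estimate is just summed per vdev. The Python sketch below checks the 32TB, 80TB and 36TB figures from this post; `pool_usable_tb` and the `(sizes, parity)` tuple layout are illustrative assumptions, not anything ZFS itself exposes, and real pools will show less than these round numbers.

```python
def raidz_usable_tb(drive_sizes_tb, parity):
    """Rough estimate: (drives - parity) * smallest drive, in TB."""
    return (len(drive_sizes_tb) - parity) * min(drive_sizes_tb)


def pool_usable_tb(vdevs):
    """Sum the estimate over every (drive_sizes_tb, parity) vdev."""
    return sum(raidz_usable_tb(sizes, parity) for sizes, parity in vdevs)


# Main server after the second vdev was added: 2x RAIDz2 of six 4TB drives.
main_4tb = [([4] * 6, 2), ([4] * 6, 2)]

# Main server by January 2018: 2x RAIDz2 of six 10TB drives.
main_10tb = [([10] * 6, 2), ([10] * 6, 2)]

# Backup server: one RAIDz3 vdev of twelve 4TB drives.
backup = [([4] * 12, 3)]

print(pool_usable_tb(main_4tb))   # 32
print(pool_usable_tb(main_10tb))  # 80
print(pool_usable_tb(backup))     # 36
```

Note the trade-off the numbers show: the twelve 4TB drives give 32TB as two RAIDz2 vdevs but 36TB as one RAIDz3 vdev, at the cost of having a single wide vdev.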

I stashed the server at a friend's house (and he stashed one at mine) and now I back up remotely using nightly ZFS snapshots and the send/recv function. I obviously don't back up everything, as the backup server has less capacity than the main server, but I prioritize the difficult to replace stuff.

None of the 10TB drives have had any issues since I installed them between October 2017 and January 2018.

Since I put my backup server in my friend's basement I have lost one of the old 4TB drives, which are now cheaper to replace.

I did find having just one extra HDD an acceptable expense, if that was better than my proposal of watching the HDDs to have an advance warning, and did say so here.

So are we finally getting somewhere?

But then the additional info was that any extra HDD, not the ones I first assembled the array with but the ones that came after, had to be the same brand and model. Which I think would be impossible to sustain over the years.

No, that's not the case at all with most solutions. The only time you're locked into a single hard drive model is with some very specific RAID controllers that you wouldn't want to use anyway.

OTOH, after I started watching my HDDs, I always had a warning signal from all of them. In only one case I thought I still had a few days and I did not. That will never happen again. But I did have the warning.

But when disks are on a RAID array it seems the catastrophe can be even larger, not smaller. That's what scared me.

If you end up losing a whole array, something went very wrong. Sure it can happen, just like a power supply could fry every hard drive in your system at the same time. Backups are the only way to mitigate it.

What is your budget? What equipment do you currently have on hand to include any hard drives you can use?

If you have the box running 24/7, I would not spin down the drives. They only use a couple watts when idle, and it's the spin down/up cycles that typically cause a drive to die.

If you have business critical data, you should have a local array AND remote backup. Period. If you have no problem potentially losing all data on a disk, then use whatever technology you want. Like others here, I run FreeNAS at home with 6-4TB and 6-5TB 2.5" Seagates in z2 arrays, with 4-400GB Intel S3700s in RAID 10 for VM storage. Plus remote backups for any data I can't afford to lose.

So you build your setup around your requirements and acceptable risk.

My budget is the minimum possible, so it only includes the HDDs.

As I intend my NAS to have a 30TB capacity, my only safety precaution would be to have an additional 10TB HDD. Having more than that is not an option I'm interested in. Neither is assembling a 20TB server. It's 3 + 1.

All HDDs should be brand new.

My server would be a computer with an Asus MB and an Intel i7 CPU, all of which I already have, running FreeNAS or a similar Linux program.

Please do suggest your options including all those decisions.
