2080 3dmark leak

- Thread starter Elios

- Start date

Dayaks

[H]F Junkie

- Joined

- Feb 22, 2012

- Messages

- 9,774

Awesome. Bodes well for the 2080ti that has 48% more CUDA cores.

Will we see 15k TimeSpy without modding is the question...

GoldenTiger

Fully [H]

- Joined

- Dec 2, 2004

- Messages

- 29,672

https://videocardz.com/newz/nvidia-geforce-rtx-2080-3dmark-time-spy-and-fire-strike-scores-leak

Unknown if these are with release or beta drivers... but it's showing the plain 2080 at stock clocks winning over the 1080ti oc'd. Need to know more before we can really judge anything.

EDIT: I'm very curious about how DLSS will work, too.

Dayaks

[H]F Junkie

- Joined

- Feb 22, 2012

- Messages

- 9,774

https://videocardz.com/newz/nvidia-geforce-rtx-2080-3dmark-time-spy-and-fire-strike-scores-leak

Unknown if these are with release or beta drivers... but it's showing the plain 2080 at stock clocks winning over the 1080ti oc'd. Need to know more before we can really judge anything.

EDIT: I'm very curious about how DLSS will work, too.

DLSS needs to be implemented on the nVidia side for each game, unfortunately. I am very excited for it, but I am also quite pessimistic about technologies that don’t “just work” all the time. At the same time I imagine it’s very automated: plug and chug on a supercomputer... And it consumes die space (tensor cores) that could have been used for CUDA cores, so that makes me slightly more optimistic.

Someone posted an image of what it can do in one of these threads. It did a better job of not blurring defined details than traditional AA IMO. I feel like we’ll have to wait for a [H] review for a detailed analysis.

GoldenTiger

Fully [H]

- Joined

- Dec 2, 2004

- Messages

- 29,672

I feel like we’ll have to wait for a [H] review for a detailed analysis.

Yep, me too. I hope it is something easy enough that most major games use it...

Bankie

2[H]4U

- Joined

- Jul 27, 2004

- Messages

- 2,469

Yep, me too. I hope it is something easy enough that most major games use it...

I think NV said that the game dev just needs to send them a copy of the game and then they'll do the work. My guess is that they'll then make the profile available through GeForce Experience so the people that are afraid of the app will either have to install it anyway or do without.

Armenius

Extremely [H]

- Joined

- Jan 28, 2014

- Messages

- 42,162

Not seeing the results. Futuremark may have removed it.

VCZ is saying the driver has leaked out into the wild (411.51).

Not seeing the results. Futuremark may have removed it.

VCZ is saying the driver has leaked out into the wild (411.51).

Hopefully. Don't want to wait 5 days to see something tangible.

harmattan

Supreme [H]ardness

- Joined

- Feb 11, 2008

- Messages

- 5,129

Is that score with stock clocks on the 2080?

There were two scores, one with 9319 Graphics score and one with 10659. Videocardz says that the varying scores are because the FE is overclocked this time around.

I think we can't really read too much into these leaks at the moment.

Gideon

2[H]4U

- Joined

- Apr 13, 2006

- Messages

- 3,558

Am I missing something here? Those scores didn't impress me, and my 1080Ti @ 2000 MHz had higher graphics scores than the scores for the 2080. Bring on the real benchmarks!

Some on here are impressed with any number thrown out for the 2000 series.

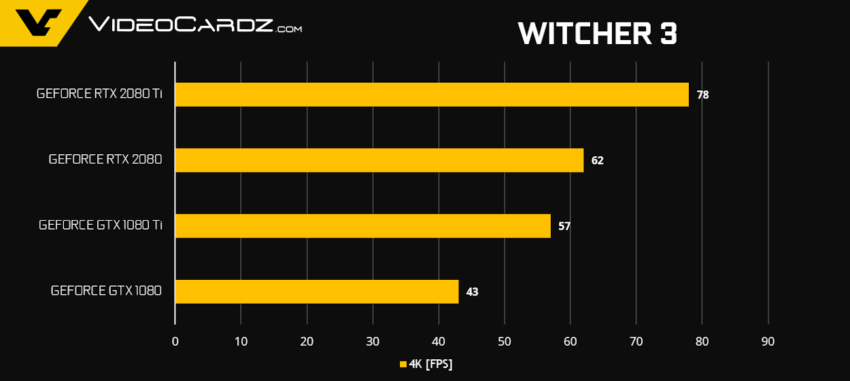

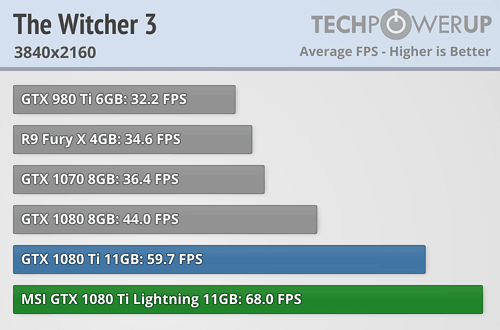

https://videocardz.com/77983/nvidia-geforce-rtx-2080-ti-and-rtx-2080-official-performance-unveiled

real benchmarks ( supposedly )

pendragon1

Extremely [H]

- Joined

- Oct 7, 2000

- Messages

- 52,249

also remember that the 2080ti is the new titan. so compare your 1080ti to the 2080 and theres not much improvement...

oh and op, that link goes to a blank page now.

Furious_Styles

Supreme [H]ardness

- Joined

- Jan 16, 2013

- Messages

- 4,538

I think NV said that the game dev just needs to send them a copy of the game and then they'll do the work. My guess is that they'll then make the profile available through GeForce Experience so the people that are afraid of the app will either have to install it anyway or do without.

I'm not afraid to use the app I just don't want to. It's completely superfluous for me. I hope they don't do that.

also remember that the 2080ti is the new titan. so compare your 1080ti to the 2080 and theres not much improvement...

oh and op, that link goes to a blank page now.

One way to look at it. 2080 is the successor to the 1080 though. You are really making an assumption when calling it the new titan.

pendragon1

Extremely [H]

- Joined

- Oct 7, 2000

- Messages

- 52,249

One way to look at it. 2080 is the successor to the 1080 though. You are really making an assumption when calling it the new titan.

it seems like they shifted the naming tiers. im not the only one that thinks so: https://hardforum.com/threads/wait-so-2080ti-is-actually-renamed-titan.1966665/

but yes assumptions based on the tech and pricing. they could still release some super rtx card for a couple grand...

Wasn't the stock 980 close to the 780 Ti in Sept 2014? If one didn't overclock the 980, which was wrong IMO. And if it's not about 250W TDP vs. 165W.

Yes they were very close.

Furious_Styles

Supreme [H]ardness

- Joined

- Jan 16, 2013

- Messages

- 4,538

Wasn't the stock 980 close to the 780 Ti in Sept 2014? If one didn't overclock the 980, which was wrong IMO. And if it's not about 250W TDP vs. 165W.

Yeah and the 1070 was on par with the 980 ti. So you'd expect the 1080ti to be right near the 2070's performance. But this is a different release so I don't really have any expectations at this point, I'm in wait and see mode.

Dayaks

[H]F Junkie

- Joined

- Feb 22, 2012

- Messages

- 9,774

it seems like they shifted the naming tiers. im not the only one that thinks so: https://hardforum.com/threads/wait-so-2080ti-is-actually-renamed-titan.1966665/

but yes assumptions based on the tech and pricing. they could still release some super rtx card for a couple grand...

AFAIK the 2080ti is within 7% from max die. Any Titan based on 12nm you will not be able to tell the difference and if the Titan V is any indication Titans are going back to prosumer.

I do wonder if they’ll rush a 7nm Titan. I haven’t seen anything useful on 7nm yet (max die size, etc.). The 2080ti has a MASSIVE die and it’d take a pretty large 7nm chip to beat it... which can be risky/inefficient on a new node.

But one could consider the 2080ti to be in the Titan placeholder right now. Curious to see if the price drops to normal ti levels in 6-9 months when the ti would usually release. If they called it a Titan it would have helped with PR IMO and not out of line with the Titan X Pascal...

https://videocardz.com/77983/nvidia-geforce-rtx-2080-ti-and-rtx-2080-official-performance-unveiled

real benchmarks ( supposedly )

Well that about sums it up. Pretty close to what I expected. 2080ti being 10-15% faster than 1080ti on average. Given that it is already overclocked out of the box makes it even less impressive. But I am sure Nvidia is pushing developers to send them their games so they can scan them with their supercomputer and update game code for DLSS. But I am wondering if DLSS will only be effective at higher resolutions. I am also wondering what AMD is going to do on their end. Lisa was also talking about how they have something going on with Microsoft cloud computing, so I wouldn't be surprised if they use that and their partnership with Microsoft to do something similar, but that might just be for consoles. AMD hasn't been too big about proprietary stuff. Maybe their next gen architecture will be designed to perform rather than doing something like DLSS. I am not sure, but didn't he say during the presentation that DLSS was basically replacing parts of the screen with images through AI on tensor cores, and the GPU pretty much didn't have to render those portions? I am pretty confident that's what he said. So in that case it's like working around the problem, but it still requires developers to hand over their code for Nvidia to run and update. So maybe some developers wouldn't want to do that lol.

harmattan

Supreme [H]ardness

- Joined

- Feb 11, 2008

- Messages

- 5,129

Huh? Those numbers are showing 2080 as ~10% faster than 1080 ti. 2080 ti looks around 40-50% faster than 1080 ti.

2080ti being 10-15% faster than 1080ti on average.

2080 being 10-15% faster than 1080ti.

Copy paste from reddit:

On average across 14 benchmarks:

- 2080 Ti is 45% faster vs 1080 Ti

- 2080 is 15% faster vs 1080 Ti

- 2080 Ti is 84% faster vs 1080

- 2080 is 46% faster vs 1080
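A quick sanity check on the four averages quoted above, as a minimal sketch: if the figures are self-consistent, the 2080 Ti vs 2080 gap should come out about the same whether it is derived through the 1080 Ti or through the 1080. The dictionary and the helper name `implied_ratio` are made up for illustration, and averages of per-game ratios do not compose exactly, so this is only a rough cross-check.

```python
# Reported average speedups from the quoted list (1.45 means "45% faster").
speedup = {
    ("2080 Ti", "1080 Ti"): 1.45,
    ("2080", "1080 Ti"): 1.15,
    ("2080 Ti", "1080"): 1.84,
    ("2080", "1080"): 1.46,
}


def implied_ratio(a_over_c, b_over_c):
    """If A is a_over_c times C and B is b_over_c times C, return A relative to B."""
    return a_over_c / b_over_c


# 2080 Ti relative to 2080, derived through each older card.
ti_vs_2080_via_1080ti = implied_ratio(speedup[("2080 Ti", "1080 Ti")],
                                      speedup[("2080", "1080 Ti")])
ti_vs_2080_via_1080 = implied_ratio(speedup[("2080 Ti", "1080")],
                                    speedup[("2080", "1080")])

# 1080 Ti relative to 1080, derived through each new card.
old_ti_vs_1080_via_2080ti = implied_ratio(speedup[("2080 Ti", "1080")],
                                          speedup[("2080 Ti", "1080 Ti")])
old_ti_vs_1080_via_2080 = implied_ratio(speedup[("2080", "1080")],
                                        speedup[("2080", "1080 Ti")])

print(f"2080 Ti vs 2080: {ti_vs_2080_via_1080ti:.3f} / {ti_vs_2080_via_1080:.3f}")
print(f"1080 Ti vs 1080: {old_ti_vs_1080_via_2080ti:.3f} / {old_ti_vs_1080_via_2080:.3f}")
```

Both routes land at roughly 1.26 to 1.27, so the quoted numbers at least agree with each other: about a 26% gap between 2080 Ti and 2080, and about a 27% gap between 1080 Ti and 1080.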

Dayaks

[H]F Junkie

- Joined

- Feb 22, 2012

- Messages

- 9,774

Well that about sums it up. Pretty close to what I expected. 2080ti being 10-15% faster than 1080ti on average. Given that it is already overclocked out of the box makes it even less impressive. But I am sure Nvidia is pushing developers to send them their games so they can scan them with their supercomputer and update game code for DLSS. But I am wondering if DLSS will only be effective at higher resolutions. I am also wondering what AMD is going to do on their end. Lisa was also talking about how they have something going on with Microsoft cloud computing, so I wouldn't be surprised if they use that and their partnership with Microsoft to do something similar, but that might just be for consoles. AMD hasn't been too big about proprietary stuff. Maybe their next gen architecture will be designed to perform rather than doing something like DLSS. I am not sure, but didn't he say during the presentation that DLSS was basically replacing parts of the screen with images through AI on tensor cores, and the GPU pretty much didn't have to render those portions? I am pretty confident that's what he said. So in that case it's like working around the problem, but it still requires developers to hand over their code for Nvidia to run and update. So maybe some developers wouldn't want to do that lol.

The input to DLSS training is a lower resolution image. It gets processed with some set of variables and the result is compared to the perfect image. It keeps changing the variables until it replicates the perfect image. It does this with millions of images.

The game developer could just provide a low resolution image and the perfect high res image “ground truth” and nVidia could literally do it off that. It doesn’t process just parts, it does the whole image.

This is how all AI works. You give it an example and the desired output. It figures out the way to get there. You ideally do this in every combination possible. It’s also basically how humans learn too...

At the end you get millions of variables that had the lowest error transforming the millions of lower quality images into the perfect image. That’s what your card runs off of.
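To make the "keep changing the variables until the error is lowest" idea concrete, here is a toy sketch. It is not how nVidia's DLSS is actually built: it assumes 1D "images" (sine waves), a tiny linear model with six weights instead of a neural network, and plain gradient descent. All names (`downsample`, `upscale`, `train`, and so on) are made up for the example.

```python
import math
import random


def downsample(hi):
    """Average adjacent pairs to make the low resolution input."""
    return [(hi[k] + hi[k + 1]) / 2 for k in range(0, len(hi), 2)]


def features(lo, i):
    """Low-res sample i plus its left and right neighbours (clamped at the edges)."""
    return (lo[max(i - 1, 0)], lo[i], lo[min(i + 1, len(lo) - 1)])


def upscale(weights, lo):
    """Predict two high-res samples per low-res sample using the learned weights."""
    out = []
    for i in range(len(lo)):
        f = features(lo, i)
        for row in weights:
            out.append(sum(w * x for w, x in zip(row, f)))
    return out


def mean_squared_error(weights, pairs):
    """Average squared difference between upscaled output and ground truth."""
    total, count = 0.0, 0
    for lo, hi in pairs:
        pred = upscale(weights, lo)
        total += sum((p - t) ** 2 for p, t in zip(pred, hi))
        count += len(hi)
    return total / count


def train(pairs, start, steps=300, lr=0.2):
    """Full-batch gradient descent: nudge the weights to lower the error."""
    weights = [row[:] for row in start]
    count = sum(len(hi) for _, hi in pairs)
    for _ in range(steps):
        grad = [[0.0] * 3 for _ in range(2)]
        for lo, hi in pairs:
            for i in range(len(lo)):
                f = features(lo, i)
                for r in range(2):
                    err = sum(w * x for w, x in zip(weights[r], f)) - hi[2 * i + r]
                    for j in range(3):
                        grad[r][j] += 2 * err * f[j] / count
        for r in range(2):
            for j in range(3):
                weights[r][j] -= lr * grad[r][j]
    return weights


# Ground truth "images" and their low-res versions (synthetic, seeded for repeatability).
rng = random.Random(0)
pairs = []
for _ in range(40):
    freq, phase = rng.uniform(0.1, 0.6), rng.uniform(0, 2 * math.pi)
    hi = [0.5 + 0.5 * math.sin(freq * k + phase) for k in range(16)]
    pairs.append((downsample(hi), hi))

start = [[0.0, 1.0, 0.0], [0.0, 1.0, 0.0]]  # naive upscaling: just repeat each sample
before = mean_squared_error(start, pairs)
trained = train(pairs, start)
after = mean_squared_error(trained, pairs)
print(f"error before training: {before:.6f}, after: {after:.6f}")
```

The final `trained` weights play the role of the "millions of variables" in the post, just at a vastly smaller scale: once training is done, only those weights are needed to upscale a new low-res input.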

pendragon1

Extremely [H]

- Joined

- Oct 7, 2000

- Messages

- 52,249

2080 being 10-15% faster than 1080ti.

Copy paste from reddit:

On average across 14 benchmarks:

https://i.imgur.com/trNPsJ1.png

- 2080 Ti is 45% faster vs 1080 Ti

- 2080 is 15% faster vs 1080 Ti

- 2080 Ti is 84% faster vs 1080

- 2080 is 46% faster vs 1080

which puts everything in line with the 2080ti=titan theory.

I think nvidia is smart here lol. They could have released Ti and Titan first lets say 2080 ti was actually titan and 2080 was released as 2080ti. Pricing will be justified but performance wont. So they sort of hiked the price and called it 2080. They know for sure people are going to bite. There is no question about it.

DooKey

[H]F Junkie

- Joined

- Apr 25, 2001

- Messages

- 13,560

Huh? Those numbers are showing 2080 as ~10% faster than 1080 ti. 2080 ti looks around 40-50% faster than 1080 ti.

Some people can't read and stick their foot in their mouth.

pippenainteasy

[H]ard|Gawd

- Joined

- May 20, 2016

- Messages

- 1,158

I dunno about 40-50% faster than 1080 ti. We're talking about open air cooled 2080 ti vs blower 1080 ti which is heavily sandbagging 1080 ti numbers. Comparing open air to open air implies something closer to 20% difference.

Dayaks

[H]F Junkie

- Joined

- Feb 22, 2012

- Messages

- 9,774

I think nvidia is smart here lol. They could have released Ti and Titan first lets say 2080 ti was actually titan and 2080 was released as 2080ti. Pricing will be justified but performance wont. So they sort of hiked the price and called it 2080. They know for sure people are going to bite. There is no question about it.

If they did 2080 and Titan like they usually do it would have been fine. 2080 would be +$100 and Titan would have been the same price as last gen. What a good deal!

View attachment 104017

View attachment 104018

I dunno about 40-50% faster than 1080 ti. We're talking about open air cooled 2080 ti vs blower 1080 ti which is heavily sandbagging 1080 ti numbers. Comparing open air to open air implies something closer to 20% difference.

Thats true. I had a founders edition 1080ti. It wasn't so great at boost clocks. Had to mess with bunch of parameters before I got it to where i wanted lol. still it wasn't desirable with fan speed. Then I had a open air dual fan evga ftw card and that pretty much blew it away. It was boosting much higher out of the box and easily boosting close to 1900-1950 with upping the power. The new founders edition cards are overclocked as well. So I am not too sure if its a fair deal to compare it to blower style from last year. Reviewers better be honest here and compare it apples to apples. Pretty much same boost clock at minimum to compare the two.

If they did 2080 and Titan like they usually do it would have been fine. 2080 would be +$100 and Titan would have been the same price as last gen. What a good deal!

I think they are just cashing in on no competition as much as they can. They know that by 2020, with Intel coming into the GPU field, it gets tougher, and AMD is supposed to be launching their true next gen in 2020 as well. I am not too sure how they will perform on their first try, but AMD has taken their sweet time with GCN and their next gen should be something to be excited about. They probably know what GCN lacked by now lol. So they have got to get that cash in. This year is almost already over so we are looking at 2019. If AMD decides they are just going to focus on their next gen architecture and let Navi compete at mid range, I am cool with that honestly, if that is what it takes for them to do the high end right and not force it.

pippenainteasy

[H]ard|Gawd

- Joined

- May 20, 2016

- Messages

- 1,158

Thats true. I had a founders edition 1080ti. It wasn't so great at boost clocks. Had to mess with bunch of parameters before I got it to where i wanted lol. still it wasn't desirable with fan speed. Then I had a open air dual fan evga ftw card and that pretty much blew it away. It was boosting much higher out of the box and easily boosting close to 1900-1950 with upping the power. The new founders edition cards are overclocked as well. So I am not too sure if its a fair deal to compare it to blower style from last year. Reviewers better be honest here and compare it apples to apples. Pretty much same boost clock at minimum to compare the two.

Lol yes it's not apples to apples. I can post Gamers Nexus watercooled Titan V benches at 2 GHz showing it's 65% faster than a 1080 ti FE. It's not a fair comparison at all.

TahoeDust

Limp Gawd

- Joined

- Dec 3, 2011

- Messages

- 502

I am looking forward to seeing 3440x1440 benchmark comparisons to the 1080ti. I am hoping for 35% or better...that will have me keeping my pre-order on a 2080ti FTW3.

Huh? Those numbers are showing 2080 as ~10% faster than 1080 ti. 2080 ti looks around 40-50% faster than 1080 ti.

Are they? Comparing an FE 1080Ti to an FE 2080 only tells part of the story. I know my Evga SC black edition 1080Ti at stock gets better framerates in some of those games.

I am looking forward to seeing 3440x1440 benchmark comparisons to the 1080ti. I am hoping for 35% or better...that will have me keeping my pre-order on a 2080ti FTW3.

I had the same setup. Are you having a hard time pushing that resolution? My setup really played through everything at that resolution. I didn't think I needed anything faster at that resolution. My monitor did have gsync, but almost every game was like 80-100 fps at ultra, smooth as butter.

Are we going to see anything but FE benches on the 19th?

Well I hope they are not benching against Founders Edition cards only. You never know if Nvidia will push them to just bench against the Founders Edition 1080 and 1080ti. That would just suck, because you've got blower cards vs. new Founders Edition cards that are factory OC'ed and use a much better cooler. I want to see some apples to apples, with an aftermarket 1080ti running at similar clocks to the 2080ti, and 1080 vs 2080.
