pandora's box · Supreme [H]ardness · Joined: Sep 7, 2004 · Messages: 4,846

https://tftcentral.co.uk/reviews/lg-27gr95qe-oled

TFT Central review. Not sure when it was published, but I think it went up a few days ago for non-Patrons.

And the calibration guide:



According to Rtings, they still couldn’t rein in the color as much as the sRGB mode does. I think the color space was still 112% of sRGB after calibration.

Really annoying. I don’t want to drop $1K on a monitor to have even fewer calibration options than my $219 monitor from 2019.

Hmmm... I haven't seen RTINGS review this one yet - curious where you saw that?

I just did the LG Calibration Studio calibration when I did it for sRGB, but it seemed to have a dE of well under 2 for everything. Visually, not too much difference switching between sRGB and Calibration - the main difference is I could set target brightness and gamma.

https://www.rtings.com/monitor/reviews/lg/27gr95qe-b

Scroll down to Color Accuracy (Post-Calibration). This is the first time in memory where I've seen a monitor score worse than the pre-calibration. Are they doing something wrong? Also - the LG Calibration Studio calibration that you did - is it a true hardware calibration? Are the settings you apply to the screen done on hardware and thus hold for whatever display you use?

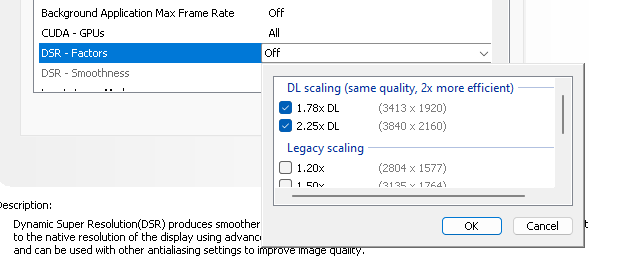

Also, I've been playing a game not using DLSS [on my video card] and thought the performance was good. Playing around, I enabled it the other day (I always thought it was unnecessary/could introduce artifacts if your system was beefy enough to run without it) and got a HUGE performance boost, to the point I can't even believe how responsive this monitor can actually get. (It was decent before, but this is a whole other level as far as how good/smooth it feels.) Pretty insane! I didn't use the frame counter but I'm quite sure I was hitting the 240 with DLSS on. It was like butter - definitely not going back to DLSS off.

Yep.BFI for modern titles too? If you want to turn on Ray Tracing...

While I am doing a fair number of strobe/BFI contracts behind the scenes, I am pressing hard for brute framerate-based motion blur reduction to gain much more traction by the end of the decade. Feeding off a small percentage of my contract work, I'm actually spending a significant amount of time/funds on the future 1000fps 1000Hz ecosystem, since I've developed a new Blur Busters Master Plan all around it. So I plan to release some white papers/articles about it sometime this year. Reprojection is actually easier to deploy than one thinks -- the biggest problem is integrating input reads into the reprojection API, so it would ideally need to be done as a sort of UE plugin;

The reprojection idea is cool, but it's much easier to make a shader-less demo with a few primitives than a modern game with it. That said, this tech already exists in the VR world; I saw it years ago on the Oculus Rift along with frame-rate interpolation. It made games running at 45 fps feel roughly like 90 fps. Even input latency was not an issue. What was an issue, however, was the artifacts: sometimes subtle, and in places somewhat jarring. Still, I could see this tech adopted for games on normal displays. Combined with BFI especially, it could make a real difference.

Please tell the manufacturers that the customers want it. Lots of us enthusiasts would love being able to have our cake and eat it too with OLED and BFI. I would jump on it in an instant. No cap. I’m a crt tube head that’s been waiting for this moment.

What I found is that:

- ASW 2.0 was much more artifactless than ASW 1.0 because it used the depth buffer; so the jarring artifacts mostly disappeared; and

- Sample and hold reprojection has no double-image artifacts, it's simply extra motion blur on lower-framerate objects (unreprojected objects).

This was tested with the downloadable demo (which is more akin to ASW 1.0 quality than ASW 2.0 quality). Using 100fps as the base frame rate, which is above the flicker fusion threshold, also eliminates a lot of reprojection artifacts. To understand the stutter-to-blur continuum (sample-and-hold stutter and blur are the same thing), see www.testufo.com/eyetracking#speed=-1 and look at the 2nd UFO for at least 20 seconds. Low frame rates vibrate like a slow-vibrating music string, and high frame rates blur like a fast-vibrating music string.

Once reprojection starts off with a base frame rate above the flicker fusion threshold (e.g. 100fps), the vibrating stutter becomes blur instead, and the sample-and-hold effect ensures there's no double-image effect. Now, apply the ASW 2.0 algorithm instead of ASW 1.0, and the artifacts are fewer than with DLSS 3.0.

Some white papers will come out later in 2023 to raise awareness among researchers, developers, GPU vendors, etc. OLED has visible mainstream benefits even in non-game use (240Hz and 1000Hz are not just for esports anymore), if it can be done cheaply with near-zero-GtG displays. People won't pay for it if it costs unobtainium, but reprojection is a cheap upgrade to GPUs. It will also help sell more GPUs during the GPU glut, and expand the market for high-Hz displays outside esports.

120Hz vs 240Hz is more mainstream-visible on OLED than LCD, and the mainstreaming of 120Hz phones, tablets and consoles is raising awareness of the benefits of high refresh rates. One of the white papers I am aiming to write is about the geometric upgrade requirement (60 -> 144 -> 360 -> 1000) combined with the simultaneous need to keep GtG as close to zero as possible. Non-flicker-based motion blur reduction is a hell of a lot more ergonomic; it's achieved via brute framerate-based motion blur reduction; reprojection ratios of 10:1 reduce display motion blur by 90% without strobing, making 1ms MPRT possible without strobing -- and I've seen it with my eyes. It's the future; but I need to play the Blur Busters Advocacy role to educate the industry (slowly) over the years -- like I did with LightBoost in 2012 to, um, spark the explosion of official strobe backlights.
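For anyone who wants to check the 10:1 figure, here is a rough Python sketch of the arithmetic. It assumes an ideal zero-GtG sample-and-hold display, where persistence (MPRT) is simply one frame time. The function names and the 100fps base rate are my own illustrative assumptions, not taken from any tool.

```python
def persistence_ms(fps: float) -> float:
    """Frame visibility time on an ideal sample-and-hold display (assumes zero GtG)."""
    return 1000.0 / fps


def blur_reduction(reprojection_ratio: float) -> float:
    """Fraction of motion blur removed when output fps = base fps * ratio."""
    return 1.0 - 1.0 / reprojection_ratio


base_fps = 100.0            # assumed base frame rate fed into reprojection
ratio = 10.0                # the 10:1 reprojection ratio from the post
output_fps = base_fps * ratio

print(f"base persistence:   {persistence_ms(base_fps):.2f} ms")
print(f"output persistence: {persistence_ms(output_fps):.2f} ms")
print(f"blur reduction:     {blur_reduction(ratio):.0%}")
```

Under those assumptions, 100fps reprojected to 1000fps drops persistence from 10 ms to 1 ms, which is where the 90% and the 1ms MPRT numbers come from. Real panels add GtG and jitter on top, so treat this as a best case.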

The weak links are what prevent Hz-vs-Hz visibility. The spoonfeeding of GtG-bottlenecked, jitter-bottlenecked refresh rate incrementalism means 240Hz-vs-360Hz, only a 1.5x difference, is literally throttled to only about 1.1x by various other factors (slow GtG, jitter). Average users don't care about that. Even high-frequency jitter (70 jitters/sec at 360Hz) vibrates so fast that it's just extra blur; and that's on top of the GtG blur mess on top of the pure, nice linear-blur MPRT.

Now, in a blind test, more Average Users (>90%) can tell 240Hz-vs-1000Hz apart on an experimental 0ms GtG display (monochrome DLP projector) in a random test (e.g. www.testufo.com/map becoming 4x clearer), much better than they can tell apart 144Hz-vs-240Hz on LCD. You do have to zero out GtG and go ultra-dramatic up the curve, but just because many can't tell 240Hz-vs-360Hz or 144Hz-vs-240Hz apart doesn't mean 240Hz-vs-1000Hz isn't a bigger difference to the mainstream! (Blind test instructions were posted in the Area 51 of Blur Busters Discussion Forum.)

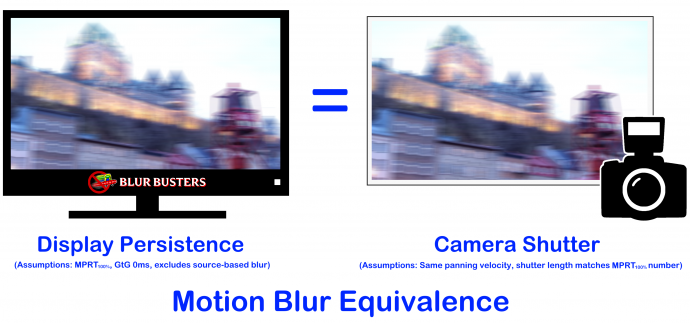

On a zero-GtG display (where all motion blur is MPRT-only), for 240fps 240Hz versus 1000fps 1000Hz, the blur difference is akin to a 1/240sec photograph versus a 1/1000sec photograph (4x motion clarity difference).
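The photograph analogy can be put into numbers with a small Python sketch. It assumes eye-tracked motion on a zero-GtG sample-and-hold display with frame rate equal to refresh rate, so the blur trail is just speed times frame time. The 960 pixels/sec panning speed is an assumed example value, and `blur_width_px` is a hypothetical helper name.

```python
def blur_width_px(speed_px_per_s: float, refresh_hz: float) -> float:
    """Eye-tracked blur trail in pixels; assumes fps == Hz and zero GtG."""
    return speed_px_per_s / refresh_hz


speed = 960.0  # assumed panning speed in pixels per second

for hz in (240.0, 1000.0):
    print(f"{hz:.0f} Hz: about {blur_width_px(speed, hz):.2f} px of blur")

ratio = blur_width_px(speed, 240.0) / blur_width_px(speed, 1000.0)
print(f"clarity ratio: about {ratio:.1f}x")
```

The ratio is independent of the speed you pick; it is just 1000/240, which is the roughly 4x figure above.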

As an indie that plays the Refresh Rate Advocacy role, I try to find ways to convince companies that there is a market for pushing frame rates AND refresh rates up geometrically (and for catching an even bigger % of the niche). The high-Hz market is slowly becoming larger and larger.

I'm really focussed on firing all the cylinders of the weak-links motor. 240Hz OLED just solved the GtG bottleneck, reprojection will solve the GPU bottleneck, and 1000Hz OLED is being targeted before the end of the decade.

But yes, BFI is going to be perpetually important for games that can't use reprojection, and legacy material (I love saying "60 years of legacy 60fps 60Hz material"), as I'm pushing REALLY hard to multiple parties to add BFI to OLED. It's a challenge, since some don't think BFI is needed.

I'm doing both routes.

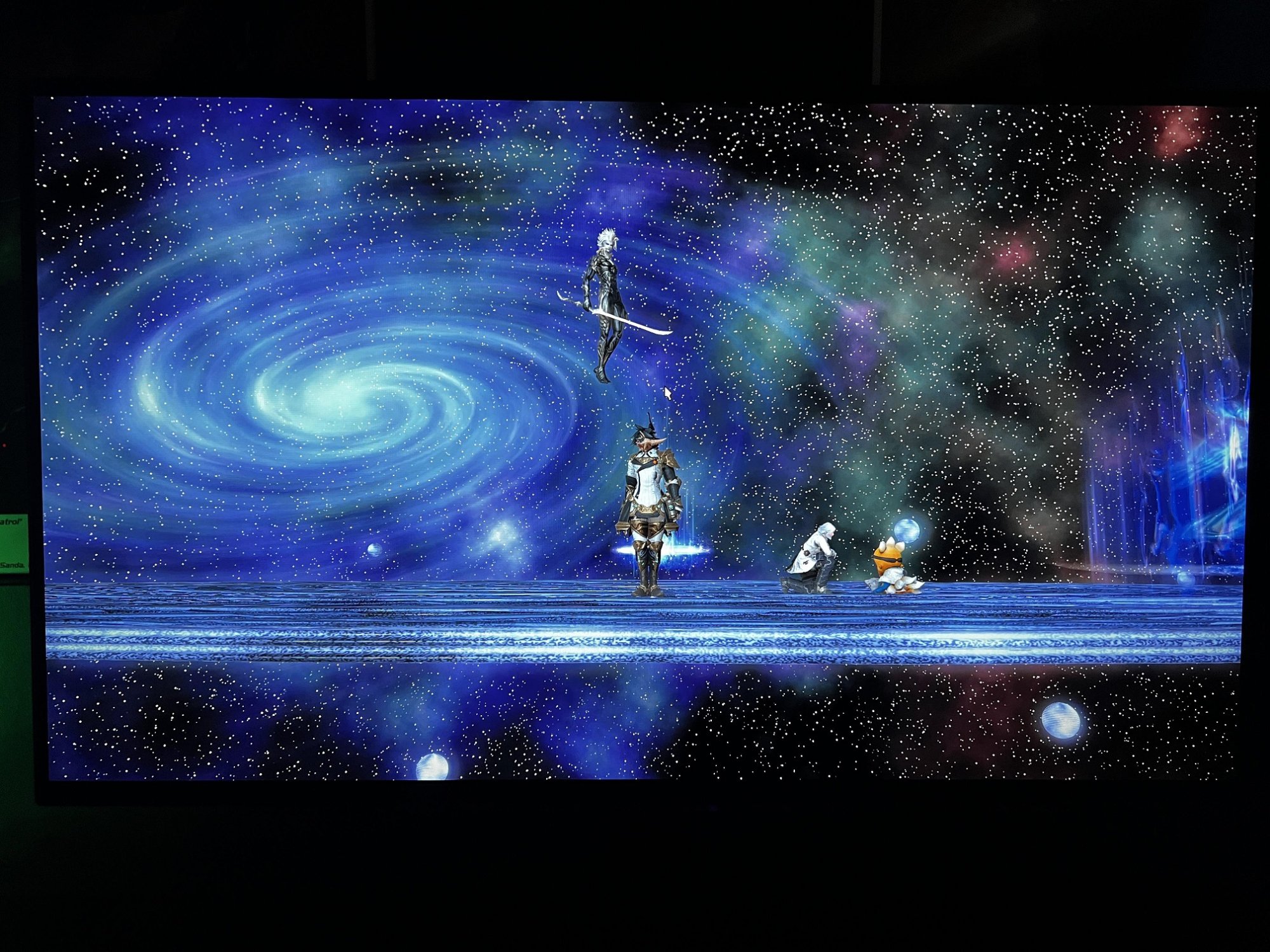

Loving this monitor more and more.

That's the content that OLED shines really well on. I've noticed even 10,000-LED-count FALD LCDs still struggle on this specific type of material. FALD is superior for larger amounts of bright pixels than that, but if you're a lover of horror/space/dungeon/etc...

Still doing my best. You've seen what I've done to successfully bring 60Hz single-strobe to a few models.

For simplicity of expectations, the first BFI would be for 60Hz and 120Hz only.

Based on what I now know about large-size OLED panel backplane behavior, I suspect we're hurtling faster towards 1000Hz capability (~2027-2030ish) than towards subrefresh rolling-scan BFI.

Noooooooo I already have a 120Hz BFI OLED (LG CX) and was really hoping for that next leap to 240Hz BFI OLED. I'm sure the motion clarity will be a sight to behold! Hopefully my CX will last until that day arrives.

Gamma Mode 3

(...)

.81 gamma important to lower for better contrast

This would give you display gamma of around 3.0
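Here is a quick Python sketch of how those two settings could stack up to roughly 3.0. It rests on two assumptions that are not confirmed anywhere in this thread: that Gamma Mode 3 targets about 2.4, and that the .81 value is a GPU-side gamma adjustment that behaves like out = in ** (1 / 0.81) ahead of the monitor's own curve. The function names are hypothetical.

```python
def effective_gamma(display_gamma: float, gpu_gamma: float) -> float:
    """Combined exponent when a GPU-side gamma is applied before the display curve.

    Assumed model: gpu_out = signal ** (1 / gpu_gamma), then
    luminance = gpu_out ** display_gamma.
    """
    return display_gamma / gpu_gamma


def relative_luminance(signal: float, gamma: float) -> float:
    """Relative light output (0..1) for a normalized input signal (0..1)."""
    return signal ** gamma


combined = effective_gamma(2.4, 0.81)  # 2.4 for Gamma Mode 3 is an assumption
print(f"effective gamma: about {combined:.2f}")

# Mid-gray comparison against a plain 2.2 target
print(f"50% gray at 2.2:      {relative_luminance(0.5, 2.2):.3f}")
print(f"50% gray at combined: {relative_luminance(0.5, combined):.3f}")
```

If the model holds, mid-tones come out noticeably darker than on a 2.2 target. That may look like more contrast, but it also costs shadow detail.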

I received my monitor yesterday. Does anyone want to share their current settings? I have tried the TFT Central video's settings, and the monitor still just feels dull and colors feel off in SDR in Gamer 1 or Gamer 2 modes. I switched on HDR and Auto HDR within Win 11 and it does feel brighter, but the darks feel a little too dark for FPS shooters. I tried messing with the HDR/SDR slider Win 11 offers, but it made everything washed out. I did try the Vivid mode other videos mention, and in SDR it seems to be brighter than the rest of the preset modes, but again colors seemed off. At this point I don't know if it's something I just need to spend more time with to get used to the wider color gamut, or if OLED might just not be for me. FYI I had a 32" 1440p 165Hz LG VA panel that's almost six years old, and my 2nd monitor is an LG 32" 1440p IPS 60Hz panel used for browsing/content. The new LG OLED is used for gaming only.

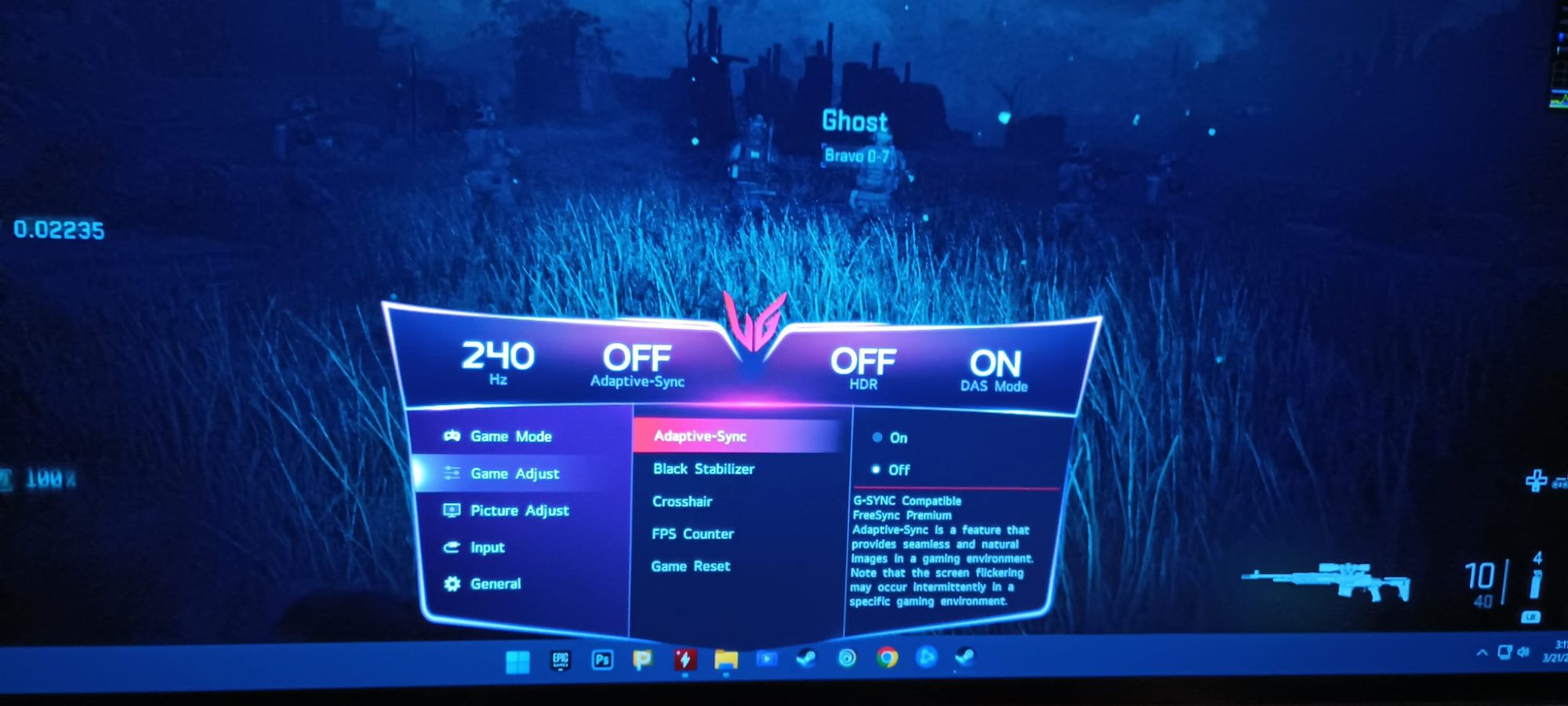

I just noticed that on the LG 48GQ900 I have DAS enabled in VIVID, sRGB and calibration modes, whereas when I checked before, DAS was disabled in these modes, and switching between a mode with DAS and one without gave a noticeable latency difference (read: one frame of input lag with DAS off).

Might be something to do with the used settings. I also have DAS at ON for all modes on LG 27GP950 and was surprised and disappointed it was not enabled everywhere on 48GQ900. Now it seems I can play with proper colors without needing to try to calibrate Gamer mode using six axis color controls like I planned to do for games where wide gamut wouldn't look right.

Can anyone with a 27GR95QE confirm these monitors can also do DAS ON in sRGB/calibration modes?

DAS does not appear to be available in any modes except the Gamer modes on this monitor, and there doesn't seem to be a setting to enable it. It's perplexing why so much is locked out in non-Gamer modes.

That said, I haven't personally noticed any appreciable lag/difference. I'm not a competitive player, but it seems just as responsive to me as long as it's set to 240 Hz and VRR is enabled (GSync is not).

I noticed that if DAS is ON or OFF in sRGB or calibration mode depends on video mode on 48GQ900.

At 60, 96 and 120Hz it's OFF, and at the overclocked 138Hz it's ON.

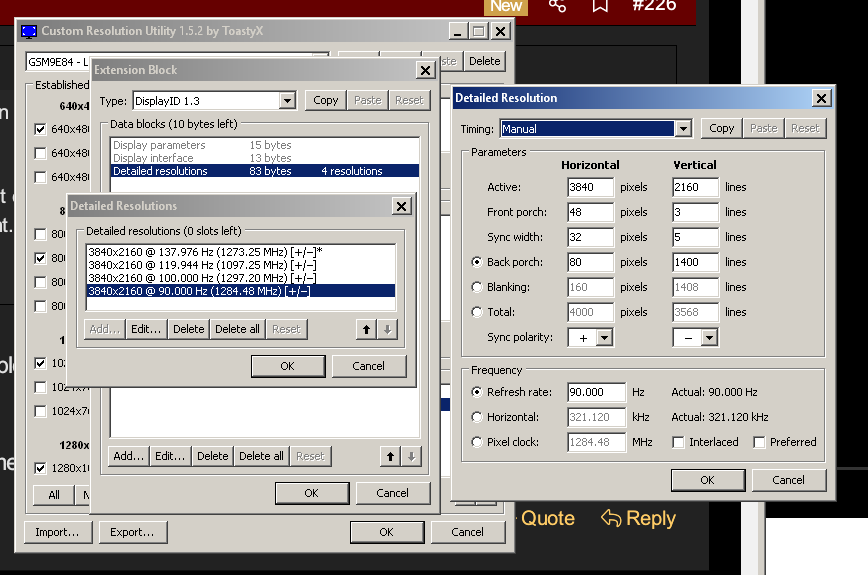

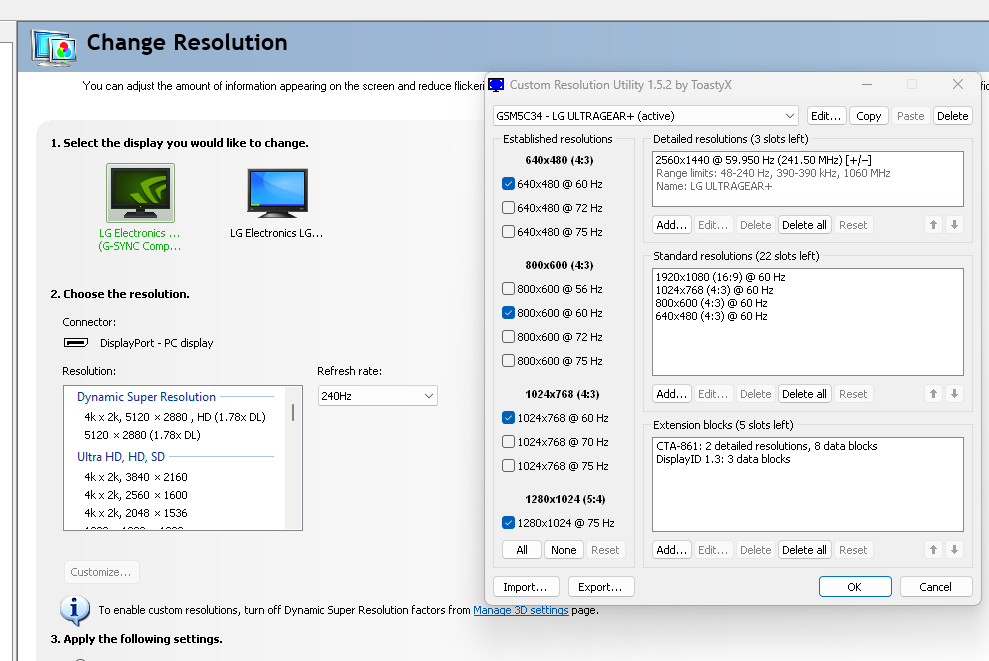

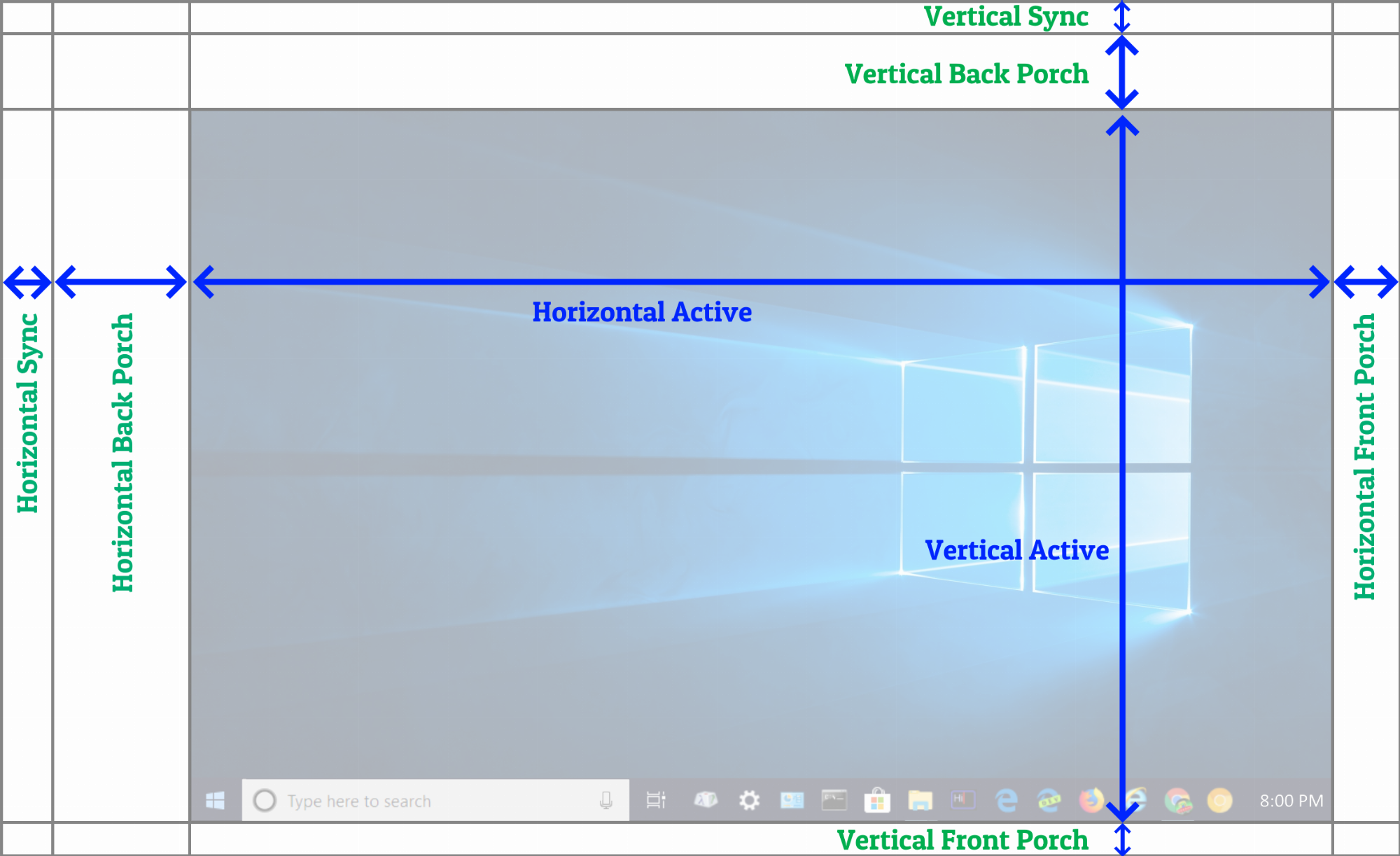

What you can try is creating a custom video mode with higher bandwidth, because on my monitor it seems bandwidth is what enables DAS in sRGB. When there are enough blanking lines to increase bandwidth to around or past what the monitor uses in its factory overclock mode, DAS is enabled.


So it might be worth checking on the 27GR95QE whether, e.g., creating a video mode with a lower refresh rate but higher overall bandwidth helps. It might also be possible to increase the refresh rate, but the monitor will probably throw an out-of-range message. It should not throw such messages when the refresh rate is lower than 240Hz. Just beware that the image might not appear if bandwidth is too high, so it's best to always set the video mode back to default before restarting the GPU, otherwise you might have issues, especially if that is the only monitor you have connected to your PC. Otherwise it should be pretty safe to do.
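To estimate how many extra blanking lines such a custom mode would need, here is a rough Python sketch. It uses pixel clock as a stand-in for bandwidth (pixel clock = horizontal total x vertical total x refresh rate). All timing numbers below are hypothetical placeholders, not the real 48GQ900 or 27GR95QE timings, so read the actual totals from whatever custom-resolution tool you use.

```python
import math


def pixel_clock_mhz(h_total: int, v_total: int, refresh_hz: float) -> float:
    """Pixel clock in MHz for a video mode, counting blanking in the totals."""
    return h_total * v_total * refresh_hz / 1e6


def v_total_for_clock(target_mhz: float, h_total: int, refresh_hz: float) -> int:
    """Smallest vertical total that reaches the target pixel clock at refresh_hz."""
    return math.ceil(target_mhz * 1e6 / (h_total * refresh_hz))


# Hypothetical 3840x2160 timings (assumed values, for illustration only)
H_TOTAL = 4000   # active width plus horizontal blanking
V_TOTAL = 2222   # active height plus vertical blanking

overclock_clock = pixel_clock_mhz(H_TOTAL, V_TOTAL, 138)
needed_v_total = v_total_for_clock(overclock_clock, H_TOTAL, 120)

print(f"138 Hz pixel clock: {overclock_clock:.1f} MHz")
print(f"v_total needed at 120 Hz: {needed_v_total}")
print(f"extra blanking lines: {needed_v_total - V_TOTAL}")
```

The point of the sketch is the direction of the trade: a lower refresh rate with a taller vertical total can match the pixel clock of the overclocked mode. Whether the monitor's DAS logic actually keys off pixel clock is only what the observation above suggests, not something confirmed.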

That's an interesting way -- to use QFT to enable DAS.

I just switched back to the stock LG stand. The one I bought off Amazon was OK, but the stock one is better =) I think the monitor warped the back a bit, because there is a little play at the back with the stock stand. I guess I just needed a 3rd-party stand as training wheels to get used to the monitor. What I really could use is an office chair that goes a bit higher, or a desk that could go a tad lower, but I already took a hacksaw to my desk a few years back.

I ended up returning mine. I could not find the right settings to suit me and it was driving me crazy. IMO if I spend 1k on a monitor it needs to be near perfect out of the box with minimal fiddling on my end. I did nothing but change settings on this thing non stop the entire time I had it, very frustrating. I will probably go the mini led route and look back into OLED in a couple more years down the road. I appreciate you guys sending me some settings to test!

Sorry to hear it didn't work out. I had gone through two monitors I wasn't happy with before finding this one, so I know how that feels, and it is frustrating! It's a very personal choice with a lot of factors to consider! At any rate, good luck with your search and I hope you find something you're happy with!