Zarathustra[H]


Hey all,

A few questions.

1.) I have read in the past that ESXi is terrible at LACP and it is best to avoid it. Is this a historical concern, or still the case with ESXi 5.5?

My ESXi host handles a lot of network traffic to quite a few clients, but I only have gigabit Ethernet on my network.

Currently I have dual Intel Ethernet adapters passed through with Direct I/O to my most network-heavy guests, and I have bonded the two ports both in the guest and on my ProCurve switch using the "trunking" menu.

This is obviously not ideal. I don't use vMotion, but I would like to regain RAM flexibility by not using Direct I/O forwarding, and to use my network capacity more flexibly.

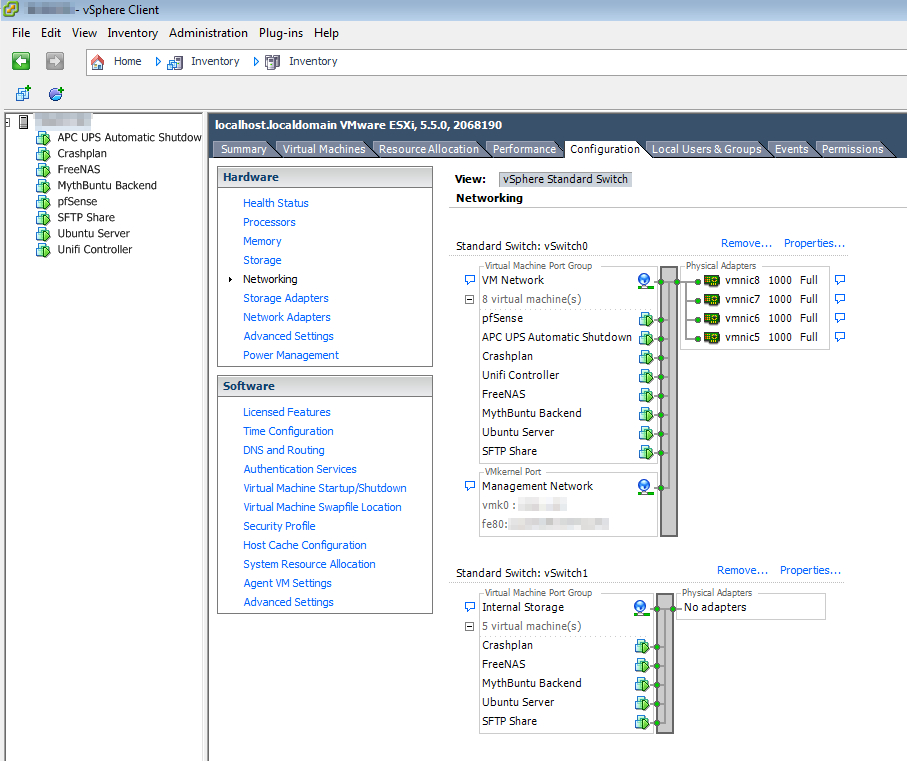

2.) I figured a setup like this would work:

Each traffic-heavy guest gets a VMXNET3 virtual 10-gigabit interface to the vSwitch. Then, instead of Direct I/O forwarding the adapters, I assign them all to that vSwitch and use LACP to connect them to my physical ProCurve switch using trunking.
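To make clear what I am hoping the bonded links will do, here is a rough Python sketch of hash-based link selection. It assumes a simple XOR of the source and destination IPv4 addresses, modulo the number of uplinks. The function names (`pick_uplink`, `spread`) and the addresses are made up for illustration, and I am not claiming this is the exact hash that ESXi or the ProCurve uses.

```python
import ipaddress
from collections import Counter


def pick_uplink(src_ip: str, dst_ip: str, n_uplinks: int) -> int:
    """Pick an uplink index for one source/destination pair.

    Assumption: XOR of the two 32-bit addresses, modulo the uplink count.
    Real switches and hypervisors may hash differently.
    """
    src = int(ipaddress.IPv4Address(src_ip))
    dst = int(ipaddress.IPv4Address(dst_ip))
    return (src ^ dst) % n_uplinks


def spread(guest_ip: str, client_ips: list[str], n_uplinks: int) -> Counter:
    """Count how many client conversations land on each uplink."""
    return Counter(pick_uplink(guest_ip, c, n_uplinks) for c in client_ips)


if __name__ == "__main__":
    guest = "192.168.1.10"  # hypothetical guest address
    clients = [f"192.168.1.{host}" for host in range(100, 108)]  # hypothetical clients
    for uplink, count in sorted(spread(guest, clients, 2).items()):
        print(f"uplink {uplink}: {count} client(s)")
```

The point of the sketch: any single guest-to-client pair always maps to the same uplink, so one conversation never exceeds one gigabit link. The aggregate only helps when many clients hash across different links, which is the situation I have.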

Is this a good way to do things?

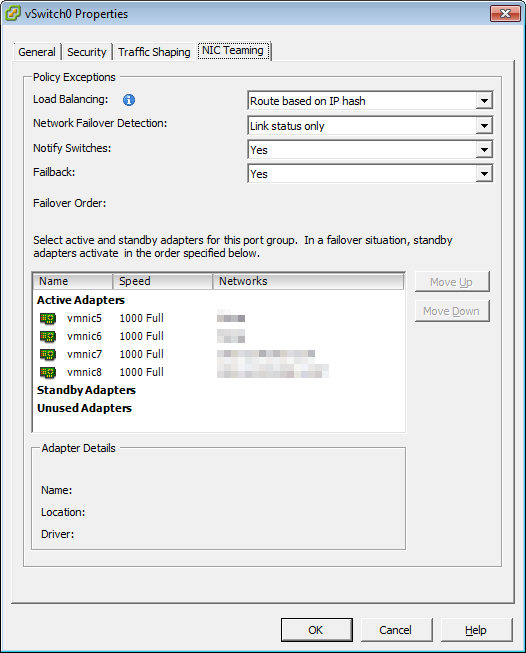

3.) Looking at the "teaming" settings in the ESXi 5.5 client, the terminology appears very different from what I am used to. This doesn't surprise me, as every vendor seems to have its own terms for link aggregation (Unix LAGG, Linux Bond, HP Trunking, Intel Teaming, etc.).

Can anyone tell me which of the settings correspond to proper LACP that will be compatible with the LACP in the Trunking menu on my ProCurve 1810G-24?

Much obliged!

--Matt
