Michaelius

Supreme [H]ardness

- Joined: Sep 8, 2003
- Messages: 4,684

because of this

Check that guy's posting history on the AnandTech forums.



I see that the NVIDIA DEFENSE SQUAD is finally showing up. Allow me to explain the results to you: since the Fury X is capable of delivering smoother gameplay at 4K Ultra, I can turn down a few settings and enjoy better gameplay than on the 980 Ti.

Please post a source for UE4 already supporting async compute, because thus far Unreal only supports it in its DX12 implementation for Xbox One.

https://docs.unrealengine.com/lates...ing/ShaderDevelopment/AsyncCompute/index.html

http://www.anandtech.com/show/9659/fable-legends-directx-12-benchmark-analysis/2

Anand gives a different outlook.


There's gotta be something hardware-wise that's bottlenecking the Fury (or GCN 1.2 for that matter). If the 390X and even the 390 can outpace the 980 at DX12, why does the Fury seem so horribly gimped by comparison?


Lionhead isn't using the same engine as what's available to everyone else, OK? And they have stated that for this DX12 demo benchmark they are using async compute on PCs. If you are too lazy to read the articles, I suggest you don't post. I don't understand why people don't read... it doesn't make any sense; they just want to be spoon-fed.


I actually looked at the chart and frame times are poor for both. Unplayable regardless of which card you have. So that's a win for Fury? lol

As far as turning settings down - you would have to re-test the frame time results then. You can't just assume they would be better.

My biggest takeaway from this is anyone that continues to recommend a 970 over a 390 should get an infraction for trolling.

So far there's no indication that Maxwell is going to pull ahead of Hawaii in DX12 games. We now have two benchmarks and the results are "meh" at best for Nvidia, so there's no reason to continue buying 970s.

If all you're ever going to play is one cartoon F2P, then sure. Not to say the 390X isn't good value if you can get a good price on one, but there are so many current DX11 games where it gets beaten handily by a mildly OC'd 970.


The 390 is at least as good as the 970 in DX11 games, and it has over twice the VRAM. With the performance gains of DX12 (which may or may not exist in every game), you're taking a gamble with the 970 at this point. There's really no payoff.

The real question is simply, why buy a GTX 970? You just shouldn't unless you have no choice... So, if you own a G-Sync monitor then buy a 970, I guess.

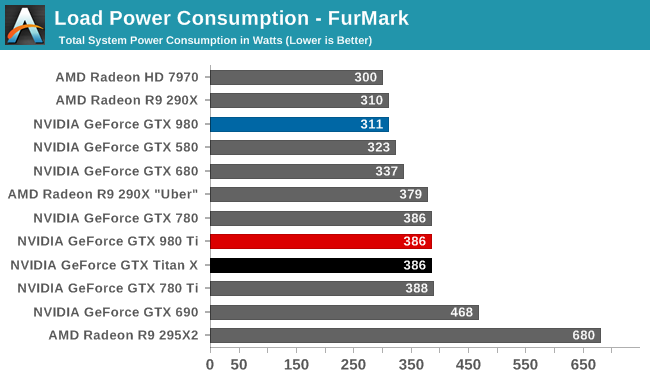

The 390 is not much faster in actual games on the market, but holy shit it sucks up enough power to require its own flux capacitor.

Eh, how about day-one drivers? ShadowPlay? OC headroom? Cooler? Less power? All the current DX11 titles the 970 is faster at? Plenty of reasons. A tech demo and a cartoon game are a bit premature to be declaring victories for one side or the other. Let's wait for meaningful and reproducible benches on games people actually want to play.

I thought drivers didn't matter in DX12? Or is that only true when Nvidia says their drivers are outdated...

AnandTech wasn't using the latest Catalyst either.

AMD Catalyst 15.201.1102 vs. 15.201.1151, which is out already.

Please post a source for Fable Legends fully supporting DX12 features.

Compute shader simulation and culling is the cost of our foliage physics sim, collision and also per-instance culling, all of which run on the GPU. Again, this work runs asynchronously on supporting hardware.

Hmmm

I don't know the validity of your post, but move the "Dark green line" bit above the graph so it's easier to see.

PC Perspective review: I already linked it, talked about it, and quoted it. READ instead of just posting without reading.

http://hardforum.com/showpost.php?p=1041873255&postcount=13

http://www.pcper.com/reviews/Graphi...-Benchmark-DX12-Performance-Testing-Continues

That is the developer talking about async compute for this specific benchmark.

When we do a direct comparison for AMD’s Fury X and NVIDIA’s GTX 980 Ti in the render sub-category results for 4K using a Core i7, both AMD and NVIDIA have their strong points in this benchmark. NVIDIA favors illumination, compute shader work and GBuffer rendering where AMD favors post processing, transparency and dynamic lighting.




What about dynamic lighting and post processing? Aren't those handled asynchronously? Looks like Maxwell loses at both of those.

AnandTech found similar results.


Eh, how about day-one drivers? ShadowPlay? OC headroom? Cooler? Less power? All the current DX11 titles the 970 is faster at? Plenty of reasons. A tech demo and a cartoon game are a bit premature to be declaring DX12 victories for one side or the other. Let's wait for meaningful and reproducible benches on games people actually want to play. Way too much assumption and extrapolation going on. It definitely looks good for the 390, but it's far from a no-brainer.

We spent the last month listening to people ramble about the 290X beating a 980 Ti in DX12. So... There's a bit of a difference in Fable.

I don't see anything here that changes what we saw in AOTS.


If all you're ever going to play is one cartoon F2P, then sure. And that's assuming you can get a good price on one; then factor in that in so many current DX11 games it gets beaten handily by a mildly OC'd 970. By the time DX12 really hits its stride (end of 2016 at the earliest) and there are more than a few token titles, the landscape will have shifted again in that price bracket, in part due to Pascal.

In general, though, you can't go wrong either way if it's a choice between those two in particular.

Let's see....

200 W, 8 hrs a day

(200 × 8) / 1000 = 1.6 kWh per day

$0.16 per kWh (here in CT, generation plus delivery cost)

1.6 × 0.16 = $0.256

Wow. If you game 8 hrs a day, with the GPU pegged at 100% the whole time, it costs you about 26 cents a day to run a 390 vs. a 970.

Guess I'm not that worried about that $0.26 a day.
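A minimal Python sketch of the same arithmetic, for anyone who wants to plug in their own numbers. It assumes the 200 W figure is the extra draw of the 390 over the 970 under full load and a flat $0.16/kWh rate as quoted for CT; the function names are hypothetical.

```python
def daily_energy_kwh(watts: float, hours_per_day: float) -> float:
    """Energy per day in kWh: watts x hours / 1000."""
    return watts * hours_per_day / 1000


def daily_cost(watts: float, hours_per_day: float, price_per_kwh: float) -> float:
    """Daily cost in dollars at a flat per-kWh rate (generation plus delivery)."""
    return daily_energy_kwh(watts, hours_per_day) * price_per_kwh


if __name__ == "__main__":
    # Assumed inputs from the post: 200 W extra draw, 8 h/day at full load, $0.16/kWh.
    kwh = daily_energy_kwh(200, 8)
    cost = daily_cost(200, 8, 0.16)
    print(f"{kwh:.1f} kWh/day, ${cost:.3f}/day")  # prints: 1.6 kWh/day, $0.256/day
```

Swap in your own wattage difference, hours, and local rate; at realistic gaming hours the figure shrinks proportionally.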

Yeah, but that's 8 hours of full load per day, and the 970 runs up your power bill too.