NightReaver (2[H]4U, joined Apr 20, 2017, 3,818 messages)

I mean most people do play at 1080p, but that same majority are still playing at 60 fps, so it really doesn't matter if one cpu can get 5% more fps when they're both above that target.
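To put that argument in concrete terms, here is a rough sketch assuming the display caps output at 60 fps (for example a 60 Hz panel with vsync). The function name and the frame rates are made up for illustration and are not benchmark results.

```python
def displayed_fps(cpu_fps: float, cap: float = 60.0) -> float:
    """Frame rate actually shown when output is capped at `cap` fps."""
    return min(cpu_fps, cap)


# Hypothetical numbers: one CPU manages 100 fps, the other 5% more.
slower = 100.0
faster = slower * 1.05

# Both land on the cap, so the 5% gap never reaches the screen.
print(displayed_fps(slower), displayed_fps(faster))
```

The gap only shows up once either chip drops below the cap, or once the cap itself goes up (a high refresh panel).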


Man the 3900x is a monster in many applications that are not games. It is the better chip in all applications that are not games. It beats Intel in so many tests, that are not games. So I see that the 9900k is at 449usd, 50 dollars less than the 3900x. I see the x570 platform starting at 300, in most cases more than that. I see every review site claiming the 3900x is the greatest, except for games. I see everyone on this forum talking about content creation as if content creators are the majority of pc builders/enthusiasts(really?). This is a joke of a launch. It is more expensive to build an AMD system than an Intel system for less gaming performance. I wanted AMD to dominate, they did not. Please, AMD, take one performance crown.

Do not come in talking about how the 3900x is the better chip unless you can prove you are a content creator with livestreams or actual content, otherwise you are a shill.

Me, I just do a build every few years with the best available. My first build ever was an Athlon XP and a 9800 Pro. That was a disruption for many years. This is a whiff.

I mean most people do play at 1080p, but that same majority are still playing at 60 fps, so it really doesn't matter if one cpu can get 5% more fps when they're both above that target.

Well, it helps if you are playing on a freesync or gsync capable monitor.

"So I see that the 9900k is at 449usd, 50 dollars less than the 3900x."

Yes, and the 9900K does not come with a cooler. The 3900X comes with a cooler that by all accounts is decent in performance. You need to take that into account when comparing prices, otherwise you are a shill for Intel by ignoring the facts.

"I see the x570 platform starting at 300"

Define "platform". If you are referring to the motherboard, there are many X570 offerings that start at less than $200. You also have the choice of NOT buying an X570 motherboard and instead choosing one of the many previous generation platforms that are still compatible with the 3000 series for even less money.


I thought that stuff only smooths stuff out when you aren't pegged at max fps the whole time? If I'm playing at 60 fps and I never dip, does it really make a difference?

Correct me if I'm wrong, it's been a while since I used an AMD card (was during the mining craze), and there's no way I'm shelling out for gsync.

actually faster at 4k resolution. its 2019, i havent been on 1080p for years.

Between my department at work and at home I have seen exactly 1 monitor that is significantly greater than 1080p and that was an expensive (tens of thousands of dollars) medical imaging monitor. I loved working on it..

Maybe it's time for me to shop for some new monitors. In the meantime, however, 1080p is what I am interested in.

And from the opposite side of the spectrum, I haven't used a 1080p monitor in over a decade and a half. 1080p is not only the very last resolution I'm interested in, it doesn't even make the list of resolutions I'm interested in. You may as well game at 640x480 if you're going to game at 1080p


wow that hit me right in the feels.. my 1080p monitors are crying now because of you..

jk i'm just a cheap bastard, otherwise i'd probably grab some 1440p monitors. but i agree, once you go 1440p+ there's no going back to 1080p, that's for damn sure.


It's just my .02

I'm an odd duck...My perspective on the whole "What's the best res to play at?" issue is: There's 1 item in your computer you interact with no matter what you're doing....surfing, streaming, compiling, gaming, defragging an old spinner, watching teh pr0n, whatever....

It's your monitor. It should be the primary item in your build since supporting the resolution/settings you want to play at will determine every other component you use in your build.

When I build a system I decide what res and features I want to play at, then choose my monitor and, from there, the rest of the parts. When I get to the hardware choices on the system side, I start with the power supply since it's the one item in a build which impacts everything else in the build...


You may as well game at 640x480 if you're going to game at 1080p


Why don’t you go ahead and give that a shot then.


9900k is not cheaper when you include platform cost. As stated you can use a $100 last gen board for the 3900x.
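A quick way to sanity check the platform cost argument is to total the parts. This is only a sketch: the $449 and $499 CPU prices and the $100 last-gen board come from posts in this thread, while the Z390 board and aftermarket cooler prices below are placeholders I made up, so swap in real quotes before drawing conclusions.

```python
def build_cost(cpu: int, board: int, cooler: int = 0) -> int:
    """Total in USD for the CPU, motherboard and (if not bundled) a cooler."""
    return cpu + board + cooler


# Figures quoted in this thread: 3900X with its bundled cooler on a last-gen board.
amd = build_cost(cpu=499, board=100)

# 9900K price is from the thread; board and cooler prices are PLACEHOLDERS, not quotes.
intel = build_cost(cpu=449, board=150, cooler=50)

print(f"3900X build: ${amd}, 9900K build: ${intel}")
```

With those placeholder numbers the $50 CPU price gap is gone once the cooler is counted. Different board or cooler choices can swing it either way.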

I’d like to know what games you play at what resolutions when you claim the 9900k is better for gaming. If it’s anything above 1080p it’s a wash.

People who think you can only be CPU limited at 1080p must have really slow computers...

I game at 1440p and spend ~10% of my time ripping DVDs (encoding, sorta). Explain to me why 9900K is the better purchase please.

This is the part so many miss. Z390 is a dead end, 9900K is the last "upgrade" for it. Want anything faster? Another $200+ for the mobo you go.

If we're going to criticize the cost of X570, then let's at least acknowledge that Zen 3 (Ryzen 4000) is guaranteed to be supported, and possibly even Zen 4 (Ryzen 5000). Good luck trying to do drop-in upgrades with Intel lol.


Where is Zen 3 guaranteed to be supported? AMD has stated that AM4 will be supported until 2020. Zen 3 desktop products will probably be released in late 2020 to early 2021 since the server (Milan) products are slated for mid-2020. There's a good chance that Ryzen 3000 is the end of the road for AM4.


"Nah. As I said, I haven't used 1080p (or lower) in 15+ years."

Then maybe you shouldn’t be so asinine as to compare it to 640x480. To view them as the same paints you as extremely ignorant.

Why don't you try it first and create a thread about it.

This is true. I kind of feel like an idiot moving up and leaving myself no upgrade path. But, I'm still on Sandy with DDR3, so I obviously keep my stuff for quite a while. If I wait any longer, I'll be skipping an entire memory generation.

I know. I played CS for years (~1999 to ~2005) and ran 3 of the most popular public servers on the East coast for many years.

When you said "competitive" I thought you meant the narrow subset of people who actually played it in actual competitions, not just for fun.

At least in my time we were still trying to enjoy the game, liked whatever the graphical quality we could get and weren't trying to manipulate and disable whatever graphics settings we could all in some misguided attempt to get some ridiculous framerate.

One stupid trend I see kids doing now is playing in a 4:3 resolution stretched to 16:9 in some misguided effort to make it easier to aim and get an advantage.

The "gamer" kids these days are so dumb it just makes me want to repeatedly bash my head against a wall.

Considering Zen 2 desktop released before Rome (Epyc 2), I'm not sure that conclusion holds. To be clear I'm not saying Zen 3 desktop will release before Milan, but at this point I see no evidence pointing to Ryzen 3000 being end of the road for AM4.

Fair enough. I guess I read AMD's statement as AM4 will be supported until end of 2020, in which case Ryzen 4000 will most likely make the cut.

Fack you very much Dan_D, now what am I supposed to upgrade to?

Z390 is dead, and I absolutely do not want a 14nm++++ chip, so Intel's out until 2021 at least. Meanwhile I'd really hate to be on the tail-end of AM4, so I guess one more year of waiting it is.

Question, Dan: when you say 'doesn't hit advertised boost', do you mean on all cores or on a single core?

https://www.newegg.com/amd-ryzen-7-3800x/p/N82E16819113104

Pros: - As manual OC gives much less performance in a lot of cases (especially gaming) for Zen 2, PBO2 now plays a huge role, and a lot of people don't see the point of getting a 3800x, but the advantage of the 3800x compared to the 3700x is that they are better binned and have a higher boost clock and higher TDP. If you don't mind the extra premium, it will boost 100-200 MHz higher than the 3700X. You won't see a huge difference compared to the 3700X though: usually a 5-10 FPS gain, in some cases the exact same FPS. It's for people who want to get the top end 8C/16T chip AMD has to offer, and its gaming performance is much closer to the 3900X.

Cons: - Causing fire in Intel's HC

Let me be clear on how this works.

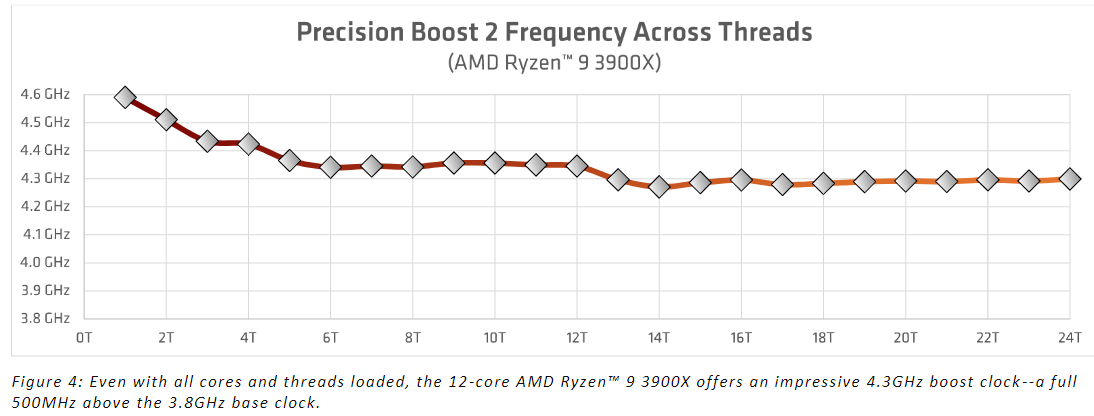

There is no specific all core boost clock in the traditional sense. There is no guaranteed frequency that these CPUs will do in multi-threaded applications. The Precision Boost 2 algorithm attempts to boost loaded core clocks as high as possible until power or thermal limits are reached. These limits can be from VRM output, socket power, or CPU / VRM thermal limits. The way thermals ramp up under load basically has the CPUs even out their clock speeds across all cores. Where these stop is going to depend on the specific workload, power and thermal conditions. To some extent, the quality of your specific CPU will come into play as well. Under PBO, you can defer to the motherboard's PPT, EDC and TDC values, or you can manually adjust these. Even if you go ham on the limits and input stupidly high values, the CPU is still at least partially governed by its own internal values. While PPT, EDC and TDC can effectively be overridden, the CPU still has an OEM max clock speed limit programmed into the CPU.
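For anyone who finds it easier to read as code, here is a toy model of the behavior described above. To be clear, this is NOT AMD's actual Precision Boost 2 algorithm: the `Limits` fields, the 25 MHz step, the limit values and the linear power model are all assumptions picked for illustration. It only shows the general idea of raising the clock until PPT, TDC, EDC or temperature is hit, with a hard frequency ceiling that raising the other limits cannot remove.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Limits:
    ppt_watts: float   # package power limit
    tdc_amps: float    # sustained current limit
    edc_amps: float    # peak current limit
    max_temp_c: float  # thermal limit
    fmax_mhz: int      # max clock programmed into the CPU


# telemetry(clock_mhz) -> (watts, sustained amps, peak amps, temperature in C)
Telemetry = Callable[[int], Tuple[float, float, float, float]]


def boost_clock(base_mhz: int, limits: Limits, telemetry: Telemetry,
                step_mhz: int = 25) -> int:
    """Step the clock up until the next step would break a limit or exceed fmax."""
    clock = base_mhz
    while clock + step_mhz <= limits.fmax_mhz:
        watts, tdc, edc, temp = telemetry(clock + step_mhz)
        if (watts > limits.ppt_watts or tdc > limits.tdc_amps
                or edc > limits.edc_amps or temp > limits.max_temp_c):
            break
        clock += step_mhz
    return clock


def toy_telemetry(clock_mhz: int) -> Tuple[float, float, float, float]:
    """Made-up load model: power scales linearly with clock, the rest stays flat."""
    return (clock_mhz / 30, 60.0, 90.0, 70.0)


stock = Limits(ppt_watts=142, tdc_amps=95, edc_amps=140, max_temp_c=95, fmax_mhz=4600)
maxed = Limits(ppt_watts=1000, tdc_amps=1000, edc_amps=1000, max_temp_c=95, fmax_mhz=4600)

print(boost_clock(3800, stock, toy_telemetry))  # stops at the power limit
print(boost_clock(3800, maxed, toy_telemetry))  # stops at fmax regardless
```

In this model, cranking the power and current limits only helps until the programmed max clock becomes the ceiling, which is the point about the CPU still being governed by its own internal values.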

Here is data provided by AMD on their tests. I can confirm this is pretty much the case and it's roughly in line with what I saw on a manual all core overclock. I typically saw boost clocks under load that were a bit lower than these, but that can come down to a variety of things: ambient temperatures in my office, specific board BIOS settings, and motherboard design. Some reviewers like Gamers Nexus reported better clocks than I saw, but the GIGABYTE Aorus Master they used has a better VRM design than the MSI does. Or at least, one that's capable of outputting more power with less heat. I believe this is the case, but I haven't confirmed the design details. This is something I saw or heard mentioned somewhere.

View attachment 173117

More specifically, AGESA Code 1.0.0.3 patch A isn't as aggressive as 1.0.0.3 patch AB as far as clocks go. The review BIOS revisions were patch A, not AB. MSI has provided me with an unreleased internal copy of its latest BIOS using AGESA code 1.0.0.3 AB, which I'm in the process of testing, but the fact of the matter is, I'm not seeing any real improvement in boost clocks. Keep in mind that boost clocks are never guaranteed. According to Ryzen Master, using PB2, I'm hitting the TDC, EDC, and PPT limits as it is. Therefore, it isn't going to go beyond the 4.425GHz I'm seeing now. It doesn't do this single thread either, but that could easily be my silicon just not being that great. The interesting thing about the new Ryzen Master is that you can actually see what each core is doing. You can even watch the CPU shift to using a different core and trying to boost it higher. You can also see cores go into sleep mode, or wake up and run at various frequencies.

In short, my specific 3900X does not run at the advertised 4.6GHz boost clock. That clock is generally a single core boost clock. No "all core" boost clock is advertised anywhere for these CPUs. That said, expecting around 4.0-4.3GHz on all cores is reasonable. One thing I'll restate that I said in my review is this: I don't think manual overclocking is the way to go with these chips. I think your best bet is to buy the best motherboard and cooling you are willing to pay for and use PBO or PBO with the +offset to achieve your best results. I have been able to achieve 4.5GHz in single threaded applications with PBO+200MHz offset. So I have gotten close.

That said, I do not think the difference in clock speeds would alter the bottom line on these CPUs. I will verify this, but I don't think it will. The fact is, the all core overclocking and performance under multi-threaded workloads are unaffected by this issue and therefore said data is 100% accurate as it stands. Single threaded or lightly threaded applications might end up with a boost, but per MSI on AMD's AGESA code, performance can vary due to other variables in the code, and seeing a higher clock speed in CPU-Z or Ryzen Master does not guarantee better performance. I've only run Cinebench so far, and under PB2, I've seen no impact to the test results.
