
Power Delivered:

P = I*V

Power Dissipated (in the resistors):

P = R*I^2

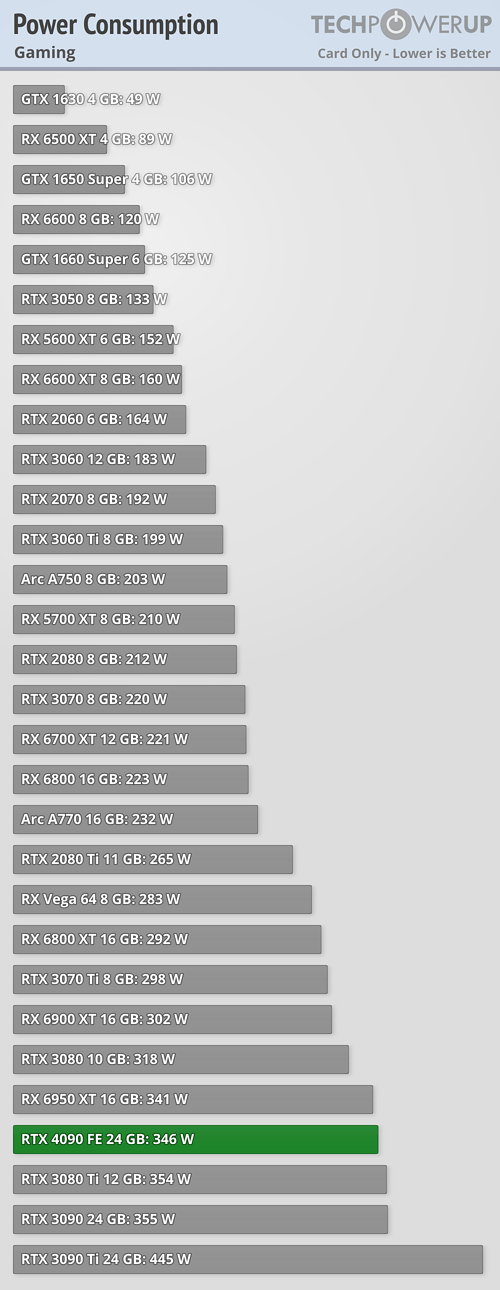

Don't your words and your picture say two different things? If both equations are correct, then Power Delivered = Power Dissipated: both are expressions for the same P, so I can set them equal and arrive at Delivered = Dissipated. That assumes we're not taking large portions of the power and converting it into some other form of energy, like radio waves or other types of emitted radiation. I guess my comment should have pointed to CPUs, as probably north of 95% of the power draw ends up as waste heat warming your room.
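To put numbers on it, here is a minimal sketch for a purely resistive load. The 12 V and 6 ohm values and the function names are made up for illustration, and it assumes Ohm's law (V = I*R) holds and nothing is radiated away or stored:

```python
def power_delivered(current_a, voltage_v):
    """P = I*V: power delivered to the load."""
    return current_a * voltage_v


def power_dissipated(resistance_ohm, current_a):
    """P = R*I^2: power dissipated in the resistor."""
    return resistance_ohm * current_a ** 2


# Hypothetical example values, not taken from the thread.
voltage_v = 12.0
resistance_ohm = 6.0
current_a = voltage_v / resistance_ohm  # Ohm's law: I = V / R

delivered = power_delivered(current_a, voltage_v)
dissipated = power_dissipated(resistance_ohm, current_a)
print(delivered, dissipated)  # prints "24.0 24.0"
```

For a load that is all resistance the two numbers match, which is the point: the two formulas describe the same power. They would only differ if part of the delivered power left as something other than heat in the resistors.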

