kramnelis (Gawd; joined Jun 30, 2022; 890 messages)

Funny, sRGB is the lowest standard, one that only looks good enough for office documents. Yet you are not even viewing the most accurate sRGB, since that requires 80 nits. So your accuracy standard is in fact lower than the lowest, lol. It just proves that your eyes want to see something better, whatever excuse you type.

sRGB is still *the* standard on PC (as well as phones, etc.) for most everyday applications, including things like photos, where it's still the default. While many modern PCs and monitors support wide gamuts on some level, the Internet is still overwhelmingly sRGB-based. That includes everything from commercial sites to photo and artistic websites and collections to many games; it's a LOT more than just office work, as some have claimed. Anything with a wider gamut is still the exception, not the rule.

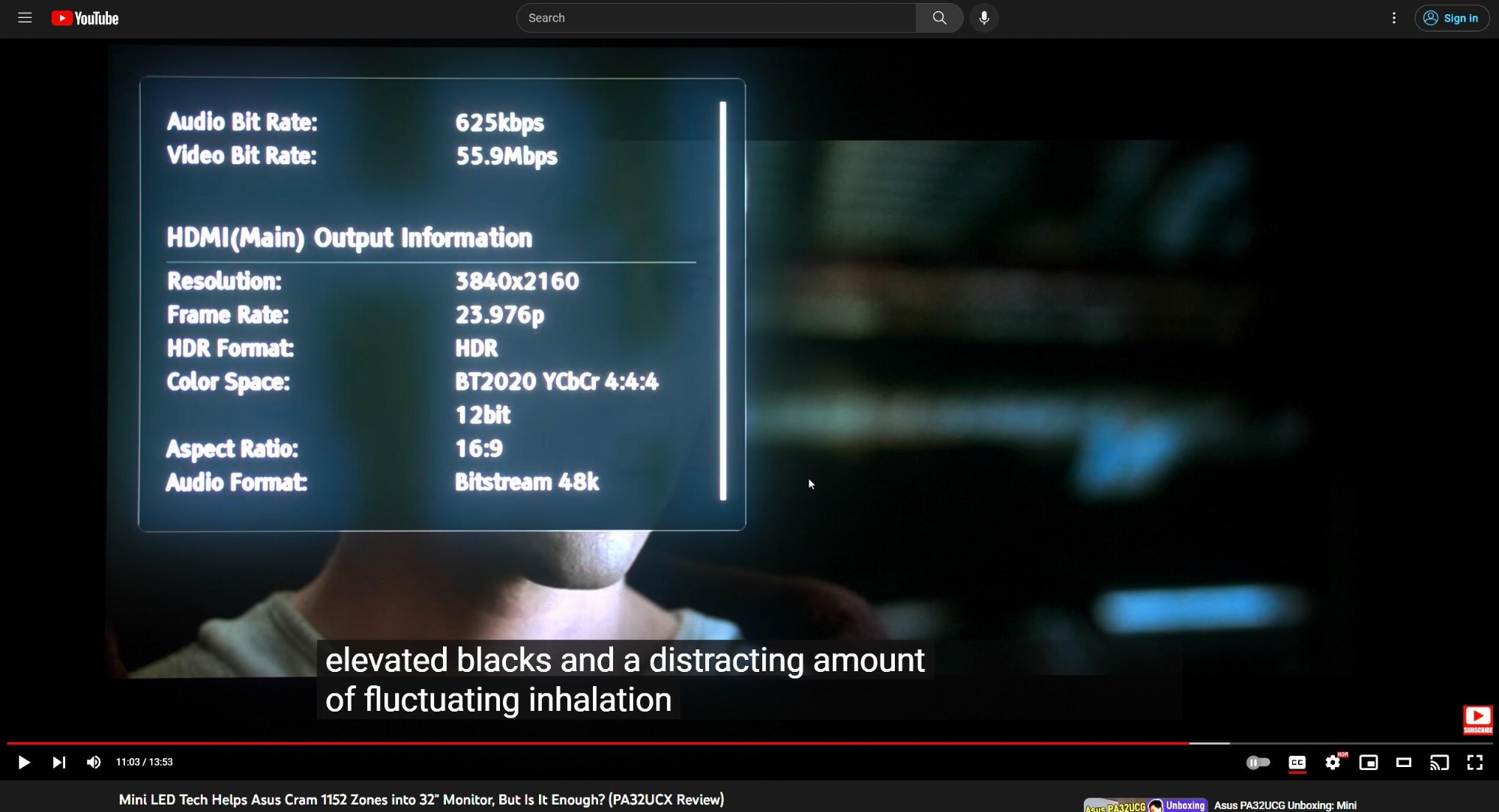

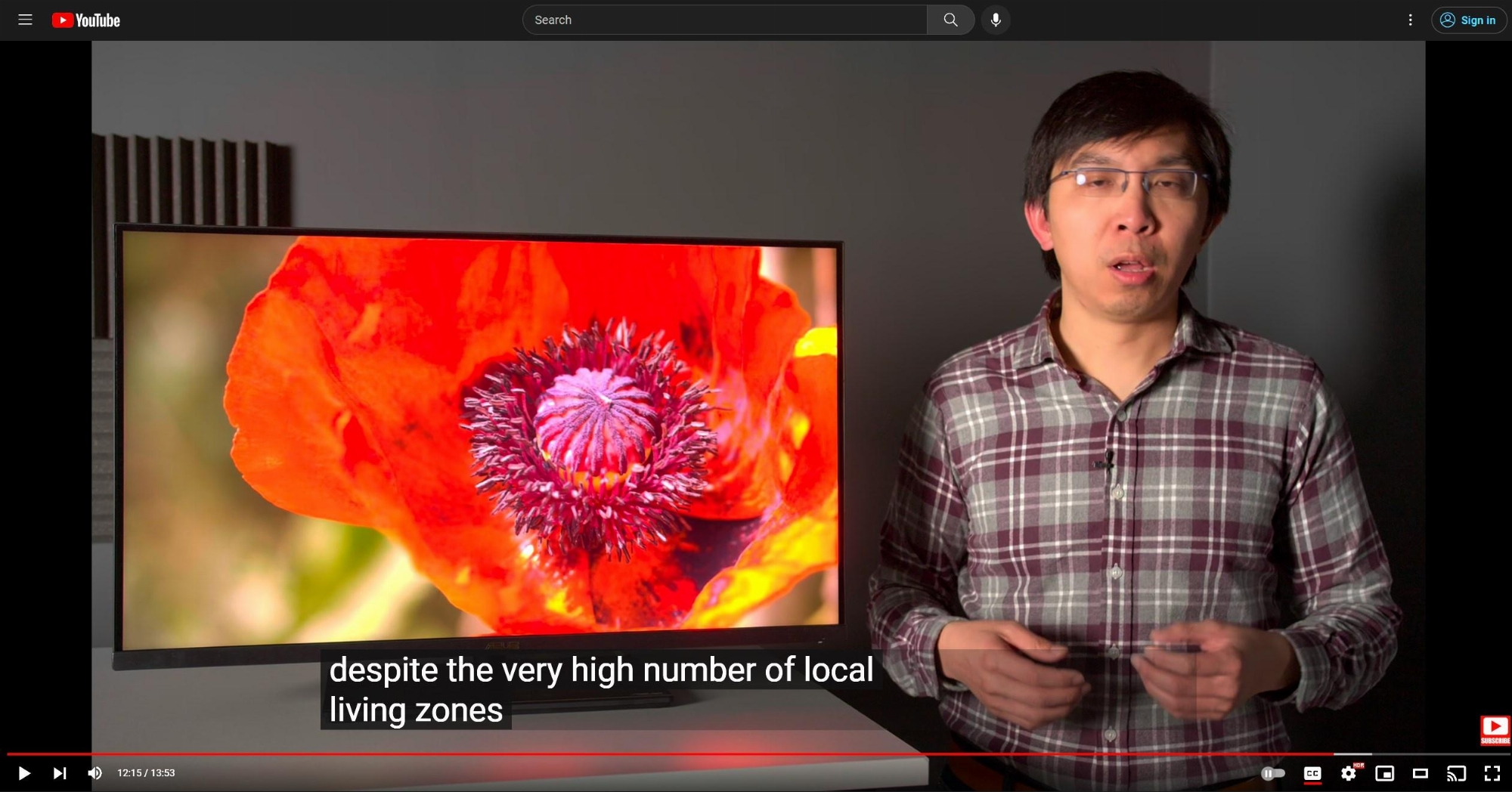

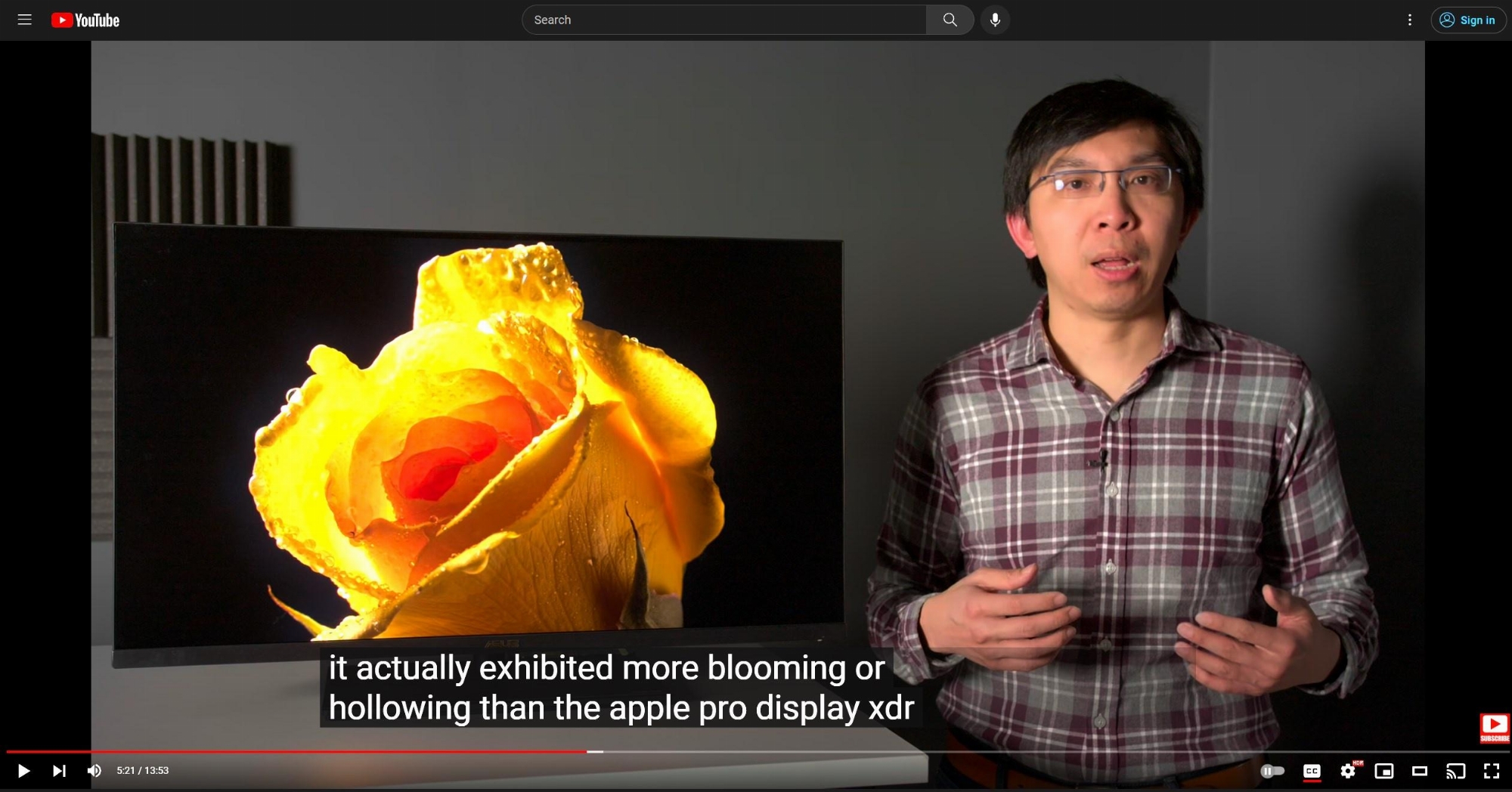

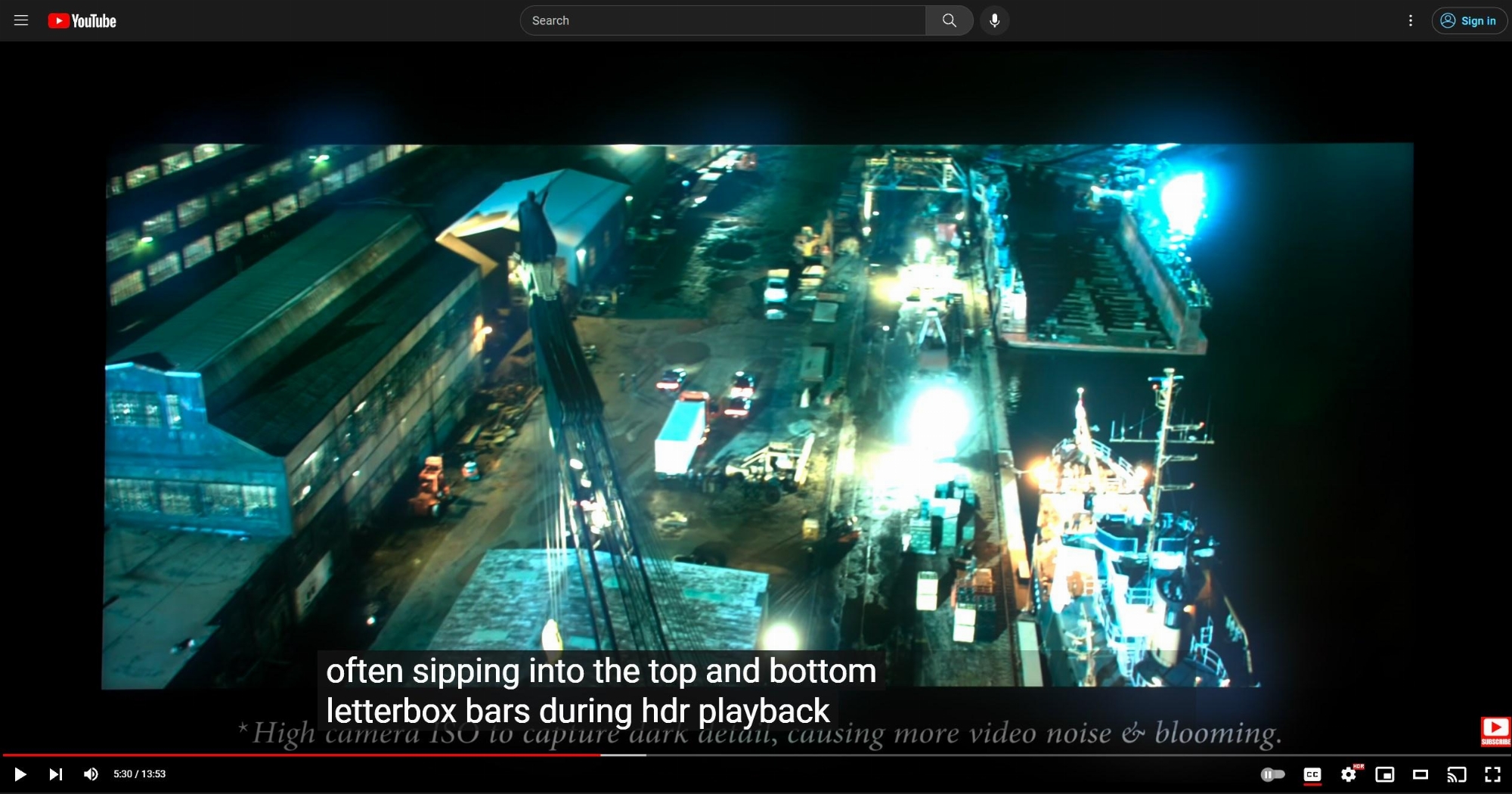

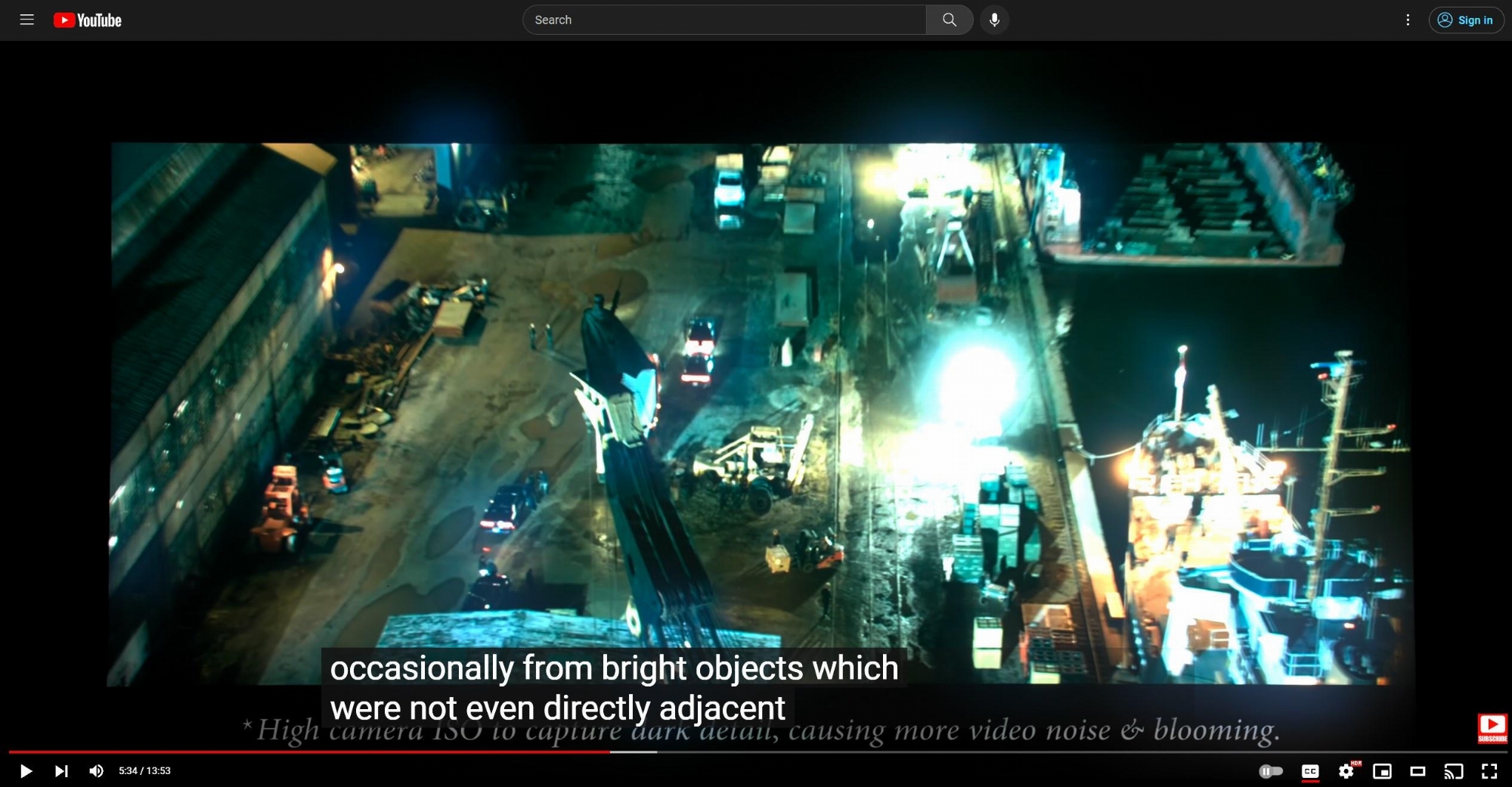

Of course you will cite poor HDR implementations as another excuse, since your OLED's accuracy falls far short of what HDR requires.

HDR is the future, but its implementation is still fairly inconsistent and can be very hit or miss, not to mention the various competing formats and standards. In short, HDR looks great when done properly, but there are a lot of caveats and poor implementations of it as well. There are also adoption problems, particularly among older people who don't understand it. I sometimes help older people with AV setup, and most of them have no idea HDR even exists, let alone how to make sure their TV is set up properly or shows it in the right preset. Younger people tend to have fewer problems, but range switching still has a ways to go before it's truly simple. The system in Windows 11 is a lot better than 10's but still somewhat cumbersome. And because there are all these competing technologies, most displays need some form of tone-mapping setup; displays that can show high-nit HDR without tone mapping are also the exception at the moment.
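To make the tone-mapping point concrete, here is a minimal sketch, assuming HDR10-style content encoded with the SMPTE ST 2084 (PQ) curve. `pq_to_nits` decodes a normalized PQ signal to absolute luminance (PQ is defined up to 10,000 nits). `tone_map` is a hypothetical soft-knee roll-off of my own choosing, not what any particular TV or monitor actually does: it passes luminance through untouched below a knee and compresses everything above it so the output approaches, but never exceeds, the panel's peak. The 400-nit peak in the usage lines is likewise just an illustrative assumption.

```python
# SMPTE ST 2084 (PQ) constants
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_to_nits(e: float) -> float:
    """Decode a normalized PQ signal (0..1) to absolute luminance in nits."""
    p = e ** (1 / M2)
    return 10000.0 * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def tone_map(nits: float, peak: float, knee_fraction: float = 0.75) -> float:
    """Hypothetical soft-knee roll-off (illustrative, not any vendor's algorithm).

    Below the knee, luminance passes through unchanged; above it, the excess
    is squeezed into the remaining headroom so the result stays below `peak`.
    """
    knee = knee_fraction * peak
    if nits <= knee:
        return nits
    excess = nits - knee
    headroom = peak - knee
    return knee + headroom * excess / (excess + headroom)


if __name__ == "__main__":
    panel_peak = 400.0  # assumed display peak in nits
    for signal in (0.508, 0.75, 0.9, 1.0):
        mastered = pq_to_nits(signal)
        shown = tone_map(mastered, panel_peak)
        print(f"PQ {signal:.3f}: mastered {mastered:8.1f} nits -> shown {shown:6.1f} nits")
```

A display without enough peak brightness has to make some choice like this; a hard clip instead would simply discard every highlight above the peak, which is one reason implementations vary so much in practice.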

It's very simple to see HDR mode. You just need to turn on HDR to see HDR-graded material. It's that simple.

But it's not simple to see HDR when not every display, especially OLED, can display it.

Yet "HDR Effect" SDR still looks better than the dim "HDR" on the 27GR95QE. That is exactly why wide-gamut SDR is, ironically, useful. And I don't even need to use FALD to prove this. The OLED's own users prove it.

"HDR Effect" modes are pseudo-HDR modes included on many TVs and monitors; they have little to do with native HDR support, which many OLEDs, including mine, do have. They're for people who like the look and want the equivalent of Windows Auto HDR at the display level instead of in software. Personally, I'd never use one, as I want to see sRGB content as close to its source range as possible and find that visually preferable, just as I don't use Auto HDR on Windows 11, but it's nice for the people who do like it.

160 nits is nowhere near as bright as 400-nit wide-gamut SDR. But again, if you valued sRGB accuracy, you would have used sRGB at 80 nits. Obviously your eyes don't value accuracy that much, because those aren't better images to your eyes.

SDR is plenty bright on my OLED. (After calibration, I'm at 84 brightness, as I didn't need the full 100 to reach my target of 160 nits in a 10% window.) HDR is bright enough for me in real-world use and provides a nice level of pop, along with 10-bit color for bright details. In games, once set up, it looks quite good and I have no real complaints. It'll be nice to eventually have more brightness headroom in a future monitor as HDR evolves, but for now it's enough for me to enjoy it.
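For a rough sense of what the 80 vs 160 vs 400 nit numbers mean for the same SDR content, here is a minimal sketch. It assumes the display decodes with the piecewise sRGB curve from IEC 61966-2-1 (many real monitors use a pure 2.2 power instead) and that luminance simply scales with the calibrated white level. `srgb_to_linear` and `code_to_nits` are illustrative helper names, and the sketch models luminance only, not gamut, so it says nothing about how wide-gamut SDR shifts colors.

```python
def srgb_to_linear(v: float) -> float:
    """Decode a normalized sRGB value (0..1) with the piecewise sRGB curve."""
    if v <= 0.04045:
        return v / 12.92
    return ((v + 0.055) / 1.055) ** 2.4


def code_to_nits(code: int, white_nits: float, bits: int = 8) -> float:
    """Luminance of a grey code value on a display whose white is `white_nits`.

    Assumes the whole tone curve scales linearly with the white level.
    """
    max_code = (1 << bits) - 1
    return white_nits * srgb_to_linear(code / max_code)


if __name__ == "__main__":
    # 80 nits is the sRGB reference display white; 160 and 400 are the
    # levels discussed in this thread.
    for white in (80.0, 160.0, 400.0):
        mid = code_to_nits(128, white)
        print(f"white {white:5.0f} nits: mid-grey (128) = {mid:6.1f} nits")
```

Under these assumptions, raising white from 80 to 160 nits doubles the luminance of every grey level rather than changing the tone curve itself, which is why a brighter calibration target is a viewing-environment choice more than a change in accuracy.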
