Best Gaming CPU

- Thread starter kill8r

- Start date

10700k is best bang for buck, with 10900k being the best no-holds-barred option.

BTW, have you seen the latest DDC fitting cpu block/pump/res setups from barrow? Perfect for ITX/SFF

https://m.aliexpress.com/item/10000...tedetail&spm=a2g0s.9042311.0.0.19ce4c4dF6G4ed

CEO_OF_CBT

Limp Gawd

- Joined

- Jul 2, 2020

- Messages

- 212

Would concur on this, because the next-gen consoles are 8 cores/16 threads.

10700k for bang for buck.

10900k for all out.

Ok, going for 10900k unless AMD has a better option?

CEO_OF_CBT

Limp Gawd

- Joined

- Jul 2, 2020

- Messages

- 212

I would say wait if you can, because PCIe 4.0 is a thing and Comet Lake doesn't have it.

2080ti already maxes out pcie3x16

Ok, thank you, and I will. Any idea how long of a wait?

Ok, going for 10900k unless AMD has a better option?

AMD does not currently have a better option if gaming is all you care about.

But AMD supports PCIe 4.0, if I am not mistaken, and Intel 10th gen CPUs do not. I could be wrong here, but this could matter greatly.

2080ti already maxes out pcie3x16

please back up this statement with figures.

But AMD supports pcie4 if I am not mistaken and Intel 10th gen cpus do not. I could be wrong here but this could matter greatly.

It's literally never mattered in previous generations.

CEO_OF_CBT

Limp Gawd

- Joined

- Jul 2, 2020

- Messages

- 212

https://www.techpowerup.com/review/nvidia-geforce-rtx-2080-ti-pci-express-scaling/3.html

yes but you are saying it is saturating 3.0x16, how can you know that when it is only capable of 3.0x16?

CEO_OF_CBT

Limp Gawd

- Joined

- Jul 2, 2020

- Messages

- 212

With PCIe 4.0, the 3070 (2080 Ti level, if marketing is to be believed) might be able to leverage more bandwidth.

just a thought

Denpepe

2[H]4U

- Joined

- Oct 26, 2015

- Messages

- 2,279

the article only proves it saturates PCIe 3.0 at x8 speeds, not x16

SnowBeast

[H]ard|Gawd

- Joined

- Aug 3, 2003

- Messages

- 1,312

Seems Z490 chipsets support 4.0. Intel just has to drop the chip with it. That's Rocket Lake, correct?

https://itigic.com/all-compatible-models-of-z490-boards-with-pcie-4-0/

I currently use and like AMD CPUs; however, I see no reason to make PCIe 4.0 a sticking point.

https://www.anandtech.com/show/1605...re-for-gaming-starting-with-rtx-3080-rtx-3090

As for the performance impact from PCIe 4.0, we’re not expecting much of a difference at this time, as there’s been very little evidence that Turing cards have been limited by PCIe 3.0 speeds – even PCIe 3.0 x8 has proven to be sufficient in most cases. Ampere’s higher performance will undoubtedly drive up the need for more bandwidth, but not by much. Which is likely why even NVIDIA isn’t promoting PCIe 4.0 support terribly hard (though being second to AMD here could very well be a factor).
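For reference, here is a rough sketch of the theoretical link bandwidth being argued about in this thread. It uses the per-lane transfer rates and 128b/130b encoding from the PCIe specifications; the function name is made up for illustration, and the result is a per-direction peak that ignores packet and protocol overhead, so real-world throughput will be lower.

```python
# Per-lane transfer rates in GT/s; PCIe 3.0 and 4.0 both use 128b/130b encoding.
GT_PER_S = {"3.0": 8.0, "4.0": 16.0}
ENCODING_EFFICIENCY = 128 / 130


def pcie_bandwidth_gb_s(gen: str, lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s, before protocol overhead."""
    bits_per_second = GT_PER_S[gen] * 1e9 * ENCODING_EFFICIENCY * lanes
    return bits_per_second / 8 / 1e9


if __name__ == "__main__":
    # The three link configurations discussed in the thread.
    for gen, lanes in [("3.0", 8), ("3.0", 16), ("4.0", 16)]:
        print(f"PCIe {gen} x{lanes}: {pcie_bandwidth_gb_s(gen, lanes):.2f} GB/s")
```

This works out to roughly 7.9 GB/s for 3.0 x8, 15.8 GB/s for 3.0 x16, and 31.5 GB/s for 4.0 x16. A card that shows no slowdown at 3.0 x8 is using well under half of what 4.0 x16 could offer.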

I’m going to differ from other opinions here:

- Get a 3700X

- Get a Gigabyte X570 Aorus Ultra

- Get 32 GB of RAM in two sticks

- 2 of those Sabrent 1-2 TB drives (NVMe)

- A Fractal Design Define R6

- And search the used market for a 2080 Ti

The OP asked what the best gaming CPU is. He said nothing about value. He also asked about 4K and 8K gaming. The 10900K is his best option. It's not the cheapest, but it is the best.

Also for 4K or higher, the 2080 Ti isn't going to be the best option. At that resolution, the 2080 Ti can barely do 60FPS in some games. That means 8K is a no go unless you like running games in potato mode.

The 3080 or 3090 will be better. We just aren't sure by how much yet.

I clearly posted in the wrong thread, sorry

Right now it's the 10700k and 10900k for best gaming performance. If that's all you care about, that's what you should decide between.

That said, new CPUs from AMD should be out soon (Zen 3), and hopefully Ice Lake in the near future, which will add support for PCIe 4.0. That *may* make a few % difference for games (more likely at lower resolutions than you're talking about; at higher resolutions the differences will be less). Honestly though, if you only play at 4K (and some 8K), I think you'll be more GPU constrained than CPU constrained, and I would probably lean towards the 10700k myself.

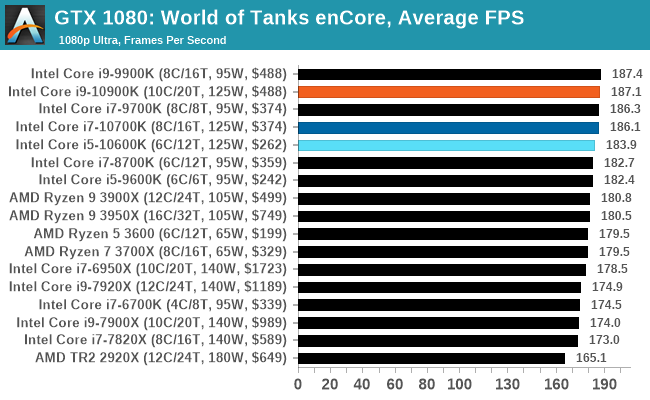

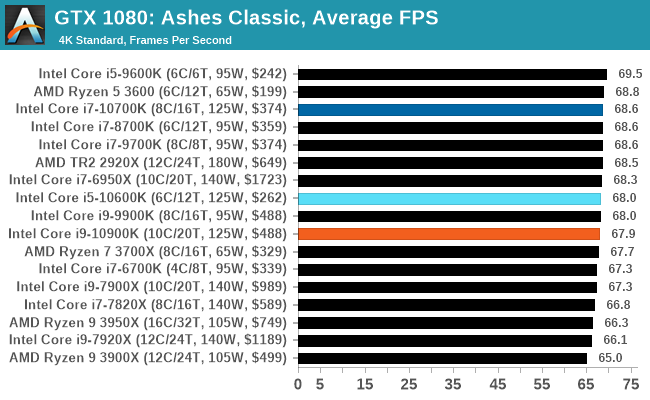

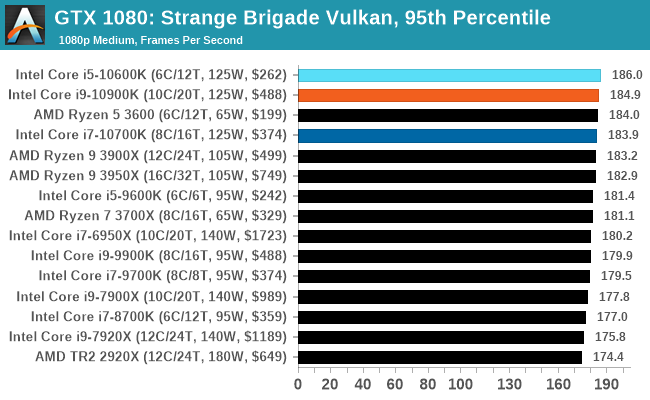

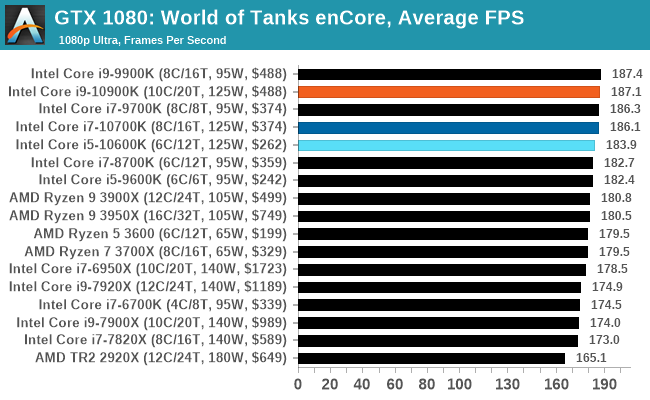

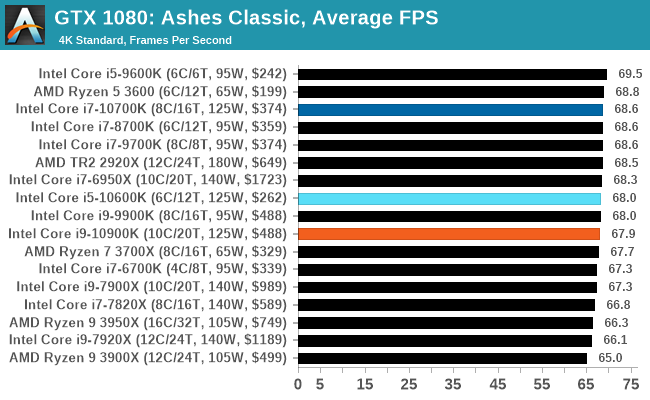

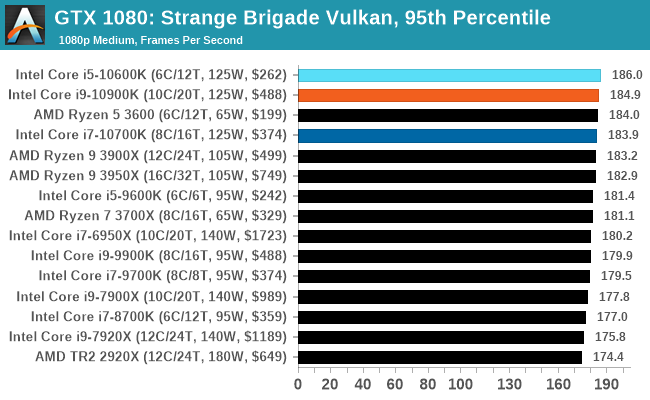

Just an example from a few benchmarks; this is only at 1080p with medium/high details (the last one is 95th percentile, not average). As you can see, even at this lower resolution, where frame rates are higher, there is barely any separation between the 10700k and 10900k, and even the 10600k keeps up just fine. Heck, the Ryzen 3600 is right up there as well, but in general it will fall behind more often (depends heavily on the game). Please do find some charts (from multiple reviewers), find the games you like, and see what the differences are. Spending more $$ doesn't always get you more performance if the part isn't matched to your workload. For example, a 3950x wouldn't make sense for a pure gaming build, but it is great if you need cores.

https://www.anandtech.com/show/15785/the-intel-comet-lake-review-skylake-we-go-again/16

endlesszeal

Limp Gawd

- Joined

- Jul 4, 2007

- Messages

- 135

I read the same conclusion... odd how it was interpreted as “maxes out pcie3.0 x16”.

If anything, extrapolating that data means PCIe 3.0 x16 still has some legs before moving up to 4.0 is required.

somebrains

[H]ard|Gawd

- Joined

- Nov 10, 2013

- Messages

- 1,668

Haven't checked CPU performance for some time now, but damn AMD's best isn't even an i5 in gaming anymore. Just going by the crazy hype alone, you would think that AMD is the one on top lol!

You can do what I did when I needed to dig into Kubernetes, build yourself essentially a gaming box.

I saw an MSI X570 + 3700X combo for $300 in my area last week on CL, from a guy that did exactly what I was doing.

Lab out whatever pays the bills, then game on it a bunch to compare it to an Intel build.

Ryzen was ok, but I can feel stutters in frame pacing.

I have a couple visual fx buddies doing same right now, they game on their 3950x and Threadripper 3 builds when they aren’t working.

Some people aren’t so sensitive so $100 Ryzen cpu & $50 or less motherboards are fine.

After I sold the Ryzen parts, I built out my current sig rig.

Gaming, I’m much happier.

kirbyrj

Fully [H]

- Joined

- Feb 1, 2005

- Messages

- 30,694

At this point, we're close enough to Zen3 to see if that is any better than Intel in gaming. I have a 10700 and used a 3600/3600x/3800x/3900x and didn't notice any big differences when gaming at 1440p.

Edit: Caveat...I mostly play SP games, and have a Freesync monitor. I'm not oblivious to the fact that there are differences, but they aren't so noticeable or distracting to me. They might be to others.

FlawleZ

[H]ard|Gawd

- Joined

- Oct 20, 2010

- Messages

- 1,691

Like others said already, we're close enough now to Zen 3 that it would be senseless to go all out on what's available today when soon we will have faster options.

Wait for Zen3.

I have decided to wait for Zen3, along with a revision to the SFF cases to accommodate the 3090. I am hoping for an n1 rev7.

Nenu

[H]ardened

- Joined

- Apr 28, 2007

- Messages

- 20,315

The 10850K looks to have the 10900K pipped.

https://www.techpowerup.com/review/intel-core-i9-10850k/

Power consumption

https://www.techpowerup.com/review/intel-core-i9-10850k/18.html

vs 10900K

https://www.techpowerup.com/review/intel-core-i9-10850k/21.html

And is cheaper.

somebrains

[H]ard|Gawd

- Joined

- Nov 10, 2013

- Messages

- 1,668

My vote for a dedicated gaming build is still 9600k/10600k clocked.

If you can get a mid-tier or better Z370/Z390 board for under $100, you can put the extra $ towards a 3080 vs. a 3070... or a better monitor, which barely anyone mentions when we bench race builds.

MavericK

Zero Cool

- Joined

- Sep 2, 2004

- Messages

- 31,910

10600K or 10700K for Intel - doesn't seem to be a compelling reason to spend $100 more on a 10850K or 10900K for gaming.

Otherwise...wait for Zen 3.

VirtualMirage

Limp Gawd

- Joined

- Nov 29, 2011

- Messages

- 470

Something to keep in mind between Intel and AMD is USB endpoint limitations if you use quite a few USB ports, especially if you use VR. Unless Intel has changed something, their USB 3.x controllers are limited to a maximum of 96 endpoints per controller. AMD's USB 3.x is limited to a maximum of 254 endpoints per controller. This is one of the reasons I switched to AMD for my current build project (among a few other things). With up to 32 endpoints being consumed per device, those resources can be eaten up pretty quickly. Just something to keep in mind that most people probably don't even consider when building out a new machine.

On my current Intel machine, a 6700K, I have frequently run into USB resource errors ever since I bought my Valve Index. Hubs don't help; they actually make it worse, since they will at minimum consume 1 endpoint per port on the hub whether it is used or not. Even if you are using ports just for RGB or charging, they will eat up some of those endpoints. One of the workarounds for this is to disable XHCI, but then you drop your USB ports down to 2.0. Another option is to invest in an additional USB controller, but that doesn't work if you are doing an SFF or compact build like I tend to do.

I would like to think that I don't have too many devices, and have even unplugged some devices to free up resources:

- Razer BlackWidow Elite (2 USB ports)

- Razer Basilisk wireless mouse/dock (1 USB port)

- Razer Firefly V2 mousepad (1 USB port)

- XBox 360 controller (1 USB port)

- Epson V500 Photo Scanner (1 USB port)

- 7 port USB 3.0 hub, only using two ports to charge Valve Index controllers (1 USB port)

- Valve Index (1 USB port)

- APC UPS (1 USB port)

- USB Hard drive dock or camera card reader (1 USB port, only plugged in when using)
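To put the numbers from this post together, here is a minimal sketch of an endpoint budget check. The 96-endpoint limit and the 32-endpoints-per-device ceiling are the figures quoted above and are not verified against any datasheet; the `UsbDevice` type and `endpoint_budget` function are hypothetical names for illustration. Real per-device counts would have to come from each device's USB descriptors.

```python
from typing import NamedTuple


class UsbDevice(NamedTuple):
    name: str
    endpoints: int  # hypothetical value; real counts come from device descriptors


def endpoint_budget(devices: list[UsbDevice], controller_limit: int) -> tuple[int, int]:
    """Return (endpoints used, endpoints remaining) for a single controller."""
    used = sum(d.endpoints for d in devices)
    return used, controller_limit - used


INTEL_LIMIT = 96     # per-controller figure quoted in the post, unverified
MAX_PER_DEVICE = 32  # upper bound per device quoted in the post

# Worst case: each of the 10 occupied ports in the list above uses the maximum.
worst_case = [UsbDevice(f"port {i}", MAX_PER_DEVICE) for i in range(1, 11)]
used, remaining = endpoint_budget(worst_case, INTEL_LIMIT)
print(f"used={used}, remaining={remaining}")

# How many worst-case devices fit before the controller runs out.
print(INTEL_LIMIT // MAX_PER_DEVICE)
```

Under those assumptions only three worst-case devices fit on one controller, which is why a modest-looking device list can still trigger resource errors.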

MavericK

Zero Cool

- Joined

- Sep 2, 2004

- Messages

- 31,910

I have had similar issues on my rig - however, I can't find any data supporting the Intel vs. AMD thing you mentioned on this front.

VirtualMirage

Limp Gawd

- Joined

- Nov 29, 2011

- Messages

- 470

I have been trying to find a whitepaper on this, but all I have found through my searching is a couple of tech blogs that discuss USB endpoint usage with XHCI, and some forum/reddit posts of users discussing the USB endpoint counts between Intel and AMD and the problems it causes. I can't find a direct source on whether current chipsets from Intel still exhibit the problem or not.

Also, my initial count on the AMD total endpoints per controller might be off. Some links I have read mentioned a total of 254 endpoints per controller for AMD while others mentioned 127 endpoints per controller. The confusion here is because the AMD CPUs have a USB controller on both the processor and on the motherboard chipset. If each controller has 127 endpoints, then it would total 254 endpoints between the two of them. Depending on how the CPU and chipset handle these in regards to the ports on the motherboard, I am not sure if it counts as a total pool of 254 endpoints or if a certain set of USB ports are allocated to one pool of 127 endpoints while the other set of USB ports are allocated to the other 127 endpoints.
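The difference between the two possibilities can be sketched as follows. This is only an illustration of the pooled vs. split question, assuming 127 endpoints per controller as mentioned above; the function names and the per-device endpoint counts are made up, and it does not describe how any actual AMD platform allocates endpoints.

```python
def fits_pooled(port_groups: list[list[int]], per_controller: int = 127) -> bool:
    """All ports draw from one shared pool across every controller."""
    total = sum(sum(group) for group in port_groups)
    return total <= per_controller * len(port_groups)


def fits_split(port_groups: list[list[int]], per_controller: int = 127) -> bool:
    """Each group of ports is tied to its own controller and its own limit."""
    return all(sum(group) <= per_controller for group in port_groups)


# Hypothetical endpoint counts for devices on CPU-attached and chipset-attached ports.
cpu_ports = [32, 32, 32, 32, 16]  # 144 endpoints in total
chipset_ports = [16, 8, 4]        # 28 endpoints in total
groups = [cpu_ports, chipset_ports]

print(fits_pooled(groups))  # 172 <= 254
print(fits_split(groups))   # 144 > 127 on the CPU-attached ports
```

With a shared pool this device layout fits; with split pools it does not, unless some devices are moved to the chipset-attached ports. So which model applies would change which ports you plug things into.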
