elvn

Supreme [H]ardness

- Joined: May 5, 2006
- Messages: 5,339

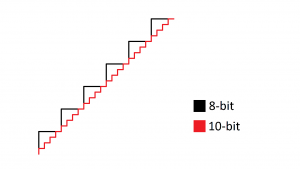

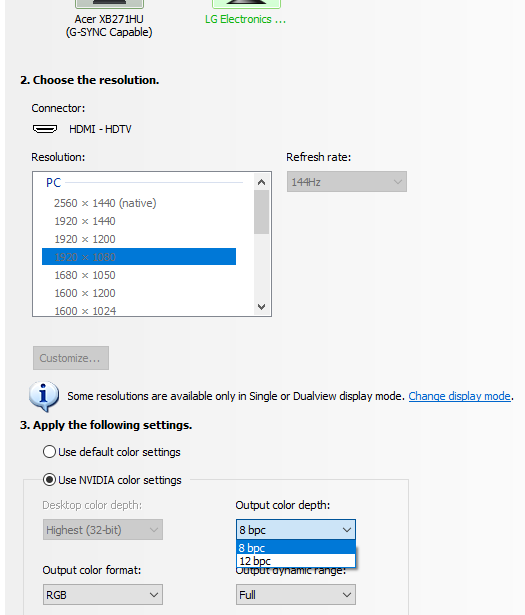

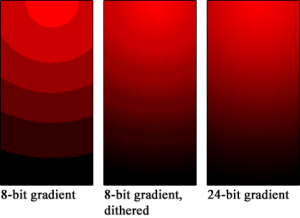

I think you'd have to take the same 10-bit capable monitor and feed it 10-bit images and 10-bit renders over a 10-bit signal from, for example, an AMD GPU (or Nvidia Studio drivers in Photoshop), then compare the same material sent to the same 10-bit capable monitor model driven by the regular Nvidia gaming drivers. The Nvidia gaming output would be below 10-bit, so it would rely on dithering. So you'd be comparing dithering, which smooths the image to hide banding, against an accurate per-pixel 10-bit source pipeline. Dithering a gradient to smooth out "rough" banding lines might look "ok", but it isn't accurate to the source material.
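To make the dithering vs. true 10-bit point concrete, here is a rough sketch in plain Python. It is only an illustration under stated assumptions: a simple random dither stands in for whatever the drivers actually do (their real algorithms aren't described here), and the 1024-pixel ramp, the function names and the 8-pixel averaging window are all hypothetical choices. It converts an ideal 10-bit gradient to 8 bits two ways, by plain truncation (banding) and by dithering, then checks how many pixels still match the source exactly and how close a blurred, "as the eye sees it" average gets.

```python
import random

WIDTH = 1024  # hypothetical: one pixel per 10-bit code, so the ramp is 0, 1, ..., 1023


def ramp_10bit(width=WIDTH):
    # Ideal 10-bit gradient: each pixel gets its own code value.
    return [round(i * 1023 / (width - 1)) for i in range(width)]


def to_8bit_truncate(src):
    # Drop the two least-significant bits: every 4 neighbouring 10-bit
    # codes collapse onto one 8-bit code, which shows up as flat bands.
    return [v >> 2 for v in src]


def to_8bit_dither(src, seed=0):
    # Simple random dither (a stand-in, not any vendor's algorithm): add
    # uniform noise of up to one 8-bit step before flooring, so a level
    # between two 8-bit codes becomes a mix of both neighbours.
    rng = random.Random(seed)
    return [min(255, int(v / 4 + rng.random())) for v in src]


def exact_fraction(src, out8):
    # Share of pixels whose 8-bit value, scaled back to 10-bit, equals the source.
    return sum(4 * o == v for v, o in zip(src, out8)) / len(src)


def local_average_error(src, out8, window=8):
    # Mean absolute error (in 10-bit codes) after box-averaging `window`
    # pixels: a crude stand-in for the eye blending neighbouring pixels.
    errs = []
    for start in range(0, len(src) - window + 1, window):
        s = sum(src[start:start + window]) / window
        o = sum(4 * x for x in out8[start:start + window]) / window
        errs.append(abs(o - s))
    return sum(errs) / len(errs)


if __name__ == "__main__":
    src = ramp_10bit()
    for name, out in (("truncate", to_8bit_truncate(src)),
                      ("dither", to_8bit_dither(src))):
        print(f"{name:8s} exact pixels: {exact_fraction(src, out):.0%}  "
              f"local-average error: {local_average_error(src, out):.2f}")
```

In this sketch both 8-bit versions reproduce only the pixels whose 10-bit code happens to be a multiple of 4, so neither is per-pixel accurate; the dithered one just averages out closer to the source when blurred, which is why it can look "ok" while still not matching a real 10-bit pipeline.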

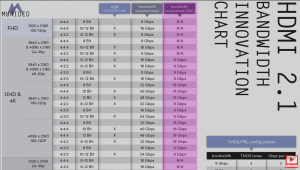

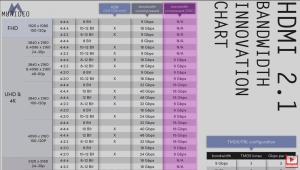

I haven't dug into any examples or 10-bit test material since I don't have such a panel yet. The frustrating thing is, as was mentioned in this thread, that a lot of us thought HDMI 2.1 support on an OLED would finally mean no more compromising with bandwidth limitations and workarounds.

EDIT: Rtings might have some here: https://www.rtings.com/tv/tests/picture-quality/gradient

I think the biggest beef here is that HDMI 2.1 was supposed to be the solution to all our current problems. Today we have to choose between several pros and cons regarding resolution, refresh rate, color bit depth, etc., because you can’t have it all with either HDMI 2.0b or DP 1.4 due to bandwidth limitations.

With the 48 to 40 Gbps downgrade, we still can’t have our cake and eat it too. Yet again we need to fiddle with settings and sacrifice something, and different people will make different choices. This could probably be solved with a TV software update if LG chooses to; maybe the full bandwidth could be unlocked while the TV is in “game mode” if enough people are loud enough about it.
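A back-of-the-envelope sketch of why the 48 to 40 Gbps change matters for bit depth at 4K 120 Hz. It is deliberately a lower bound: it counts active pixels only and ignores blanking intervals, audio and FEC, so real timings need somewhat more. The link figures are the nominal raw rates with their line coding (8b/10b for HDMI 2.0 TMDS and DP 1.4 HBR3, 16b/18b for HDMI 2.1 FRL); the dictionary and function names are just illustrative.

```python
# Rough lower bound on link payload needed for 4K 120 Hz RGB / 4:4:4,
# counting active pixels only (no blanking, audio, or FEC overhead).
WIDTH, HEIGHT, REFRESH_HZ = 3840, 2160, 120

# Nominal raw link rate (Gbps) and line-coding efficiency for each link.
LINKS = {
    "HDMI 2.0 (TMDS, 8b/10b)": (18.0, 8 / 10),
    "DP 1.4 HBR3 (8b/10b)": (32.4, 8 / 10),
    "HDMI 2.1 FRL 40 Gbps (16b/18b)": (40.0, 16 / 18),
    "HDMI 2.1 FRL 48 Gbps (16b/18b)": (48.0, 16 / 18),
}


def active_video_gbps(bits_per_channel, width=WIDTH, height=HEIGHT, hz=REFRESH_HZ):
    # Three colour channels per pixel for RGB / YCbCr 4:4:4.
    return width * height * hz * 3 * bits_per_channel / 1e9


def payload_gbps(raw_gbps, efficiency):
    # Usable data rate left after line-coding overhead.
    return raw_gbps * efficiency


if __name__ == "__main__":
    for bpc in (8, 10, 12):
        need = active_video_gbps(bpc)
        print(f"{bpc}-bit 4:4:4 needs at least {need:.2f} Gbps")
        for name, (raw, eff) in LINKS.items():
            have = payload_gbps(raw, eff)
            # "may fit" because blanking is ignored; "does not fit" is firm,
            # since even the lower bound exceeds the payload.
            verdict = "may fit" if need <= have else "does not fit"
            print(f"    {name}: {have:.2f} Gbps payload -> {verdict}")
```

Even with this optimistic accounting, 12-bit 4:4:4 at 4K 120 Hz (about 35.8 Gbps of active video) exceeds the roughly 35.6 Gbps payload of a 40 Gbps FRL link, while 10-bit still has headroom, and DP 1.4 falls short of 10-bit at that resolution and refresh rate without compression or chroma subsampling.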

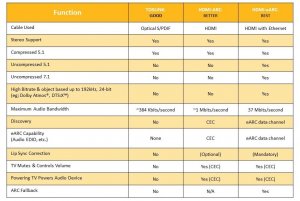

Nvidia could just start supporting 10-bit output in the gaming drivers for its gaming GPUs, and LG could start supporting passthrough of uncompressed HDMI audio formats over eARC on the CX series.

Hopefully they will both come through.
