PhysX is the most ubiquitous physics engine in games today, so that bodes well for DLSS.

Fine, I amended my comment

There is no real competition to drive prices down, so GPUs getting more expensive is inevitable.

Any hopes Nvidia had of getting me to spend money on these died when they settled on the current price points. Nvidia had always priced their new cards more or less around the previous gen's MSRP, but decided to give these new cards a significant bump this time. I'm currently running a 1080, which I got for $669 (CDN) back in November 2017. If the 2080 Ti were closer to $1000 CDN I'd be a little more tempted to bite, but it's currently sitting at $1600 CDN. I originally spent around $1600 on my whole system when I first put it together, not on a single GPU.

U sure?

Anyways, I think price is one of the biggest issues for these cards right now.

To have raytracing in games you first need actual hardware to run it on.

Yes, some day ray tracing may be the thing, but today it is still pretty much the end goal it was in the '80s and '90s, albeit slightly closer. Once we have ray tracing actually implemented on a broad scale, not just a shitty EA game and some vague promises of it, we will be there. Currently we are not.

Blasphemy!!!

Personally it doesn't matter much to me, but I'm one of those crazy people that would rather just turn the settings down in a game or play something older rather than spend a ton of money on a fancy new GPU. Heresy, I know...

RTX is NVIDIA’s moniker...RT (Ray Tracing) is built into DX12 by Microsoft...RT is THE holy grail in graphics...so no.

DLSS also doesn’t affect gameplay (unlike PhysX)...A.I. is also coming (DLSS uses deep learning) whether you like it or not.

Let me put it this way:

RT and Deep Learning are coming and if you don’t like that, you are SOL.

ok, BUT..

PhysX never affected gameplay, it was visual eye candy and nothing more.. at least in nV's inception.

and RT is the holy grail of graphics... when we have gen 2 or 3.. not in the current state. at all.

RT and deep learning is coming? my god.. keep em coming, haven't laughed that hard in a while.. this really is PhysX all over again, only worse..

GPU PhysX was a closed standard, and RTX is a hardware implementation of DXR, which is available to any company that wants to implement it in their products.

Unreal Engine already has support for DXR and Unity will follow soon.

RTX as some super-duper feature set that justifies higher prices did fail, but ray tracing and neural-network-generated graphics are the natural way computer graphics will evolve, and there is no other way forward.

It was obvious the RTX 20x0 series is a specific product released to clear the way for this technology. NV could afford to make this bold move and sacrifice rasterization speed for these features because of the lack of competition... or rather their technical superiority and GPU market dominance.

And PhysX... it was a strong selling point of NV cards and the main reason many people bought GeForce over Radeon. There was a time when AMD simply had better cards, back when the HD5xxx series came out, and PhysX helped NV sales. So was it as failed as some people present it to be? No!

AMD will probably fail to implement RT in their products any time soon, only proving they are 2 years behind the competition despite having a process node advantage. When games with DXR support start coming, it will only make NV's dominance even stronger.

PhysX is the most ubiquitous physics engine in games today, so that bodes well for DLSS.

Well, once it's able to run on the CPU you might be right on the money..

Are you trolling?

PhysX has been able to run on CPUs since the AGEIA days?!

I usually turned it off to avoid it hammering my CPU cores. I remember Metro 2033 benchmark running at a crawl because Physx was on. Turning it off got me back to 60fps.

If I owned an NVidia GPU, I would just set Physx to the GPU in NVCP. But if I didn't, I had to turn it off entirely.

It's not moot. The price the market will carry does not care what the cost to manufacture is, but you can be sure that the manufacturer does care! If the die size is really large, the number of ICs per wafer and the yield rate go down. That drives the price to manufacture up. Add in all the profit margin and other costs, and it must clear a company's internal rate of return hurdle. If it can't, then they simply don't make the product. There needs to be enough slack between what the general consumer market will pay and the total cost + profit the company desires to make, or the product simply won't exist. Products that fail this are mistakes.
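To put rough numbers on the die-size point, here is a minimal Python sketch. It assumes the common first-order dies-per-wafer approximation and a simple Poisson yield model; the wafer cost, defect density, and both die areas are made-up placeholders for illustration, not real figures for any Nvidia part.

```python
import math

def dies_per_wafer(wafer_diameter_mm: float, die_area_mm2: float) -> int:
    """First-order estimate: wafer area / die area, minus an edge-loss term."""
    gross = math.pi * (wafer_diameter_mm / 2) ** 2 / die_area_mm2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(gross - edge_loss)

def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies with zero defects under a Poisson defect model."""
    return math.exp(-defects_per_cm2 * die_area_mm2 / 100.0)  # mm^2 -> cm^2

def cost_per_good_die(wafer_cost: float, wafer_diameter_mm: float,
                      die_area_mm2: float, defects_per_cm2: float) -> float:
    """Wafer cost spread over the dies that actually work."""
    good = dies_per_wafer(wafer_diameter_mm, die_area_mm2) * poisson_yield(
        die_area_mm2, defects_per_cm2)
    return wafer_cost / good

# Hypothetical inputs, chosen only to show the trend.
WAFER_COST = 6000.0      # assumed cost per 300 mm wafer
DEFECT_DENSITY = 0.1     # assumed defects per cm^2

for area in (300.0, 450.0):  # a "small" and a "large" die, in mm^2
    cost = cost_per_good_die(WAFER_COST, 300.0, area, DEFECT_DENSITY)
    print(f"{area:.0f} mm^2 die: ~${cost:.0f} per good die")
```

In this model a die that is 1.5x larger costs more than 1.5x as much per working chip, because fewer dies fit on the wafer and a smaller share of them are defect-free. That is the extra cost the manufacturer has to recover in the price.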

The problem for consumers with RTX is that it's not more than 1.3x previous gen (extra cores) + RT/DLSS. At least for now, that's a hard sell due to the limited value add in actual games. It's not terrible if you are upgrading from several generations ago. Specifically, it means, ignoring model numbers and focusing only on price, there really isn't a performance increase, but a feature increase of highly subjective value. Personally, I'm on a 1080Ti and waiting for this architecture to hit 7nm.

It is moot to the consumer; it is not a selling point unless there is a benefit to the larger size, which there isn't much of if you take the rest of my post into account.

You aren't looking at the big picture.

Mainstream gamers don't care about die size, but they do care about price-to-performance ratio, and this Turing generation is not doing well on that based on sales data.

Nvidia chose to use almost 1/3 of the die area on a feature that gamers don't care about.

Most gamers care about performance and price, and these cards would have sold like hot cakes if they had used that 33% of die space for more CUDA cores.

1. No one outside of hardware enthusiasts cares about die size, so the continual argument about the 2070 being a larger die is moot; those details do not justify or help sell a card to the general consumer.

2. Could nVidia have combated this by bumping models up, ie 2080 becomes the 2080ti, the 2080ti becomes the Titan, and 2070 becomes 2080? Partial solve, but it would highlight how there is very little performance increase this generation.

3. The biggest problem is the lack of RTX/DLSS titles, we are in a chicken and egg moment, which comes first, the hardware or the software? So the consumer is paying for a bunch of Tech that cannot be used yet, on the hopes that it will be used in the future. nVidia took this new tech and upped the price rather than cut their margin, a short sighted move IMHO because if you want RTX to take off, you need market penetration, which isn't going to happen atm.

I bought a 2080ti, and am quite happy with it. Was it worth the cost compared to my Titan Xp? No, but it is the fastest and I do notice the difference.

You seem to be confused about the performance gap between CPUs and GPUs.

Try running graphics on your CPU...to keep up your argumentation you would have to blame DirectX for the poor performance of rendering the graphics on your CPU...and that would make me question your level of knowledge/insight into the topic...

PhysX is enabled by default for that game. If I didn't use an Nvidia GPU, performance tanked with that setting on. I got better performance on my GTX 650 in some scenes than on an R9 290 if PhysX was on for Metro, because on the 290 it was running on the CPU; the 650 didn't have that problem.

CPU PhysX is the main physics engine yes...but that has NOTHING to do with the GPU PhysX effects you talk about.

FUD and ignorance is the new black on forums:

https://www.tomshardware.com/reviews/nvidia-physx-hack-amd-radeon,2764.html

From the article you posted:

"This is where our current dilemma begins. There is only one official way to take advantage of PhysX (with Nvidia-based graphics cards) but two GPU manufacturers."

This basically sums up what I said.

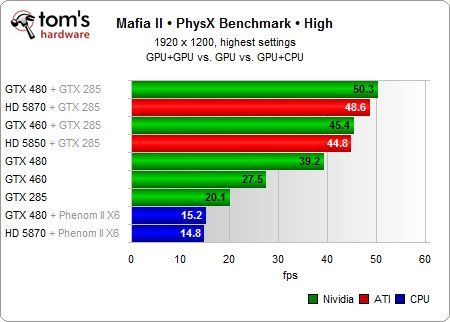

This image from the article is basically my experience:

View attachment 142700

If you didn't have an Nvidia card (AMD only), Physx was CPU only.

Having this setting enabled by default is silly.

I think they did a TERRIBLE job on the model naming for the RTX line.

One of the main concerns is that the RTX2080, for example, replaces the GTX1080, yet it performs like and costs as much as a GTX1080Ti.

Same thing goes for the rest of the line except the 2080Ti which is a special case, so to speak.

As it is now:

RTX 2060 replaces GTX1060, costs like a GTX1070

RTX 2070 replaces GTX1070/1070Ti, costs like a GTX1080

RTX 2080 replaces GTX1080, costs like a GTX1080Ti

RTX 2080Ti replaces GTX1080Ti, costs an arm and a leg, a kidney, a lung and both retinas.

But if the RTX 2060 was named as RTX2070 instead?

Suddenly it wouldn't sound so bad as it would cost as much as a GTX1070 and perform faster, not by that much, but still.

And if the RTX2070 was named as RTX 2080?

Guess what, it would still be faster than the GTX1080 and cost as much.

and you get the idea.

I guess Nvidia feared that consumers would view Turing as just Pascal + RTX/DLSS with just a small bump in performance for the same price. So they chose to position them as a large performance increase + RTX/DLSS but also a big jump in price.

And now we know how it turned out...

I've thought similar things about this. Part of it - I think - has to do with the fact that they promoted the Titan to non-gaming enterprise/machine learning/compute territory, so the Ti is now what the Titan used to be. If you think of it that way throughout the lineup, just bump them up one level, then the prices don't seem as outrageous.

On the flip side - however - if you bump them up one level in the lineup, then the generation to generation performance increase is even more disappointing than it already was.

I don't think the performance increase is disappointing at all. I mean the RTX2080Ti is a monster.

Well, there are several cases where the Ti is capable of running games @4k 60+ fps at max settings where the RTX 2080 just can't. So at least, there's that.

In the end, like Neo said, "the problem is choice".... err I mean, "Price"

I agree.

Well, if we ever get choice, the prices will fall.

It's great that AMD has been in resurgence on the CPU front, but I really hope they get their act together on the GPU front as well. They've been without a high end product for way too long.

Heck, it would be amazing if both Intel and AMD could compete in GPUs. I miss the days when we had 3-4 legitimate GPU competitors. It's been a while, but there was a time when 3DFX, ATi and Nvidia were all competing for high end graphics, and Matrox wasn't that far behind them.

I guess it depends on what you are coming from. Compared to my Pascal Titan X I bought for the same price as an MSRP 2080ti TWO AND A HALF YEARS AGO, you gain - what - 20-30% on average?

So let's say I'm getting ~52fps, which is common for many titles with high settings at 4k. This puts me just a hair above 60fps in those titles.

Sure, being able to vsync at 60fps is nice for those of us that don't have adaptive sync screens, but yet another $1200 upgrade for 8fps just doesn't seem worth it, and is a bit disappointing.
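The arithmetic behind that "8fps" can be sketched in a few lines of Python. This is only an illustration using the numbers from the post (52 fps baseline, a 20-30% uplift, a 60 Hz vsync cap, a $1200 card); the helper name `capped_fps` is made up for the example.

```python
def capped_fps(base_fps: float, gain: float, refresh_hz: float) -> float:
    """Frame rate actually shown with vsync: the uplifted rate, capped at the refresh rate."""
    return min(base_fps * (1 + gain), refresh_hz)

BASE_FPS = 52      # current frame rate quoted in the post
REFRESH_HZ = 60    # vsync cap on a non-adaptive-sync screen
CARD_PRICE = 1200  # upgrade price quoted in the post, in dollars

for gain in (0.20, 0.30):
    raw = BASE_FPS * (1 + gain)
    shown = capped_fps(BASE_FPS, gain, REFRESH_HZ)
    gained = shown - BASE_FPS
    print(f"{gain:.0%} uplift: {raw:.1f} fps raw, {shown:.0f} fps shown, "
          f"+{gained:.0f} fps, ${CARD_PRICE / gained:.0f} per extra frame")
```

Either end of the 20-30% range lands above 60 fps, so with vsync on a 60 Hz screen the visible gain is the same 8 fps in both cases.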

I always subtract the cost of the card when I upgrade. Plus if you are coming from a Titan X you should be aiming for another Titan. Yep, it’s sad it’s expensive but it is what it is. You are right. It’s not worth the cost for you unless you had money to throw at a Titan. But for someone like me it was a 70%+ performance increase. Plus I paid less than 1000 for my 2080ti.

For you though I would probably take the wait and see approach. Hopefully there is enough competition next year for Nvidia to rethink their strategy.

Agree, and that is what I am doing.

I think you missed the part of our conversation where we were comparing GPUs by cost parity generation to generation.

So, my Pascal Titan X was $1,200 when I bought it new in August of 2016. If I were to replace it with a GPU of the same cost today, that would be a FE 2080ti. So, dollar for dollar, the performance upgrade has been somewhat disappointing over the last 2.5 years.
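For a sense of scale, the 20-30% gain quoted earlier in the thread can be converted into a per-year rate over those 2.5 years. This is a small Python sketch that assumes simple compound growth; the function name `annualized_gain` is hypothetical.

```python
def annualized_gain(total_gain: float, years: float) -> float:
    """Compound per-year improvement that adds up to total_gain over the given span."""
    return (1 + total_gain) ** (1 / years) - 1

YEARS = 2.5  # August 2016 to the time of the post, per the thread

for total in (0.20, 0.30):
    print(f"{total:.0%} over {YEARS} years is about "
          f"{annualized_gain(total, YEARS):.1%} per year at the same price")
```

Under that assumption, the same $1,200 buys somewhere around 8-11% more performance per year.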

In 2013 I bought the original Kepler Titan for $1000 on launch in order to try to keep up with my 30" 2560x1600 screen. Nothing else at that time could do it. Then in the summer of 2015 I tried SLI again, going with dual 980tis to support my new 4K TV. They were $675 apiece, I think, for the EVGA OC models I went with? Can't remember, but let's say $1,350 for the pair. It was a disappointing experience, so when the Pascal Titan X launched in August of 2016 I jumped on it for $1,200.

If I wanted to go from Titan to Titan today, a Titan RTX would cost me $2,499. Could I afford it? Yes. But damn. I just can't bring myself to do it. It's just too nuts.

People want a 144hz/4k GPU. The 2080ti wasn't it. So there's basically no point in it existing. It's too slow for ray tracing, it's too slow for 144hz/4k, so what's the point?

It adds no value.
