Yeah, but that's 8 hours of full load per day, and the 970 runs up your power bill too.

Obviously a worst-case scenario.

Real life is nowhere near those numbers because of idle time.

I agree, but at the same time that's about $90/year which could otherwise be put into a GPU upgrade and is instead wasted powering a GPU that is sucking down too much power relative to its performance. It still makes the 970 a better buy, especially for someone who plans to upgrade, is on a limited budget, and can't afford to burn $90/yr on electricity for a GPU.

If you're gaming 8 hours a day and can't afford the $90....other issues exist.

One could say the same about you using the same evidence. Pot, meet kettle.

I see that the NVIDIA DEFENSE SQUAD is finally showing up. Allow me to explain the results to you, since the Fury X is capable of delivering smoother gameplay at 4K Ultra, this means unlike the 980 TI, I can turn down a few settings and enjoy better gameplay unlike the 980TI.

PC: Corsair 650D || Asus X99 Deluxe || i7 5930k || 32GB GSKILL DDR4 || 980 Ti ||

In your case, your sig comes complete with negative judgment towards your previous product, making you look like the fanboys you are so critical of. People can own a product from a company, put that in their sig for troubleshooting help, and not necessarily be a blind fanboy. Correlation is NOT causation.

Q9650 cooled by Thermaltake Water 2.0 Extreme + San Ace fans -- Crucial m4 256Gb -- R9 290x Lightning or GTX 580 <<< from back when NVIDIA WAS STILL GOOD

Anandtech screwed up their labeling here. Is AMD the orange bars as the graph suggests or black bars as the paragraph says?

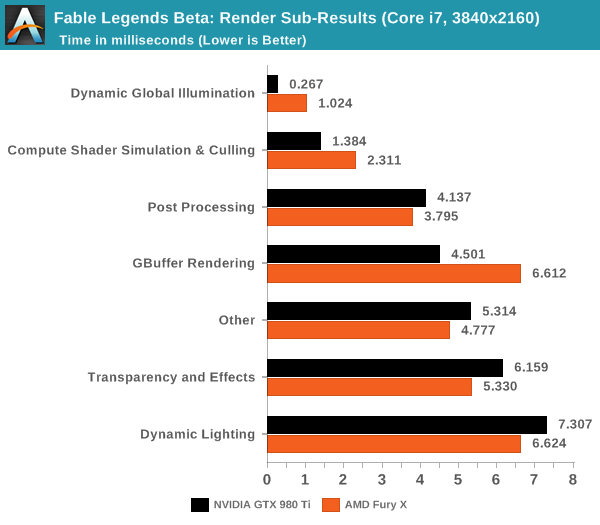

When we do a direct comparison for AMD’s Fury X and NVIDIA’s GTX 980 Ti in the render sub-category results for 4K using a Core i7, both AMD and NVIDIA have their strong points in this benchmark. NVIDIA favors illumination (GI which is heavy compute shaders this is not being done asynchronously), compute shader work (this is async compute with culling and physics for foliage) and GBuffer rendering (all of these are representative of the shorter black bars) where AMD favors post processing, transparency and dynamic lighting (shorter orange bars)

Can people on all sides please stop talking about power savings? If you have the free time to game for the hours on end that this makes a difference, you have the disposable income to deal with literally a Starbucks a month's worth of cost difference.

If money is no object then get dual Titan X's. I'm just assuming that people who are looking at midrange cards like the 970 and 390 are looking to limit their costs. In that case the 970 will save on electricity and you won't need a more expensive power supply.

As for DX12, it will most likely be several years before pure DX12 games hit the market. Until there are actual games with actual drivers available, these discussions are for entertainment purposes only.

The one thing I've learned about DX12 after seeing AotS and Fable benchmarks, is that it runs like shit. You've got the 970 and 390 barely scraping 60fps in a cartoon game and you have all GPUs running 30-40fps in Ashes. If we're using those to represent DX12 (as it seems we are) then can we please stay on DX11?

All I was saying is that DX12 is giving AMD a boost.

Still, I'm waiting on the [H] review.

You must not know very many gamers. There's some guys I know that do this all day long and stream on twitch even with jobs. I personally game maybe 4-5 hours a week if I'm lucky but there's people that love gaming and make up a big chunk of the midrange market. And cageymaru, maybe you should put that $90 into upgrading your CPU.

Yes it is. No one is denying that. Some of us are just having fun at the expense of people who argued that NVIDIA cards will be useless in DX12 games due to no async.

Let's see....

200 W, 8 hrs a day

(200 x 8) / 1000 = 1.6 kWh per day

$0.16 per kWh (here in CT, generation plus delivery cost)

1.6 x 0.16 = $0.256

Wow. If you game 8 hrs a day, with the GPU pegged at 100% the whole time, it costs you about 26 cents a day more to run a 390 vs a 970.

Guess I'm not that worried about that $0.26 a day.
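
For anyone who wants to plug in their own numbers, here's a quick Python sketch of the same math. The function name and its parameters are made up for illustration, and the 200 W gap, 8 hours, and $0.16/kWh are just the figures quoted in this thread, not measurements.

```python
def extra_power_cost(extra_watts, hours_per_day, price_per_kwh):
    """Added electricity cost of a card that draws `extra_watts` more under load.

    All three inputs are assumptions you supply; nothing here is measured.
    """
    kwh_per_day = extra_watts * hours_per_day / 1000  # watt-hours -> kWh
    per_day = kwh_per_day * price_per_kwh
    per_year = per_day * 365
    return {
        "kwh_per_day": kwh_per_day,
        "per_day": per_day,
        "per_month": per_year / 12,
        "per_year": per_year,
    }


# Worst case from the thread: 200 W extra, 8 hours a day at full load, $0.16/kWh.
worst_case = extra_power_cost(extra_watts=200, hours_per_day=8, price_per_kwh=0.16)
for name, value in worst_case.items():
    print(f"{name}: {value:.2f}")
```

Change `hours_per_day` or `price_per_kwh` to match your own habits and utility rate; the result scales linearly with each input.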

I doubt they care about $7 a month then, and if they do then, like I said, they have bigger problems, like a gaming addiction, rather than looking for a better job that would get them a better income.

Also, upgrading the CPU just does away with the power savings and ends up costing more, so it doesn't quite work out for them: spending $90 on the upgrade and then an extra $40-$90 in power.

The async benchmark is AotS. From everything I've read and what has been implied, Fable does not use much async; it uses it for culling only, or at least that's what one review site said.

Hey that would make for a great AMD marketing motto! "Get off your ass and get a better job pleb!". Like I said, even casual use can rack up about $50/yr which is a total waste, basically you're taking a lighter to money and burning it for no reason. Overall benchmarks don't show the 970 very far behind the 390x so why burn money for no reason? If people are so inclined to burn money, they'd all own Titan X's and not midrange AMD parts. Fortunately it seems most of the market isn't so inclined to burn money for no reason and it is why the 970 is one of the most popular cards ever made while AMD sales continue to sink.

In fact, AMD sent us a note that there is a new driver available specifically for this benchmark which should improve the scores on the Fury X, although it arrived too late for this pre-release look at Fable Legends (Ryan did the testing but is covering Samsung’s 950 Pro launch in Korea at this time).

The $90 I'm saving per year in power is more than enough compensation.

Some of the lengths you people go to to defend your favorite video card team are nuts. I hope they're paying you all well.

In this case, buying a 390 is like rolling coal, just because you can.

The $90 I'm saving per year in power is more than enough compensation.

Kidding, of course...

Hardly. It's a near worst-case scenario of $91 a year, and an average of another $21 a year. That's the same as a pair of 100 W incandescent light bulbs (have to specify, I love ccfl and LEDs personally).

Leave on a porch light at night? Kitchen light? I'd hardly consider those to be the same as purposefully running your diesel super rich. If the 290(x) is faster to boot, then I fail to see the issue.

Missing my point. Sure, in the end, you get to your destination... but you could have done it just as well without screwing the environment over. Too many of you have a "hurrr durr, it's only an extra $27 a month" attitude. Until you look at the scale of 100-300K cards doing the same damn thing, and all the energy wasted.

After all this lecture, I just hope you were using AMD cards before the GTX 7xx release.

Thinking back, I don't think I've ever owned an ATI card. I think I went 3DFX -> Nvidia. But, back then there wasn't much of a choice. Efficiency wasn't exactly a goal at the time.

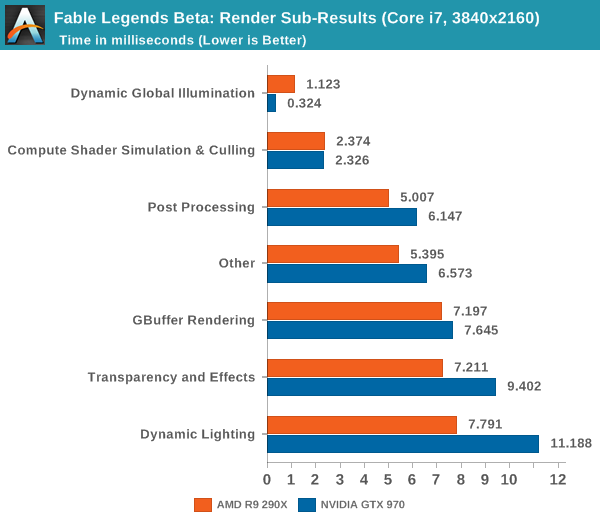

I think you are misreading the graph. This graph is about latency in completing a certain task, where lower is better, that's why

Funny that I am actually consuming less power overall with my Fury X compared to the 980 Ti. The reason is that the 980 Ti, or any other GPU outside the Fiji lineup, cannot run my monitors at idle clocks. They have to raise the clocks to the low-power 3D state or shit hits the fan (green screen

Can you shut up about the energy savings then?

If a 390(x) is faster than a 970/980, but also uses more power, in your opinion is that bad? If so, you're saying that performance isn't the end goal, and that power is more important.

I'm pretty sure the 4670/980 combo you're running now doesn't sip power compared to a celeron/750ti. I bet you could save loads of power by playing at 720p.

Sorry if I hit a sore spot. Sheesh.