elvn

I thought I'd start a thread where details about dual layer LCD tech could be posted rather than it being only mixed into other threads.

Here are a few links with some basics:

-------------------------------

https://www.researchgate.net/publication/261050283_HDR_medical_display_based_on_dual_layer_LCD

(2013)

-------------------------------

https://appleinsider.com/articles/1...echnology-promises-crisp-lifelike-hdr-images-

(2016)

Apple's dual-layer LCD patent application was first filed in August 2015.

--------------------------------

------ Current news -----

--------------------------------

https://www.displaydaily.com/article/display-daily/if-one-lcd-is-not-enough-try-two

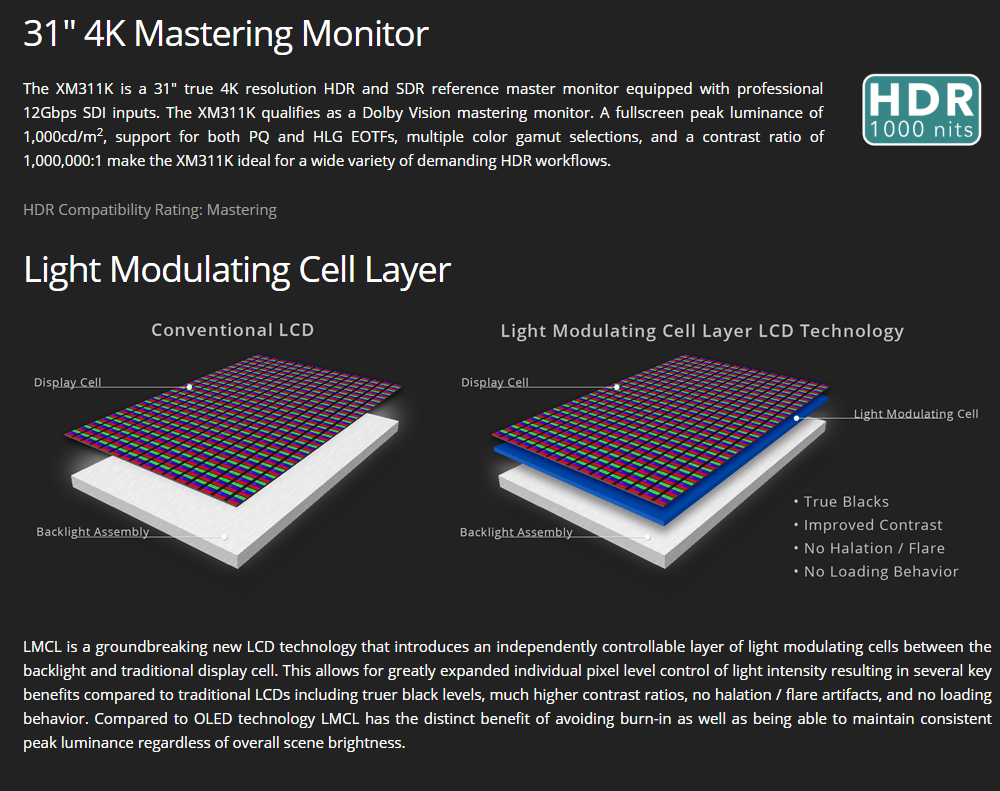

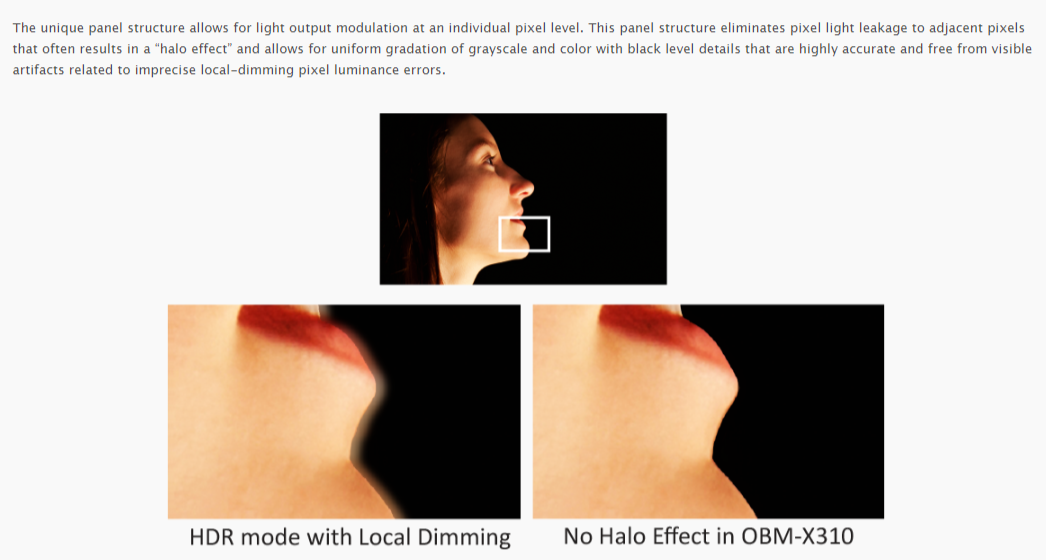

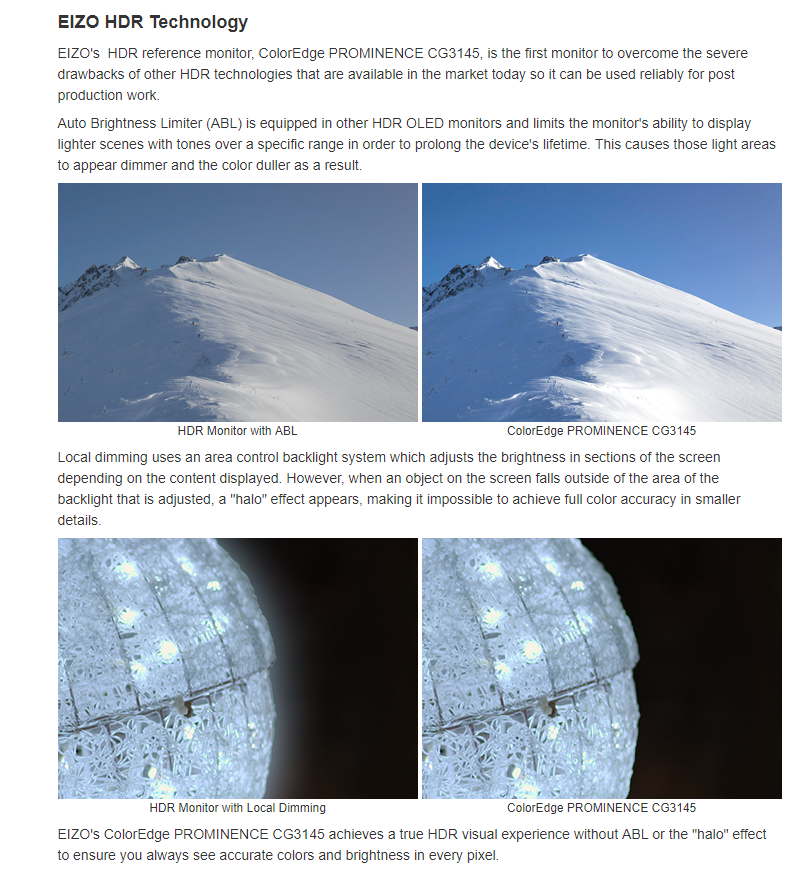

Sony was clearly stung by this technology (which was also adopted by several other broadcast monitor brands) and, as we reported from IBC in 2018, introduced a monitor based on a similar panel from Panasonic (Sony, of course, claims it has some special technology that is slightly different). The company also told us that it was no longer developing OLED broadcast monitors, but concentrating on the LCD type (while continuing to supply OLED products to those that love them). That was big news to me. (Sony Moves from OLED for Monitoring)

-------------------------------

https://www.displaydaily.com/paid-n...lanning-cg3145-high-contrast-monitor-shipment

Effectively, the monitor adds the high 'colour volume' that Samsung has been promoting for its QLED TVs, to the black levels of OLED.

--------------------------------

https://www.engadget.com/2019/01/07/hisense-ces-2019/

ULED XD's secret sauce, of course, is the pairing of two panels that sit in front of the LED backlight, with a 4K RGB display up front. And, sandwiched between the 4K panel and the backlight is a 1080p greyscale panel that displays the image in black and white. That means that, for local dimming, you'll actually be looking at both a dimmed LED source and a B&W image, which should offer far deeper blacks.
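To make the stacking idea concrete, here is a toy sketch of why two cells in series give deeper blacks: light has to pass through both, so their transmittances multiply. Every number in it (the backlight level and the 1000:1 per-cell contrast) is a made-up illustration, not a Hisense spec, and it ignores real-world losses like polarizers and light leakage between layers.

```python
# Toy model of a dual-cell LCD stack.
# All numbers are illustrative assumptions, not manufacturer specifications.

def stacked_luminance(backlight_nits, mono_transmittance, color_transmittance):
    """Light passes through the greyscale cell, then the color cell,
    so the two transmittances multiply."""
    return backlight_nits * mono_transmittance * color_transmittance

BACKLIGHT = 1000.0          # hypothetical backlight output in nits
OPEN, CLOSED = 1.0, 0.001   # hypothetical 1000:1 native contrast per cell

white = stacked_luminance(BACKLIGHT, OPEN, OPEN)
black_single = stacked_luminance(BACKLIGHT, OPEN, CLOSED)   # only one cell blocks light
black_dual = stacked_luminance(BACKLIGHT, CLOSED, CLOSED)   # both cells block light

single_contrast = white / black_single
dual_contrast = white / black_dual

print(f"one cell blocking:  {single_contrast:,.0f}:1")
print(f"both cells blocking: {dual_contrast:,.0f}:1")
```

In this idealized model the contrast of the two cells multiplies, which is the basic reason a dual-layer panel can get so much darker than a single LCD in front of the same backlight.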

The company hasn't offered up many concrete specifications, but says that the TV offers more than 2,900 nits of brightness and the "highest dynamic range" seen on an LCD panel.

-------------------------------

https://www.oled-info.com/hisenses-new-uled-xd-technology-uses-dual-lcd-panels-achieve-high-contrast

basically a way to achieve a high number of 'local dimming' zones for an LCD display (over 2 million such zones, in fact). The TV itself is very bright (over 2,900 nits) and reportedly offers a great image quality and an almost perfect contrast. HiSense says it will release its first ULED XD TVs later this year in China. Apparently SkyWorth is also demonstrating a similar technology at CES.
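The "over 2 million zones" figure lines up with treating every pixel of the 1080p greyscale layer as its own dimming zone. A quick check, assuming "1080p" means 1920x1080 and "4K" means 3840x2160 (the articles don't spell out the exact resolutions):

```python
# Zone arithmetic for a 1080p greyscale layer behind a 4K color layer.
# Resolutions are assumed standard values, not confirmed by the articles.

MONO_W, MONO_H = 1920, 1080      # greyscale (dimming) layer
COLOR_W, COLOR_H = 3840, 2160    # color layer

zones = MONO_W * MONO_H
color_pixels = COLOR_W * COLOR_H
pixels_per_zone = color_pixels // zones

print(f"dimming zones: {zones:,}")
print(f"4K pixels behind each zone: {pixels_per_zone}")
```

So under those assumptions each dimming "zone" covers a 2x2 block of 4K pixels.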

--------------------------------------

https://www.techradar.com/news/sams...-uled-xd-offers-brightness-and-super-contrast

The biggest and brightest of these technologies is Hisense's ULED XD tech that will be going into future screens that promises upgrades in local dimming, colors and dynamic range. It does this, according to Hisense, by using a Dual-cell ULED XD panel layer that puts a 1080p module displaying a grayscale image between a full array LED backlight and a 4K module.

While Hisense hasn't yet announced any TVs that use ULED XD, its 2019 flagship TV is no slouch in the performance department: The Hisense 75U9F is a 75-inch Quantum Dot screen with Android TV, 1,000 local dimming zones and a peak brightness of 2,200 nits. That puts the U9F on par with Samsung's Q9FN QLED that it debuted in 2018 and became one of the best TVs of last year.

Unfortunately, Hisense's 2019 flagship won't come cheap: when it launches in June, the 75-inch Hisense U9F will cost $3,499.99 (around £2,740, AU$4,999).

---------------------------------------

Note: Linus misstates the peak brightness as 300. The reps quote 2,900 nit HDR color and a .0003 black depth.

----------------------------------------

...

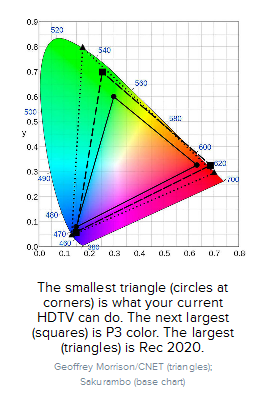

So for the Hisense TV at least, they are quoting ~3000 nit HDR color volume and an extreme .0003 black depth, with none of the dim/fade area offset problems of FALD, and without OLED's burn-in risk or being shackled to lower nit colors for burn-in avoidance.
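For a rough sense of scale, here is what those quoted figures would work out to as a contrast ratio. This assumes the .0003 black depth is in nits, which the reps' quote doesn't actually state, and it uses the 2,900 nit peak figure; treat it as back-of-the-envelope math, not a measured spec.

```python
import math

# Back-of-the-envelope contrast from the quoted figures.
# Assumption: the .0003 black depth is in nits (the unit is not stated in the quote).

PEAK_NITS = 2900.0
BLACK_NITS = 0.0003

contrast_ratio = PEAK_NITS / BLACK_NITS
stops = math.log2(contrast_ratio)   # dynamic range in photographic stops

print(f"contrast ratio: about {contrast_ratio:,.0f}:1")
print(f"dynamic range: about {stops:.1f} stops")
```

If the unit assumption holds, that is roughly 9.7 million to 1.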

Since micro-LED is still a ways off and likely to be astronomically priced at first, this tech could potentially be able to bridge the gap between OLED and MicroLED phases of monitor tech.

Here's hoping some major manufacturers develop some in the next few years that can do some proper gaming. HDMI 2.1 4K VRR would be the main thing, but 120Hz-capable response times and input lag of ~10ms or less would be important too. BFI, flicker, and interpolation options would be pluses.
