
Vulkan Multi GPU

- Thread starter: Lucky75


Baasha

Limp Gawd

- Joined: Feb 23, 2014
- Messages: 249

Vulkan multi-GPU support has to be implemented by the devs. Unlike DX11, where driver profiles could add mGPU support, it cannot be "hacked" in. Strange Brigade and RDR2 have great mGPU support built in using the Vulkan API; Wolfenstein, OTOH, does not. You cannot "enable" mGPU support if the devs have not implemented it.

cybereality

[H]F Junkie

- Joined: Mar 22, 2008
- Messages: 8,789

If you're a developer, search for "vulkan explicit multi gpu"; you should find some info on the API.

See here for example:

https://ourmachinery.com/post/explicit-multi-gpu-programming/

This will not work on released games, only if you are creating your own project.
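For a sense of what "explicit" means here: the application, not the driver, decides which GPU does which work. Below is a toy sketch in plain Python (not Vulkan code; the function names are hypothetical) of the two classic ways to split that work: alternate-frame rendering (AFR), where whole frames go to the GPUs round-robin, and split-frame rendering (SFR), where each GPU renders a band of the same frame. The bitmask loosely mirrors a Vulkan device-group device mask, where bit i selects physical device i in the group.

```python
def afr_device_mask(frame_index: int, gpu_count: int) -> int:
    """Alternate-frame rendering: frame N goes to GPU (N mod gpu_count).

    Returns a bitmask with exactly one bit set (bit i = GPU i), in the
    spirit of a Vulkan device-group device mask. Toy model only.
    """
    if gpu_count < 1:
        raise ValueError("need at least one GPU")
    return 1 << (frame_index % gpu_count)


def sfr_row_ranges(height: int, gpu_count: int) -> list[tuple[int, int]]:
    """Split-frame rendering: give each GPU a contiguous band of rows [start, end)."""
    base, extra = divmod(height, gpu_count)
    ranges, start = [], 0
    for i in range(gpu_count):
        # Spread any leftover rows over the first `extra` GPUs.
        end = start + base + (1 if i < extra else 0)
        ranges.append((start, end))
        start = end
    return ranges


for frame in range(4):
    print(f"frame {frame}: device mask {afr_device_mask(frame, 2):#04b}")
print(sfr_row_ranges(2160, 2))
```

In a real engine the hard part is everything around this: copying results between GPUs, keeping per-GPU resources in sync, and pacing frames evenly, which is why it has to be built in deliberately by the developer.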


cybereality

[H]F Junkie

- Joined: Mar 22, 2008
- Messages: 8,789

Well, the 3080 doesn't have an NVLink connector. It might still work in mGPU but only for games that have specific support by the developers.

cybereality

[H]F Junkie

- Joined: Mar 22, 2008
- Messages: 8,789

Honestly, don't bother with multi GPU. It's a waste of money and barely even works anymore.

cybereality

[H]F Junkie

- Joined: Mar 22, 2008
- Messages: 8,789

Well you'll need to make some compromises somewhere, but the 3090 should handle 4K high refresh on moderate settings.

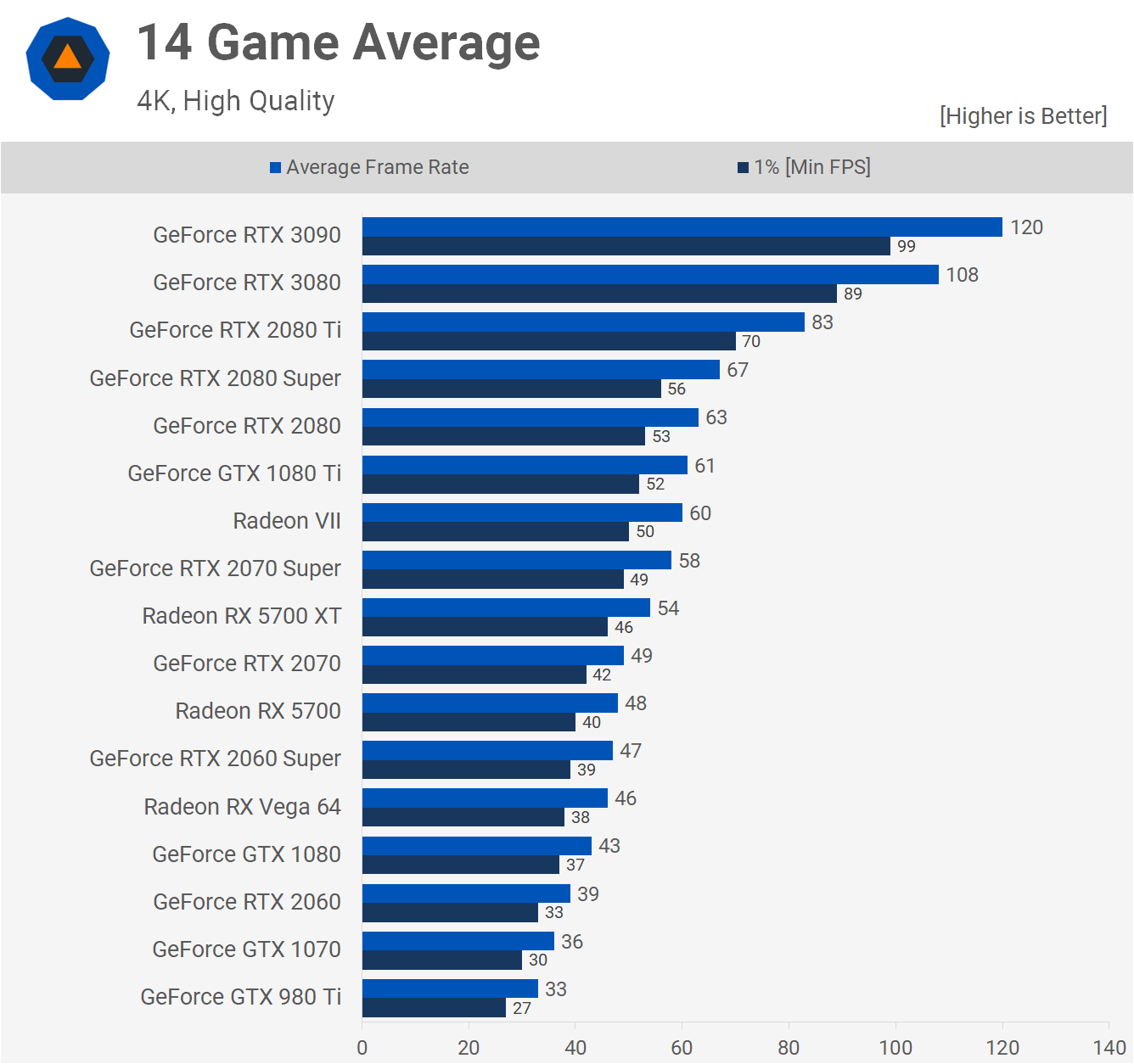

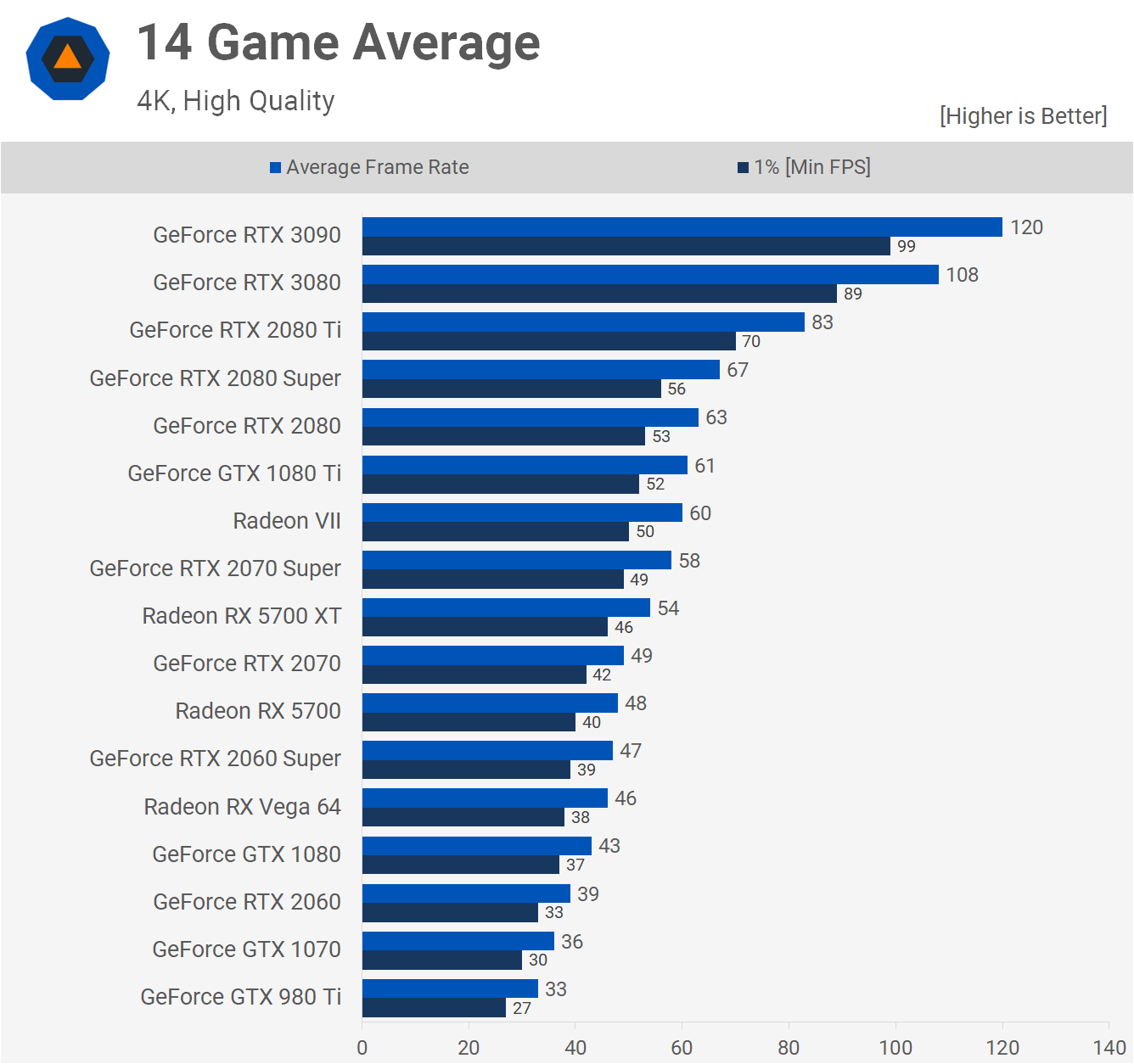

I wish Nvidia had advertised that instead of 8K, but here we are. As you can see here, the 3090 is getting 120 fps on average. With some small tweaks you can absolutely get to 144 fps.

With G-Sync/FreeSync, I'd say the 3080 would still be enough; 90-100 fps with VRR is usually the sweet spot.
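Because frame time is the reciprocal of frame rate, going from a 120 fps average to 144 fps means cutting a fixed slice off every frame. A small sketch of that arithmetic (the helper names are hypothetical), assuming the game is GPU-bound so that lowering settings actually shortens frame time:

```python
def frame_time_ms(fps: float) -> float:
    """Average time available per frame, in milliseconds."""
    return 1000.0 / fps


def frame_time_cut(current_fps: float, target_fps: float) -> tuple[float, float]:
    """Return (ms to shave off each frame, percent reduction in frame time)."""
    current, target = frame_time_ms(current_fps), frame_time_ms(target_fps)
    saved = current - target
    return saved, 100.0 * saved / current


for fps in (90, 100, 120, 144):
    print(f"{fps:>3} fps -> {frame_time_ms(fps):.2f} ms per frame")

saved_ms, pct = frame_time_cut(120, 144)
print(f"120 -> 144 fps: cut {saved_ms:.2f} ms per frame ({pct:.1f}% shorter)")
```

So the "small tweaks" need to buy back about 1.39 ms per frame, roughly a sixth of each frame's cost at 120 fps.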


Mylex

Gawd

- Joined: Aug 30, 2018
- Messages: 852

The jump in performance is still impressive until you look at the power draw increase. Man I'm not looking forward to that aspect of 30 series ownership.

The 3080 does not have an NVLink connector at all. There is no bridge for it. Only the 3090 has NVLink. SLI is dead and won't be supported in the 3xxx series. On the 3090, NVLink is for compute performance, not gaming. SLI is dead; move on.

Absolutely, that's what I mean... if I want 144 Hz in some games, I need more than one card. NVLink can be used for old DX11 applications and some DX12 ones. I am not sure about DX12 Ultimate.

Guys, why don't you see my point here? Does AMD CrossFire have a special link like SLI/NVLink, or whatever it's called? After DX 12.1, SLI was pointless, but it is still being held back by game developers.

I am trying to save myself from that game of "if you want good performance, pay more than double."

For example: "The only game that supports heterogeneous DX12 mGPU is Ashes of the Singularity. All the others are homogeneous DX12 mGPU (with only two identical cards). With AMD drivers at least, you need to enable Crossfire to get them to do whatever magic they do that allows most DX12 mGPU capable games to find the linked pair of cards. You shouldn't need any bridges for DX12 mGPU (though AMD cards haven't used them in ages, at least not the gaming models)."


sirmonkey1985

[H]ard|DCer of the Month - July 2010

- Joined: Sep 13, 2008
- Messages: 22,414

Yes, you're right: for most 3D shooters, a little tweaking should be OK. But it was the same with the RTX 2080 Ti too. When will we have a complete card that doesn't need any adjustment?

Never. That's the whole point of game development and hardware: they're both meant to push each other. Otherwise, there would never be a reason to upgrade.


The reality is that Nvidia and AMD don't want to pay the money needed to keep supporting SLI/CFX, and game developers don't want to be held responsible for making sure SLI/CFX works correctly, so mGPU is dead.

Mylex

Gawd

- Joined: Aug 30, 2018
- Messages: 852

We can't fix this for you, but we can point you to a place to F5 for a 3080.

The future is cloud gaming and video cards with chiplets; that much is a given. How will we be gaming over the next 2-5 years, and over what kind of transmission? We still need two cards to get 120-144 Hz at 4K or 60 Hz at 8K.


8K at 60 Hz requires about 50 Gbit/s (a compressed signal is needed to send it to a monitor until DisplayPort 2.0). An extremely good internet connection will still be 1 Gbit/s over the next 2-5 years, I imagine.

I doubt that level of compression is worth doing, or that it wouldn't completely defeat the purpose. Cloud gaming should be for much lower resolutions or lower FPS (would high FPS even make sense with cloud latency and almost nil bandwidth? 4K 144 Hz is 31.35 Gbit/s).
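Figures like these follow from pixels × refresh rate × bits per pixel. A minimal sketch, assuming uncompressed RGB at 8 or 10 bits per channel and counting active pixels only; real display links also carry blanking intervals and encoding overhead, so quoted link figures run somewhat higher (the helper name is hypothetical):

```python
def uncompressed_gbps(width: int, height: int, refresh_hz: float, bits_per_pixel: int = 24) -> float:
    """Raw bitrate in Gbit/s for active pixels only: no blanking, no link encoding overhead."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


modes = {
    "4K 144 Hz": (3840, 2160, 144),
    "8K 60 Hz": (7680, 4320, 60),
}
for name, (w, h, hz) in modes.items():
    for bpp in (24, 30):  # RGB at 8 and 10 bits per channel
        print(f"{name} @ {bpp} bpp: {uncompressed_gbps(w, h, hz, bpp):.2f} Gbit/s")
```

Under these assumptions 8K 60 Hz comes out at about 47.78 Gbit/s at 8 bits per channel (roughly the 50 Gbit/s quoted), and 4K 144 Hz at about 28.67 Gbit/s; the 31.35 Gbit/s figure is higher, presumably because it includes blanking.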

Native 4K 144 Hz or 8K 60 Hz should be left to the far future for most types of games; the sacrifices needed to get there do not seem like a good idea.

I do not understand what the issue is with internet speed or with the next HDMI or DP. We already have 8K 60, and 400 Gbps switches that can be used very soon. For cloud gaming, it should only be browser sharing, which is 1-3 Mbps. The only important thing there is latency, which Nvidia is already starting to improve.

About compression: for the last 5 years, Nvidia has been using neural networks trained with backpropagation that do perfect approximation (x4 or x16). That means cards like the RTX 3090 can upscale from something like 1440p to 4320p in real time, right now. It is just the first card to use the full capabilities of that technology.
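Upscaling factors are easy to mix up, because a per-axis scale squares when counted in pixels. A small sketch, assuming 16:9 frames (2560×1440 for 1440p, 7680×4320 for 8K); the helper name is hypothetical:

```python
def upscale_factors(src: tuple[int, int], dst: tuple[int, int]) -> tuple[float, float]:
    """Return (per-axis scale, pixel-count scale) going from src to dst (width, height).

    Assumes src and dst share an aspect ratio, so the per-axis scale is taken from the height.
    """
    (src_w, src_h), (dst_w, dst_h) = src, dst
    per_axis = dst_h / src_h
    pixels = (dst_w * dst_h) / (src_w * src_h)
    return per_axis, pixels


print(upscale_factors((1920, 1080), (3840, 2160)))  # 1080p -> 4K: (2.0, 4.0)
print(upscale_factors((2560, 1440), (7680, 4320)))  # 1440p -> 8K: (3.0, 9.0)
print(upscale_factors((1920, 1080), (7680, 4320)))  # 1080p -> 8K: (4.0, 16.0)
```

If "x4" and "x16" are read as pixel-count factors, they correspond to 2× and 4× per axis, while 1440 to 4320 is 3× per axis, or 9× the pixels.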


cybereality

[H]F Junkie

- Joined: Mar 22, 2008
- Messages: 8,789

See here why multi-GPU is dead and gone.


I saw it, and the results for 4K at 360 frames are wonderful. Unfortunately, new games will stop using multi-GPU, and that is why we need to follow Nvidia's and AMD's recommendations. If you saw the Raja interview... he already told us that the future is a single GPU with very high performance. But why is it still not good enough... why don't most games have optimization like DOOM Eternal?


If you want to cloud game (meaning the rendering is done remotely by a powerful server), streaming 8K at 60 Hz does not come close to fitting on a 10 Gbit/s internet connection, and even that is still quite rare and costly. Just to express how much data that is: it does not fit on an HDMI cable yet either; DisplayPort 2.0, at nearly 80 Gbit/s, should handle it.

For the cloud gaming it should be only a browser sharing which is 1-3 Mbps

I am not sure what that means (I am really not familiar with it), but you cannot have high quality at 1-3 Mbps, especially for something real-time where you cannot use buffering or non-real-time compression. It is not just about reaction time; reaction time is the only important variable if you do not mind visual quality. And if you accept giant, not-that-good compression, that pretty much kills the purpose of using something like 8K instead of 4K (the same can be said for many current uses of 4K instead of 2K).
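To put the 10 Gbit/s and 1-3 Mbps figures in perspective, here is the compression ratio an 8K 60 Hz stream would need to fit each link. A rough sketch, assuming uncompressed 8-bit RGB and active pixels only; the link labels and speeds are illustrative, not measurements of any particular service:

```python
# Raw 8K 60 Hz stream: RGB at 8 bits per channel, active pixels only (no blanking).
RAW_8K60_BPS = 7680 * 4320 * 60 * 24  # about 47.8 Gbit/s


def compression_ratio(raw_bps: float, link_bps: float) -> float:
    """How many times smaller the stream must be to fit within link_bps."""
    return raw_bps / link_bps


links_bps = {
    "10 Gbit/s connection": 10e9,
    "1 Gbit/s connection": 1e9,
    "3 Mbit/s stream": 3e6,
    "1 Mbit/s stream": 1e6,
}
for name, bps in links_bps.items():
    ratio = compression_ratio(RAW_8K60_BPS, bps)
    print(f"{name}: needs about {ratio:,.1f}:1 compression")
```

Even a 10 Gbit/s line needs roughly 5:1 compression, and a 1-3 Mbps stream needs somewhere between about 16,000:1 and 48,000:1.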

cybereality

[H]F Junkie

- Joined: Mar 22, 2008
- Messages: 8,789

Did you watch the video? If you did, you'd see that SLI is worthless. Even if you don't care about money, it's still not worth it.

So we are still in the same boat: the RTX 3090 does 80 fps in most games at 4K and 30 fps at 8K. For me, I still need a second card for the best experience.

I understand if you want more performance, but a second card won't help. It only works in a couple of games, and support seems to be getting worse and worse every year.


UnknownSouljer

[H]F Junkie

- Joined: Sep 24, 2001
- Messages: 9,041

Getting 8K at 120 Hz requires 80 Gbps of data, and that's if it's done locally; few, if any, of us have that kind of bandwidth. Compression might help, but if the whole point of this discussion is to have "maximum fidelity" and high Hz, cloud computing/gaming defeats that purpose. Cloud gaming will be about bringing "good enough" to the masses. It's for people who would otherwise be playing on a console or a phone, while letting providers charge them all a monthly fee forever rather than selling a piece of hardware and games on a cycle.

You haven't been gaming for very long in the computer space if you don't know that you'll never achieve perfect. "Perfect" is a moving target (goal posts are never the same - 10 years ago we were happy at 1080p as an example), it's never obtained, and there is always something that is faster and there are always graphical settings that require more power. Right now you think 4k 144Hz is perfect. But soon you'll long for 8k. Or you'll long for 240Hz. Or you'll long for the next set of graphics APIs. Or more detailed textures. It never stops. "But can it run Crysis?" is a meme for a reason. There will always be something more demanding.

As cybereality has already stated: getting a second card will do literally nothing for 99% of games. It has to be coded for explicitly, and devs don't bother. So if you feel like spending $3000 on two 3090 cards, go ahead. We're not stopping you. But you won't get any improvement.


So guys, I totally agree with all of you that two RTX 3090s are pointless, because nobody will care about 4K 120 Hz. But I want more games than DOOM and the 2-3 others that can handle more than 120-140 FPS.

The bad thing is that a second card can only be used for crypto mining, which is not my goal, but it is another option.


UnknownSouljer

[H]F Junkie

- Joined: Sep 24, 2001
- Messages: 9,041

You can run every game to date more or less at 4K 120/144 Hz. You just have to give up some level of graphical fidelity to do so. We've been over this, so we're going round and round talking about the same stuff. There currently does not exist a "no-compromise" solution. For some reason this is either too hard for you to understand or to accept. I don't really know which it is, and frankly it doesn't matter.


The bottom line is the solution for what you want doesn't exist yet and going on about it won't change that fact. It's unlikely that AMD will change that this cycle either (even if they do make a card that is faster than a 3090, there is still a zero percent chance that it will be able to achieve 4k 144hz locked, maxed out in every title). Maybe in another 2-3 years.
