Halon

Gawd

- Joined: Aug 13, 2004

- Messages: 853

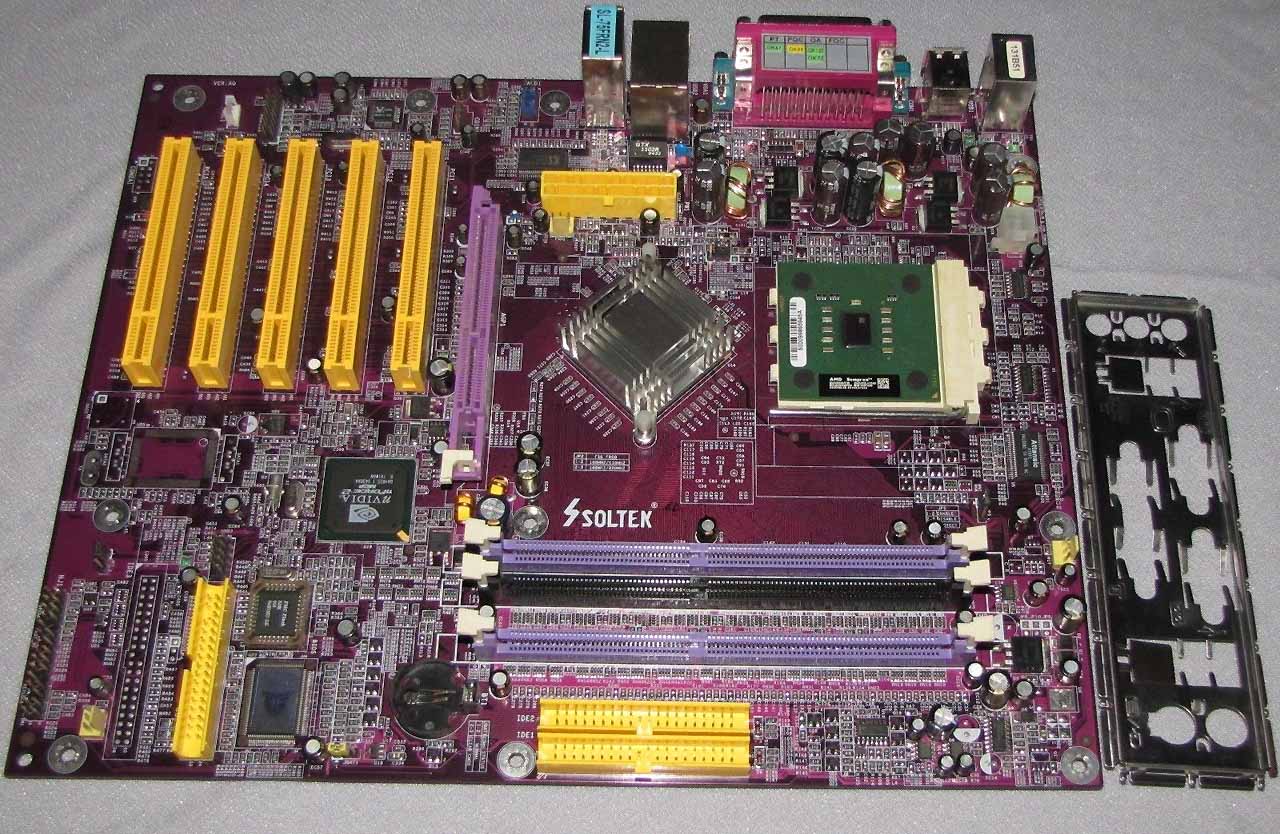

UT2003/2004 were designed for high-end Direct3D 8 hardware, and the default renderer was D3D8 through and through. There was an OpenGL renderer provided, but it was relatively barebones and mostly intended for testing, as I recall. There were some improvements made to it for Linux compatibility, but that was the better part of 20 years ago and I don't remember the details very well any more. It seemed to do alright on a Pentium 4 running Slackware with a GeForce2 Pro I had kicking around.

was the default renderer OpenGL or D3D?

the NV30 / NV35 was good at OpenGL, but I can't recall if UT2003/2004 defaulted to D3D in Windows.

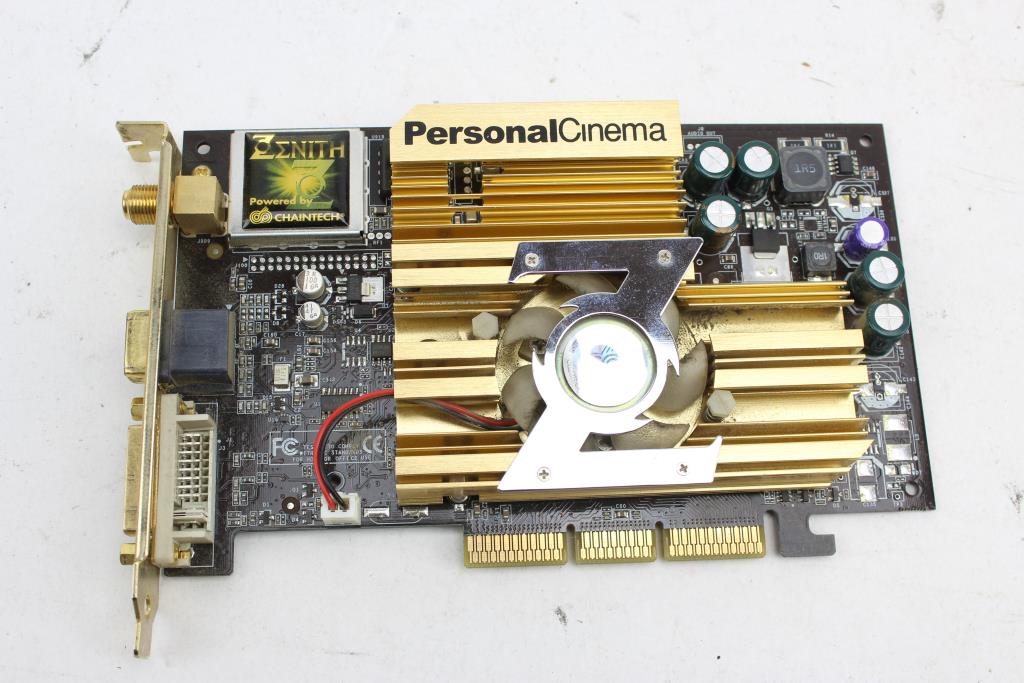

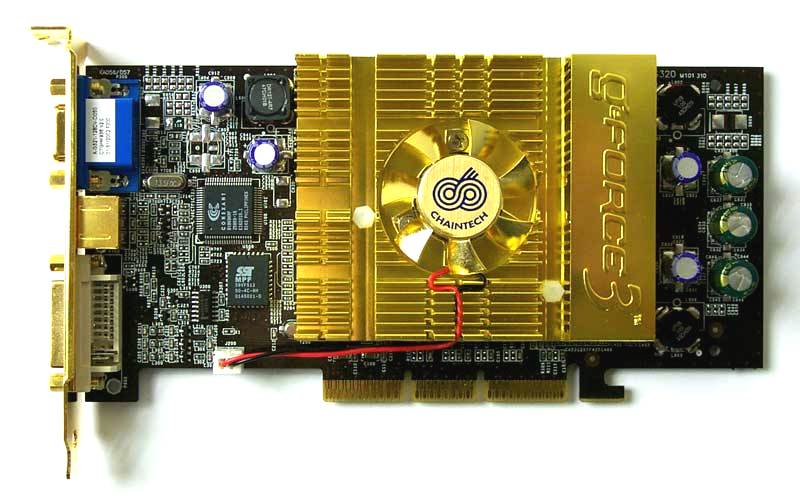

I was using Linux with my NV35 (FX 5900) back in the day.

Later, a Direct3D 9 renderer was added, but it made very little difference in practice and I *think* they removed it in a subsequent patch. Epic also added the Pixomatic software renderer, which was single-threaded but allowed the game to run a hell of a lot better on systems with crappy integrated video, provided they had the CPU performance to drive it. That was a lifesaver in the days when Intel graphics were worse than slow.

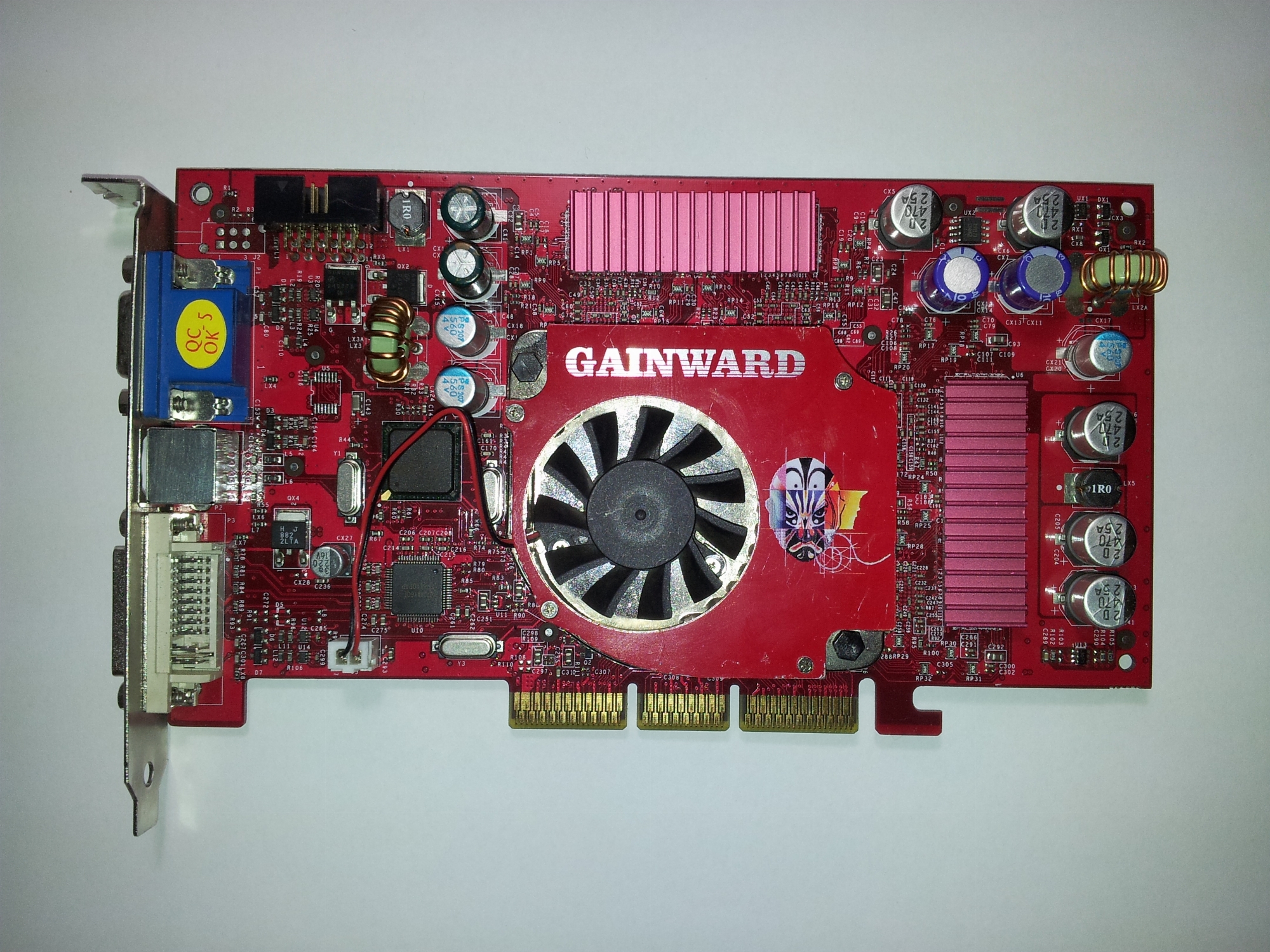

Outside of a few titles where they performed measurably worse than the GeForce4 Ti series they were intended to replace, the FX series were generally competent Direct3D 8 cards. The big issue at that point was waiting for driver optimizations to land, and for them to balance quality and performance in the process. My 5900XT pulled a lot of duty in UT2004... Thanks for the trip down memory lane.

