sblantipodi

2[H]4U

- Joined

- Aug 29, 2010

- Messages

- 3,765

Hi all,

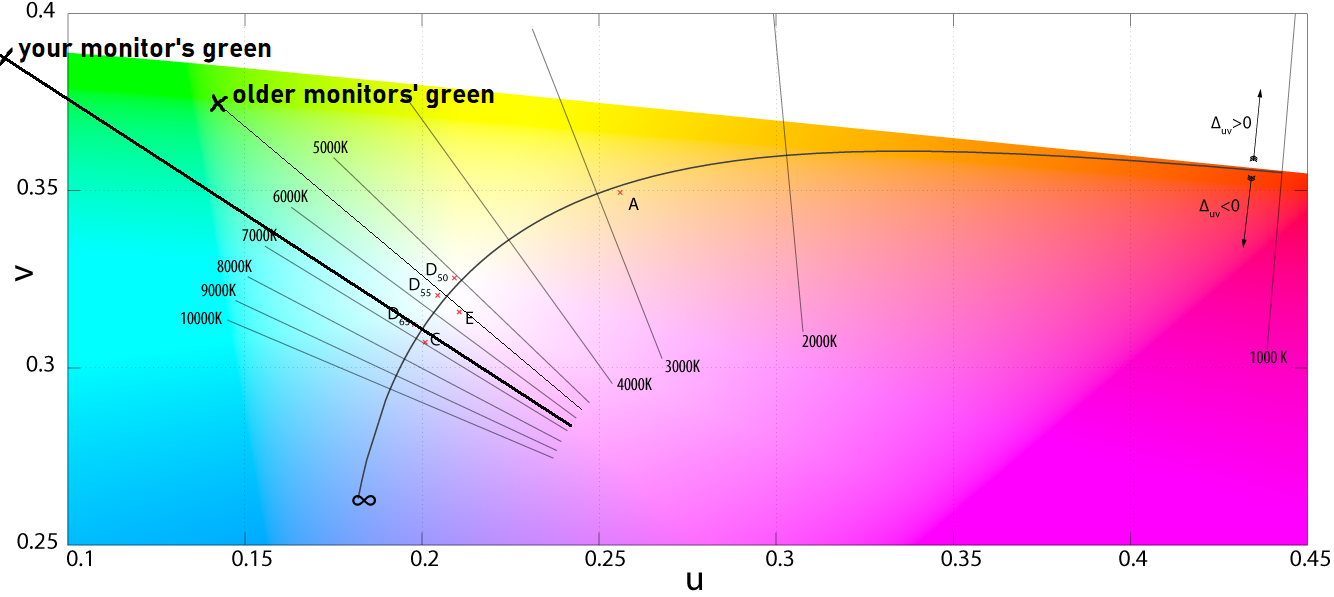

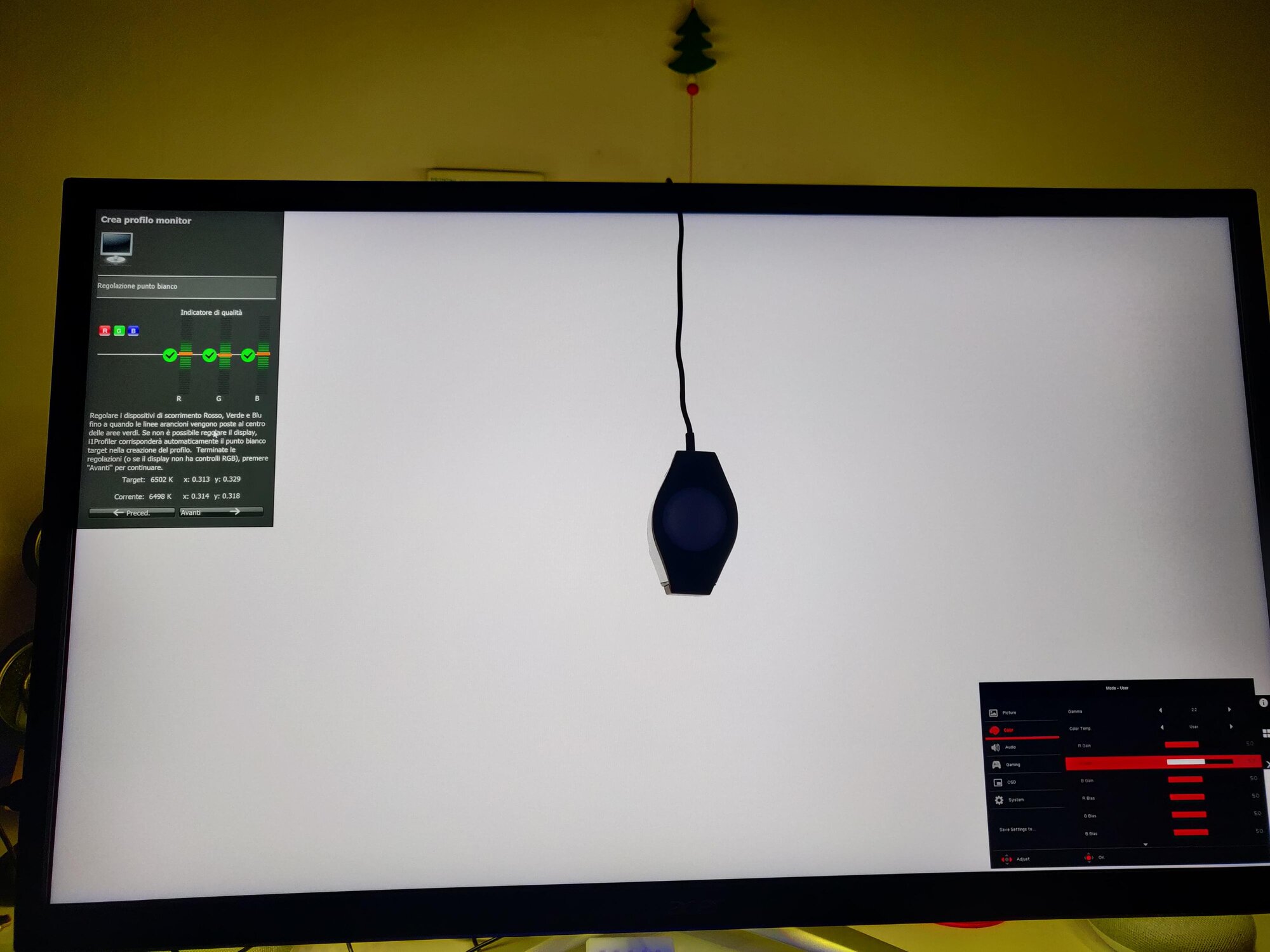

I'm trying to correctly calibrate my wide-gamut Acer Nitro XV273K using an X-Rite i1Display Pro colorimeter and the i1Profiler software.

Something strange happens on this monitor that I have never experienced on other monitors.

I can easily reach 6500K by adjusting the Red or Blue gain, but adjusting the Green gain does not seem to affect the measured color temperature, even though I can see the color changing with my naked eye.

This is bad because I have one fewer gain control for reaching the temperature I want. It's like calibrating the monitor using Red and Blue only.

Why do I have this problem?

Please help.

Thanks
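
For anyone who wants to check the numbers: here is a rough sketch of how a single "color temperature" figure responds to each gain. Everything in it is an assumption, not a measurement from this monitor: it uses the standard sRGB primaries (the XV273K's wide-gamut primaries will differ), treats the gains as simple linear scale factors, and uses McCamy's approximation for correlated color temperature (CCT), which may not be what i1Profiler uses internally. Under those assumptions, green mostly moves the white point towards or away from magenta (tint) rather than along the warm/cool axis, so the CCT readout barely moves.

```python
import numpy as np

# Linear sRGB -> CIE XYZ matrix (D65 white point).
# Assumption: sRGB primaries stand in for the monitor's real primaries.
RGB_TO_XYZ = np.array([
    [0.4124, 0.3576, 0.1805],
    [0.2126, 0.7152, 0.0722],
    [0.0193, 0.1192, 0.9505],
])

def white_xy(r_gain, g_gain, b_gain):
    """Chromaticity (x, y) of full white after scaling each channel linearly."""
    X, Y, Z = RGB_TO_XYZ @ np.array([r_gain, g_gain, b_gain])
    total = X + Y + Z
    return X / total, Y / total

def cct_mccamy(x, y):
    """McCamy's cubic approximation of CCT (in kelvin) from xy chromaticity."""
    n = (x - 0.3320) / (0.1858 - y)
    return 449.0 * n**3 + 3525.0 * n**2 + 6823.3 * n + 5520.33

def cct_for_gains(r_gain, g_gain, b_gain):
    return cct_mccamy(*white_xy(r_gain, g_gain, b_gain))

baseline = cct_for_gains(1.0, 1.0, 1.0)
# Change in CCT when one gain is lowered by 10% and the others are left alone.
shift_red = cct_for_gains(0.9, 1.0, 1.0) - baseline
shift_green = cct_for_gains(1.0, 0.9, 1.0) - baseline
shift_blue = cct_for_gains(1.0, 1.0, 0.9) - baseline

if __name__ == "__main__":
    print(f"baseline CCT: {baseline:.0f} K")
    print(f"red   -10%: {shift_red:+.0f} K")
    print(f"green -10%: {shift_green:+.0f} K")
    print(f"blue  -10%: {shift_blue:+.0f} K")
```

With these assumed primaries, the green shift comes out several times smaller than the red or blue shift. If that is what is happening here, watching the measured xy coordinates (or a Duv / tint readout, where the software offers one) instead of only the kelvin value should show the Green gain doing something.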

