New video card/bottlenecking question

- Thread starter Braamer

cannondale06

[H]F Junkie

- Joined

- Nov 27, 2007

- Messages

- 16,180

Coming from a GTX 260 you should get a nice boost in most games, but yeah, that CPU is really going to hold back any GPU upgrade. In some cases you may not get much more than half of what even a 7850 is capable of.

That's my concern. I might just have to hold off until I can do a complete system.

whitewater

n00b

- Joined

- Apr 16, 2012

- Messages

- 62

How much of a "bottleneck" the CPU is depends on the game.

In 99% of games you'll be more than happy with the performance upgrade. The CPU will barely make a difference, and pretty much everything will look better and run at about 4x the frame rate on a 7850 compared to a 260, so you'll get much higher settings at a much higher frame rate.

If you're happy with a frame rate of ~40-50 in BF3 multiplayer at ultra settings, then you'll be fine also for a long while.

If you overclock your processor to 3 GHz or 3.3 GHz (possible if you already have a decent heatsink and motherboard), you'll be more than fine for a decent experience in basically every game for a while.

CPUs have only really improved at a rate of ~10% a year for the past 5 years, while GPUs have improved way, way faster. Moving from your CPU to an Ivy Bridge CPU is, on average, roughly equivalent to one year of graphics card progress. The main speed boost people notice from getting a new PC comes from the SSD they buy with it.
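The ~10%-a-year figure above is whitewater's estimate and is taken at face value here, not verified. Compounding it over the five years he mentions gives a total gain of roughly 1.6x, slightly more than the 1.5x you would get by simply adding 10% five times. A minimal sketch of that arithmetic:

```python
def compound_growth(rate_per_year: float, years: int) -> float:
    """Total speedup after `years` of improving by `rate_per_year` each year."""
    return (1.0 + rate_per_year) ** years


def linear_growth(rate_per_year: float, years: int) -> float:
    """Naive total if the yearly gains were simply added instead of compounded."""
    return 1.0 + rate_per_year * years


# ~10% per year over 5 years, the figure claimed in the post above
print(f"compounded: {compound_growth(0.10, 5):.2f}x")  # compounded: 1.61x
print(f"added:      {linear_growth(0.10, 5):.2f}x")    # added:      1.50x
```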

What? Going from his CPU to Ivy Bridge would be huge.

cannondale06

[H]F Junkie

- Joined

- Nov 27, 2007

- Messages

- 16,180

whitewater, he can't overclock, as it looks like that is a Dell PC. A stock Q8300 is a fairly large bottleneck for today's mid-range or better cards. Also, many settings impact the CPU, not just the GPU, so getting a new GPU does not mean he can just crank the settings.

whitewater

n00b

- Joined

- Apr 16, 2012

- Messages

- 62

Not really. Here's a video of BF3 on a setup similar to a 7850 GPU + Q8300 CPU; in fact, a 7850 is much better than the card in the video.

http://www.youtube.com/watch?v=9SoC2fZx9q0

I really don't see how having an Ivy Bridge processor would improve the experience much, and this is a CPU-intensive game.

cannondale06

[H]F Junkie

- Joined

- Nov 27, 2007

- Messages

- 16,180

What does that video prove? It's a Q6600 overclocked to 3.2 GHz, so it's about a 30% faster CPU than a stock Q8300. A Q8300 would easily drop into the low 30s a lot and probably average in the low 40s at best, whereas a 3570K would nearly double that.

whitewater

n00b

- Joined

- Apr 16, 2012

- Messages

- 62

The Q6600 @ 3.2 GHz is about 20% faster than the Q8300; you didn't take architecture into account. Here's an i5-2500K with an overclocked GTX 560 Ti, http://www.youtube.com/watch?v=YAggH3bEkZI, running at 51 FPS average. Do you still think an i5-3570K would somehow double that?

cannondale06

[H]F Junkie

- Joined

- Nov 27, 2007

- Messages

- 16,180

A Q6600 at 3.2 GHz would be over 30% faster than a 2.5 GHz Q8300. And random YouTube videos are not a way to prove what you are trying to prove. It's a fact that in multiplayer the newer i5 CPUs are vastly better than a Q8300, and a 3570K would double what a Q8300 could do on a full map; that's a fact too.

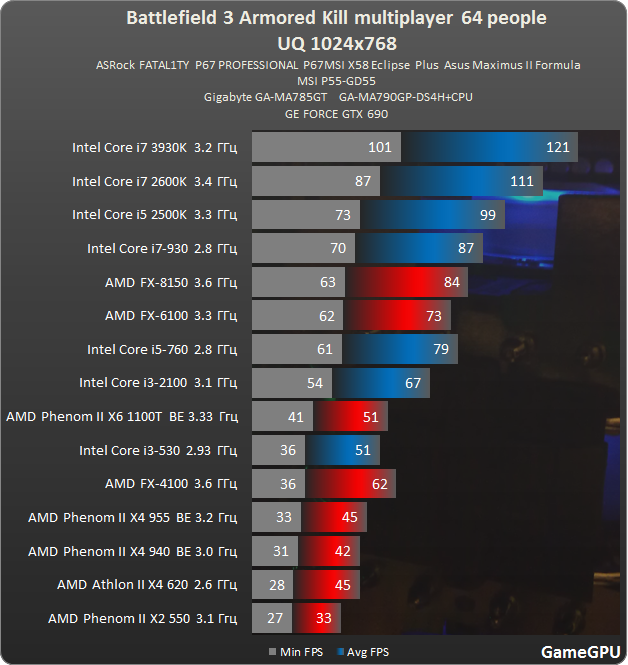

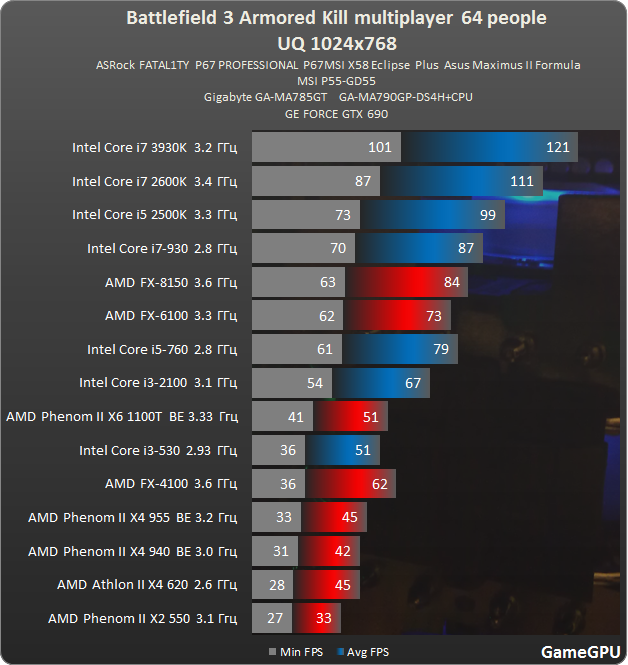

EDIT: Maybe this will sink in for you, lol. His CPU would be slower than that X4 620 you see second to last.
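The 30% vs. 20% disagreement in these posts is partly a question of what gets counted. Using only the clocks quoted in the thread (Q6600 overclocked to 3.2 GHz, Q8300 at its stock 2.5 GHz), the clock-speed gap works out to 28%. Per-clock differences between the two chips, such as cache size and core revision, can move the real-world figure either way; this sketch deliberately leaves them out:

```python
# Clock speeds as quoted in the thread (GHz).
Q6600_OC_GHZ = 3.2   # Q6600 overclocked, as in the linked video
Q8300_GHZ = 2.5      # stock Q8300


def clock_advantage_pct(fast_ghz: float, slow_ghz: float) -> float:
    """Percent by which `fast_ghz` exceeds `slow_ghz`, counting clock speed only."""
    return (fast_ghz / slow_ghz - 1.0) * 100.0


# Ignores per-clock (IPC), cache and front-side-bus differences between the chips.
print(f"{clock_advantage_pct(Q6600_OC_GHZ, Q8300_GHZ):.0f}% higher clock")  # 28% higher clock
```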

whitewater

n00b

- Joined

- Apr 16, 2012

- Messages

- 62

Again, you didn't take architecture into account in your first comment.

I also stated this above, in my first post:

"If you're happy with a frame rate of ~40-50 in BF3 multiplayer at ultra settings, then you'll be fine also for a long while."

That is exactly what your graph shows, and that's under the most CPU-bound settings possible. Raising the display resolution will leave the low end of your graph looking the same, but the high-end FPS will be much lower. 1024x768 is 2.8x less demanding than 1920x1080. Also, running cards in SLI does carry a CPU overhead.
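For reference, here is the raw pixel arithmetic behind the resolution argument, using the resolutions that come up in this thread. Pixel count is only a rough proxy for GPU load (it ignores geometry, shadows and other per-frame work), so treat these ratios as approximate. By pixel count alone, 1920x1080 has about 2.64x as many pixels as 1024x768 and 6.75x as many as 640x480:

```python
# Resolutions mentioned in the thread, compared against 1920x1080.
REFERENCE = (1920, 1080)
RESOLUTIONS = [(1024, 768), (640, 480)]


def pixel_count(width: int, height: int) -> int:
    """Number of pixels drawn per frame at this resolution."""
    return width * height


def pixel_ratio(res, reference=REFERENCE) -> float:
    """How many times more pixels `reference` has than `res`."""
    return pixel_count(*reference) / pixel_count(*res)


for w, h in RESOLUTIONS:
    print(f"{w}x{h}: {pixel_count(w, h):,} px; 1920x1080 has {pixel_ratio((w, h)):.2f}x as many")
# 1024x768: 786,432 px; 1920x1080 has 2.64x as many
# 640x480: 307,200 px; 1920x1080 has 6.75x as many
```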

cannondale06

[H]F Junkie

- Joined

- Nov 27, 2007

- Messages

- 16,180

I said the 3570K would be about twice as fast, and it is.

I said a Q6600 at 3.2 GHz would be over 30% faster than a 2.5 GHz Q8300, and that is true.

I said that he would average in the low 40s and drop into the low 30s a lot, and that holds up: two CPUs that are faster than his get about that, and even those dropped into the 20s.

Now again, his Q8300 is a huge bottleneck that will limit the crap out of some games and won't let him come close to getting the most out of a 7850 overall. If he is planning on upgrading the rest of the system soon, then there's nothing wrong with getting a 7850 now, since they probably won't drop much lower in price in the next couple of months.

whitewater

n00b

- Joined

- Apr 16, 2012

- Messages

- 62

To conclude:

Effectively, with the upgrade to a 7850 the OP is guaranteed:

Much higher image quality.

At a higher frame rate.

In every game.

About a 4x raw performance difference.

With an absolute worst case of ~40-50 FPS on a 64-player server in BF3.

But running at ~1/3 of your resolution, you could potentially get up to double the FPS with a high-end CPU.

I'll leave it to the OP to make his decision from here.

Clock for clock, the Athlon X4s are not nearly as good as the Core 2 Quads. His CPU would certainly be better than the second-to-last Athlon X4, but I agree with the rest of what you're saying. An i5 or i7 would be a huge boost.

cannondale06

[H]F Junkie

- Joined

- Nov 27, 2007

- Messages

- 16,180

4x the power? You are REALLY far off. Even with no CPU limitations at all, a 7850 is not quite twice as fast as a GTX 260. And before you argue with that, a 7850 is a little slower than a GTX 570, which is right at twice as fast as a GTX 260.

The point of my post above was that we are talking about his specific processor, not an i5-2500K versus a 3570K.

The difference between an i5-2500K and any other 'better' LGA 1155 processor is very small, so in that sense I agree. But his CPU is very dated.

If he makes the jump to Ivy Bridge or Sandy Bridge, he will be in much better shape.

Or just overclock your CPU and get a 7850.

cannondale06

[H]F Junkie

- Joined

- Nov 27, 2007

- Messages

- 16,180

Well, the 620 is clocked at 3.0 GHz, so it's every bit as fast as, or faster than, the Q8300 at 2.5 GHz, which was already a cut-down Core 2 Quad.

whitewater

n00b

- Joined

- Apr 16, 2012

- Messages

- 62

Sorry, I was getting confused with a 250; a 3x raw performance difference is more comparable. Regardless, comparing cards from such different generations is difficult. The latest architectures have features that provide far more performance under certain loads (think of the kinds of loads next-gen engines will move toward) and much more stable FPS than earlier generations. Comparing these cards in old games only shows performance differences in features that date back to the 260; none of the card's new features are used.

At a much deeper level, you can easily get 10x improvements in some workloads thanks to different caching methods, better multiplexing of parallel workloads with different instructions, better local data share, synchronisation and cache coherence, better compilers for taking advantage of these features, and better debugging tools that allow for better optimisation and performance analysis.

The graph states 2.6 GHz.

cannondale06

[H]F Junkie

- Joined

- Nov 27, 2007

- Messages

- 16,180

Well, I am just looking at benchmarks, and it would be around twice as fast at the same settings. And again, that is with no CPU bottlenecking either. Some of the higher settings you think he would run would also impact his CPU, not just the GPU. Bottom line: he will not even come close to getting what a 7850 can fully deliver in most cases.

And yeah, the 620 is at 2.6 GHz, not 3.0. The 3.0 GHz Phenom II is doing no better, though, and the Q8300 would be slower than that.

Everyone's arguments here are almost the same arguments I've been having in my head. I want to milk what I have for a little while longer, but at the same time, the GTX 260 is getting a little long in the tooth. It's just that the Q8300 is so dated, I don't know if it's worth messing with.

Might be time to build a new system, which, with a Micro Center 6 miles away, may not be too painful.

Thanks all for the help.

AltTabbins

Fully [H]

- Joined

- Jul 29, 2005

- Messages

- 20,387

(Super colorful graph that doesn't have anything to do with the OP)

Lol, who plays at that resolution? Are they using an iPhone as a monitor?

I'm not going to argue that the Q8300 won't limit higher-end GPUs, but it's not as dramatic as the graph shows. At that low a resolution, a lot of the work is being put on the CPU.

My last rig was an E6750 overclocked to 3.2 GHz with a GTX 260, but that card only reaches 100% usage with an E8400 pushed to 4 GHz, so you are actually already bottlenecking your current graphics card. So, 2 suggestions:

1- Overclock the CPU and the GTX 260 a little.

2- Buy a new rig instead of spending money on a second card that will also be bottlenecked, just to gain maybe 10%.

cannondale06

[H]F Junkie

- Joined

- Nov 27, 2007

- Messages

- 16,180

Perhaps you missed the whole point of the graph, which was to show what a Q8300-level CPU could do. Would it make you feel better to see it getting a 40-45 FPS average and sub-30 FPS minimums at 1920x1080? If it's sluggish at 640x480, then it's going to be just as bad at 1920x1080.
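The disagreement here (a CPU limit measured at 640x480 versus gaming at 1920x1080) can be pictured with a simple two-stage model: the frame rate you see is the lower of what the CPU can prepare and what the GPU can render. The numbers below are invented for illustration, not benchmarks from this thread, and the assumption that GPU cost scales linearly with pixel count is a simplification. Under those assumptions, lowering the resolution only removes the GPU limit; it never raises the CPU cap:

```python
# Hypothetical numbers for illustration only, NOT benchmark results from this thread.
CPU_FPS_CAP = 45.0        # frames/s the CPU can prepare; assumed independent of resolution
GPU_FPS_AT_1080P = 40.0   # frames/s the GPU alone could render at 1920x1080
REF_PIXELS = 1920 * 1080


def gpu_fps(width: int, height: int) -> float:
    """GPU-only frame rate, assuming GPU cost scales linearly with pixel count."""
    return GPU_FPS_AT_1080P * REF_PIXELS / (width * height)


def delivered_fps(width: int, height: int) -> float:
    """Whichever stage is slower, CPU or GPU, sets the frame rate you actually see."""
    return min(CPU_FPS_CAP, gpu_fps(width, height))


for w, h in [(640, 480), (1024, 768), (1920, 1080)]:
    g = gpu_fps(w, h)
    limiter = "CPU" if CPU_FPS_CAP <= g else "GPU"
    print(f"{w}x{h}: GPU alone {g:.1f} fps, delivered {delivered_fps(w, h):.1f} fps ({limiter}-bound)")
```

With these made-up numbers, 640x480 and 1024x768 both show the same 45 fps CPU cap, and only at 1920x1080 does the GPU become the limit, at 40 fps. That fits both points made above: a low-resolution test exposes the CPU ceiling, and that ceiling does not go up at higher resolutions.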

Gmok Bonecrusha

[H]ard|Gawd

- Joined

- Jun 16, 2004

- Messages

- 1,090

I have a similar situation: a Dell PC with a stock i7-2600 and a 6970. Would jumping up to a GTX 680 be bottlenecked by that i7, making it not worth grabbing a 680?

terrybogard21

Gawd

- Joined

- Jun 11, 2008

- Messages

- 645

"Bottleneck" is being thrown around way too much, like 850+ watt PSUs for single-card configurations a year ago.

OP: I would put the $200 towards a new system. Otherwise, grab the best card and enjoy gaming!

cageymaru

Fully [H]

- Joined

- Apr 10, 2003

- Messages

- 22,105

If you were going to spend $200 on a video card and upgrade the entire system before January, then go for it, as AMD typically releases new cards at higher prices than today's. Also remember that AMD releases the $200 cards a few months after launch, so you'd have to wait even longer.

As for a new card being bottlenecked, who cares if you're going to upgrade the entire thing soon? The video card can drop right into the new system without any problems. Not seeing its full potential is fine in my book. Now, if you're going to stick with what you have, then you can look for a lower-end card. Getting bottlenecked doesn't make your system run slower than it otherwise would; it just means you've hit the limit of what your CPU can handle.

2 main points.

In short, if you're going to upgrade your system before new cards come out, feel free to do so. Otherwise, wait and see what new cards come out.

If you're going to stick with what you have, then choose a cheaper card. If you're going to spend $200 on a card for your new system, then get whatever is fastest for $200. Who cares if it doesn't reach its potential now if you're going to upgrade anyway?
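cageymaru's point that getting bottlenecked "doesn't make your system run slower" follows from treating the delivered frame rate as the slower of the CPU and GPU stages. A sketch with hypothetical numbers (not benchmarks from this thread): swapping in a faster card can only raise or keep the frame rate, while any GPU capacity above the CPU's cap sits unused until the CPU is upgraded:

```python
def frame_rate(cpu_fps_cap: float, gpu_fps: float) -> float:
    """Delivered frame rate: the slower of the CPU and GPU stages sets the pace."""
    return min(cpu_fps_cap, gpu_fps)


# Hypothetical figures for one game, for illustration only.
cpu_cap = 45.0   # frames/s the existing CPU can feed
old_gpu = 30.0   # frames/s the old card can render
new_gpu = 70.0   # frames/s a faster card could render

before = frame_rate(cpu_cap, old_gpu)   # GPU-bound
after = frame_rate(cpu_cap, new_gpu)    # now CPU-bound
unused_headroom = new_gpu - after       # GPU capacity left idle by the CPU

print(before, after, unused_headroom)   # 30.0 45.0 25.0
```

The unused headroom is what a later CPU and platform upgrade would unlock, which is why buying the card first is reasonable if a full rebuild is coming soon.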

cageymaru

Fully [H]

- Joined

- Apr 10, 2003

- Messages

- 22,105

I have a similar situation. Dell PC. I have a stock i7 2600 and a 6970. Would jumping up to a gtx 680 see a bottleneck from that i7, making it not worth grabbing a 680?

I seriously doubt it. Remember that it's mostly systems using SLI or CrossFire that have problems with low-end CPUs, and your CPU isn't considered low end.
