Jacktivated (n00b)

Joined: Aug 27, 2016 | Messages: 4

Greetings,

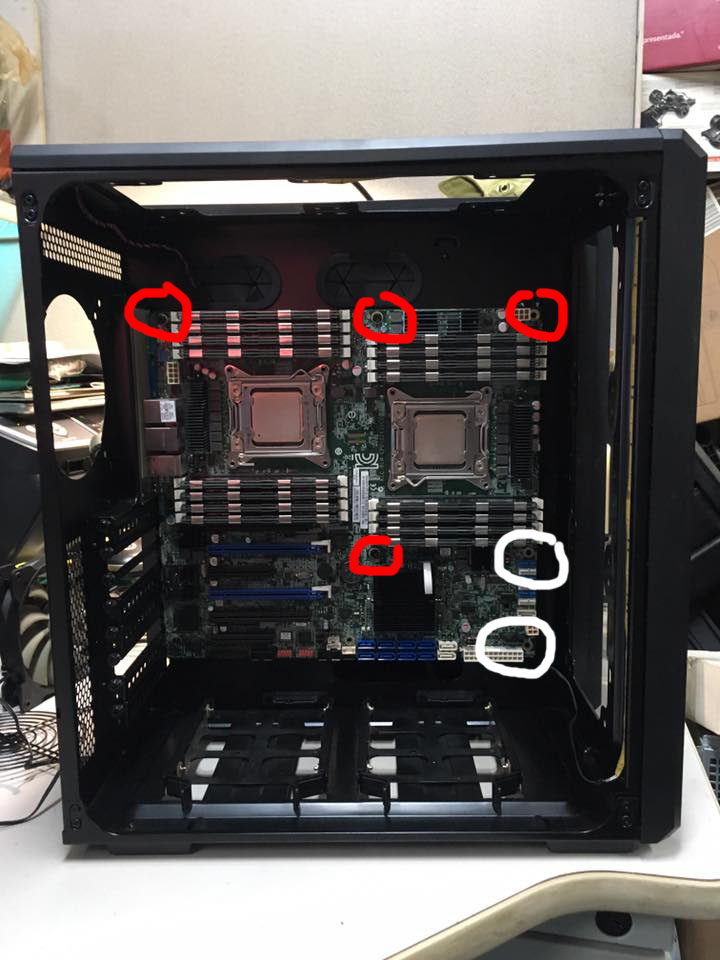

I've recently picked up two Xeon E5-2670 (v1) processors and intend to build an everyday workstation PC that will also serve as a VMware Workstation lab and handle some occasional gaming. I'd like 128 GB of RAM eventually (64 GB minimum to start).

I need advice on which motherboard to buy for this kind of use. A few I've been looking at:

- ASRock Rack EP2C602-4L/D16
- SuperMicro X9DR3-LN4F+
- ASUS Z9PA-D8

Should I build the system with one processor and keep the other as a spare, or are two CPUs a good way to go for what I want?

Any other advice is welcome as well: memory, power supply, video and sound cards, storage controller, case, liquid cooling, etc.

Thanks very much for all your help!

![[H]ard|Forum](/styles/hardforum/xenforo/logo_dark.png)