about IPC

- Thread starter sparks

- Start date

AMD's IPC is really not that far behind Intel's. However, its clock speed really is. https://www.guru3d.com/articles-pages/amd-ryzen-5-2600-review,9.html gives an example of IPC with them all locked to the same clock speed.

But most of AMD's lineup struggles to go past 4 GHz on all cores, whereas Intel runs a solid 4.5-5 GHz on most of their recent processors (or higher with OC, etc.).

BinarySynapse

[H]F Junkie

- Joined

- Feb 6, 2006

- Messages

- 15,103

Single-core IPC is only part of the equation. Clock speed and cache/memory/IO access limitations (coherency, latency, contention) also have a significant impact.

Gideon

2[H]4U

- Joined

- Apr 13, 2006

- Messages

- 3,548

Clock speed hurts it against Intel at the moment, however if the next Zen fixes that issue then I expect that gap to go away.

Pieter3dnow

Supreme [H]ardness

- Joined

- Jul 29, 2009

- Messages

- 6,784

The whole problem with IPC is that so many games are far from optimized for something like 8 cores. Coming this summer we will see 12 and 16 cores hitting the desktop market, and software will still be behind.

And if you check video game resolutions you will find that gaming is more GPU bound.

The only place IPC really matters is at lower resolutions. The gap between Intel and AMD will, more than ever, be a thing of the past after Zen 2 hits.

The whole problem with IPC is that so many games are far from optimized for something as 8 core. Coming this summer we will see 12 and 16 cores hitting the desktop market and software will still be behind.

And if you check video games resolutions you will find out that it is more gpu bound.

The only thing that really matters with IPC is lower resolutions the gap between Intel and AMD after Zen 2 hits will more then ever be a thing of the past.

The gap is already small with Zen+. Zen 2 is def gonna close it.

AMD's IPC is really not that far behind Intels. However it's clock speed really is. https://www.guru3d.com/articles-pages/amd-ryzen-5-2600-review,9.html gives an example of IPC with them all locked to the same clock speed.

Invalid review: (i) It only uses Cinebench, which favors AMD Zen muarch. (ii) It uses engineering samples of Intel systems (see here or here), not the real retail chips. (iii) It compares Intel chips with stock interconnect to AMD chips overclocked (when selecting 3200MHz, the IF is overclocked in Zen/Zen+ systems, but the Intel chips are running with the interconnect in stock settings)

A valid IPC comparison (measuring over 20 applications, testing retail chips, and comparing stock to stock) shows that Zen/Zen+ IPC is 10--15% behind Skylake/Coffee Lake. According to the first link in my post, the average IPC gap is "14.4%".

Pieter3dnow

Supreme [H]ardness

- Joined

- Jul 29, 2009

- Messages

- 6,784

Invalid review: (i) It only uses Cinebench, which favors AMD Zen muarch. (ii) It uses engineering samples of Intel systems (see here or here), not the real retail chips. (iii) It compares Intel chips with stock interconnect to AMD chips overclocked (when selecting 3200MHz, the IF is overclocked in Zen/Zen+ systems, but the Intel chips are running with the interconnect in stock settings)

A valid IPC comparison (measuring over 20 applications, testing retails chips, and comparing stock to stock) shows that Zen/Zen+ IPC is 10--15% behind Skylake/CoffeeLake. According to the first link in my post the average IPC gap is "14.4%".

And before things favoured AMD they were fine to ridicule AMD with. Performance on either Bulldozer or Phenom II showed always the right numbers.

That is called a classic moving the goal posts ...

That is called a classic moving the goal posts ...

No. It isn't. It is just the technical difference between one single benchmark being close to the average (and so can be used as metric for representing the whole sample) and a single benchmark being an "outlier" and not-representative of the sample.

DuronBurgerMan

[H]ard|Gawd

- Joined

- Mar 13, 2017

- Messages

- 1,340

Invalid review: (i) It only uses Cinebench, which favors AMD Zen muarch. (ii) It uses engineering samples of Intel systems (see here or here), not the real retail chips. (iii) It compares Intel chips with stock interconnect to AMD chips overclocked (when selecting 3200MHz, the IF is overclocked in Zen/Zen+ systems, but the Intel chips are running with the interconnect in stock settings)

A valid IPC comparison (measuring over 20 applications, testing retails chips, and comparing stock to stock) shows that Zen/Zen+ IPC is 10--15% behind Skylake/CoffeeLake. According to the first link in my post the average IPC gap is "14.4%".

IPC gap, excl. 256b and AVX512 workloads (edge cases not encountered frequently by most users), with regards to Zen+, is ~9% according to The Stilt, who is about as reliable as anyone can be on this matter. 9% is the number I accept. In throughput applications (Cinebench being one), that margin evaporates to a few percentage points at most. In latency-sensitive workloads, it is somewhat higher. Gaming being a prime example of this - however, that is somewhat mitigated by the fact the GPU tends to be the limiting factor anyway.

Either way, 9% will do.

As most of the previous posts imply, the combined IPC and clock difference between AMD and Intel is ~20% for single-core usage. For games that utilise more than 3-4 threads the difference is mainly due to clock speed, as AMD's SMT is more efficient than Intel's HT and thus neutralises the IPC difference. All of this assumes systems with high-speed RAM, as Ryzen needs it to perform at its best. If Zen 2 delivers the IPC increase over Zen 1 that we expect, with clocks close to Intel's offerings, we will have an ultra-competitive year for the CPU market. We already see the Ryzen effect, especially in where CPU prices have been over the last few months.

IPC gap, excl. 256b and AVX512 workloads (edge cases not encountered frequently by most users), with regards to Zen+, is ~9% according to The Stilt, who is about as reliable as anyone can be on this matter. 9% is the number I accept. In throughput applications (Cinebench being one), that margin evaporates to a few percentage points at most. In latency-sensitive workloads, it is somewhat higher. Gaming being a prime example of this - however, that is somewhat mitigated by the fact the GPU tends to be the limiting factor anyway.

Either way, 9% will do.

I went out of my way to find avx512 software and found zilch. It's like MMX. A totally useless marketing nothingness. Regular avx is easier to find. Avx512 is vaporware.

thanks everyone, I am hoping that amd can get it done. cpu and gpu

the reason I asked was intel was winning and someone said it was clock speed...but even when clock speeds were locked the same intel was still winning test so they said ipc must be the reason.

Pieter3dnow

Supreme [H]ardness

- Joined

- Jul 29, 2009

- Messages

- 6,784

No. It isn't. It is just the technical difference between one single benchmark being close to the average (and so can be used as metric for representing the whole sample) and a single benchmark being an "outlier" and not-representative of the sample.

You mean now that AMD has a better floating point processor we suddenly can't use the same benchmark we have used before. What is next a generic compiler demand where we can't optimize ? The one that we have been claiming in the days of Cinebench when it first started to be the benchmark to show IPC.

Don't worry about it

thanks everyone, I am hoping that amd can get it done. cpu and gpu

the reason I asked was intel was winning and someone said it was clock speed...but even when clock speeds were locked the same intel was still winning test so they said ipc must be the reason.

Like I said before the higher the resolution of whichever game you are playing the more gpu bound it will become

We've had these IPC discussions before. A case can be made in many directions for the upper and lower bounds. You could easily write a routine that mixes AVX and SSE and that would cause a big penalty on an Intel processor. Likewise you could front load swizzling on a routine and achieve 6 micro ops on zen. The same could be said for permute vs insert pre haswell vs bulldozer vs zen vs haswell+.

You could also say that intel has been running fast and loose with its speculative processing, achieving greater IPC at the cost of bounds checking.

Sadly, software has been optimized for Intel since they squashed the K8. Only in the past year has it begun to swing to normalcy.


IPC gap, excl. 256b and AVX512 workloads (edge cases not encountered frequently by most users), with regards to Zen+, is ~9% according to The Stilt, who is about as reliable as anyone can be on this matter. 9% is the number I accept. In throughput applications (Cinebench being one), that margin evaporates to a few percentage points at most. In latency-sensitive workloads, it is somewhat higher. Gaming being a prime example of this - however, that is somewhat mitigated by the fact the GPU tends to be the limiting factor anyway.

Either way, 9% will do.

(i)

The small number of AVX256/512 workloads he used is the reason why the IPC gap grows by only 5% when those workloads are included in the average. So there is little reason to ignore those cases using your argument that they are "not encountered frequently by most users". The low frequency you allude to is already accounted for in the sample.

The average IPC gap must be 9% for you. But it is 14.4% for him. And it is a much larger percentage for customers such as Google and TACC, which use AVX512 massively, including workloads he didn't test.
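To make the averaging argument concrete, here is a minimal sketch of how a handful of wide-vector workloads shifts an average gap. It assumes a plain arithmetic mean over per-workload gaps, which may not be how The Stilt aggregated his results, and every number in it is hypothetical rather than taken from his data.

```python
from statistics import mean


def average_gap(gaps_percent):
    """Arithmetic mean of per-workload IPC gaps, in percent."""
    return mean(gaps_percent)


# Hypothetical per-workload gaps (percent by which Intel leads).
# These are illustrative values only, NOT The Stilt's measurements.
narrow_vector = [9.0] * 8   # workloads without 256-bit/512-bit vector code
wide_vector = [40.0] * 2    # a few AVX-heavy workloads

print(average_gap(narrow_vector))                # average excluding AVX-heavy cases
print(average_gap(narrow_vector + wide_vector))  # average including them
```

Because the AVX-heavy cases are only a small fraction of the sample, they raise the average by a few points instead of dominating it, which is the point being argued above.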

(ii)

He tested exclusively applications, most of which are throughput oriented. If he had tested games, the IPC gap would have been bigger, because Intel muarchs are optimized for latency.

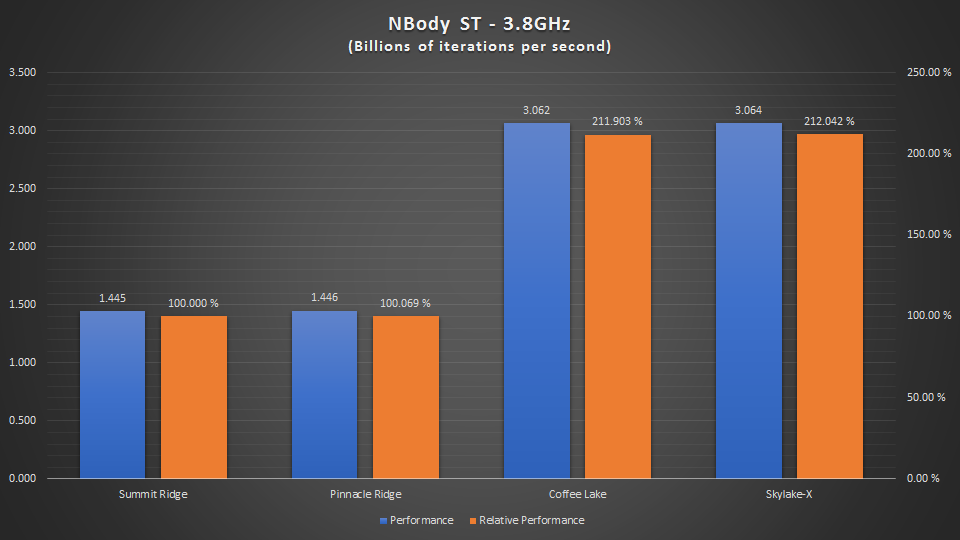

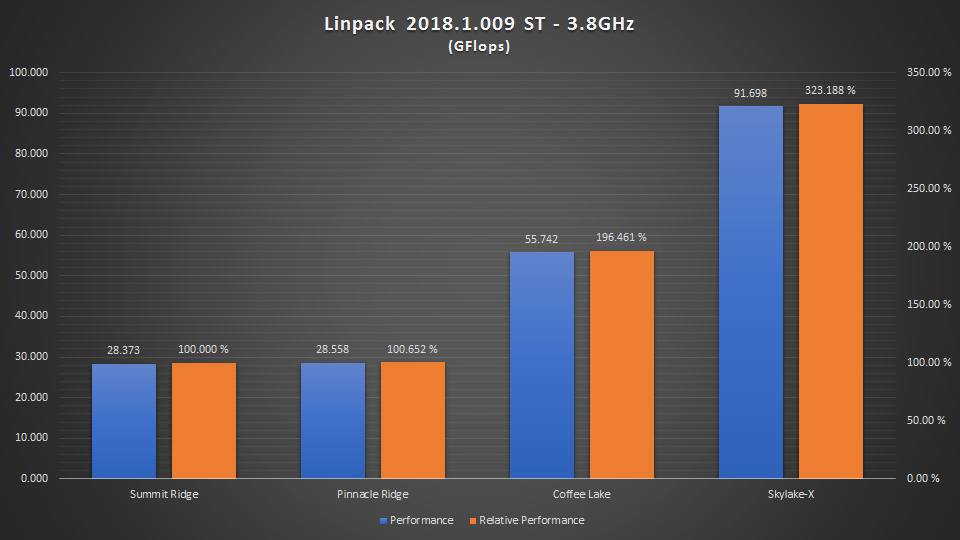

AMD Zen is currently about 10--15% behind Intel in IPC. The gap grows to about 2x or 3x on AVX256/512 workloads (e.g. a 12-core Xeon runs circles around a 32-core EPYC on GROMACS).

(iii)

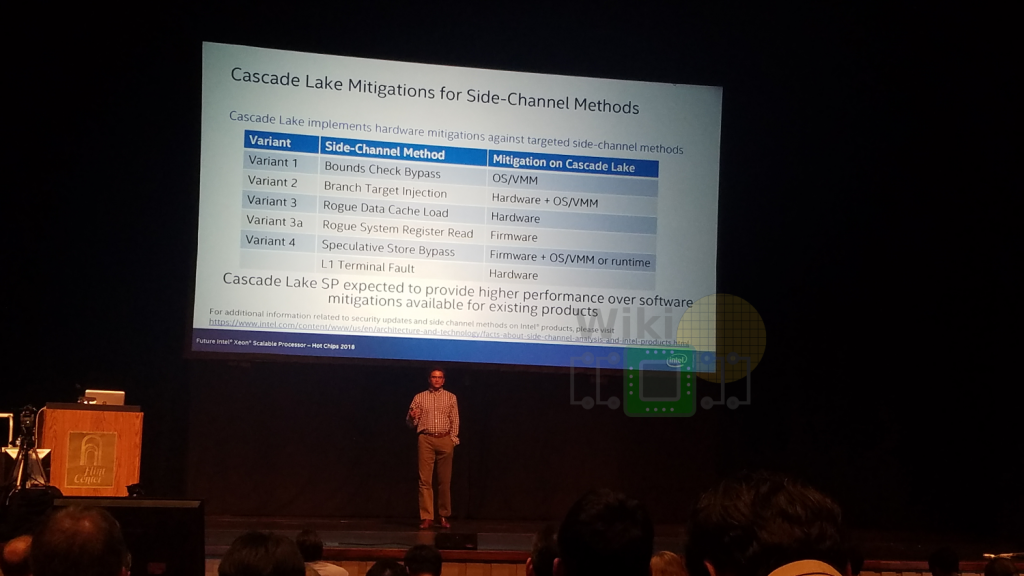

He tested with Spectre/Meltdown software patches enabled. Those software patches are emergency security patches, not optimized for performance. The new Intel processors have hardware mitigations that avoid the performance penalty of the software patches. So new processors will recover the performance that they lost recently.

You mean now that AMD has a better floating point processor we suddenly can't use the same benchmark we have used before. What is next a generic compiler demand where we can't optimize ? The one that we have been claiming in the days of Cinebench when it first started to be the benchmark to show IPC.

A Zen core is only 16 FLOP per cycle. Coffee Lake is 32 FLOP per cycle, and Skylake-X is 64. This deficit in floating-point performance is the reason why AMD doubles the throughput of the floating-point units for Zen 2.
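Those three figures can be reproduced with a back-of-the-envelope calculation. The sketch below assumes single-precision (32-bit) elements, two FMA units per core, and vector widths of 128, 256 and 512 bits respectively; those unit counts and widths are my assumptions for illustration and are not stated in the post.

```python
def peak_flop_per_cycle(fma_units, vector_bits, element_bits=32):
    """Peak floating-point operations per cycle for one core.

    Each FMA unit processes vector_bits / element_bits lanes per cycle,
    and a fused multiply-add counts as two floating-point operations.
    """
    lanes = vector_bits // element_bits
    return fma_units * lanes * 2


# Assumed configurations (two FMA units each, single precision).
cores = {
    "Zen": peak_flop_per_cycle(2, 128),
    "Coffee Lake": peak_flop_per_cycle(2, 256),
    "Skylake-X": peak_flop_per_cycle(2, 512),
}

for name, flop in cores.items():
    print(f"{name}: {flop} FLOP/cycle")
```

This is a peak figure only; sustained throughput also depends on clocks under vector load, memory bandwidth, and how much of the code is actually vectorized.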

No one said that you cannot use Cinebench. What we are saying is that you cannot use only Cinebench to measure the IPC gap. Cinebench is an "outlier" that doesn't represent the average. This was explained to you in #12 and the first link in #10 gives the relevant details.

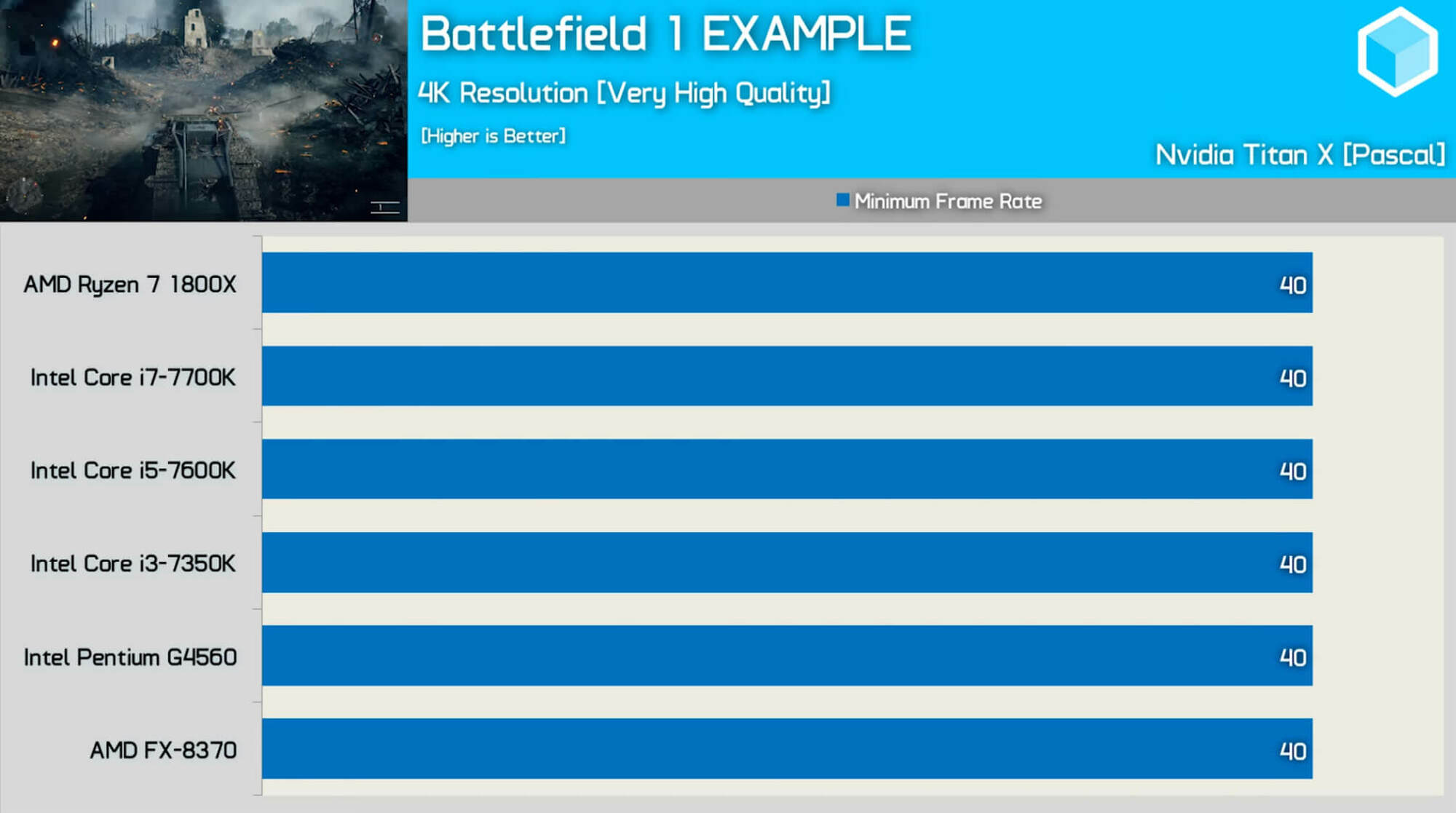

Like I said before the higher the resolution of whichever game you are playing the more gpu bound it will become. Find some 4K benchmarks

You can find a 4K benchmark here

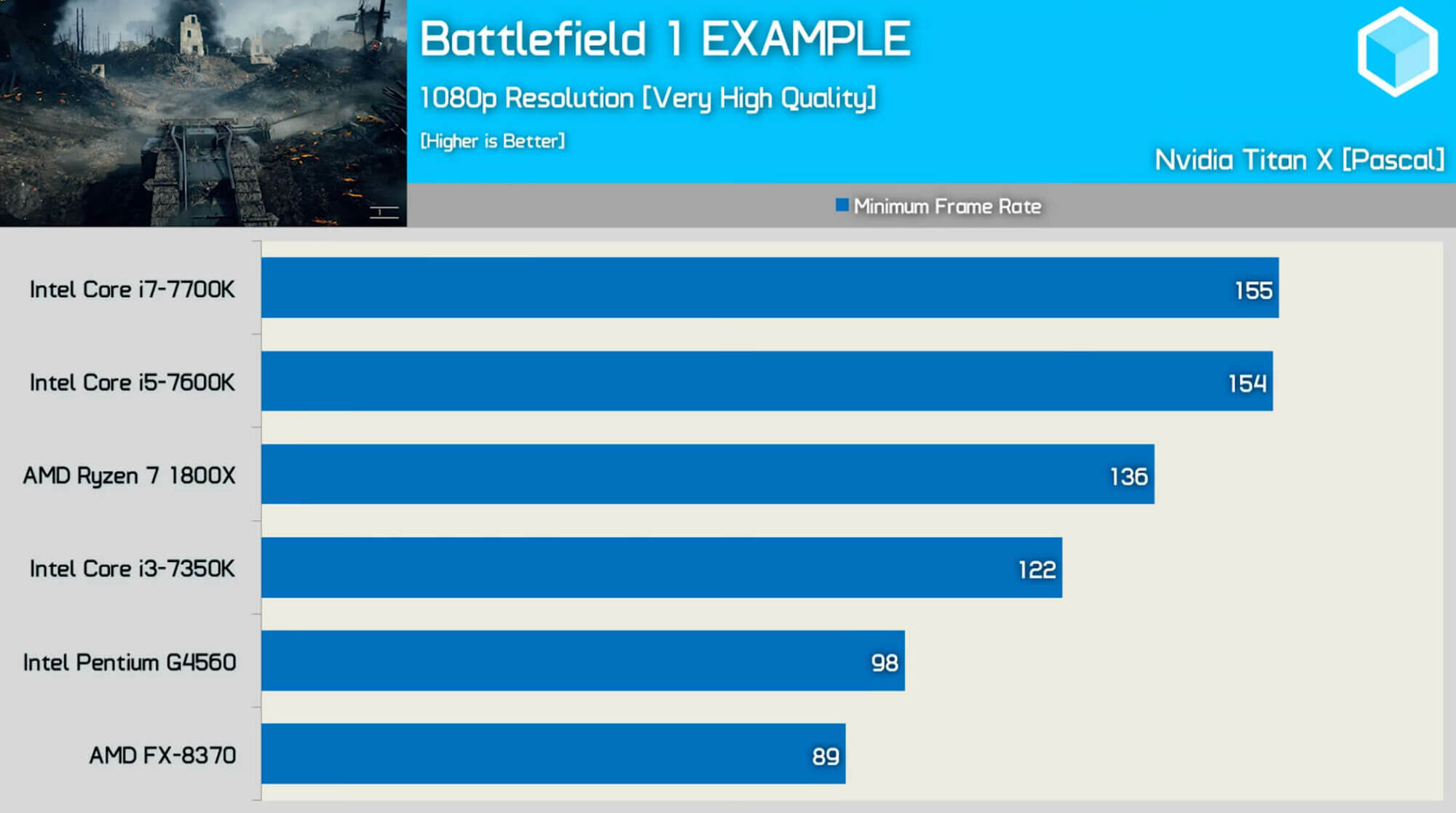

It shows that in GPU-bound scenarios Zen performs the same as Piledriver. Of course, it would be wrong to conclude that Piledriver is as capable for gaming as Zen is. A 1080p test reveals the gaming differences

This review explains very well why avoiding a GPU bottleneck is "so important for testing and understanding CPU performance".
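A rough sketch of the GPU-bottleneck argument, assuming the delivered frame rate is simply capped by whichever of the CPU or GPU is slower. Real frame pacing is more complicated than a `min()`, and the frame rates below are hypothetical, not taken from the linked review.

```python
def delivered_fps(cpu_fps, gpu_fps):
    """Frame rate the player sees: limited by the slower of CPU and GPU."""
    return min(cpu_fps, gpu_fps)


# Hypothetical limits: frames per second each part could sustain on its own.
fast_cpu, slow_cpu = 150, 100
gpu_at_4k, gpu_at_1080p = 60, 200

# At 4K the GPU is the limit, so both CPUs look identical.
print(delivered_fps(fast_cpu, gpu_at_4k), delivered_fps(slow_cpu, gpu_at_4k))

# At 1080p the GPU has headroom, so the CPU difference becomes visible.
print(delivered_fps(fast_cpu, gpu_at_1080p), delivered_fps(slow_cpu, gpu_at_1080p))
```

Under this model a GPU-bound test tells you nothing about which CPU is faster, only that both are fast enough for that GPU at that resolution.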

Zen core is only 16 FLOP. CofeeLake is 32 FLOP, and Skylake-X is 64 FLOP. This deficit in the floating point performance is the reason why AMD duplicates the throughput of the floating point units for Zen2.

No one said that you cannot use Cinebench. What we are saying is that you cannot use only Cinebench to measure the IPC gap. Cinebench is an "outlier" that doesn't represent the average. This was explained to you in #12 and the first link in #10 gives the relevant details.

You can find a 4K benchmark here

View attachment 128780

it proves that under GPU bound scenarios Zen performs as Piledriver. Of course, it would be a wrong conclusion to believe that Piledriver is so capable for gaming as Zen is. A 1080p test reveals the gaming differences

View attachment 128781

This review explains very well why is "avoiding a GPU bottleneck so important for testing and understanding CPU performance".

Why are you referencing old ass Zen 1 benches? Why not use Zen+? There were some big differences in overall performance where you wouldn't expect them. Unless you're just point proving and the latest data is irrelevant to your argument.

Gideon

2[H]4U

- Joined

- Apr 13, 2006

- Messages

- 3,548

Why are you referencing old ass zen 1 benches? Why not use zen plus. There was some big differences in overall performance where you wouldn't expect. Unless your just point proving and the latest data is irrelevant to your argument.

Or better yet, realize his forum title and just ignore him; he's going to spin everything to make Intel look better. Now just wait for Super Pi to be used to show the superiority of Intel.

Pieter3dnow

Supreme [H]ardness

- Joined

- Jul 29, 2009

- Messages

- 6,784

Zen core is only 16 FLOP. CofeeLake is 32 FLOP, and Skylake-X is 64 FLOP. This deficit in the floating point performance is the reason why AMD duplicates the throughput of the floating point units for Zen2.

No one said that you cannot use Cinebench. What we are saying is that you cannot use only Cinebench to measure the IPC gap. Cinebench is an "outlier" that doesn't represent the average. This was explained to you in #12 and the first link in #10 gives the relevant details.

You can find a 4K benchmark here

View attachment 128780

it proves that under GPU bound scenarios Zen performs as Piledriver. Of course, it would be a wrong conclusion to believe that Piledriver is so capable for gaming as Zen is. A 1080p test reveals the gaming differences

View attachment 128781

This review explains very well why is "avoiding a GPU bottleneck so important for testing and understanding CPU performance".

You proved my point it is meaningless because of the workload not because of the IPC difference. If all you do is game at 4K who cares and what about 2K is that a good enough reason to not be worried about IPC?

IPC becomes more and more meaningless if gaming engines start using different approaches instead of the half-baked DX11 stuff we see now. It takes time, and resolutions larger than 1080p will be relevant sooner rather than later.

The reason Techspot is so utterly useless is that it pretends IPC is important, and it is not. When you have a bottleneck, it comes from the game engine rather than the CPU/GPU; that is why we have the GPU, and the CPU is only there to send data to the GPU for rendering. The concept is so simple that something like a PlayStation 4 manages 60 fps in Battlefield 4, and the PlayStation 4 Pro does 4K, on nothing more than Jaguar cores, 7 or 8 of them.

The concept is being pushed because of a misunderstanding of how things are supposed to work rather than how they could work. In the end, game engine and API limitations matter more in the "gaming needs IPC" debate.

The IPC debate, or rather the lack of people in it with a better understanding of game development, has cost us many years: the whole game industry is stuck because that debate was ruled by a company that limited everyone to 4c8t and insisted that the only thing that matters is IPC.

And now, just before we can finally have more cores on the desktop, the game industry is still figuring out how badly it stood still all these years.

IPC has been the death of game development for far too long....


(snip)

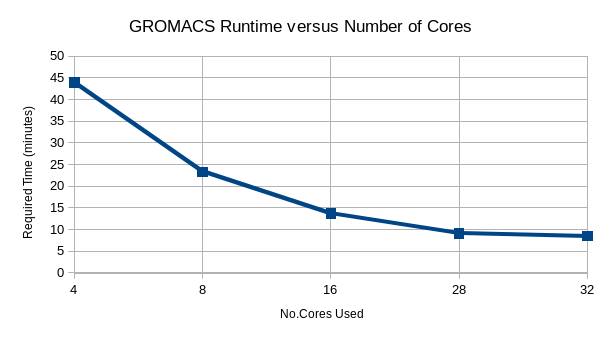

AMD Zen is currently about 10--15% behind Intel in IPC. The gap grows to about 2x or 3x on AVX256/512 workloads (e.g. a 12 core Xeon run circles around a 32 core EPYC on GROMACS).

(snip)

https://hardforum.com/threads/an-in...ome-server-processor.1971333/#post-1043930871

Grow up about gromacs. Frequency OP.

Grimlaking

2[H]4U

- Joined

- May 9, 2006

- Messages

- 3,245

(iii)

He tested with Spectre/Meltdown software patches enabled. Those software patches are emergency security patches, not optimized for performance. The new Intel processors have hardware mitigations that avoid the performance penalty of the software patches. So new processors will recover the performance that they lost recently.

As I am about to order 1.5 million in new servers, I'd love to see where you found that the new CPUs have hardware-level mitigations for Spectre and Meltdown and their variants.

Most recent article I could find that clearly spelled this out some...

https://www.bit-tech.net/news/intel-details-spectre-meltdown-protection-in-its-latest-cpus/1/

So in answer... yes, it is on SOME variants of Spectre and Meltdown, and even then that is limited because some of the new chips are just die-shrunk older chips running at a higher speed (read: a lot of them).


DuronBurgerMan

[H]ard|Gawd

- Joined

- Mar 13, 2017

- Messages

- 1,340

(i)

The small number of AVX256/512 workloads he used is the reason why the IPC gap grows only 5% when those workloads are considered to compute the average. So there is little reason to ignore those cases using your argument that they are "not encountered frequently by most users". That low frequency you allude is already being considered in the sample.

You can choose to emphasize AVX workloads if they are important to you. He includes both the excl. 256b results and the results with 256b for a reason.

The average IPC gap must be 9% for you. But it is 14.4% for him. And it is a much larger percentage for customers as Google and TACC, which use AVX512 massively, including workloads he didn't test.

"Must be" is bad language. He provides both values. You want to accept the larger number because it works better for your argument. I accept the smaller number because it is more likely to represent the normal use case of an end user. However, if we were explicitly comparing enterprise datacenter usage, 14.4% is the value I would choose to use for that situation.

(ii)

He tested exclusively applications, most of which are throughput oriented. If he had tested games, the IPC gap had been bigger, because Intel muarchs are optimized for latency.

He selected tests which were latency sensitive as well as throughput sensitive. He did not use games directly because IPC is difficult to measure in a circumstance where the GPU affects the outcome. We know Intel's architecture is better for this. The Stilt resolved to determine by how much. In some latency-sensitive tests he conducted, Zen did quite poorly. The Stilt's analysis is the best, most comprehensive deep dive into Zen IPC anyone has yet conducted. If you don't think it's done right, YOU DO IT.

AMD Zen is currently about 10--15% behind Intel in IPC. The gap grows to about 2x or 3x on AVX256/512 workloads (e.g. a 12 core Xeon run circles around a 32 core EPYC on GROMACS).

It is 9% excluding 256b workloads. It is 14% including them. In heavy AVX512 use cases, you'd be an idiot not to buy Intel right now for reasons that should be obvious to anyone with half a brain. I'm always amazed by your ability to use rhetorical pseudo-dialectic to put spin on your data. You will use real data values, but you will cherry pick the ones you choose to accept according to what makes Intel look best. You do not do so according to use case, which would be the proper way to do it. To understand what I mean, imagine if, instead of using The Stilt's 9% value, arrived at via comprehensive testing... I just used Cinebench R15 only and then told you Zen is only 3% or 4% behind in IPC. That would be bullshit. Cinebench is a benchmark. Nobody uses Cinebench (though it's at least based on a real rendering engine). You do the same by cherry picking those workloads which favor Intel's offerings - to which few (if any) in this forum will ever use.

(iii)

He tested with Spectre/Meltdown software patches enabled. Those software patches are emergency security patches, not optimized for performance. The new Intel processors have hardware mitigations that avoid the performance penalty of the software patches. So new processors will recover the performance that they lost recently.

Now I can't actually recall if he did or not. I will take you at your word, though, and assume that he did.

If he did, it was entirely appropriate of him to do so. This is what you get, today, if you buy an AMD or an Intel CPU. The hardware mitigations - as I understand them - are essentially the same as the software ones in principle. I.e. IPC difference between a 9900k and an 8700k are negligible - probably non-existent. I read a couple tests on this recently, though I can't recall where exactly (I read too much of this shit). Perhaps you recall. In any event, if Intel releases an update that recovers some performance, then I will modify my position accordingly. If they release new hardware tomorrow that improves performance, we will assign new values to Intel IPC, thus increasing the gap. If AMD's Zen 2 improves IPC, then we will compare Zen 2 to all these...

This isn't hard.

Why are you referencing old ass zen 1 benches? Why not use zen plus. There was some big differences in overall performance where you wouldn't expect. Unless your just point proving and the latest data is irrelevant to your argument.

I am referencing an article that explains what a GPU bottleneck is and why avoiding a GPU bottleneck is "important for testing and understanding CPU performance." Which chips they used to explain it is irrelevant. Note that they used what was available then (1000-series octo-core Ryzen and quad-core Kaby Lake). We know that the 2700X is faster than the 1800X. We also know that the 8700K and 9700K are faster than the 7700K.

You proved my point it is meaningless because of the workload not because of the IPC difference. If all you do is game at 4K who cares and what about 2K is that a good enough reason to not be worried about IPC?

You didn't understand the article then.

You proved my point it is meaningless because of the workload not because of the IPC difference. If all you do is game at 4K who cares and what about 2K is that a good enough reason to not be worried about IPC?

IPC becomes meaningless more and more if gaming engines start using different approaches instead of the half baked DX11 stuff we see now. It takes time and larger then 1080p will be relevant sooner rather then later.

The reason why Techspot is so utterly utterly useless because it pretends that IPC is important and it is not. When you can have a bottleneck it is of the game engine rather then the cpu/gpu and that is why we have the GPU the cpu is only there to send data to the gpu for rendering. This is a concept so simple that something as a Playstation 4 manages 60 fps on battlefield 4 and makes the Playstation 4 pro do 4K on nothing more then jaguar cores. 7 or 8 .

The concept is being pushed because of the misunderstanding on how things are supposed to work rather then could work. In the end it is the game engine and API limitations that matters more in the gaming needs IPC debate.

The IPC debate or lack of the people with better understanding about game development, it took us so many years that the whole game industry is stuck because that debate was ruled by a company that limited everyone to 4c8t and the only thing that matters is IPC.

And now before the time when we finally can have more cores on the desktop the game industry is still figuring how badly they stood still all of these years.

IPC has been the death of game development far to long....

Performance is given by IPC, GHz, and number of cores. Pretending that IPC is "meaningless" is nonsense! In fact, not only have AMD engineers been improving IPC with new AGESAs, memory controllers, and cache updates, but as virtually any Ryzen gamer knows, selecting the fastest possible DRAM helps gaming a lot, because... it increases Zen IPC.
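As a first-order sketch of that statement, throughput can be modelled as the product of IPC, clock frequency and core count. This assumes perfect scaling across cores and ignores memory and GPU limits, so it is an upper bound and not a prediction; the function name and the example figures are hypothetical.

```python
def relative_throughput(ipc, ghz, cores=1):
    """First-order throughput model: instructions per cycle x clock x cores.

    Assumes the workload scales perfectly across cores, which real
    software rarely does.
    """
    return ipc * ghz * cores


# Hypothetical single-thread comparison: chip B has 9% higher IPC and a
# higher clock than chip A. The two advantages multiply, they do not add.
chip_a = relative_throughput(ipc=1.00, ghz=4.0)
chip_b = relative_throughput(ipc=1.09, ghz=4.5)

print(f"chip B leads by {(chip_b / chip_a - 1) * 100:.1f}%")
```

The same model shows why none of the three factors is meaningless on its own: halving any one of them halves the product unless another factor makes up for it.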

https://hardforum.com/threads/an-in...ome-server-processor.1971333/#post-1043930871

Grow up about gromacs. Frequency OP.

I already answered you in that thread.

As I am about to order 1.5 million in new servers I'd love to see where you found that the new CPU's have hardware level mitigation's for Specter and Meltdown and their variants.

Most recent article I could find that clearly spelled this out some...

https://www.bit-tech.net/news/intel-details-spectre-meltdown-protection-in-its-latest-cpus/1/

So in answer.. yes... it is on SOME variants of specter and meltdown, and even then that is limited because some of the new chips are just die shrunk older chips running at a higher speed. (read as a lot of them.)

3a is Spectre not Meltdown.

Coffee Lake refresh has hardware mitigations for Meltdown and Foreshadow. Cascade Lake adds further hardware mitigations for Spectre v2.

And future microarchitectures such as Ice Lake will include further hardware mitigations. So new cores are recovering the IPC that they lost recently due to software patches.

You can choose to emphasize AVX workloads if they are important to you. He includes both the excl. 256b results and the results with 256b for a reason.

"Must be" is bad language. He provides both values. You want to accept the larger number because it works better for your argument. I accept the smaller number because it is more likely to represent the normal use case of an end user. However, if we were explicitly comparing enterprise datacenter usage, 14.4% is the value I would choose to use for that situation.

I am not emphasizing AVX workloads. I am saying that there is no reason to ignore them. Yes, he gives the results broken into three parts for further analysis, but when he mentions the IPC gap between AMD and Intel he gives 14.4%, because that is the real average, without eliminating workloads.

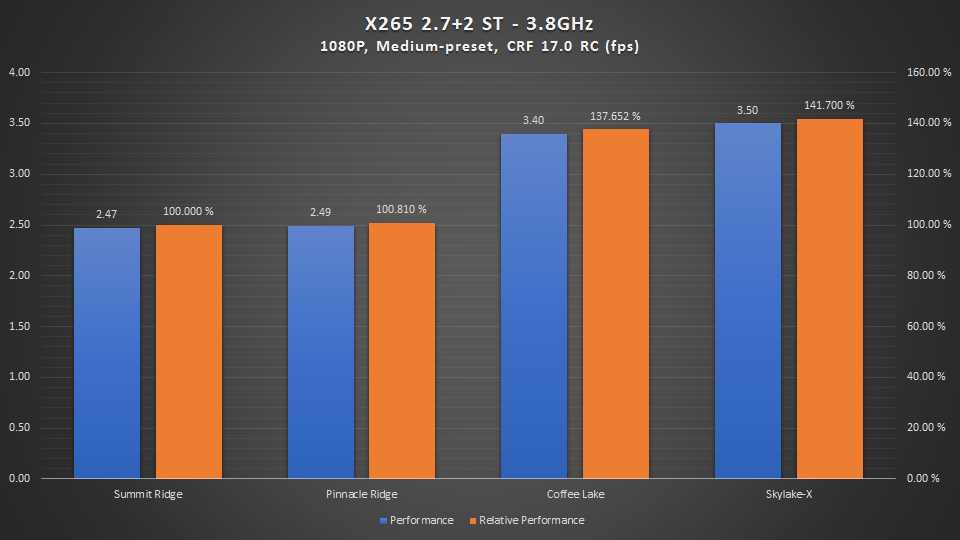

I could invert your claim and say that you want to accept the smaller number because it works better for your argument. However, I will simply mention that if a workload such as x265 supports AVX256, why would I ignore it? Because Intel IPC is 40% ahead?

I understand that something like GROMACS or NBODY is of little utility for most users here, but I see no reason why one has to ignore stuff such as x265 or Embree, and only keep x264 and Blender.

He selected tests which were latency sensitive as well as throughput sensitive. He did not use games directly because IPC is difficult to measure in a circumstance where the GPU affects the outcome. We know Intel's architecture is better for this. The Stilt resolved to determine by how much. In some latency-sensitive tests he conducted, Zen did quite poorly. The Stilt's analysis is the best, most comprehensive deep dive into Zen IPC anyone has yet conducted. If you don't think it's done right, YOU DO IT.

I have mentioned multiple times here and in other places that his reviews are deeper and more complete than many professional reviews. But the immense majority of his workloads are throughput-oriented. If he had tested games, then the IPC gap would have increased from the 14.4% he measured.

It is 9% excluding 256b workloads. It is 14% including them. In heavy AVX512 use cases, you'd be an idiot not to buy Intel right now for reasons that should be obvious to anyone with half a brain. I'm always amazed by your ability to use rhetorical pseudo-dialectic to put spin on your data. You will use real data values, but you will cherry pick the ones you choose to accept according to what makes Intel look best. You do not do so according to use case, which would be the proper way to do it. To understand what I mean, imagine if, instead of using The Stilt's 9% value, arrived at via comprehensive testing... I just used Cinebench R15 only and then told you Zen is only 3% or 4% behind in IPC. That would be bullshit. Cinebench is a benchmark. Nobody uses Cinebench (though it's at least based on a real rendering engine). You do the same by cherry picking those workloads which favor Intel's offerings - to which few (if any) in this forum will ever use.

It is amazing that you accuse me of cherry picking data, when my former claim "AMD Zen is currently about 10--15% behind Intel in IPC" is for ordinary x86 workloads. The 10% is for applications that don't include AVX256/512 (my 10% is what you call 9%). The 15% is for games. So, what I am saying is that AMD is about 10% behind in applications and about 15% behind in games.

Maybe you should try to understand what other people are writing before making unfounded accusations?

Now I can't actually recall if he did or not. I will take you at your word, though, and assume that he did.

If he did, it was entirely appropriate of him to do so. This is what you get, today, if you buy an AMD or an Intel CPU. The hardware mitigations - as I understand them - are essentially the same as the software ones in principle. I.e. IPC difference between a 9900k and an 8700k are negligible - probably non-existent. I read a couple tests on this recently, though I can't recall where exactly (I read too much of this shit). Perhaps you recall. In any event, if Intel releases an update that recovers some performance, then I will modify my position accordingly. If they release new hardware tomorrow that improves performance, we will assign new values to Intel IPC, thus increasing the gap. If AMD's Zen 2 improves IPC, then we will compare Zen 2 to all these...

This isn't hard.

Not only did he test with Meltdown/Spectre patches on Intel systems, but he also found a huge performance regression in GCC, which he couldn't eliminate even after manually disabling the Spectre/Meltdown patches to try to find the source of the regression in that benchmark.

Evidently, it was entirely appropriate that he enabled all the security patches on Intel. Did I say the contrary? No. What I am saying is that the performance regressions caused by the software patches are eliminated now in newest Intel hardware that includes hardware mitigations. So the performance he measured then isn't the performance that corresponds now to the new hardware, because the in-silicon mitigations don't suffer from the performance penalty of the older OS/compiler patches.

DuronBurgerMan

[H]ard|Gawd

- Joined

- Mar 13, 2017

- Messages

- 1,340

We've been through the rest a dozen times before, juanrga. But as for the hardware mitigations in CFL refresh, they don't appear to noticeably affect performance. I remember reading it somewhere... I went ahead and located it for you:

https://www.anandtech.com/show/1365...ith-spectre-and-meltdown-hardware-mitigations

A quote:

"As a result, this hardware fix appears to essentially be a hardware implementation of the fixes already rolled out via microcode for the current Coffee Lake processors."

We will have to wait for Cascade Lake for performance enhancements from hardware mitigations, probably.

Pieter3dnow

Supreme [H]ardness

- Joined

- Jul 29, 2009

- Messages

- 6,784

IPC is meaningless in gaming, if not already then certainly once Zen 2 arrives. Game engines use resources on both the GPU and the CPU; the reason you get a bottleneck is how the game engine in question uses them (API related as well), something juanrga and Hardware Unboxed/TechSpot fail to understand.

That is why I mentioned two hardware examples where software tailored to the hardware proves that IPC is meaningless.

When you run your game at a higher resolution it becomes less of a factor as well.

Software has become more multi-core oriented, and that, not IPC gains, will be the factor in how gaming evolves.

I already answered you in that thread.

Did you???

The source is requesting cores in a cluster, so showing the scaling of the cluster, not the scaling of the benchmark.

Serve The Home usually tests toy benchmarks such as Dhrystone and basic benchmarks like C-Ray, plus some other stuff. They only tested a single AVX512 workload; they didn't test any of the dozens of server/HPC AVX512 workloads.

I really don't think you understand software then.

Both Intel chips turbo to 3.7 GHz while the AMD chip turbos to 3.2 GHz.

0.5/3.2 = 0.15625

From the graph:

Intel

22-core 3.7/2.1 chip: about a 60% advantage

12-core 3.7/3.0 chip: 50%

AMD

32-core 3.2/2.2 chip: 0%

Turbo clocks have a +15% advantage.

If GROMACS scaled perfectly without turbos, the 22-core would have a 28% advantage over the 12-core (22 x 2.1 vs. 12 x 3.0), yet it has 7%.

This pretty much follows the curve I showed in the other thread.

You lamely explained this with "scaling of the cluster"

WTF does that even mean?

So any advantage over 16 cores is basically nothing and for every core after 12 you get less than 2% improvement.

Turbo + diminished returns + 10-30% IPC about equals the difference.
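
As a rough check of the percentages in this post, here is a minimal Python sketch. The dictionary layout and function name are illustrative assumptions; the core counts, clocks, and the 60%/50% advantages are the figures quoted above, and "cores times base clock" is only a crude ceiling for a perfectly scaling workload.

```python
# Figures quoted in the post: cores, base clock, turbo clock (GHz).
xeon_22c = {"cores": 22, "base_ghz": 2.1, "turbo_ghz": 3.7}
xeon_12c = {"cores": 12, "base_ghz": 3.0, "turbo_ghz": 3.7}
epyc_32c = {"cores": 32, "base_ghz": 2.2, "turbo_ghz": 3.2}


def aggregate_ghz(chip):
    """Cores times base clock: a crude ceiling assuming perfect scaling."""
    return chip["cores"] * chip["base_ghz"]


# Intel turbo clock relative to the AMD turbo clock (0.5 / 3.2).
turbo_advantage = xeon_22c["turbo_ghz"] / epyc_32c["turbo_ghz"] - 1

# What the 22-core would gain over the 12-core with perfect scaling.
ideal_advantage = aggregate_ghz(xeon_22c) / aggregate_ghz(xeon_12c) - 1

# What the graph shows: about 60% vs. about 50% over the EPYC.
observed_advantage = 1.60 / 1.50 - 1

print(f"turbo advantage:    {turbo_advantage:.1%}")
print(f"ideal 22c vs 12c:   {ideal_advantage:.1%}")
print(f"observed 22c vs 12c: {observed_advantage:.1%}")
```

The gap between the ideal and observed figures is what the post attributes to diminishing returns from extra cores.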

Edit: messed up some %

We've been through the rest a dozen times before, juanrga. But as for the hardware mitigations in CFL refresh, they don't appear to noticeably affect performance. I remember reading it somewhere... I went ahead and located it for you:

https://www.anandtech.com/show/1365...ith-spectre-and-meltdown-hardware-mitigations

A quote:

"As a result, this hardware fix appears to essentially be a hardware implementation of the fixes already rolled out via microcode for the current Coffee Lake processors."

We will have to wait for Cascade Lake for performance enhancements from hardware mitigations, probably.

He mentions that he didn't find a relevant penalty before and after applying the patches on Broadwell and Haswell servers. Then he adds "it was published in other media that some video game servers saw their game servers move from being 20% active to 50% active when the patches were active".

He doesn't find a relevant penalty on Coffee Lake, but a couple of posts above I gave you one example of a workload running 40% slower on Coffee Lake.

Game engines use resources on both the GPU and the CPU; the reason you get a bottleneck is how the game engine in question uses them (API related as well), something juanrga and Hardware Unboxed/TechSpot fail to understand.

Nonsense. Reducing the resolution and lowering the settings eliminates the GPU bottleneck and shows the real capabilities of the CPU. Anyone can see it in the pair of TechSpot images given above. At 4K Piledriver behaves like Zen because the GPU is the bottleneck. At 1080p Zen is much faster than Piledriver because the GPU is not.
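
The bottleneck argument can be sketched with a toy model in Python. This is only an illustration under a strong simplifying assumption (the frame rate is set by whichever of the CPU or GPU is slower), and every number below is hypothetical, not taken from the TechSpot charts.

```python
def delivered_fps(cpu_fps_limit, gpu_fps_limit):
    """Toy model: the slower of the two limits sets the frame rate."""
    return min(cpu_fps_limit, gpu_fps_limit)


# Hypothetical limits for illustration only, not measurements.
cpu_fps_limit = {"older CPU": 70, "newer CPU": 120}
gpu_fps_limit = {"4K": 60, "1080p": 200}

results = {
    (res, cpu): delivered_fps(cpu_cap, gpu_cap)
    for res, gpu_cap in gpu_fps_limit.items()
    for cpu, cpu_cap in cpu_fps_limit.items()
}

for (res, cpu), fps in results.items():
    print(f"{res:>5} {cpu}: {fps} fps")
```

In this model both CPUs deliver the same frame rate at 4K because the GPU limit is lower than either CPU limit, while at 1080p the difference between the CPUs becomes visible.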

NyxNightGoddess

Weaksauce

- Joined

- Jun 22, 2004

- Messages

- 66

The issue in all of this is, I think, that the OP wanted to know how well Zen/Zen+/Zen 2 games, not how well a server chip handles AVX512. We are going off on a tangent here. Yes, Intel server hardware has higher IPC. We all know this. That is where Intel is king, but Intel loses on performance per dollar. When Intel's 16-core server chip costs as much as AMD's 32-core server chip, we are being screwed as consumers. Intel charges such a premium for their less than 20% IPC gain on single-core performance that it is mind-blowing. Intel screwed us because they could. They are finding out that they are not going to be able to do that anymore. Intel is hurting and they know that. That's why Cascade Lake appeared out of nowhere. They know that they can't just sit around and twiddle their thumbs anymore. AMD is closing the gap and at a more competitive price. Two or three years ago I would have said buy Intel, but today no. Buy AMD. One, you are helping a business that wants to innovate, and two, you are showing Intel that we are not going to pay a premium for a name anymore.

Did you???

I really don't think you understand software then.

Both Intel chips turbo to 3.7 GHz while the AMD chip turbos to 3.2 GHz.

0.5/3.2 = 0.15625

From the graph:

Intel

22-core 3.7/2.1 chip: about a 60% advantage

12-core 3.7/3.0 chip: 50%

AMD

32-core 3.2/2.2 chip: 0%

Turbo clocks have a +15% advantage.

If GROMACS scaled perfectly without turbos, the 22-core would have a 28% advantage over the 12-core (22 x 2.1 vs. 12 x 3.0), yet it has 7%.

This pretty much follows the curve I showed in the other thread.

You lamely explained this with "scaling of the cluster"

WTF does that even mean?

So any advantage over 16 cores is basically nothing and for every core after 12 you get less than 2% improvement.

Turbo + diminished returns + 10-30% IPC about equals the difference.

Edit: messed up some %

That the workload scales well above 16 cores (0.9 scaling factor) is easy to see by comparing the scores of the EPYC 7351P and the EPYC 7451. Using the 16-core as baseline, the 24-core would be expected to score about 46, and it scores 40.

32 * 24/16 * 2.3GHz/2.4GHz = 46
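
The same projection, written out as a small Python sketch. The function name is an illustrative assumption; the scores (32 and 40), core counts, and clocks are the ones given in the post.

```python
def expected_score(base_score, base_cores, base_ghz, cores, ghz):
    """Project a score assuming linear scaling with cores and clock."""
    return base_score * (cores / base_cores) * (ghz / base_ghz)


# EPYC 7351P (16 cores, 2.4 GHz, score 32) used as the baseline
# to project the EPYC 7451 (24 cores, 2.3 GHz).
expected_7451 = expected_score(32, 16, 2.4, 24, 2.3)
measured_7451 = 40

# Fraction of the ideal projection that was actually achieved.
scaling_factor = measured_7451 / expected_7451

print(f"expected: {expected_7451:.1f}")
print(f"scaling factor: {scaling_factor:.2f}")
```

The measured score is roughly 0.87 of the projection, which is where the "0.9 scaling factor" comes from.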

Platinum and Gold have several differences, such as binning, TDP, and 1.5x more L3 per core. All of that affects performance and you are ignoring it.

Also, since this is a heavy compute workload that scales over all the cores, the single core turbos that you mention and use for your computations mean nothing.

It is not about core scaling; it is because a Skylake core does 64 FLOP per cycle whereas a Zen core does 16 FLOP per cycle. Also, we can invert your argument and compare the 22-core Xeon and the 16-core EPYC.

Gold 6152: 70 / (22 * 1.4) = 2.3

EPYC 7351P: 32 / (16 * 2.4) = 0.8

"2.3" is 2.9x higher IPC than "0.8", confirming that

The gap grows to about 2x or 3x on AVX256/512 workloads

But we have known this for years...
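
For anyone wanting to redo the per-core, per-GHz arithmetic above, a minimal Python sketch follows. The function name is an illustrative assumption, and "score per core per GHz" is only the proxy for IPC that the post uses; the scores and clocks (including 1.4 GHz for the Gold 6152) are taken from the post as given.

```python
def score_per_core_ghz(score, cores, ghz):
    """Benchmark score normalised by core count and clock (IPC proxy)."""
    return score / (cores * ghz)


gold_6152 = score_per_core_ghz(70, 22, 1.4)
epyc_7351p = score_per_core_ghz(32, 16, 2.4)

# Ratio of the unrounded values.
ratio = gold_6152 / epyc_7351p

print(f"Gold 6152:  {gold_6152:.2f}")
print(f"EPYC 7351P: {epyc_7351p:.2f}")
print(f"ratio:      {ratio:.2f}")
```

Dividing the unrounded values gives about 2.7x; the 2.9x figure comes from dividing the rounded 2.3 by the rounded 0.8.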

Pieter3dnow

Supreme [H]ardness

- Joined

- Jul 29, 2009

- Messages

- 6,784

Nonsense. Reducing the resolution and lowering the settings eliminates the GPU bottleneck and shows the real capabilities of the CPU. Anyone can see it in the pair of TechSpot images given above. At 4K Piledriver behaves like Zen because the GPU is the bottleneck. At 1080p Zen is much faster than Piledriver because the GPU is not.

Techspot is useless

The issue in all of this is, I think, that the OP wanted to know how well Zen/Zen+/Zen 2 games, not how well a server chip handles AVX512. We are going off on a tangent here. Yes, Intel server hardware has higher IPC. We all know this. That is where Intel is king, but Intel loses on performance per dollar. When Intel's 16-core server chip costs as much as AMD's 32-core server chip, we are being screwed as consumers. Intel charges such a premium for their less than 20% IPC gain on single-core performance that it is mind-blowing. Intel screwed us because they could. They are finding out that they are not going to be able to do that anymore. Intel is hurting and they know that. That's why Cascade Lake appeared out of nowhere. They know that they can't just sit around and twiddle their thumbs anymore. AMD is closing the gap and at a more competitive price. Two or three years ago I would have said buy Intel, but today no. Buy AMD. One, you are helping a business that wants to innovate, and two, you are showing Intel that we are not going to pay a premium for a name anymore.

The main discussion is about games and IPC. AMD IPC is about 15% behind in games. This and the clock difference are the reasons why Intel is better for gaming. The sub-discussion about AVX512 was started by people who still keep denying that Intel has a 2x or 3x IPC lead in heavy AVX workloads.

Intel loses on performance per dollar, because price is a nonlinear function of performance. IPC is a nonlinear function of number of transistors. So getting 20% higher IPC costs about 40% more, not 20%. Similarly getting a node capable of 5GHz costs much more than a node that only does 4GHz.

So if you compare a fast core and a slow core, the slow core will win in performance per dollar. It is the reason why a R7 1800X loses to a R7 1700X in performance per dollar.
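
The performance-per-dollar point can be illustrated with the 20%/40% figures from this post. This is a minimal Python sketch with normalised, illustrative values (baseline performance and price both set to 1.0), not real prices.

```python
def perf_per_dollar(perf, price):
    """Relative performance divided by relative price."""
    return perf / price


# Baseline core: performance 1.0 at price 1.0 (normalised).
baseline = perf_per_dollar(1.0, 1.0)

# Faster core: 20% more performance for about 40% more money.
faster = perf_per_dollar(1.2, 1.4)

print(f"baseline: {baseline:.3f}")
print(f"faster:   {faster:.3f}")
```

Under these assumptions the faster core delivers roughly 14% less performance per dollar than the baseline, even though it is faster in absolute terms.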

Moreover, for servers, AMD is using an MCM approach that further reduces price, but at the expense of additional performance and power penalties. The multi-die approach in EPYC is the reason why it has the same internal latencies as a four-socket Broadwell.

Cascade Lake is plan B. Cascade Lake was born from the 10nm fiasco. The original plan was Cannon Lake on 10nm for 2016.

That the workload scales well above 16 cores (0.9 scaling factor) is easy to see by comparing the scores of the EPYC 7351P and the EPYC 7451. Using the 16-core as baseline, the 24-core would be expected to score about 46, and it scores 40.

32 * 24/16 * 2.3GHz/2.4GHz = 46

Platinum and Gold have several differences, such as binning, TDP, and 1.5x more L3 per core. All of that affects performance and you are ignoring it.

Also, since this is a heavy compute workload that scales over all the cores, the single core turbos that you mention and use for your computations mean nothing.

It is not about core scaling; it is because a Skylake core does 64 FLOP per cycle whereas a Zen core does 16 FLOP per cycle. Also, we can invert your argument and compare the 22-core Xeon and the 16-core EPYC.

Gold 6152: 70 / (22 * 1.4) = 2.3

EPYC 7351P: 32 / (16 * 2.4) = 0.8

"2.3" is 2.9x higher IPC than "0.8", confirming that

That would all be almost well and good if this chart didn't exist.

(source)

Oh and that chart is actually skewed to look less dramatic due to the x axis being spaced 4 8 12 4.

I will concede that nil actually happens after 28.

and your math seems to be a bit off here

Gold 6152: 70 / (22 * 1.4) = 2.3

EPYC 7351P: 32 / (16 * 2.4) = 0.8

"2.3" is 2.9x higher IPC than "0.8", confirming that

If the Gold were a 1.4 GHz processor, that math would work. But it's a 2.1 GHz part.

So it's 1.5, which is 0.9x higher than 0.8

Edit: Semantically the above is wrong too.

"2.3" is 1.9x higher than "0.8"

Although you could say

2.3 is 2.9x the value of 0.8

Once you use higher you are talking about the difference not the absolute.

endedit
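
The distinction drawn in the edit above, between "x times the value" and "x times higher", can be spelled out in a short Python sketch. The function names are illustrative assumptions; the inputs are the rounded figures used in the posts (2.3, 1.5, and 0.8).

```python
def times_the_value(a, b):
    """How many times b fits into a (an absolute ratio)."""
    return a / b


def times_higher(a, b):
    """How much larger a is than b, as a multiple of b (a difference)."""
    return a / b - 1


# Using the 1.4 GHz figure: 2.3 vs. 0.8.
print(f"2.3 is {times_the_value(2.3, 0.8):.1f}x the value of 0.8")
print(f"2.3 is {times_higher(2.3, 0.8):.1f}x higher than 0.8")

# Using the 2.1 GHz figure: 70 / (22 * 2.1) is about 1.5.
gold_at_2_1 = 70 / (22 * 2.1)
print(f"Gold 6152 at 2.1 GHz: {gold_at_2_1:.1f}")
print(f"1.5 is {times_higher(1.5, 0.8):.1f}x higher than 0.8")
```

Which clock is the right divisor (2.1 GHz or 1.4 GHz) is exactly what the two posters disagree on; the sketch only reproduces the arithmetic for each choice.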

As I stated in my other post above, there are cases where you can see dramatic differences, and yes, if you throw AVX512 into the mix, 2x throughput can be seen. However, this isn't ubiquitous, as most software is a bit more complicated than that.

Oh and please link to your sources. Those single thread benches need context.

If the Gold were a 1.4 GHz processor, that math would work. But it's a 2.1 GHz part.

I already commented that the graph measures cluster scaling, proved that GROMACS has a 0.9 scaling factor above 16 cores, and compared a 22-core Intel vs. a 16-core AMD, making your core scaling-up argument moot, but you can insist.

About frequencies, 2.1 GHz is the base clock for x86 stuff. For AVX512 stuff such as GROMACS the base clock is 1.4 GHz. I stop here.
