Hi _Gea,

What kind of speeds are you seeing on your NFS? I'm currently using a separate NAS but speeds are not as good as I was hoping for so I'm debating whether I should move to a virtualized NAS/SAN. Are you using NFS in sync or async mode?

Thanks

It's hard to answer because you have not described

- your workload and use case

- the performance you expect or need

- your hardware

Some examples:

If you have some Macs for video production, it makes no sense to virtualize a NAS. You also do not need to think about sync versus async NFS - it is async by default. That is OK, because if the power fails while a multi-GB video file is being updated, you have to expect a damaged file either way, and you need a working snap or backup.

If you use a database with critical data, or ESXi with NFS as shared storage, you cannot accept data loss on a power outage. In this case you need sync writes - even if they are 100x slower, or you need an expensive (3k) Zeus RAM disk for the best sync performance.
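If you want a rough feeling for how much sync writes cost on your own storage, something like the sketch below can help. It is only an approximation and not a real benchmark: it writes small blocks to a file on the mounted share, once buffered and once with `os.fsync` after every block, which forces the client to commit each block to stable storage. The function names, block size and block count are my own picks for illustration, and the result depends heavily on the client, the mount options and the server.

```python
import os
import sys
import tempfile
import time


def time_writes(path, block_count=200, block_size=4096, sync=False):
    """Write block_count blocks to path and return the elapsed seconds.

    With sync=True every block is flushed and fsync'ed before the next
    one is written, which roughly imitates a sync-write workload.
    """
    block = b"\0" * block_size
    start = time.perf_counter()
    with open(path, "wb") as f:
        for _ in range(block_count):
            f.write(block)
            if sync:
                f.flush()             # push Python's buffer to the OS
                os.fsync(f.fileno())  # ask the OS to commit to stable storage
    return time.perf_counter() - start


def compare(directory, block_count=200, block_size=4096):
    """Time buffered and fsync'ed writes in directory; return both timings."""
    results = {}
    for label, sync in (("async", False), ("sync", True)):
        fd, path = tempfile.mkstemp(dir=directory)
        os.close(fd)
        try:
            results[label] = time_writes(path, block_count, block_size, sync)
        finally:
            os.remove(path)  # do not leave test files on the share
    return results


if __name__ == "__main__":
    # Pass the mount point of the NFS share as the first argument;
    # without an argument the local temp directory is used.
    target = sys.argv[1] if len(sys.argv) > 1 else tempfile.gettempdir()
    for label, seconds in compare(target).items():
        print(f"{label}: {seconds:.4f} s")
```

Run it with the mount point of the share as the argument and compare the two numbers. A large gap means small sync writes are expensive on that setup, which is the case where a fast log device pays off.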

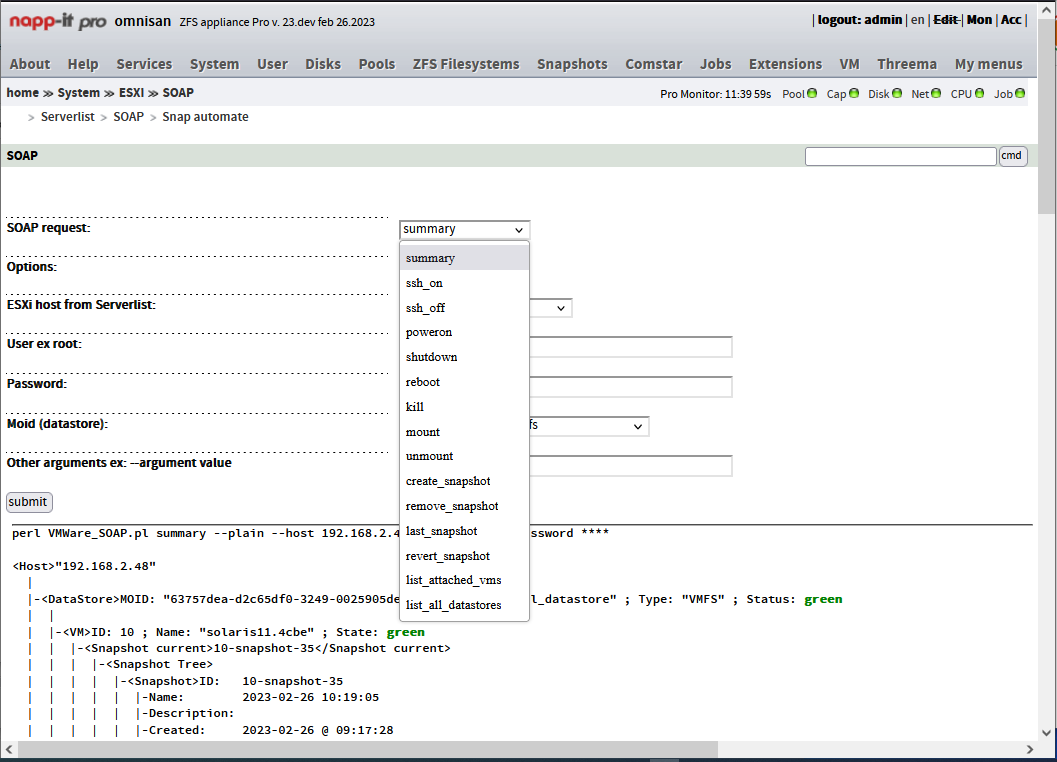

If you want SAN-like features such as ultra-fast RAM-based data caching, snaps, fast multiprotocol access to your data, shared storage etc. with ESXi, you need a NAS/SAN.

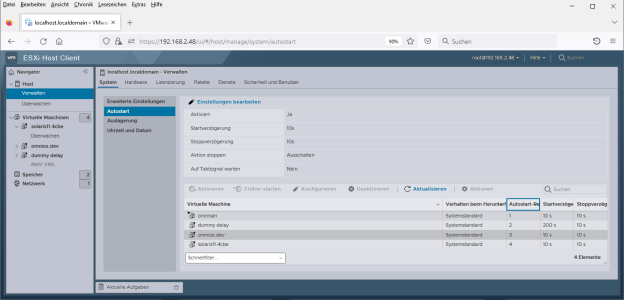

If your use is lab-like, or if your traffic is mostly between SAN and ESXi over the ESXi virtual switch, then you may consider all-in-one, because you do not need expensive FC SAN hardware to get multi-GB transfer rates with low latency between SAN and ESXi. Seen from outside, all-in-one is identical to a conventional setup with ESXi servers and a dedicated shared SAN connected via high-speed hardware.

Not to mention the other advantages, like less hardware, less energy and less cabling,

with the downside of slightly higher complexity, lower performance compared to two high-end boxes, and different update strategies.
