Yes, it's all speculation at this point.

But from specs alone, you can normally (though of course not always) spot why the next generation's card should or should not be significantly faster than the previous gen's.

This is purely speculation on my part:

Kepler to Maxwell 2 -- 780 vs 980 (the 780 is actually a Kepler part, not Maxwell) was more of a minor improvement plus a big 20-30% clock speed increase, hence the ~20% improvement

Maxwell 2 to Pascal -- 980 vs 1080 had a large improvement, probably because it combined more CUDA cores with vastly higher clock speeds.

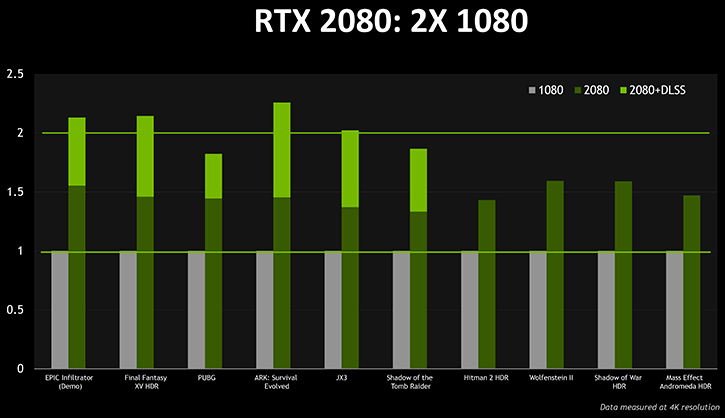

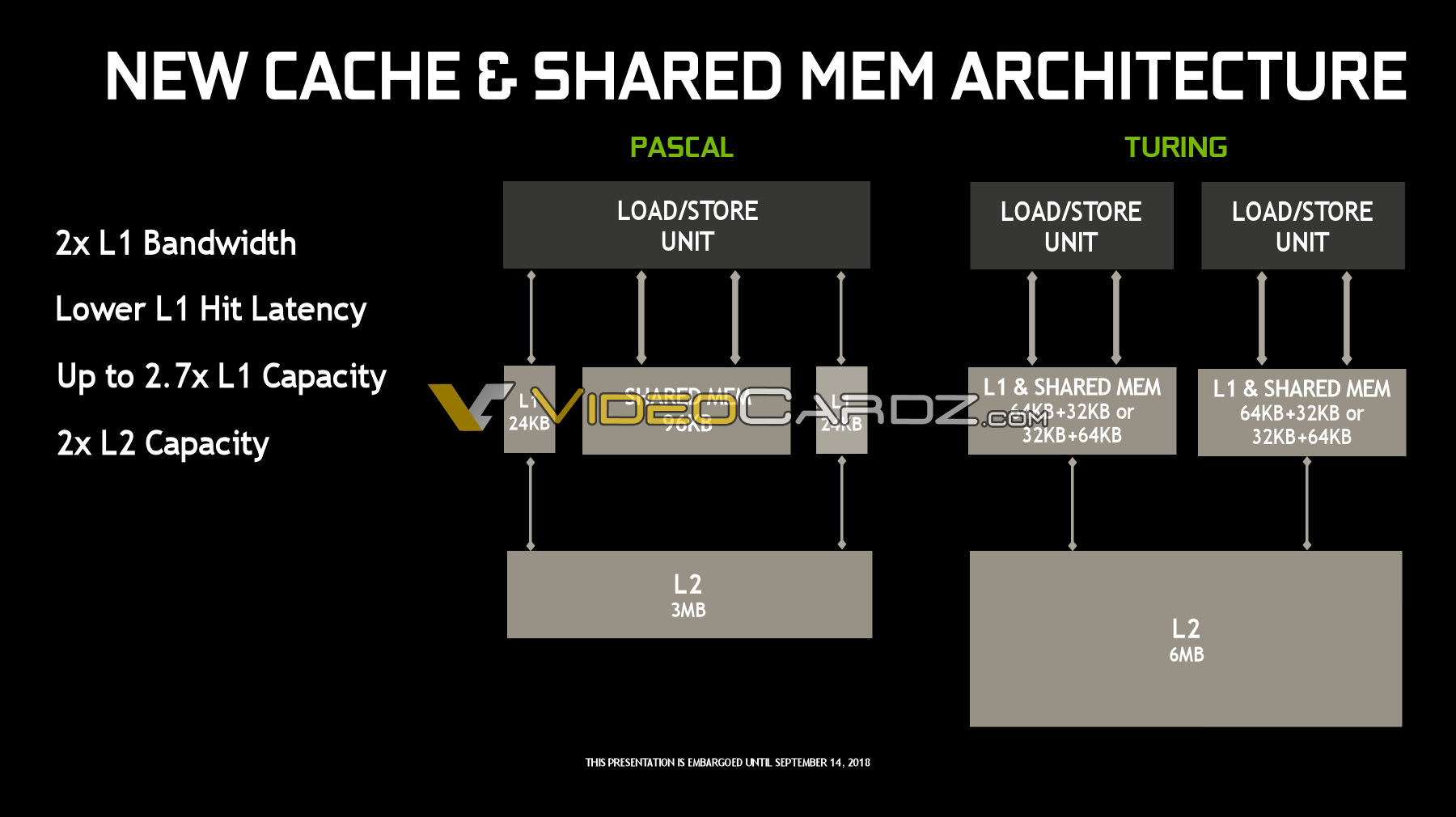

Pascal to Turing (1070 vs 2070, 1080 vs 2080) -- seems like a wash in terms of CUDA cores and clock speeds. Not expecting a very large jump unless the architecture itself is vastly superior to Pascal's. The 2080 Ti seems to bring a lot to the table though, except I'm not willing to pay for it given the games I play.
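For anyone who wants to put a number on the "cores times clocks" reasoning above, here is a rough Python sketch. It assumes the usual paper formula of 2 FLOPs (one fused multiply-add) per CUDA core per clock, and the core counts and boost clocks are the reference specs as I remember them (980: 2048 cores at 1216 MHz, 1080: 2560 cores at 1733 MHz), so check them against the official spec sheets. The function names are just made up for illustration. It says nothing about architecture, memory bandwidth, or real game performance.

```python
def fp32_tflops(cuda_cores, boost_mhz):
    """Theoretical peak FP32 throughput in TFLOPS.

    Assumes 2 FLOPs (one fused multiply-add) per CUDA core per clock.
    """
    return 2 * cuda_cores * boost_mhz * 1e6 / 1e12


def paper_uplift(old, new):
    """Ratio of theoretical throughput between two (cores, boost_mhz) tuples."""
    return fp32_tflops(*new) / fp32_tflops(*old)


# Reference specs quoted from memory (assumption, verify before relying on them).
GTX_980 = (2048, 1216)
GTX_1080 = (2560, 1733)

if __name__ == "__main__":
    ratio = paper_uplift(GTX_980, GTX_1080)
    print(f"980 -> 1080 paper uplift: {ratio:.2f}x")
```

On paper that works out to roughly 1.8x for the 980 to 1080 step, which is the kind of gap you would expect to show up as a big real-world jump. Plug in the Turing numbers once they are confirmed and see how far from 1.0x you land.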

Just one point on your post. The performance jump from Maxwell to Pascal was down to the major die shrink. Remember they went from 28nm to 16nm, skipping 20nm. Now, I know 20nm to 16nm isn't a real node shrink, and neither is 16nm to 12nm, so I am not sure there will be any performance increase from the change to 12nm, maybe just a little power saving. Like you, I am not expecting a large jump in performance, apart from games that use the ray tracing and AI stuff.
