demondrops
Limp Gawd · Joined Jul 7, 2016 · 422 messages

I think prices normalizing over the long term is a good bet. For one thing, the mining market will eventually be saturated. There won't be nearly as many new people jumping in, and most purchases will be marginal upgrades, which are much less motivated buys.

Yep. Even if they reach the power capacity of your home, they will just start upgrading their slower cards.

What makes you think miners won't simply continue to add capacity? As long as the profitability and ROI are there, they will continue to buy and add cards. When you're essentially printing money, what motivation is there not to do more?
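
The miners' keep-buying logic comes down to a payback-period check. A minimal Python sketch, assuming flat daily revenue and power cost; the function names and all dollar figures are hypothetical, not real market data:

```python
def payback_days(card_cost: float, daily_revenue: float, daily_power_cost: float) -> float:
    """Days until a card pays for itself; infinity if it never turns a profit."""
    daily_profit = daily_revenue - daily_power_cost
    if daily_profit <= 0:
        return float("inf")
    return card_cost / daily_profit


def worth_buying(card_cost: float, daily_revenue: float,
                 daily_power_cost: float, horizon_days: float) -> bool:
    """Buy if the card pays for itself before the assumed profitable horizon ends."""
    return payback_days(card_cost, daily_revenue, daily_power_cost) < horizon_days


# Hypothetical example: a $700 card earning $4.50/day with $1.00/day of power.
print(payback_days(700.0, 4.50, 1.00))         # 200.0
print(worth_buying(700.0, 4.50, 1.00, 365.0))  # True
```

As long as the payback period stays shorter than the time miners expect profitability to last, every extra card looks worth buying; saturation shows up as falling daily revenue, which stretches the payback period until it no longer fits.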

I think it will be around a 50-60% improvement across the board. I'm expecting a Titan X to drop 2-3 months after the small chip, which is about the perfect time to come out with the delayed 4K 144 Hz G-Sync displays.

LOL, 50-60% gain? LOL, that's not happening.

A 2080 beating a 1080 by 50-60% is very possible.

Funny, a Titan V can't even beat a 1080 Ti by much more than 15%, and usually less than that. I don't see a 2080 doing much better than 20% faster than a 1080. Pascal was a home run from an engineering standpoint, so expecting another one is just not happening.

It's not a gaming card to begin with, and on top of that it's a cut-down GV100 with 3/4 of the memory speed. And I see you already forgot Maxwell, for example. AMD will be 3 generations behind on the gaming front come April, just as they are now in the HPC segment.

To be fair, Kepler to Maxwell was only 30-40%, but we were at the end of the 28nm life cycle at that point.

It's happened with every architecture change in the past 8 years; I don't see why it would stop now. Going from GP104 to GA104, we will see a minimum of 50% improvement.

Why are you talking about AMD in an Nvidia thread? I didn't even mention them. Gaming and compute are nearly the same path; the only difference is the removal of the tensor cores, and memory speed will only make a tiny difference. I expect many to be disappointed, especially if they think a 50% gain is coming. It will be a minor update that I think many will pass on. You sound like Razor did before the Titan V came out and we saw numbers: he expected a large gain and it was not there. Expecting the gaming version to be miles ahead of the compute version is just not likely at all. We shall see how it turns out around July or so.

The Titan V isn't even a GeForce card. Also, there is a lot more to it than Tensor cores; FP64, for example. At the same time, memory bandwidth will see a massive increase thanks to GDDR6. Not to mention the higher clocks it will get as a gaming card.
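
Peak memory bandwidth is bus width times per-pin data rate. A quick Python sketch comparing the GTX 1080's 256-bit, 10 Gbps GDDR5X with a hypothetical 256-bit card on 14 Gbps GDDR6; the GDDR6 configuration is an assumption, since nothing about the new cards' memory setup is confirmed here:

```python
def bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width (bits) x per-pin rate (Gbit/s) / 8."""
    return bus_width_bits * data_rate_gbps / 8


gtx_1080 = bandwidth_gb_s(256, 10.0)            # GDDR5X at 10 Gbps: 320 GB/s
hypothetical_gddr6 = bandwidth_gb_s(256, 14.0)  # assumed 256-bit GDDR6 at 14 Gbps
print(gtx_1080, hypothetical_gddr6, hypothetical_gddr6 / gtx_1080)
```

Under those assumptions the same bus width gets about 40% more bandwidth; whether that turns into frames depends on whether a game was bandwidth-bound to begin with.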

Gaming and compute cards are very different in how the chip's resources are proportioned.

Kepler->Maxwell->Pascal and now this. History will continue. Funny how it works out when you can fund the R&D.

And now the cards are out in July? Oh, the bitterness you hold.

There is simply no way Nvidia would launch normal (non-Titan-class) products at those price points.

1. They sell to OEMs, who sell systems that could not absorb those sorts of costs (even at OEM discounts).

2. They would never give AMD a window of price advantage that wide. That's basically abdicating the entire "upper mainstream" market.

FP64 is in Pascal as well. GDDR6 will help some, but that assumes memory bandwidth is a bottleneck in the first place. I have seen higher-clocked Titan V cards (granted, not the gaming card), and it didn't help much. The rumor is an August (Q3) launch, so I am actually being generous with my guess of a July launch. Bitterness is all you and always has been.

You are grasping at straws. Now you're even comparing 6,000-7,500 GFLOPS of FP64 to ~350 GFLOPS.
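
Those FP64 numbers follow from cores x clock x 2 FLOPs per fused multiply-add, scaled by each chip's FP64 rate. A Python sketch assuming published reference specs that are not stated in this thread (Titan V: 5120 CUDA cores, 1200 MHz base, 1455 MHz boost, FP64 at 1/2 rate; GTX 1080 Ti: 3584 cores, 1582 MHz boost, FP64 at 1/32 rate):

```python
def peak_gflops(cuda_cores: int, clock_mhz: float, rate: float = 1.0) -> float:
    """Peak GFLOPS: cores x clock x 2 FLOPs per FMA, scaled by the precision rate."""
    return cuda_cores * clock_mhz * 2 * rate / 1000.0


# Assumed reference specs; actual clocks vary with boost and throttling.
titan_v_fp64_base = peak_gflops(5120, 1200, rate=1 / 2)   # FP64 at 1/2 of FP32
titan_v_fp64_boost = peak_gflops(5120, 1455, rate=1 / 2)
gtx_1080_ti_fp64 = peak_gflops(3584, 1582, rate=1 / 32)   # FP64 at 1/32 of FP32

print(f"Titan V FP64: {titan_v_fp64_base:,.0f}-{titan_v_fp64_boost:,.0f} GFLOPS")
print(f"GTX 1080 Ti FP64: {gtx_1080_ti_fp64:,.0f} GFLOPS")
```

With these assumed specs the sketch lands at roughly 6,144-7,450 GFLOPS versus about 354 GFLOPS, in line with the figures in the post.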

So now July is generous of you? Please tell us what you really expect, then.

Titan V is ROP-limited; I already discussed this in the Volta thread. By cutting CUDA cores (the ROPs are attached to the SMX blocks), and with the fairly low clocks needed to keep it within a certain TDP given the humongous die and all that extra non-gaming silicon, it actually has less pixel fillrate than a 1080 Ti! Both have close to the same ROP counts, 96 vs 88, but the Titan V runs at much lower MHz, so its effective fillrate is lower.
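
Peak pixel fillrate is roughly ROP count times core clock. A minimal Python sketch, assuming one pixel per ROP per clock and reference base/boost clocks that are not given in the post (Titan V 1200/1455 MHz, GTX 1080 Ti 1480/1582 MHz):

```python
def pixel_fillrate_gpix(rops: int, clock_mhz: float) -> float:
    """Peak pixel fillrate in Gpixels/s, assuming one pixel per ROP per clock."""
    return rops * clock_mhz / 1000.0


# Assumed reference clocks (MHz); real cards boost and throttle around these.
cards = {
    "Titan V": (96, 1200, 1455),
    "GTX 1080 Ti": (88, 1480, 1582),
}
for name, (rops, base, boost) in cards.items():
    print(f"{name}: {pixel_fillrate_gpix(rops, base):.1f} Gpix/s base, "
          f"{pixel_fillrate_gpix(rops, boost):.1f} Gpix/s boost")
```

At base clocks the Titan V comes out behind (115.2 vs 130.2 Gpix/s); at rated boost the two are practically tied, so the comparison hinges on the clocks each card actually sustains in a given game.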

It's 20% faster than a 1080 Ti while being ROP-limited! That is why, in pure compute tests, it's 50-100% faster depending on the application.

We have seen many times that NV's GPUs scale very well with increased core counts when the other parts scale proportionately too. This time they couldn't do that with the Titan V: it has so much more shader potential but no extra fillrate (less, as I explained before).

Also, look at the arrangement of CUDA cores in the SMs: NV's compute cards have half as many CUDA cores per SM as the gaming cards. Essentially it's a different cache and core layout; the ASIC is different! This will make a big difference in driver optimizations for games. I expect to see the same with Volta vs. whatever the gaming GPUs will be called.
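
The per-SM difference falls out of dividing total CUDA cores by SM count. A short Python sketch, assuming the published enabled-unit configurations (Titan V: 5120 cores across 80 SMs; GTX 1080 Ti: 3584 cores across 28 SMs), which are not listed in the post itself:

```python
def cuda_cores_per_sm(total_cores: int, sm_count: int) -> int:
    """FP32 CUDA cores in each streaming multiprocessor (SM)."""
    if total_cores % sm_count:
        raise ValueError("core count should divide evenly across SMs")
    return total_cores // sm_count


# Assumed published configurations for the enabled units on each card.
print("Titan V (GV100):", cuda_cores_per_sm(5120, 80))      # 64
print("GTX 1080 Ti (GP102):", cuda_cores_per_sm(3584, 28))  # 128
```

The compute-oriented Pascal chip, GP100, likewise uses 64 FP32 cores per SM versus 128 on the gaming GP102/GP104.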

I am not as convinced as you are that it's ROP-limited, though; the clock speed is no doubt hurting it. As for the 20%, I have also seen the 1080 Ti tie or beat the Titan V. Both are outliers, though, and one would expect it to win in pure compute. I just don't see this 50% increase coming. I think 20% is far more likely, as I just don't think they will get the clocks they need for a large improvement. On the plus side, if I am wrong, then we end up with a better card we can buy; shame it likely won't be cheaper, though.

I'm 100% positive it is. We can see what resolution does to the Titan V vs. the 1080 Ti, lol. What does resolution affect? Pixel fillrate. That is the only metric where the 1080 Ti and Titan V are roughly even. And the titles that show this well are limited more by pixel fillrate than by any other part of the GPU.

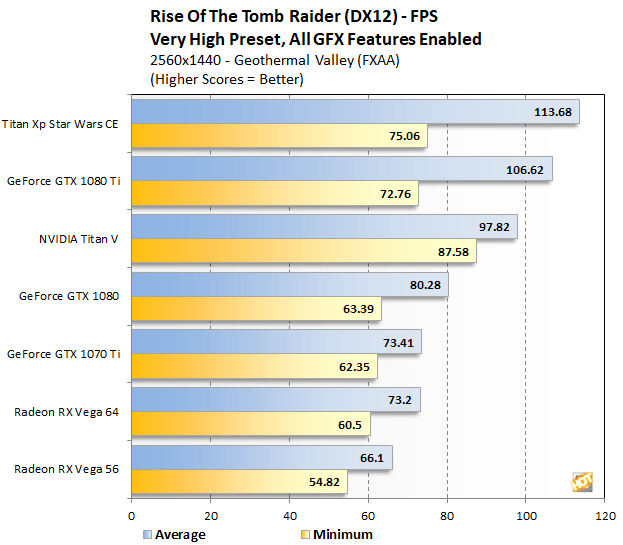

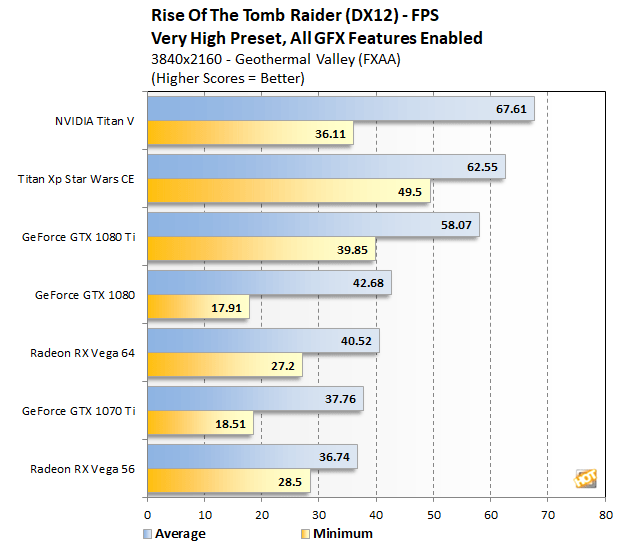

https://hothardware.com/reviews/nvidia-titan-v-volta-gv100-gpu-review?page=3

Look at these titles.

Look at FPS in relation to resolution. You can see the Titan V is getting bottlenecked.

You can't say it's not, because as the resolution gets higher there is an equalizing effect. Now, is it a CPU bottleneck? I don't think so, because that would equalize the numbers for all of the graphics cards. There is only one place to look: the GPU. So what on the GPU is bottlenecking? Simple.
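
The resolution argument rests on pixel count: each step up multiplies the pixels every frame has to write. A minimal Python sketch of that arithmetic; it only counts final-image pixels at an assumed 60 FPS, so real ROP load (overdraw, blending, post-processing) is higher, and the numbers alone don't prove which unit is the bottleneck:

```python
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixels_per_second(width: int, height: int, fps: float) -> float:
    """Final-image pixels written per second; ignores overdraw, blending and MSAA."""
    return width * height * fps


base_pixels = 1920 * 1080
for name, (w, h) in RESOLUTIONS.items():
    ratio = (w * h) / base_pixels
    gpix = pixels_per_second(w, h, 60) / 1e9
    print(f"{name}: {ratio:.2f}x the pixels of 1080p, {gpix:.3f} Gpix/s at 60 FPS")
```

4K carries exactly four times the pixels of 1080p, so any per-pixel limit weighs four times as heavily there.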

To make it even more obvious:

A ~13% overclock is giving the Titan V a 19% performance boost. Yeah, it's bottlenecked, and heavily bottlenecked by its fillrate in these titles. The only time we will ever see a performance increase larger than the overclock amount is when a bottleneck is being alleviated.

This happens in more than one game to boot.
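
The overclock argument compares the performance gain with the clock gain. A small Python sketch of that ratio using the post's figures (~13% overclock, 19% more performance); the function name is hypothetical:

```python
def scaling_ratio(perf_gain_pct: float, clock_gain_pct: float) -> float:
    """Performance gained per percent of clock gained; 1.0 means perfectly linear."""
    if clock_gain_pct <= 0:
        raise ValueError("clock gain must be positive")
    return perf_gain_pct / clock_gain_pct


ratio = scaling_ratio(19.0, 13.0)  # figures from the post: ~13% OC, 19% more FPS
print(f"{ratio:.2f}")  # 1.46
```

A ratio above 1.0 means the measured gain outran the nominal clock increase. The ratio by itself doesn't say which limit was lifted: the effective clock may have risen more than the nominal figure (for example if stock clocks were throttling), or something other than core clock may have been relieved.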

You're the one who posted as if Pascal didn't have it; I corrected you. I have no doubt they will make changes for the consumer card, but it just won't help as much as you think it will.

Look at the 0.1% and 1% lows, though; they're horrible on the Titan V in Doom. It also happens in Grand Theft Auto and a few other titles, and that just does not scream a ROP issue to me. I mean, heck, a Vega 64 running at stock has better frame times than a Titan V at stock. I have seen it do that in other benchmarks, though not all the time; this is why I tend to discount the ROPs as an issue. Then there is stuff like this:

Looking at overall performance expectations then, the Titan V is clearly the fastest of the Titans. And yet outside of compute, the advantage for graphics is much smaller. Relative to the Titan Xp we’re looking at just a 14% on-paper advantage in FP32 shader throughput, and thanks to the slightly lower clockspeed an actual ROP throughput disadvantage. The real-world impact of these differences will play out differently among different programs and games, as we’ll see. But it’s an important piece of context all the same. GV100 has a lot of hardware that really only helps compute performance, and from a power standpoint that hardware is a liability. This is why NVIDIA creates differentiated consumer and compute-focused GPUs, and why GV100 isn’t quite as potent for gaming as it may seem.
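
The 1% and 0.1% lows mentioned for Doom summarize the slowest frames, which an average FPS figure hides. A minimal Python sketch using one common convention (average FPS over the slowest 1% or 0.1% of frames; some tools instead convert the 99th or 99.9th percentile frame time to FPS, which gives somewhat different numbers). The frame-time traces are made up for illustration:

```python
import math


def average_fps(frame_times_ms: list[float]) -> float:
    """Frames rendered divided by total time."""
    return len(frame_times_ms) * 1000.0 / sum(frame_times_ms)


def low_fps(frame_times_ms: list[float], fraction: float) -> float:
    """Average FPS over the slowest `fraction` of frames (0.01 -> "1% low")."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, math.ceil(len(slowest_first) * fraction))
    worst = slowest_first[:count]
    return count * 1000.0 / sum(worst)


# Two hypothetical runs with the same total time (10.4 s for 1,000 frames).
smooth = [10.4] * 1000
stutter = [10.0] * 990 + [50.0] * 10
for name, run in (("smooth", smooth), ("stutter", stutter)):
    print(f"{name}: avg {average_fps(run):.1f} FPS, "
          f"1% low {low_fps(run, 0.01):.1f}, 0.1% low {low_fps(run, 0.001):.1f}")
```

Both runs average about 96 FPS, but the second has 1% and 0.1% lows of 20 FPS, which is the kind of gap a bar chart of average FPS never shows.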

https://segmentnext.com/2018/02/28/nvidia-ampere-gaming-graphics-cards-release/

I don't know how much truth there is in this article, but rumor has it these cards will be LOCKED for MINING, basically shutting the crypto miners out.

Now that it seems (relatively substantiated) that production won't start until June, with an announcement sometime in June and a launch in July (or August) for Ampere, I've decided to hold onto one of my 1080 Tis.

https://wccftech.com/rumor-nvidia-t...eforce-lineup-ampere-to-succeed-volta-in-hpc/

The bright side of the longer wait is that the crypto market could have somewhat stabilized by then, bringing prices down a bit from bonkers levels.

We must have different definitions of “substantiated”. All I’ve seen are rumors from shitty websites with long histories of talking out their asses for page clicks.

What you might actually be seeing here is just the power delivery being increased enough to normalize the performance. The stock numbers are clearly throttling, which limits performance in stock scenarios; hence the 'artificial' bottleneck look in the graph.

Right out of the box, the TITAN V will actually hit its default power and temperature targets while under a sustained load, which causes the card to quickly bounce in and out of boost states. Its default boost clock is 1,455MHz, so simply increasing the power and temperature targets unlocked quite a bit of frequency. We saw a peak GPU clock of 1,815MHz, which is quite a significant jump without touching the offset frequency. Even with additional cooling and frequency manipulation, however, we suspect the TITAN V will be power limited -- as you can see, we hit the power limit even with the target maxed out. We have to do some more experimenting here.

Read more at https://hothardware.com/reviews/nvidia-titan-v-volta-gv100-gpu-review?page=6#OPIczhxGPWV8R7pl.99

I am still doing some more analysis on this, but the power draw of the Titan V appears to be quite interesting. As I pointed out on the first page, the clock speeds start in the 1700+ MHz range, but quickly drop to the 1550-1600 MHz range under sustained usage. That is a bigger variance than normal and our power testing shows an interesting profile as well.
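
How big an overclock looks depends on which stock clock it is measured against. A small Python sketch using the clocks quoted in these two posts (1,455 MHz rated boost, 1,550-1,600 MHz sustained at stock, 1,815 MHz peak with raised targets); a peak clock is not a sustained one, so these are upper bounds on the real uplift:

```python
def uplift_pct(new_mhz: float, old_mhz: float) -> float:
    """Percentage clock increase going from old_mhz to new_mhz."""
    return (new_mhz / old_mhz - 1.0) * 100.0


PEAK_RAISED_TARGETS = 1815  # peak clock with power/temp targets raised, per the quote
baselines = {
    "rated boost": 1455,
    "sustained stock (high end)": 1600,
    "sustained stock (low end)": 1550,
}
for label, mhz in baselines.items():
    print(f"vs {label} ({mhz} MHz): +{uplift_pct(PEAK_RAISED_TARGETS, mhz):.1f}%")
```

Against the rated boost the jump looks like about 25%, but against the sustained stock clocks it is roughly 13-17%, which is why a power-limited card can show gains that look out of proportion to its nominal overclock.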

The lack of any real information, or even crappy Chinese leaks, should be a clue that it won't be soon. July or August would be the most likely launch months if things start to leak around May. Also, Nvidia has made it clear they are in no rush.