The perfect 4K 43” monitor! Soon! Asus XG438Q ROG

- Thread starter Omegaferrari

- Start date

Martha Stewart

Gawd

- Joined

- Apr 14, 2011

- Messages

- 668

$1999 is insulting, as this is nothing more than a TV, and no manufacturer would ever get away with charging $2k for a 43" TV. But with the cheesy gaming branding and it being first to market, I expect $2k+ easy (maybe even $2499.99).

bananadude

Limp Gawd

- Joined

- Dec 29, 2006

- Messages

- 370

$1999 is insulting, as this is nothing more than a TV, and no manufacturer would ever get away with charging $2k for a 43" TV. But with the cheesy gaming branding and it being first to market, I expect $2k+ easy (maybe even $2499.99).

Unfortunately this is the case for most monitors... From a technical point of view, I can't think of a single high-end model that's actually worth the price when you think about what TVs cost. That said, TVs are produced in FAR FAR greater quantities, so that does drive the price down somewhat. It is a different market. Still, I know exactly what you mean, and if this 43" monitor is anywhere near the $2K mark, it's ridiculous.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,860

$1999 is insulting, as this is nothing more than a TV, and no manufacturer would ever get away with charging $2k for a 43" TV. But with the cheesy gaming branding and it being first to market, I expect $2k+ easy (maybe even $2499.99).

Unfortunately this is the case for most monitors... From a technical point of view, I can't think of a single high-end model that's actually worth the price when you think about what TVs cost. That said, TVs are produced in FAR FAR greater quantities, so that does drive the price down somewhat. It is a different market. Still, I know exactly what you mean, and if this 43" monitor is anywhere near the $2K mark, it's ridiculous.

Yeah, you hit the nail on the head, it's all about volumes.

Even a small change (better VRR implementation, higher refresh support (even when using the same panel, the underlying circuitry and firmware need to be altered), PC sleep and wake support, DisplayPort input, etc.) costs engineering and test man-hours to implement. Engineering and test hours are VERY expensive.

So, with a monitor like this, you have way fewer sales to spread that cost over, so it will have a significant impact on the price.

Besides, I disagree a bit that this is just a TV. Find me a TV that has a DisplayPort input so I can use 4K 120Hz VRR at 4:4:4 chroma.

Even though it uses the same panel as TVs, these feature sets are crucial, and adding them costs real money.

Now, there is also bullshit involved. Branding and "gaming" industrial design and disco lighting add to the perception of the lowest common denominator that this is "better" and thus they can charge more for it.

Branding is a cancer on society.

I was asking, because I didn't want to speculate.

My last few Monitors have been around $1500. I would expect this 43" to be about $1999. Because that is what I would pay today. But give me 2 months and real HDMI2.1 monitors start hitting the market... ones without speakers and such. And I THINK we will see this around $1500 by mid 2020.

But without even knowing MSRP, they could probably want $3200 for it... lol

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,860

I was asking, because I didn't want to speculate.

My last few Monitors have been around $1500. I would expect this 43" to be about $1999. Because that is what I would pay today. But give me 2 months and real HDMI2.1 monitors start hitting the market... ones without speakers and such. And I THINK we will see this around $1500 by mid 2020.

But without even knowing MSRP, they could probably want $3200 for it... lol

What about HDMI 2.1 monitors appeals to you above what DisplayPort can provide?

I've always thought of HDMI as a TV format, and a hack at best on the desktop, best to be avoided if possible.

What about HDMI 2.1 monitors appeals to you above what DisplayPort can provide?

I've always thought of HDMI as a TV format, and a hack at best on the desktop, best to be avoided if possible.

Bandwidth.

4K @ 144Hz 4:4:4 with full HDR.
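For anyone who wants to sanity-check the bandwidth point, here is a rough sketch in Python. It assumes 10 bits per channel for HDR, counts active pixels only (no blanking intervals), and ignores compression such as DSC, so real requirements will differ somewhat. The link figures are the commonly quoted raw rates reduced by their line-coding overhead; the function names are made up for illustration.

```python
# Rough uncompressed video bandwidth estimate (active pixels only, no blanking).

def video_gbps(width, height, refresh_hz, bits_per_channel, channels=3):
    """Return the uncompressed data rate in Gbit/s at full 4:4:4 chroma."""
    bits_per_pixel = bits_per_channel * channels
    return width * height * refresh_hz * bits_per_pixel / 1e9

# Approximate usable payload rates in Gbit/s: raw link rate times coding efficiency.
LINKS = {
    "HDMI 2.0": 18.0 * 8 / 10,                # 8b/10b coding
    "DisplayPort 1.4 (HBR3)": 32.4 * 8 / 10,  # 8b/10b coding
    "HDMI 2.1 (FRL)": 48.0 * 16 / 18,         # 16b/18b coding
}

def links_that_fit(required_gbps):
    """List the links whose approximate payload rate covers the requirement."""
    return [name for name, cap in LINKS.items() if cap >= required_gbps]

need = video_gbps(3840, 2160, 144, 10)
print(f"4K 144Hz 10-bit 4:4:4 needs about {need:.2f} Gbit/s")
print("Links that fit without compression:", links_that_fit(need))
```

Under these assumptions only the HDMI 2.1 entry clears the requirement uncompressed, which is consistent with the "bandwidth" answer above.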

Mad Maxx

Supreme [H]ardness

- Joined

- Apr 12, 2016

- Messages

- 7,322

Any price over $1200 is a deal breaker for me. I'm just not that much of a gamer to justify spending more. I'll get the best 43" 4K TV, probably the $650 Samsung Q60, and call it a day.

Any price over $1200 is a deal breaker for me. I'm just not that much of a gamer to justify spending more. I'll get the best 43" 4K TV, probably the $650 Samsung Q60, and call it a day.

I am getting old and have the money. I justify it in one way... no matter how expensive your computer and GPU is, you spend your WHOLE TIME looking at your monitor/desktop.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,860

I am getting old and have the money. I justify it in one way... no matter how expensive your computer and GPU is, you spend your WHOLE TIME looking at your monitor/desktop.

Yeah, accessories (mice, keyboards, monitors, etc.) tend to last much longer too. We may buy a new GPU every 1-2 years, but our monitors are usually with us 5+ years.

Mad Maxx

Supreme [H]ardness

- Joined

- Apr 12, 2016

- Messages

- 7,322

I am getting old and have the money. I justify it in one way... no matter how expensive your computer and GPU is, you spend your WHOLE TIME looking at your monitor/desktop.

All true, and if I were a serious gamer I'd be all in on this. A $1300+ monitor for everyday work and occasional gaming? That ain't for me.

Lateralus

More [H]uman than Human

- Joined

- Aug 7, 2004

- Messages

- 18,501

I wish LG would hurry up with their 48" OLED so that this decision would be easier.

I'm pretty much all in for one of these 43" gaming monitors up to a certain price point, since it's an ideal size and the 43" TVs have all ended up being gimped in terms of features (gaming and otherwise). But if it ends up being $2000 when I paid $1400 for my 55" OLED...ehhhh not too sure about that. I'll probably wait for more options to come to market and see what happens to pricing. I've done without FreeSync and VRR for this long, so I'm not in a rush.

TheGameguru

[H]ard|Gawd

- Joined

- May 31, 2002

- Messages

- 1,837

It's really crazy to see these awful picture quality/black level $2000+ monitors hitting the market when OLEDs are almost perfect minus VRR/FreeSync at high refresh rates. Hopefully LG will adopt HDMI 2.1 and push 144FPS at 4K with HDR... but since AMD isn't developing GPUs fast enough to do that, it's all sorta moot, since we know Nvidia will push G-Sync as long as humanly possible.

Oh well I'm still happy with my 2016 LG 55" Curved OLED as my primary PC Gaming and Work Monitor.

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

It's really crazy to see these awful picture quality/black level $2000+ monitors hitting the market when OLEDs are almost perfect minus VRR/FreeSync at high refresh rates.

Still not sold on burn-in nor max brightness, nor color accuracy with these for the 'ideal' desktop monitor, but they're certainly closer than anything else.

Hopefully LG will adopt HDMI 2.1 and push 144FPS at 4K with HDR...

Hopefully everyone will adopt HDMI 2.1 at their next regularly scheduled architecture update. Intel, I'm looking at you too.

but since AMD isn't developing GPUs fast enough to do that, it's all sorta moot, since we know Nvidia will push G-Sync as long as humanly possible.

Really just need Nvidia GPUs that support HDMI VRR. That's likely to come with Ampere. Doesn't make a whole lot of sense to exclude that- and it's not like they wouldn't have the tech as they'll need it for their Shield line too.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,860

Really just need Nvidia GPUs that support HDMI VRR. That's likely to come with Ampere. Doesn't make a whole lot of sense to exclude that- and it's not like they wouldn't have the tech as they'll need it for their Shield line too.

I thought they did with the recent driver update this year? Or was that just FreeSync?

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

I thought they did with the recent driver update this year? Or was that just FreeSync?

Just over DisplayPort. So you can enable FreeSync over DisplayPort, but not HDMI. It's the one single function that makes AMD GPUs desirable outside of The Faithful today.

TheGameguru

[H]ard|Gawd

- Joined

- May 31, 2002

- Messages

- 1,837

I left the house for 4 days and came back and my OLED was stuck on a Trend Micro screen. I must have forgotten to turn it off, and somehow the PC kept the monitor awake. I thought I would have burn-in for sure, but after running the Pixel Refresh there was zero burn-in. Sure, there are ways to get burn-in to happen, but I feel like it's all super easy to avoid with even a modicum of thought, and even then it seems when you do make a mistake it's not the end of the world.

Max brightness is probably important for some situations, but I find the overdone bloom of local-dimming, super-bright LCDs worse in HDR situations. I'd rather take less bright and better blacks without blooming any day.

Max brightness is slowly getting better but I think it will always be a limit with OLED tech in its current form.. even then they pretty much use unrealistic scenarios to measure peak brightness in HDR

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

Max brightness is probably important for some situations, but I find the overdone bloom of local-dimming, super-bright LCDs worse in HDR situations. I'd rather take less bright and better blacks without blooming any day.

Don't really disagree with that. Not a fan of local-dimming LCDs.

Max brightness is slowly getting better but I think it will always be a limit with OLED tech in its current form.. even then they pretty much use unrealistic scenarios to measure peak brightness in HDR

The biggest issue is the handling of HDR and traditional content. Photography is a big example for me, and one challenge with current OLED tech is that the color 'response' (input vs. displayed) changes with brightness. That effectively means that from a photography standpoint, HDR ranges from a minor liability to being unusable on OLEDs due to inaccuracies. You'd have to leave the panel in SDR to be able to rely on the colors.

Lateralus

More [H]uman than Human

- Joined

- Aug 7, 2004

- Messages

- 18,501

I left the house for 4 days and came back and my OLED was stuck on a Trend Micro screen. I must have forgotten to turn it off, and somehow the PC kept the monitor awake. I thought I would have burn-in for sure, but after running the Pixel Refresh there was zero burn-in. Sure, there are ways to get burn-in to happen, but I feel like it's all super easy to avoid with even a modicum of thought, and even then it seems when you do make a mistake it's not the end of the world.

Yeah, this has been covered in numerous threads here. A lot of the traditional LCD folks have bought in to the OLED burn-in fears and don't realize how robust these things are. I've spoken at length about this in other threads and most of the time, several other OLED owners chime in and agree that "Yeah, the concerns are way overblown." I'm creeping up on 2 years of using mine as a PC monitor and it's still as perfect as it was on Day 1. Like you said, as long as you exercise a little caution they're fine. Rtings noted in their burn-in tests that only the panels left with static content running 24/7 had any issues. Not exactly a realistic scenario.

Commander Shepard did experience burn-in on his OLED unfortunately, but IIRC he basically left it running the news every day for hours at a time on a high brightness level so it morphed from an LG set into a FOX News TV, hehehe. I believe that he ended up replacing it with one of the newer Samsung QLED sets, which are awesome in their own right.

I'm with you, though. I'm totally spoiled by the black levels and I'm afraid that these 43" gaming monitors are going to be a regression in terms of image quality. I realize that some people are willing to deal with that in order to get VRR/FreeSync/120Hz+, but LG's new line of OLEDs was supposed to solve most of that giving us the best of both worlds so here I wait...

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,860

Just over DisplayPort. So you can enable FreeSync over DisplayPort, but not HDMI. It's the one single function that makes AMD GPUs desirable outside of The Faithful today.

I haven't looked into it a lot, but when the 5700 and 5700 XT launched I remember thinking they had a solid mid range punch for a relatively modest price tag.

I'm not in the mid-range market, but I have totally been thinking about the 5700 XT as a GPU upgrade for my stepson's system.

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

I haven't looked into it a lot, but when the 5700 and 5700 XT launched I remember thinking they had a solid mid range punch for a relatively modest price tag.

I'm not in the mid-range market, but I have totally been thinking about the 5700 XT as a GPU upgrade for my stepson's system.

I'm looking forward to seeing them evaluated across the board once versions with third-party cooling are available; as it stands, I'd pay more for RTX and a decent cooler today, and really can't recommend any different barring hard budgetary considerations.

Mad Maxx

Supreme [H]ardness

- Joined

- Apr 12, 2016

- Messages

- 7,322

Yeah, my OLED's burn-in was entirely a case of user error. Had I toned down the brightness and paid attention, the disaster easily could have been averted. Bottom line: OLED ain't best for extended TV viewing with any kind of scrolling across the bottom of the screen (news, sports, etc.). I picked up a Sony 55" X950G and it's much better suited to my TV habits. I do miss the inky blacks of OLED, though. I'd be all over a 43" OLED for a computer monitor.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,860

It's really crazy to see these awful picture quality/black level $2000+ monitors hitting the market when OLEDs are almost perfect minus VRR/FreeSync at high refresh rates. Hopefully LG will adopt HDMI 2.1 and push 144FPS at 4K with HDR... but since AMD isn't developing GPUs fast enough to do that, it's all sorta moot, since we know Nvidia will push G-Sync as long as humanly possible.

Oh well I'm still happy with my 2016 LG 55" Curved OLED as my primary PC Gaming and Work Monitor.

I have to admit, having DisplayPort, VRR, and max refresh rates above 60Hz is probably a bigger deal to me than deep blacks or HDR, but if I can have both, I'd of course rather have that.

Another reason I really like these screens is that 43" is THE PERFECT size for 4K from a pixel density perspective. 40" is a little small, and 48" (what I have now) is a little big.

Is there any information about these upcoming LG OLED models, or is it just speculation at this point?

I mean, if I can have OLED blacks and 144Hz VRR over DisplayPort at 43", I'd happily wait a few months, but I'm not willing to just bide my time on a guess that "it might be coming".
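To put numbers on the pixel density comparison, here is a minimal sketch that computes pixels per inch from the resolution and the diagonal. It assumes a flat 16:9 panel at 3840x2160 and treats the advertised diagonal as exact; the function name is just for illustration.

```python
import math

def pixels_per_inch(h_pixels, v_pixels, diagonal_inches):
    """Pixel density: diagonal length in pixels divided by diagonal in inches."""
    return math.hypot(h_pixels, v_pixels) / diagonal_inches

# Compare the three 4K sizes mentioned in the post.
for size in (40, 43, 48):
    print(f'{size}" 4K: {pixels_per_inch(3840, 2160, size):.1f} PPI')
```

That works out to roughly 110, 102, and 92 PPI for 40", 43", and 48" respectively. Which of those feels "right" still depends on viewing distance and eyesight, so this only quantifies the comparison rather than settling it.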

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

I mean, if I can have OLED blacks and 144Hz VRR over DisplayPort at 43", I'd happily wait a few months, but I'm not willing to just bide my time on a guess that "it might be coming".

I spent... ~US$1200 on my HP ZR30w back in 2010?

I've spent at most half that since. I could spend more, but I find that higher prices just bring different compromises. If I could be assured that burn-in wouldn't be a problem, I'd take your above display and not look back- and that's if such a thing ever exists.

Personally, with my acuity and viewing position, I could get away with maybe 35" 4k; I have 31.5" 4k up there now and that's for sure too high DPI.

Remember, these displays have Freesync @ 4k/144Hz. No TV offers that now, and when HDMI2.1 starts to really hit the TVs will cost the same anyways.

It's not like anyone can push 144FPS @ 4k with any GPU on the market with the latest games anyways. I have a 2080Ti and I'm happy to get even close to 60FPS in AC Odyssey with the settings turned down. lol

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,860

It's not like anyone can push 144FPS @ 4k with any GPU on the market with the latest games anyways. I have a 2080Ti and I'm happy to get even close to 60FPS in AC Odyssey with the settings turned down. lol

Yeah, but remember, people keep monitors for many years.

The monitor I buy today I expect to still be using in 2025. Heck, if it's good enough and new features I like don't pop up, maybe even 2030.

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

It's not like anyone can push 144FPS @ 4k with any GPU on the market with the latest games anyways.

Also remember: not all games require all the horsepower, nor do gamers only play the latest games. It's quite nice to go back and play games that were challenging to run at release with high resolution and high framerates.

cybereality

[H]F Junkie

- Joined

- Mar 22, 2008

- Messages

- 8,789

Right on. Lots of great games from years past that can be maxed out on hardware that didn't exist at the time.

Just finished playing the entire Half-Life series (Black Mesa, Half-Life 2 Update, Ep 1 and Ep 2, plus Lost Coast) in 5K ultrawide 166Hz. It was great.

Think I might try Bioshock next, but I might start with System Shock, then do that whole Deus Ex franchise. It's lots of fun. Some of those old games are better than anything today.

Mine has flashing lights on it! It's too big! It just won't fit!

It's really crazy to see these awful picture quality/black level $2000+ monitors hitting the market when OLEDs are almost perfect minus VRR/FreeSync at high refresh rates. Hopefully LG will adopt HDMI 2.1 and push 144FPS at 4K with HDR... but since AMD isn't developing GPUs fast enough to do that, it's all sorta moot, since we know Nvidia will push G-Sync as long as humanly possible.

Oh well I'm still happy with my 2016 LG 55" Curved OLED as my primary PC Gaming and Work Monitor.

For a lot of people 50+" screens are way too big to be comfortable to use on the desktop. You will need a deep desk to put it far enough and lots of scaling so you can read it. I even wish the 43" model was under 40" but since I can split the screen between two inputs its real estate is easier to justify for me in purely desktop use. TV manufacturers have shown that they are completely unwilling to make flagship level products at smaller screen sizes.

It's a shame the XG438Q doesn't come with HDMI 2.1 and instead opts for HDMI 2.0 which limits the refresh rate at 4K to 60 Hz. HDMI 2.1 would have enabled it to work well with next gen consoles too as those are likely to support Freesync and high refresh rates. It's not going to be an issue for me as I'm going to be running it via Displayport but it would have been nice.

People don't really change their displays, like they do with GPU. (How many CPUs have some of your monitors survived?)

I made the point that a monitor is your whole system, because it should be the most costly. It should be the thing you most save for, since you don't replace them often, if ever (hand-me-down). People can justify paying more for a monitor because, more often than not, you'll go through another GPU to get max performance out of your 2 year old (but brand new) monitor.

I agree that HDMI2.1 is really late coming to the party and smells like some collusion. But at least AMD offers FreeSync (2.0) for all those sub-4k Gamers.

ed: If this 43" monitor hit all the check marks, I would feel comfortable paying $2k for it. I too am wondering why a 39" OLED @ 120Hz is taking so long. Us gamers are not getting any younger... Kyle, me, and even John Carmack have grey hairs...

We want our unrestricted gameplay.

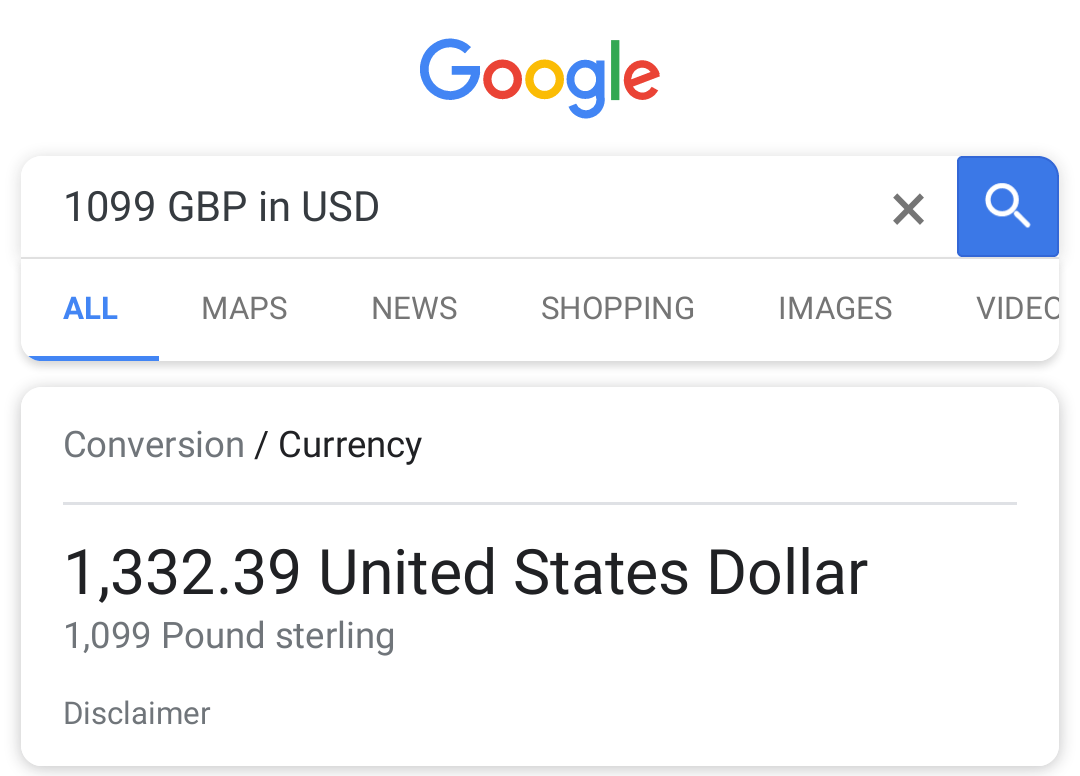

If you go back to the product link in the first message, it now shows an RRP of 1099 pounds, which usually means it will have a similar price in the US. If this info is correct, it's a very reasonable price.

Nice catch. Available from late August according to that. If that turns to about 1200 euros then I'm buying one.

I would not be surprised if they introduce an upgraded 144+ Hz / HDR1000 version next year for 1400-1600 euros.

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

If you go back to the product link in the first message, it now shows an RRP of 1099 pounds, which usually means it will have a similar price in the US. If this info is correct, it's a very reasonable price.

Fffffuuuuuuuuuu....

Time to clear a card!

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,860

If you go back to the product link in the first message, it now shows an RRP of 1099 pounds, which usually means it will have a similar price in the US. If this info is correct, it's a very reasonable price.

If this is accurate, I'll take it.

I could have sworn PC hardware was almost always more expensive in Europe than in the U.S. though.

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

I could have sworn PC hardware was almost always more expensive in Europe than in the U.S. though.

Mostly they just charge the same number of Pounds Sterling as dollars, to account for British Socialism. In Europe that doesn't fly because the Euro dropped versus the dollar, but they still have to pay the Socialists, so the number goes up.

Zarathustra[H]

Extremely [H]

- Joined

- Oct 29, 2000

- Messages

- 38,860

Mostly they just charge the same number of Pounds Sterling as dollars, to account for British Socialism. In Europe that doesn't fly because the Euro dropped versus the dollar, but they still have to pay the Socialists, so the number goes up.

Oh come on. Don't politicize a monitor thread

Astral Abyss

2[H]4U

- Joined

- Jun 15, 2004

- Messages

- 3,065

Oh come on. Don't politicize a monitor thread

British VAT at 20% adds up quick.
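As a quick illustration of the VAT point, here is a small sketch that strips 20% VAT from the 1099 pound RRP mentioned earlier in the thread. The exchange rate argument is a placeholder you would have to supply yourself, since no rate is given in the thread, and US prices are normally quoted before sales tax, so this is only a rough comparison.

```python
def price_excluding_vat(gross_price, vat_rate=0.20):
    """Back out VAT from a tax-inclusive price (UK standard rate is 20%)."""
    return gross_price / (1 + vat_rate)

def to_usd(gbp_amount, usd_per_gbp):
    """Convert pounds to dollars; usd_per_gbp is a caller-supplied assumption."""
    return gbp_amount * usd_per_gbp

net_gbp = price_excluding_vat(1099)
print(f"1099 GBP including VAT is about {net_gbp:.2f} GBP before VAT")
```

Passing `net_gbp` and a current rate to `to_usd` gives a pre-tax dollar figure to compare against whatever the US MSRP turns out to be.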
