Here is the exact NVMe drive I have; I bought it here on Jan 30 of this year:

https://www.amazon.com/gp/product/B07MFZY2F2

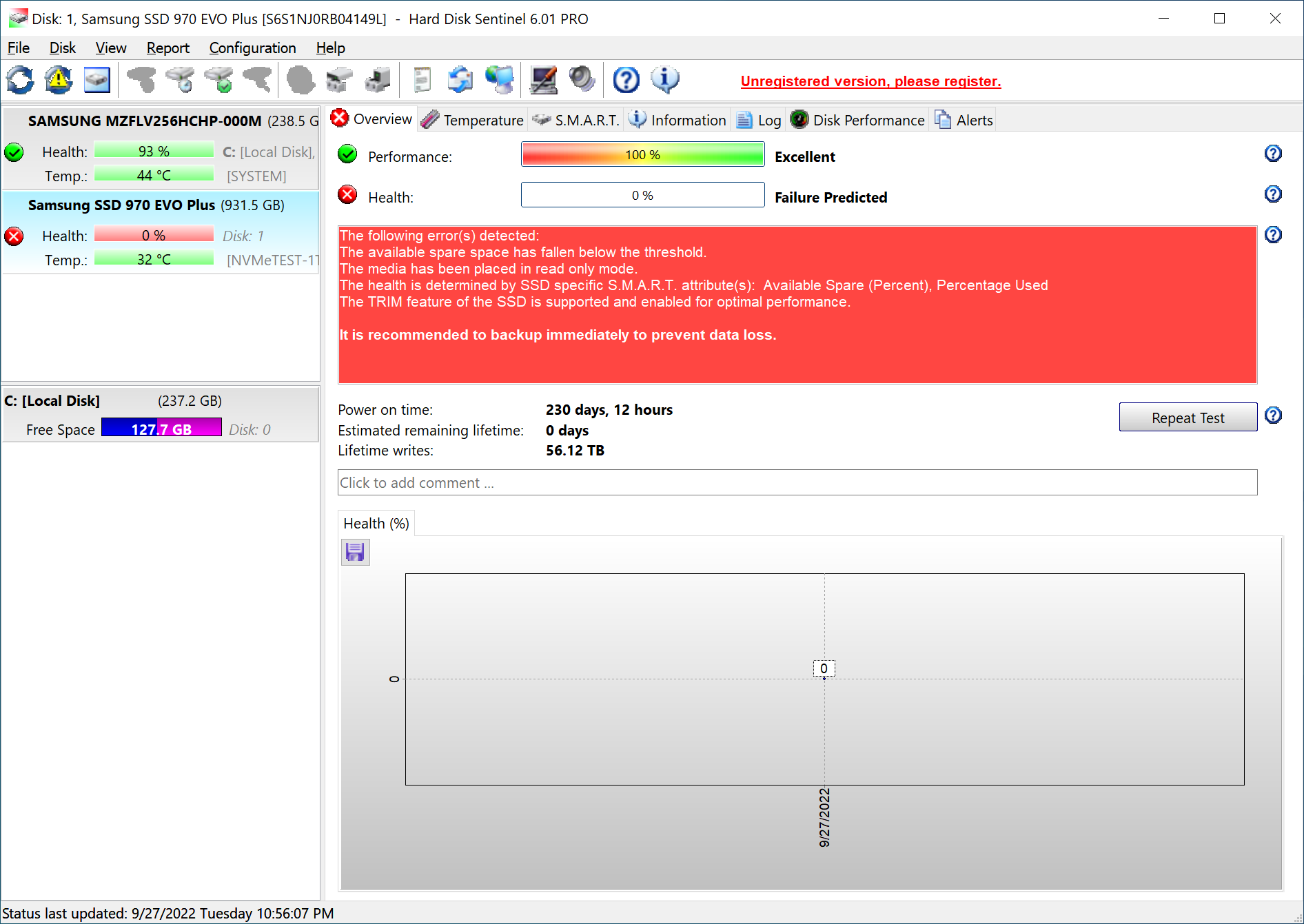

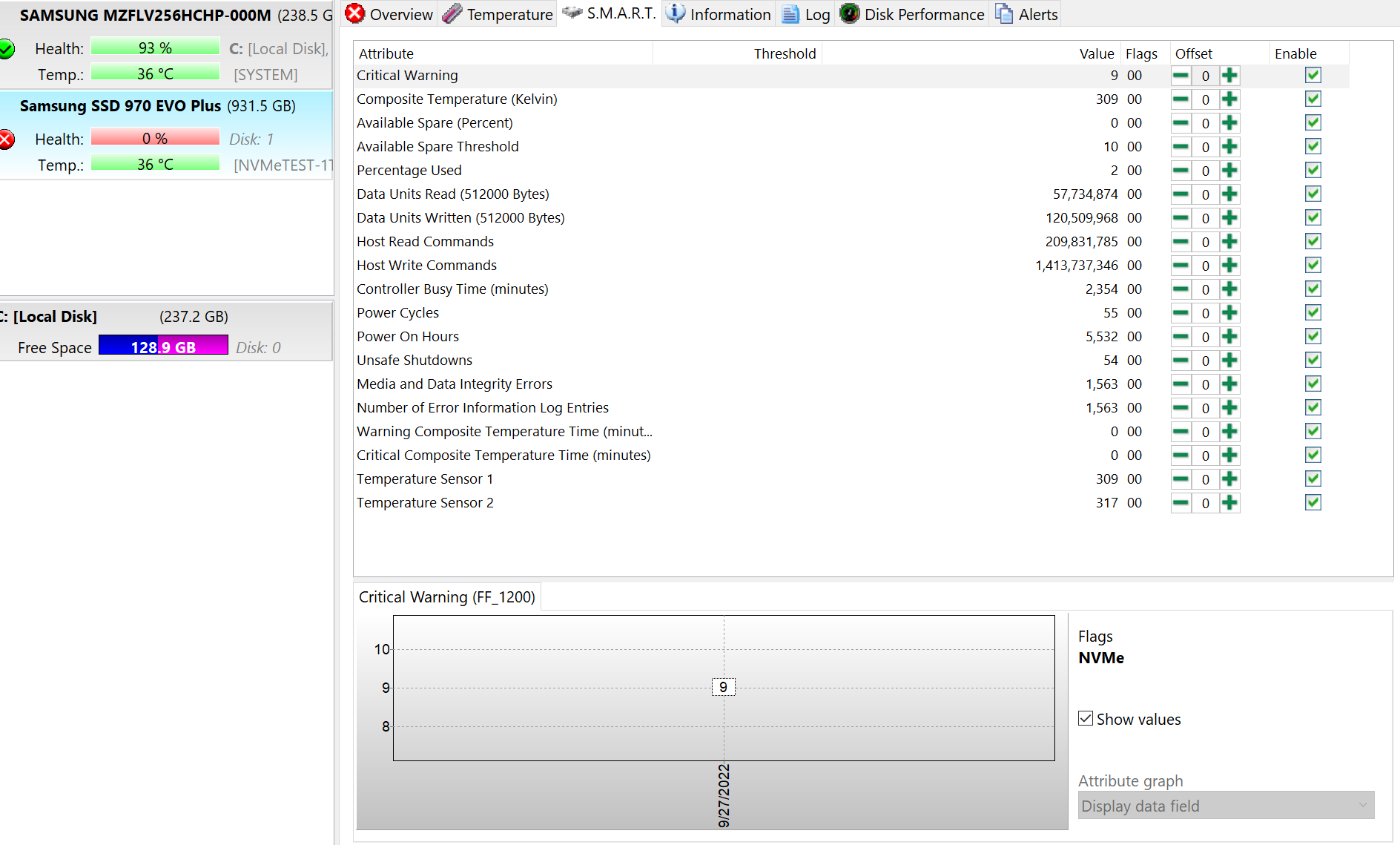

I installed ESXi 6.7u3 on a Dell PowerEdge server (on a separate boot drive) and used this NVMe drive for just a few VMs. The drive was mounted on a PCIe adapter card because the server's motherboard has no native NVMe support. It worked like a champ from February until September (two weeks ago at most), when the datastore vanished, never to be seen again.

I'll keep the story short. It sucked, but I had most of my stuff backed up. I got the important parts of my environment back online on a regular SATA SSD until I could buy a new NVMe drive, and I also bought a new NVMe PCIe card. After a few days on the SSD, I migrated everything to the new NVMe drive. Everything is working great again.

Now I'm left with this Samsung 970 EVO, wondering what the hell went wrong. I tried it in both the original and the new (different brand) NVMe PCIe card, and it just won't work. The new NVMe drive, on the other hand, works in both cards. I gave up on recovering the file system on it (VMFS), so I put the 970 EVO in a USB 3.0 adapter, ran diskpart from Windows 10, and ran a CLEAN on it. It hung at some points but eventually completed, and I was then able to format it as NTFS and test file transfers. I didn't do much testing, but I was able to transfer a ~4-5 GB file to it.

Still... what's wrong with this drive? If I plug it back into the original server, some sort of error shows up, and while the NVMe PCIe controller is seen, the drive is not detected as an available device. I'd say the original NVMe PCIe card is fine, since the new drive works in it and, as I said, the 970 EVO didn't work in the new PCIe card either.

I have Samsung Magician, but it only does secure erase. How the heck can I test this 970 EVO? Running it over a USB 3.0 adapter isn't really proper testing.

So I took an old desktop (one I used as a whitebox ESXi host back in the day) and installed ESXi 6.7u3 on it. I then put in the original NVMe PCIe card and the 970 EVO, and this fresh 6.7u3 install doesn't see the 970 EVO, although it does detect the NVMe PCIe card.

I'm totally fine with RMAing the 970 EVO, but:

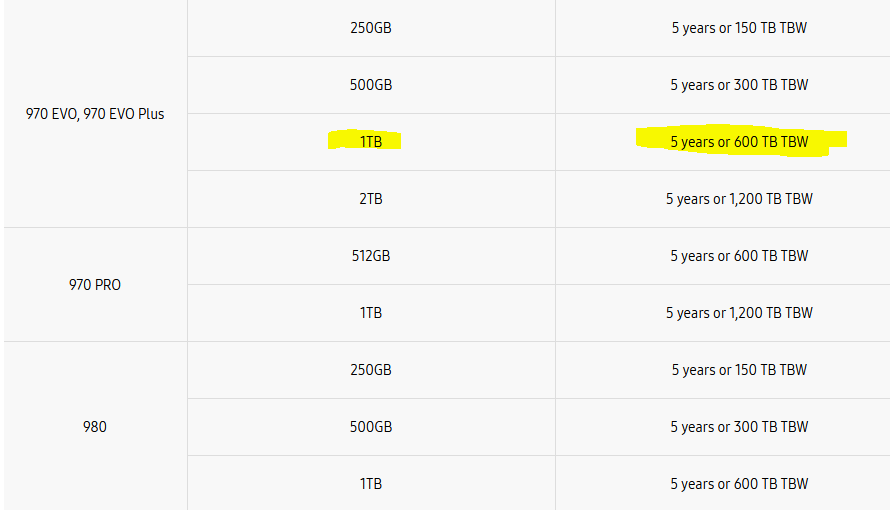

- Never in a million years did I expect an NVMe drive to die within 7 months.

- No, I did not slam away at it. I ran a few VMs, nothing doing crazy work. In fact, I only run OSes and services on it; I don't store videos, pics, or anything like that on it.

- Is there really no bootable tool that can properly test this 970 EVO? Samsung only has the Magician tool, and that doesn't do testing.

I'm used to the old long and short DST tests. I have no idea what to do with an NVMe drive.

Thanks in advance.

As an Amazon Associate, HardForum may earn from qualifying purchases.