Steve at Hardware Unboxed has another good video showing how an old favorite does in newer games.

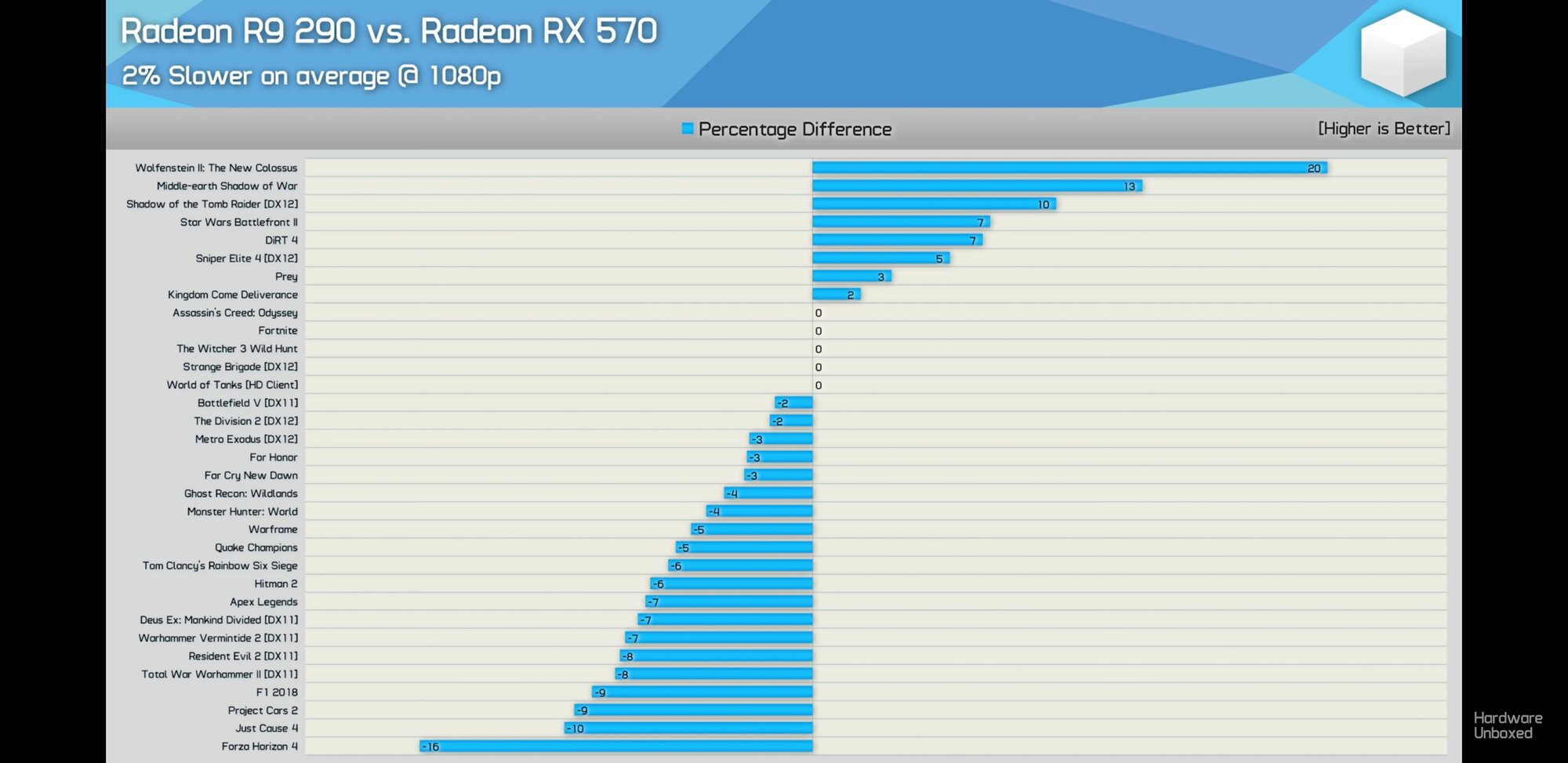

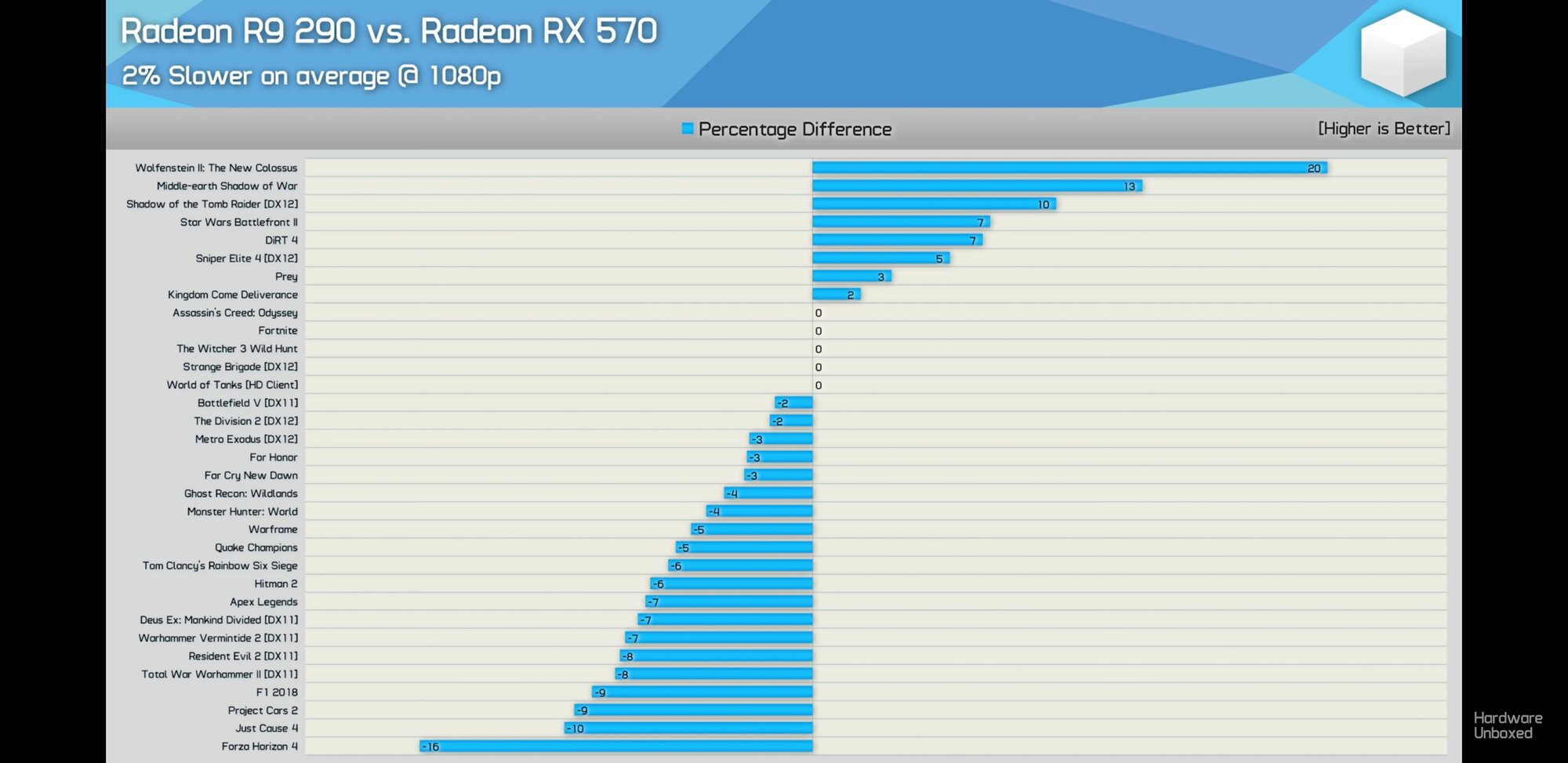

The RX570 manages to best the older card, but you have to wonder how things would look if Hawaii were the newer card and more of the games were optimized for that architecture. Take a look at the results:

Just look at how the DX12 games actually run better on the R9 290. Division 2, Metro Exodus, and FC New Dawn are the exceptions, but those are BRAND NEW games. You have to wonder how well Hawaii would do if it were still being optimized for and had been upgraded with more advanced memory and a newer GPU node.
