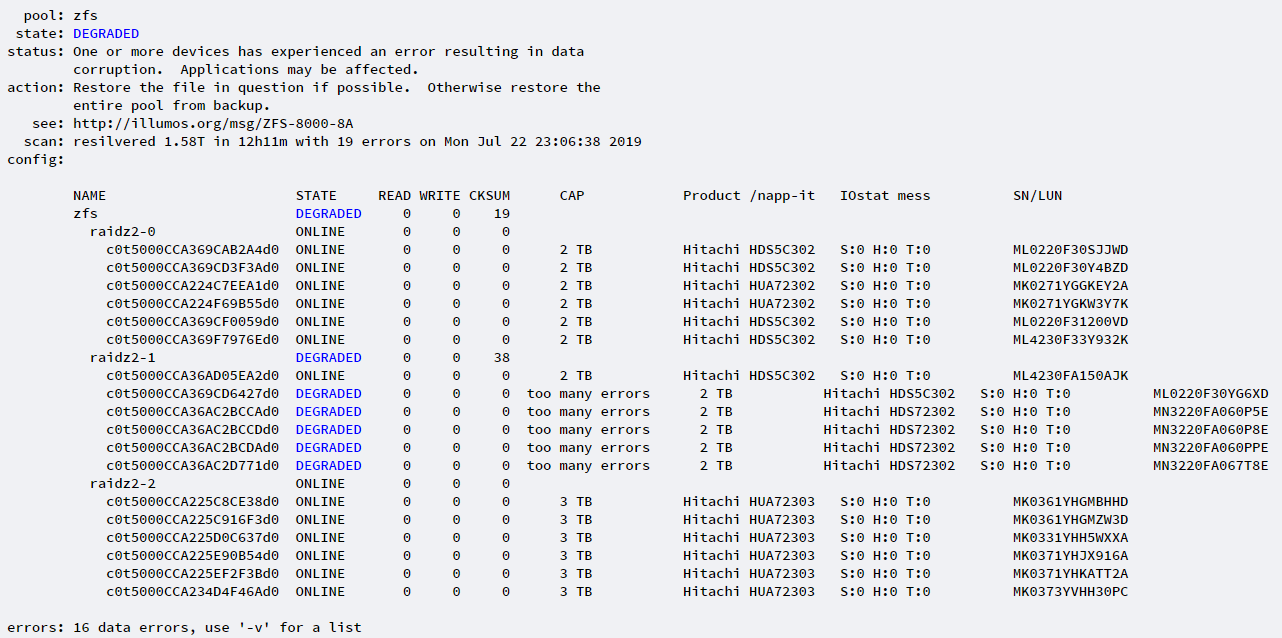

If the problem was triggered by a single incident in the past and the hardware is OK now, a scrub and pool clear would be enough.

If you cannot identify the problem with the help of the system and fault logs, and if there is no common part such as a shared HBA or power cabling for the 6 disks showing problems, I would look at the disk with the hard errors.

Maybe this disk affects the others negatively, e.g. by blocking something. I would offline or even physically remove this disk and then retry a disk replace, or a scrub and clear.
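
As a rough sketch, the sequence could look like the following. The pool name `tank` and the disk name `c1t5d0` are placeholders, not taken from this thread, so substitute your own. The script only prints the commands. I would run them one at a time and wait until `zpool status` shows the scrub has finished before doing the clear.

```shell
#!/bin/sh
# Sketch only: POOL and DISK are placeholder names, not taken from this thread.
POOL="${POOL:-tank}"
DISK="${DISK:-c1t5d0}"

# Print the suggested commands in order; nothing is executed against the pool.
plan() {
    echo "zpool status -v $POOL"       # see which disks report errors
    echo "zpool offline $POOL $DISK"   # take the suspect disk out of service
    echo "zpool scrub $POOL"           # re-verify the data on the remaining disks
    echo "zpool clear $POOL"           # reset error counters after the scrub has finished
}

plan
```

Note that `zpool offline` should refuse if the pool does not have enough redundancy left to lose that disk, in which case physically pulling it is not safe either.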

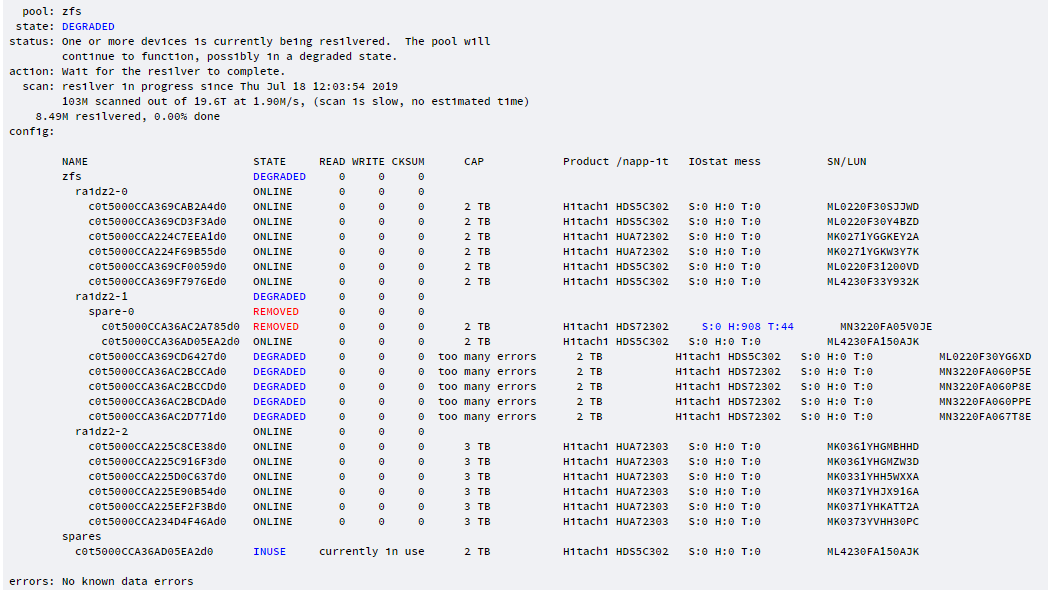

That was my thought. I pulled the drive. Let's see what happens.
