DukenukemX

Supreme [H]ardness

- Joined: Jan 30, 2005
- Messages: 7,939

> Yes and no. A key problem in the States and other service-oriented economies is that it's difficult to find people who are just qualified enough. Trade education is in relatively short supply; you're more likely to find people with engineering degrees, and they're not going to work for typical factory pay.

That's a problem that China would like to have. There's no future in manufacturing as it'll be automated, so having engineers instead of simple laborers is kinda what you want. The only reason we still have people putting stuff together is that we don't want to use engineers to make a design that's automation-friendly. A lot of what goes into designing products is making it easy to put them together.

> I also can't imagine Foxconn et al. having much luck convincing entitled Americans that their future will be bright sitting on an iPhone assembly line.

The problem isn't convincing entitled Americans but convincing entitled businesses to pay more. If Americans would work for 2 cents an hour then you'd build a factory here, but then you run into the problem of who would buy the products. People making 2 cents per hour aren't going to be able to afford food, let alone an iPhone.

> For that matter, let's not forget that any new or updated factories will likely include a lot of robots, wherever they're built; companies may get around labor conditions simply by having fewer people involved.

Now you know why China wants their own version of Silicon Valley. Factory work has no future, so what can China do to employ their citizens? The reason we still have people working in factories is that it's cheaper than paying engineers to design products that don't depend on human hands, and intricate work is just not easy to automate.

> It pisses me off hearing people say 'this is no longer possible here'. No, it's possible, we just need the people with the money to start re-investing that money in our country. No, it won't instantly happen. I get that.

At least you get it. Apple has so much money lying around that it could afford to make its products here in the USA and pay people $20-$30 per hour without an issue. Just like Jeff Bezos can give every employee $100k and still be a billionaire. The problem is nobody likes losing money. Humans put more value in loss than in gain, so of course they say it's not possible.

> And yes, I'll repeat it, I have no problem paying two to three times the cost of whatever product you can name if it were actually made in the USA. It's the same reason I have no problem buying expensive watches, and certain cars. Where something is built matters to me. I don't give a flying fuck about people in China. People in the UK, Ireland, most of western Europe, US & Canada? I care about those people because we generally share the same values.

You may not care about paying 3x more but 99.99% of people certainly do. That doesn't mean that if Apple built its products in the USA or Europe prices would suddenly triple. The price of an item is mostly based on what you're willing to pay and not what the product costs to make. The iPhone 12 costs $373 to make and 22% of its parts come from the USA, which at $800 for an iPhone 12 means Apple is making a lot per sale. If you treat these devices like game consoles, then Apple will continue to make money off them from the sale of digital goods, but unlike game consoles these devices aren't sold at cost. Even printers now sell for cheap and depend on ink sales for revenue. Apple could easily make its products here and still charge you the same, but that would mean less money for them. They may not employ as many people here as they do in China, but right now they aren't employing anyone in the USA to make any of their goods.
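For anyone who wants the per-phone math spelled out, here's a rough sketch using only the two figures quoted above ($373 to make, $800 sticker price). It assumes the $373 is a parts-and-assembly estimate, so it ignores R&D, shipping, marketing, and retail cuts; the function and variable names are just made up for illustration.

```python
# Back-of-envelope margin using only the figures quoted in the post.
# Assumption: $373 covers parts/assembly only, not R&D, shipping, or marketing.
BUILD_COST_USD = 373.0
RETAIL_PRICE_USD = 800.0


def gross_margin(price: float, cost: float) -> tuple[float, float]:
    """Return (dollars left over per unit, that amount as a share of the price)."""
    margin = price - cost
    return margin, margin / price


margin_usd, margin_share = gross_margin(RETAIL_PRICE_USD, BUILD_COST_USD)
print(f"${margin_usd:.0f} per phone, {margin_share:.1%} of the sticker price")
```

So on those numbers there's roughly $427 per phone between build cost and sticker price, which is the room any extra labor cost would have to come out of if the price stayed the same.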

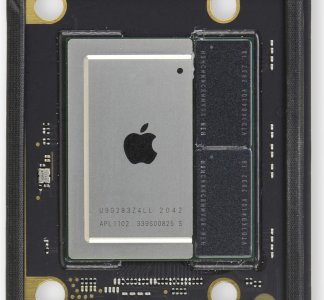

> Honestly, I loved my Mac laptop from 2003 and as a media machine it was fantastic. That said, I always ended up using either Linux or Windows for work and never got into the ecosystem. I was issued an M1 MacBook Pro for work and GD does it blow away the 10750H CPU in my MSI laptop. Granted, that MSI laptop has a 2070 Super in it and I think the 8-core GPU in the M1 is somewhere around a 1050 Ti in performance, but when it comes to getting actual work done that MacBook just runs circles around it. I have legitimately pulled an 18 hour day completely on battery with it and I don't believe that is possible on any other laptop I have laid my hands on. Certainly not one with desktop CPU levels of performance. I look forward to seeing the performance of the 14 and 16 inch releases over the next couple of years.

I still have a PowerBook G4 that I tried putting Ubuntu 16.04 on. It isn't something you can use daily, and you can forget about watching YouTube videos. I had to jump through too many hoops just to get it to boot, let alone get all the features working. I still can't put the laptop to sleep without it crashing and staying on. This is what I mean when I say I hate dealing with architectures that aren't x86: the support for these devices on Linux is just not good. There's work going on to get Linux onto the M1 but I suspect it'll never get good enough to be 100% usable. My Lenovo laptop runs Mint 20 perfectly, and I have zero issues getting it to do anything I want. When the M2 and M3 are released, you can bet that will just make porting Linux onto them more problematic. Who knows if Apple will start to block other OSes from being installed? The community can get very far without the support of the manufacturer, but you won't get everything working without issues unless the manufacturer helps.
