ShuttleLuv

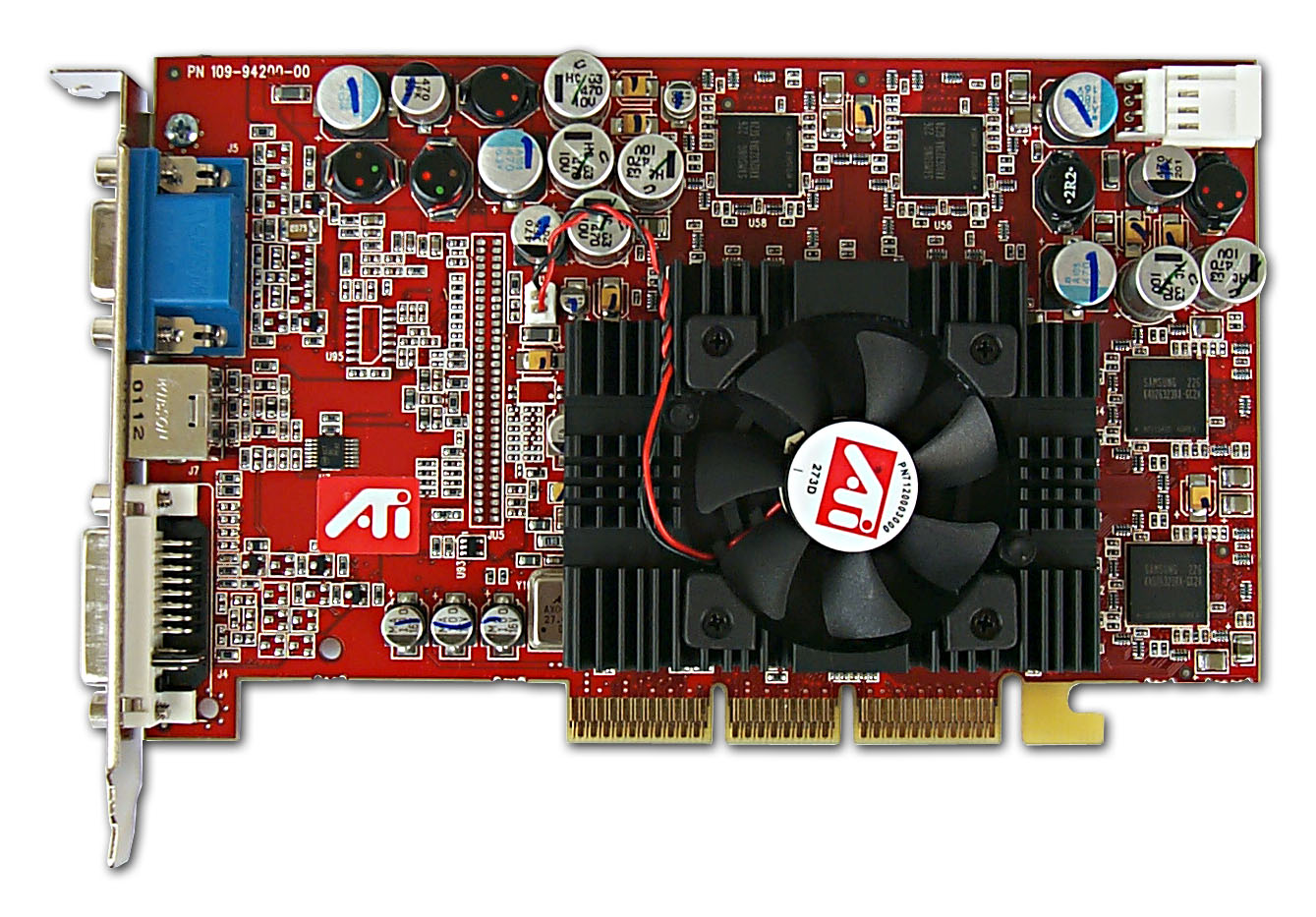

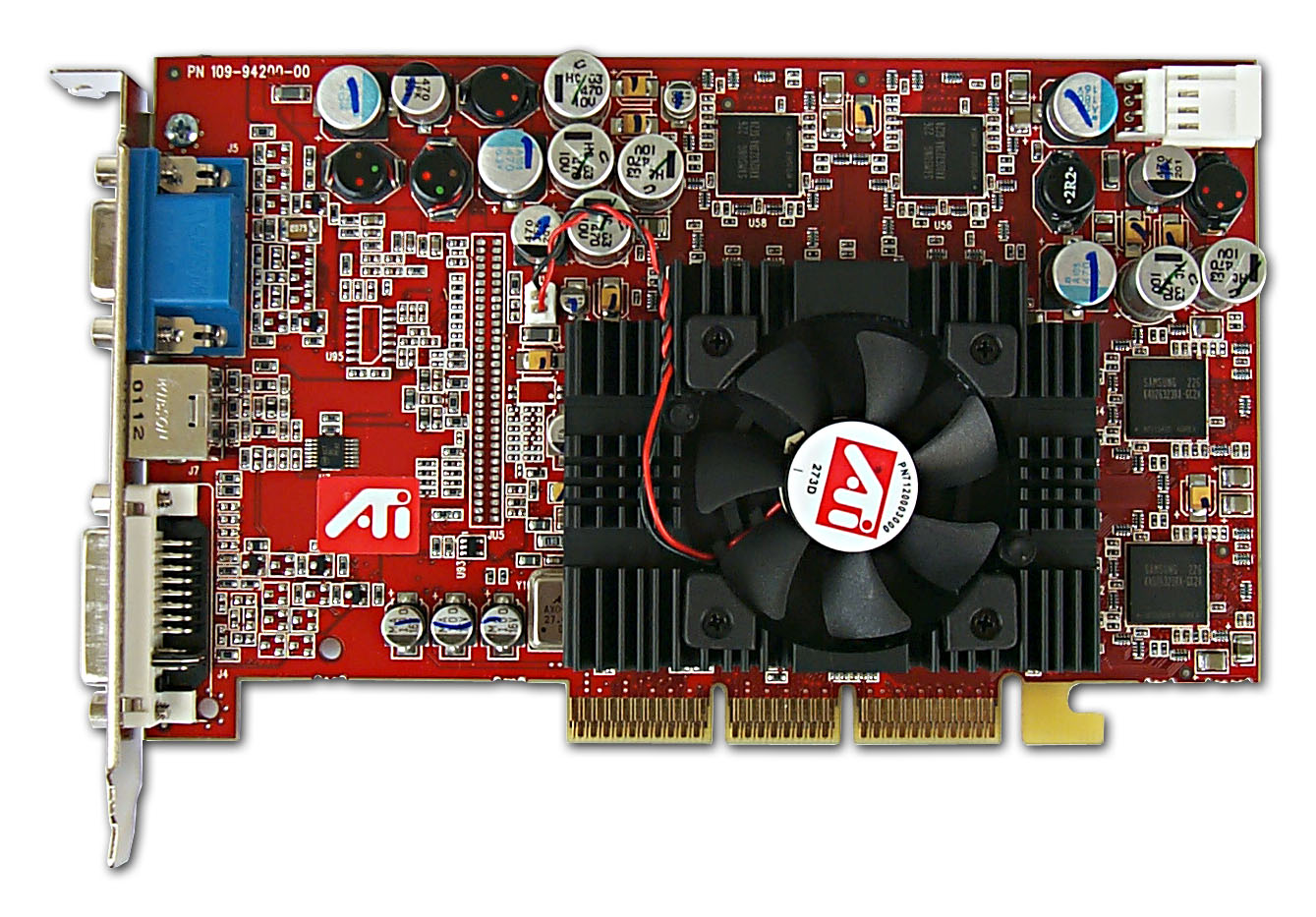

The ATI Radeon 9700 Pro, one of my few favorite cards of all time, was released. Can you believe it's been almost 10 years since it came out???

What it brought to the table, as per Wikipedia:

Architecture

The chip adopted an architecture consisting of 8 pixel pipelines, each with 1 texture mapping unit (an 8x1 design). While this differed from the older chips using 2 (or 3 for the original Radeon) texture units per pipeline, this did not mean R300 could not perform multi-texturing as efficiently as older chips. Its texture units could perform a new loopback operation which allowed them to sample up to 16 textures per geometry pass. The textures can be any combination of one, two, or three dimensions with bilinear, trilinear, or anisotropic filtering. This was part of the new DirectX 9 specification, along with more flexible floating-point-based Shader Model 2.0+ pixel shaders and vertex shaders. Equipped with 4 vertex shader units, R300 possessed over twice the geometry processing capability of the preceding Radeon 8500 and the GeForce4 Ti 4600, in addition to the greater feature-set offered compared to DirectX 8 shaders.
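To make the 8x1 point a bit more concrete, here is a rough back-of-the-envelope sketch. It assumes a simplified model where a pipeline with fewer texture units than textures just loops back for extra cycles, and it uses a 4x2 layout as a stand-in for an older chip. The function names are made up for illustration, and real hardware has plenty of other bottlenecks this ignores.

```python
import math

# From the quoted text: up to 16 textures can be sampled per geometry pass.
MAX_TEXTURES_PER_PASS = 16


def geometry_passes(num_textures: int, max_per_pass: int = MAX_TEXTURES_PER_PASS) -> int:
    """Number of geometry passes needed to apply num_textures textures."""
    return math.ceil(num_textures / max_per_pass)


def pixels_per_clock(pipelines: int, tmus_per_pipeline: int, num_textures: int) -> float:
    """Idealized pixel throughput per clock.

    Simplifying assumption: each pipeline needs
    ceil(num_textures / tmus_per_pipeline) cycles per pixel (loopback).
    """
    cycles_per_pixel = math.ceil(num_textures / tmus_per_pipeline)
    return pipelines / cycles_per_pixel


if __name__ == "__main__":
    for textures in (1, 2, 4):
        r300_like = pixels_per_clock(8, 1, textures)   # 8x1 design
        older_like = pixels_per_clock(4, 2, textures)  # hypothetical 4x2 design
        print(f"{textures} texture(s): 8x1 -> {r300_like} px/clk, 4x2 -> {older_like} px/clk")
    print("passes for 20 textures:", geometry_passes(20))
```

In this toy model the 8x1 layout matches the 4x2 layout whenever two or more textures are applied and doubles it for single-textured pixels, which lines up with the claim that dropping to one texture unit per pipeline did not hurt multi-texturing.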

ATI demonstrated part of what was possible with Pixel Shader 2.0 in its Rendering with Natural Light demo. The demo was a real-time implementation of noted 3D graphics researcher Paul Debevec's paper on the topic of high dynamic range rendering.[1] A noteworthy limitation is that all R300-generation chips were designed for a maximum floating-point precision of 96 bits (FP24 per component) instead of DirectX 9's maximum of 128 bits (FP32 per component). DirectX 9.0 specified FP24 as the minimum level for conforming to the specification for full precision. This trade-off in precision offered the best combination of transistor usage and image quality for the manufacturing process at the time. It did cause a usually imperceptible loss of quality when doing heavy blending. ATI's Radeon chips did not go above FP24 until R520.
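For a feel of what FP24 versus FP32 means numerically, here is a small sketch. It assumes the commonly cited FP24 layout of 1 sign bit, 7 exponent bits, and 16 mantissa bits (that layout is not stated in the text above), and it only models mantissa rounding, not exponent range. The `quantize` helper is made up for illustration.

```python
import math

# 4 colour components (RGBA) per pixel gives the "96-bit" and "128-bit" figures.
COMPONENTS = 4
FP24_TOTAL_BITS = COMPONENTS * 24   # 96
FP32_TOTAL_BITS = COMPONENTS * 32   # 128

# Assumption: FP24 stores 16 explicit mantissa bits; FP32 (IEEE 754) stores 23.
FP24_MANTISSA_BITS = 16
FP32_MANTISSA_BITS = 23


def quantize(value: float, mantissa_bits: int) -> float:
    """Round value to the nearest number with the given explicit mantissa bits.

    Only mantissa precision is modelled; exponent range is ignored.
    """
    if value == 0.0:
        return 0.0
    mantissa, exponent = math.frexp(value)   # value == mantissa * 2**exponent
    scale = 2 ** (mantissa_bits + 1)         # +1 for the implicit leading bit
    return math.ldexp(round(mantissa * scale) / scale, exponent)


def relative_error(value: float, mantissa_bits: int) -> float:
    """Relative rounding error of value at the given mantissa width."""
    return abs(quantize(value, mantissa_bits) - value) / abs(value)


if __name__ == "__main__":
    x = 1.0 / 3.0
    print("FP24-like relative error:", relative_error(x, FP24_MANTISSA_BITS))
    print("FP32-like relative error:", relative_error(x, FP32_MANTISSA_BITS))
```

Under that assumed layout, each FP24 operation can be off by up to about 2^-17 relative to the true value, against about 2^-24 for FP32. That is still far finer than an 8-bit display can show, so the error only becomes a concern after long chains of dependent blending operations.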

ATI's Rendering with Natural Light promo demo

The R300 was the first board to truly take advantage of a 256-bit memory bus. Matrox had released their Parhelia 512 several months earlier, but this board did not show great gains with its 256-bit bus. ATI, however, had not only doubled their bus to 256-bit, but also integrated an advanced crossbar memory controller, somewhat similar to NVIDIA's memory technology. Utilizing four individual load-balanced 64-bit memory controllers, ATI's memory implementation was quite capable of achieving high bandwidth efficiency by maintaining adequate granularity of memory transactions and thus working around memory latency limitations. "R300" was also given the latest refinement of ATI's innovative HyperZ memory bandwidth and fillrate saving technology, HyperZ III. The demands of the 8x1 architecture required more bandwidth than the 128-bit bus designs of the previous generation due to having double the texture and pixel fillrate.
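The bus numbers are easy to sanity-check with a quick calculation. This sketch assumes the 9700 Pro's commonly quoted 310 MHz DDR memory clock (620 million transfers per second), which is not given in the text above, and it computes theoretical peak bandwidth only.

```python
def bus_width_bits(channels: int, bits_per_channel: int) -> int:
    """Total bus width when independent memory channels work side by side."""
    return channels * bits_per_channel


def peak_bandwidth_bytes_per_s(bus_bits: int, transfers_per_s: int) -> int:
    """Theoretical peak bandwidth: bytes moved per transfer times transfer rate."""
    return (bus_bits // 8) * transfers_per_s


# From the quoted text: four load-balanced 64-bit controllers.
R300_BUS_BITS = bus_width_bits(channels=4, bits_per_channel=64)

# Assumption (not in the text): 310 MHz DDR memory = 620 million transfers/s.
ASSUMED_TRANSFERS_PER_S = 620_000_000

if __name__ == "__main__":
    wide = peak_bandwidth_bytes_per_s(R300_BUS_BITS, ASSUMED_TRANSFERS_PER_S)
    narrow = peak_bandwidth_bytes_per_s(128, ASSUMED_TRANSFERS_PER_S)
    print(f"{R300_BUS_BITS}-bit bus: {wide / 1e9:.2f} GB/s peak")
    print(f"128-bit bus at the same data rate: {narrow / 1e9:.2f} GB/s peak")
```

Splitting the bus into four 64-bit channels does not change the peak figure. The benefit described above is that small memory requests can be serviced independently instead of each one tying up the whole 256-bit bus.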

Radeon 9700 introduced ATI's multi-sample gamma-corrected anti-aliasing scheme. The chip offered sparse-sampling in modes including 2×, 4×, and 6×. Multi-sampling offered vastly superior performance over the supersampling method on older Radeons, and superior image quality compared to NVIDIA's offerings at the time. Anti-aliasing was, for the first time, a fully usable option even in the newest and most demanding titles of the day. The R300 also offered advanced anisotropic filtering which incurred a much smaller performance hit than the anisotropic solution of the GeForce4 and other competitors' cards, while offering significantly improved quality over Radeon 8500's anisotropic filtering implementation which was highly angle dependent.
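The "gamma-corrected" part is worth a quick illustration. This sketch assumes a plain power-law display curve with gamma 2.2, and the actual curve the hardware used is not stated above. It compares averaging the stored (gamma-encoded) sample values directly against averaging them in linear light and re-encoding. Both function names are made up for illustration.

```python
# Assumption: a simple power-law display curve, not the hardware's exact curve.
ASSUMED_GAMMA = 2.2


def naive_resolve(samples: list[float]) -> float:
    """Average gamma-encoded sample values directly (no gamma correction)."""
    return sum(samples) / len(samples)


def gamma_correct_resolve(samples: list[float], gamma: float = ASSUMED_GAMMA) -> float:
    """Decode samples to linear light, average, then re-encode for display."""
    linear = [s ** gamma for s in samples]
    mean_linear = sum(linear) / len(linear)
    return mean_linear ** (1.0 / gamma)


if __name__ == "__main__":
    # A 2x AA edge pixel: one sample hits a white polygon, one hits black.
    edge = [0.0, 1.0]
    print("naive resolve:          ", naive_resolve(edge))
    print("gamma-corrected resolve:", gamma_correct_resolve(edge))
```

The naive average stores 0.5, which a gamma 2.2 display shows at well under half brightness, so edge pixels come out too dark. The gamma-corrected version stores a higher value that displays as a true half-coverage blend, which is a big part of why the edges looked smoother.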

On March 14, 2008, AMD released the 3D Register Reference for R3xx.[2]

Performance

Radeon 9700's advanced architecture was very efficient and, of course, more powerful than its older peers of 2002. Under normal conditions it beat the GeForce4 Ti 4600, the previous top-end card, by 15–20%. However, when anti-aliasing (AA) and/or anisotropic filtering (AF) were enabled, it would beat the Ti 4600 by anywhere from 40–100%. At the time, this was quite astonishing, and resulted in the widespread acceptance of AA and AF as critical, truly usable features.[3]

Besides advanced architecture, reviewers also took note of ATI's change in strategy. The 9700 would be the second of ATI's chips (after the 8500) to be shipped to third-party manufacturers instead of ATI producing all of its graphics cards, though ATI would still produce cards off of its highest-end chips. This freed up engineering resources that were channeled towards driver improvements, and the 9700 performed phenomenally well at launch because of this. id Software technical director John Carmack had the Radeon 9700 run the E3 Doom 3 demonstration.[4]

The performance and quality increases offered by the R300 GPU are considered to be among the greatest in the history of 3D graphics, alongside the achievements of the GeForce 256 and Voodoo Graphics. Furthermore, NVIDIA's response in the form of the GeForce FX 5800 was both late to market and somewhat unimpressive, especially when pixel shading was used. R300 would become one of the GPUs with the longest useful lifetime in history, allowing playable performance in new games at least 3 years after its launch.[5]
