wetware interface

n00b

- Joined

- Apr 4, 2005

- Messages

- 31

mikeblas said: NUMA can provide independent pipelines between the processors and memory. The only reason I had focused on random accesses is to avoid having the cache prefetch lines of memory. If the cache is prefetching the data effectively (as it would with linear access to the memory), there's still a measurable performance penalty for accessing memory cross-node, though it isn't nearly as pronounced.

no. simply put, numa is non-uniform memory access. in other words, a non-shared memory bus. how the opterons do it is different from how power 4/5 does it and how sparc did it. the fact that the opterons give you a side band of hypertransport links and some extra data handling capability in the cpu itself to hand off data from a different cpu's memory cache without having the instruction go through that cpu's pipeline is specific to opteron. yes, in your particular example the opterons will pay a higher penalty than xeon. move to a different memory addressing pattern and it will go either way depending on whether the data is treated as independent or shared.

mikeblas said: Your summary that this issue is "because you are doing RANDOM memory accesses" isn't accurate. It's because the program ends up running code on one processor that accesses memory physically associated with the other processor.

no again. you did a random memory address lookup. on a shared bus this is all in one memory controller's space. in numa it is in one or the other memory controller's space and may or may not need to be fetched with a slight latency penalty. the point of numa is to give a cpu its own memory space. when you decide to share, you go and fetch out of another memory controller's area, or have a cpu write the data over into another's area. this provides 2 things: data integrity, and a speed up from not having a cpu wait on a memory bus being used by another cpu. the intel platforms have a very fast memory controller with low latency, plus prefetching algos in the cpu itself to minimize cache misses and keep the pipeline full. what your test showed was that the opteron memory controller is a bit more latent than the xeon in fetching data. not that the opteron's memory crosslinks are slower, but that the memory controllers themselves are slower.
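for anyone following along, here is a rough sketch of the two access patterns being argued about: a linear walk that a prefetcher can follow, and a randomized pointer chase that it can't. this is not the test program from the thread (that code isn't shown here); the function names are made up for illustration, and python timings would say nothing about caches, so the sketch only builds and walks the chains.

```python
import random

def sattolo_cycle(n, seed=0):
    """Build nxt so that following i -> nxt[i] visits all n slots in one cycle.

    Hypothetical helper: uses Sattolo's algorithm, which always yields a
    single cycle, so the chase cannot get stuck in a short loop.
    """
    rng = random.Random(seed)
    nxt = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # 0 <= j < i, never i itself
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return nxt

def linear_cycle(n):
    """Prefetch-friendly chain: slot i always points at slot i + 1."""
    return [(i + 1) % n for i in range(n)]

def chase(nxt, start=0):
    """Follow the chain once around and return the slots in visit order."""
    order = []
    i = start
    for _ in range(len(nxt)):
        order.append(i)
        i = nxt[i]
    return order
```

both chains touch every slot exactly once per lap; the only difference is whether the next address can be guessed from the current one. a real measurement would do the same walk in C over a buffer much larger than the cache.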

mikeblas said: I don't think it's flawed at all. The intention is to show and measure the difference between NUMA and shared memory buses, and it does exactly that. To measure a difference, it has to be identifiable.

well it is flawed, as it assumes from the beginning that your memory addressing is always going to be random when in reality it NEVER will be unless you are writing a malicious piece of software that is out to F@#$ up a system's stability. as a synthetic benchmark it's useless because no real software is going to look anything up at a random memory address unless it's to pick through the contents of another app's memory space. there is no pattern other than malicious intent that a random memory address lookup can be used for. well, i guess you could use it for crypto encoding and key creation, but with xeons and opterons the empty-pipeline penalty you pay is way too high to make it useful vs. another scheme.

mikeblas said: I'm not trying to simulate anything. Meanwhile, I don't think it would be easy to find a good or experienced software engineer who would agree with the balance of your point.

what? find me any software engineer who isn't about to get fired who would agree that picking out random memory addresses to read data from is a way to write software, period. let alone specifically coding random memory address lookups between 2 cpus haphazardly. your example is a very poor way to show one tiny aspect of each architecture's strong point and weak point. and you even drew the wrong conclusions from that to boot. you didn't narrow down the test enough to prove a point about opteron or xeon. nor did you involve the architectures' real world performance in memory reads. the latency could be from a number of different sources other than the memory cross connect in the opteron. frankly, using a random address to avoid how the cpu would actually fetch data, and not involving the pipelines of each and their strengths and weaknesses, isn't good testing methodology. in the real world large data sets get a big boost from opteron vs. xeon. smaller data sets favor xeon, down to the point where they fit and reside in cache or are linear enough that the pre-fetch can work its magic. also clock speed plays a big role in how fast a simple instruction can be handled, and a more complex cpu can handle varied items easier etc...

mikeblas said: Any memory access might be non-sequential: any lookup table or hash causes strides through memory to be at irregular intervals, for example. Code itself does too, as it executes: branches are taken, functions are called, and so on. Traversing a linked list, tree, or heap are additional examples of non-predictive accesses through the address space since the next node of the structure might lie at a location beyond the cache line length from the current node.

Why would you identify non-consecutive memory access by an application as a symptom of malicious code?

i didn't say anything about non-sequential. you picked a memory address at random to fetch from. you didn't put anything there ahead of time and you're not relying on the actual data that's there, just discarding it. i didn't say sequential, i said random. as in whatever is there is there, and i won't know where i'm getting it from ahead of time, nor did i plan to put what i want where it does the most good.
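the linked-list case from the quote above can be made concrete with a small sketch: given the addresses of the nodes in traversal order, count how many hops land in a different cache line than the current node. the 64-byte line size and the node addresses below are assumptions for illustration, not numbers from anyone's test in this thread.

```python
CACHE_LINE_BYTES = 64  # assumption: a common line size, not stated in the thread

def hops_leaving_line(addresses, line_bytes=CACHE_LINE_BYTES):
    """Count traversal steps whose next node starts in a different cache line."""
    misses = 0
    for cur, nxt in zip(addresses, addresses[1:]):
        if cur // line_bytes != nxt // line_bytes:
            misses += 1
    return misses

# Hypothetical layouts for an 8-node list, in traversal order.
# packed: 16-byte nodes allocated back to back.
packed = [0x1000 + 16 * i for i in range(8)]
# scattered: nodes handed out from all over the heap.
scattered = [0x1000, 0x9040, 0x2200, 0x7F80, 0x1010, 0x5000, 0x3300, 0x8800]

packed_hops = hops_leaving_line(packed)        # 1 of 7 hops changes line
scattered_hops = hops_leaving_line(scattered)  # all 7 hops change line
```

the data in both lists is planned and meaningful, yet the scattered one still defeats a sequential prefetcher on every hop. whether that counts as "random" in the sense used above is exactly what the two posters disagree on.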

mikeblas said: Multi-threading optimization is about maximizing concurrency, not assuming memory access patterns are linear.

Protect data integrity? If an application is relying on the distance between two data sets in memory for data integrity, that application is fundamentally flawed. Code should deliberately write to the memory it owns and therefore avoid touching memory it doesn't own.

you misunderstand what i said. i said multi PROCESSING requires data integrity. not multi threading (which should also have data integrity as its foremost precondition. if you can't rely on the data being correct because of execution order, you don't have true coherency and should not multi thread.)

mikeblas said: Regardless, in this test app only one thread was active at a time. No memory was shared between threads or processes, period. The addresses shown are the same because the memory was freed, then reallocated without any intervening allocations. Plus, they're virtual addresses and not physical locations.

Since there was only one thread running at a time, there was no data interdependency between threads in this application at all. There could not have possibly been because there was only one runnable thread at any moment.

In fact, it's possible to demonstrate similar numbers without ever creating a second thread.

Sure, the two Xeons will trip over each other if they're not in a NUMA system.

I described this in my previous post, by the way. And I gave a link to a program that can help actually demonstrate the phenomenon, and further included some of the results from my own machine.

Is it polling, or just entering a wait state?

I fully understand that much about NUMA, at least.

there is no debate on this point, as i can't see your code, nor does it really matter given what i stated above.

mikeblas said: My example is exactly intended to show that NUMA-unaware code on a NUMA-enabled system can pay a significant memory access penalty.

My claim is that the vast majority of developers don't think about heap-allocated memory being affined to a particular processor or thread, and so the vast majority of code in shipping products today isn't NUMA-ready.

I wouldn't call this optimization: the penalty of 40% that I measured is pretty substantial, especially considering the other processor wasn't busy at all. Writing code with the unique requirements of NUMA in mind is a requirement.

Of course, this leads to a very interesting problem. If you want to use both processors cooperatively, then you eventually have to copy the data from memory owned by one processor to the other so the processor accepting the data isn't paying an access penalty (or disrupting the first processor) during every access.

This isn't an issue on non-NUMA systems, since both processors have direct access to all available memory. And so that's another facet of NUMA which will hinder applications not specifically written with the characteristics of the architecture in mind.

I think it's very important that someone planning on building a NUMA-based system understands the shortage of software available that's able to run well on NUMA systems.
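the copy-versus-remote-access trade-off described in the quote above can be put into rough numbers. this is a back-of-envelope model only: it takes the 40% cross-node penalty quoted above at face value, treats a local access as 1 cost unit, and assumes copying an item costs one remote read plus one local write. none of these functions come from the test program in the thread.

```python
def cost_remote(accesses, penalty=0.40, local=1.0):
    """Cost of leaving the data on the other node and paying the penalty each time."""
    return accesses * local * (1.0 + penalty)

def cost_copy_then_local(items, accesses, penalty=0.40, local=1.0):
    """Cost of copying every item across once, then doing all accesses locally.

    Assumption: copying one item = one remote read + one local write.
    """
    copy = items * (local * (1.0 + penalty) + local)
    return copy + accesses * local

def break_even_accesses_per_item(penalty=0.40):
    """Accesses per item at which copying first costs the same as staying remote.

    From accesses * (1 + p) == items * (2 + p) + accesses.
    """
    return (2.0 + penalty) / penalty
```

under these assumptions the break-even works out to about 6 accesses per item at a 40% penalty: data touched more often than that is cheaper to copy over first, data touched less often is cheaper to read across the link. a smaller penalty pushes the break-even higher.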

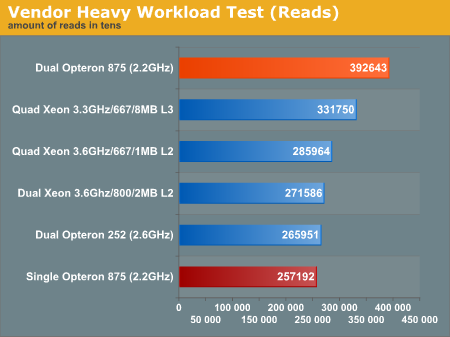

well i find fault in your conclusions based on a limited code example in a very non-real world scenario. numa is designed for separate processes to run at greater speed. memory penalties aside, the platform strengths of the opteron lean toward database apps and non sse3 optimized code requiring floating point power. render farms will be faster on xeon or opteron platforms depending on the render engine you choose. some workstation apps rely on higher clock speed and favor the xeon when they don't have large data sets. the opteron's memory controllers don't hurt it at all, as it excels at large data set manipulation over the xeon. so as i said, your attempt was a good thought but not a real representation of real world performance, as it doesn't factor in enough elements to judge a platform by.

and i still don't think you get the point of numa. so here we go one last time.

if you have a separate PROCESS, such as windows virtual server running with win 2k3 installed as the virtual environment, you don't want the second virtual server sharing the same memory space as the first real win server 2k3 that the virtual environment is running under. so separate memory entirely devoted to a PROCESS or set of PROCESSES is a big speed benefit if it has its own dedicated memory controller.

same scenario on non-numa.

you get a protected memory area to use as if it's dedicated. however each process or set of processes now has to wait on the other for memory, causing a split of memory bandwidth and latency issues that result in harmful cache read misses or empty pipelines waiting on instructions. on xeon this translates into a further slowdown of the cpu's efficiency. on opteron it doesn't hurt as much, as it has a shorter pipeline. and yes, there are non-numa dualie opteron systems out there as well as numa xeon systems.

this is a real world example of what numa was designed FOR. virtual environments.

machine partitioning as in mainframe/mini/super computers is another real world situation that numa excels at.

separate processes, such as a database running under its own process, benefit from numa as well, as the data set is localized to the cpu handling it.

also numa can see a penalty in other situations where multithreading and larger data sets exceeding the local cpu's onboard cache come into play, as both cpus would be working on different pieces of the same data set and you then get a latency penalty for the data transfer back and forth. opterons however handle this gracefully if coded correctly to do so, and like a piece of $h|7 if you don't. xeons in numa handle it like crap, period, because numa is tacked on at the chipset level with no cpu specific instructions to deal with it, so you have to really watch it on numa xeons. but considering their numerical insignificance you won't see one unless it's for a specific app.
