2080ti 2080 Ownership Club

- Thread starter Comixbooks

- Start date

If I set my power limit from 100 to 112 in Afterburner, will that allow my 2080 Ti Black to boost to higher clocks?

That is what should happen. I would be very interested in hearing your results. Is your card a 300 or 300A BIOS?

XoR_

[H]ard|Gawd

- Joined

- Jan 18, 2016

- Messages

- 1,566

Yes, it will allow your 2080Ti Black to burn faster

It seems to boost in the low 1800s during gaming. Is that pretty good for a $999 Ti? Any way to check if it's a 300 or 300A chip?

Dayaks

[H]F Junkie

- Joined

- Feb 22, 2012

- Messages

- 9,774

My Asus Dual boosted into the high 1800s. If it settles around there that’s fine. You won’t get much higher without a higher power limit found on the $1300+ cards. Max stable on any card with high PL and great cooling is usually 2050-2100. So you are within 10% of best case.
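To put the "within 10%" estimate in numbers, here is a minimal sketch. The two sample clocks (1815 MHz for "low 1800s" and 1890 MHz for "high 1800s") are assumptions picked for illustration, not measurements from the thread; only the 2050-2100 range comes from the post. With those picks the gap works out to roughly 8% to 14%, so "within 10%" is a fair ballpark rather than an exact figure.

```python
def shortfall_pct(observed_mhz: float, best_case_mhz: float) -> float:
    """Percent by which observed_mhz falls short of best_case_mhz."""
    return (best_case_mhz - observed_mhz) / best_case_mhz * 100


# Assumed sample points for the clocks described in the thread (hypothetical values).
observed = {"low 1800s": 1815, "high 1800s": 1890}

# Best-case stable range quoted in the post, in MHz.
best_case = (2050, 2100)

for label, mhz in observed.items():
    for target in best_case:
        gap = shortfall_pct(mhz, target)
        print(f"{label} ({mhz} MHz) vs {target} MHz: {gap:.1f}% short")
```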

GPU-Z will tell you.

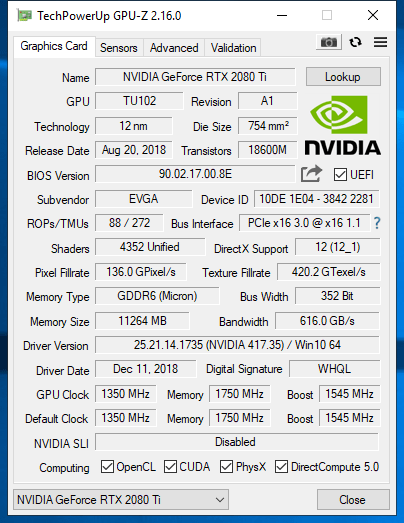

I am looking at GPU-Z and I can't tell where it is lol, what field should I be looking in?

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

Post some screenshots

I'm pretty sure it's on the Advanced tab. The main difference is the 300A can have a higher power limit, up to 380 watts. You are able to flash it with different BIOSes. The 300 has a power limit of 112%.
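The two limits above are in different units (a percentage and watts), so a quick conversion helps compare them. This is only a sketch: the 250 W default board power is an assumption for a reference-spec 2080 Ti and is not stated in the thread, so check the actual default for your card in GPU-Z before trusting the output.

```python
# Assumption: 100% in Afterburner corresponds to a 250 W default board power.
ASSUMED_DEFAULT_BOARD_POWER_W = 250


def limit_to_watts(percent: float, base_w: float = ASSUMED_DEFAULT_BOARD_POWER_W) -> float:
    """Convert a power-limit slider percentage into watts."""
    return base_w * percent / 100


def watts_to_limit(watts: float, base_w: float = ASSUMED_DEFAULT_BOARD_POWER_W) -> float:
    """Convert a wattage cap into the equivalent slider percentage."""
    return watts * 100 / base_w


print(f"112% limit is about {limit_to_watts(112):.0f} W")
print(f"380 W cap is about {watts_to_limit(380):.0f}% of the assumed default")
```

Under that assumption the 112% cap is about 280 W, while a 380 W BIOS would sit around 152% of the same baseline.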

XoR_

[H]ard|Gawd

- Joined

- Jan 18, 2016

- Messages

- 1,566

My power limit in Afterburner is only 112% so I am pretty sure I have a 300 chip then.

If it is an EVGA Black and the price was "only" $999 then there is a 100% probability it is a "300" chip.

Not that it matters that much anyway, as this whole chip segregation seems to be an artificial, simple ploy to disallow card manufacturers from selling overclocked cards or setting a higher TDP on models that are cheaper than the Founders Edition. All Turing chips overclock pretty much the same anyway.

What matters is the TDP limit in the BIOS, because especially with OC it can limit performance somewhat.

I would not worry too much about it. In games the card does not hit these TDP limits that often, especially without something like an i9 9900K and especially if you do not have a 4K monitor.

Anyway, $999 should be the max these cards should cost, and the non-FE chip is better value for money.

Mad Maxx

Supreme [H]ardness

- Joined

- Apr 12, 2016

- Messages

- 7,322

All the 2080Ti flame out problems drove me to the 2080. I'm perfectly satisfied with its performance. My agency pays for all my hardware, so I feel extremely lucky to get a $900 GPU for nothing.

I am considered one of the dunce "side-graders" who went from 2x GTX 1080s SLI to a GTX 2080Ti. It was my first time getting a "Ti" series card, as all along all my rigs were using SLI (GTX 580/780/1080s x2), so I mistakenly thought 2x GTX 1080Tis = a GTX 2080Ti.

To make a long story short, I wasn't disappointed with the GTX 2080Ti although I wasn't seeing any major boost in frame rates coming from GTX 1080x2 SLI. BUT what a whopping difference it made to games without SLI support (or with poor SLI scaling) like Doom, Wolfenstein, Star Wars Battlefront, Titanfall 2, Resident Evil 7 etc.

Out of curiosity, I tried using one of the GTX 1080s as a dedicated PhysX card to complement the GTX 2080Ti and found rather odd results:

Nvidia Physx tests

(A) GTX2080Ti + GTX1080 vs

(B) GTX2080Ti alone

* Metro 2033 benchmark

(A) 92 fps

(B) 94 fps (!)

* Batman Arkham Knight

(A) 101 fps

(B) 95 fps

* Rise of Tomb Raider

(A) 118 fps

(B) 113 fps

* Shadow of Tomb Raider

(A) 84 fps

(B) 86 fps (!)

Using the GTX 1080 as a dedicated PhysX card was simply not worth it: the gain was a marginal 5 to 6 fps in two of the games, and strangely the other two ran 2 fps slower with it installed! Not only that, it also showed that the GTX 2080Ti certainly has no problem running PhysX effects maxed out on its own, without the need for a dedicated GPU, as the frame rates were all above 85 fps.

But the bottom line was that I had expanded my upgrade options going from 2x GTX 1080s SLI to a single GTX 2080Ti, as my next upgrade path in future would be to add another GTX 2080Ti for SLI. As for added bonuses like ray tracing and DLSS, I have yet to see if the improved eye candy would require a sacrifice in frame rates so I'm not too optimistic about these features yet.
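For anyone who wants the PhysX comparison as per-game deltas, here is a small sketch that uses only the four benchmark pairs posted above. The function and variable names are made up for illustration. It shows gains of +6 and +5 fps in two titles and a loss of 2 fps in the other two.

```python
# (A) 2080Ti + 1080 as dedicated PhysX card, (B) 2080Ti alone, fps as posted.
results = {
    "Metro 2033 benchmark": (92, 94),
    "Batman Arkham Knight": (101, 95),
    "Rise of Tomb Raider": (118, 113),
    "Shadow of Tomb Raider": (84, 86),
}


def physx_delta(with_physx_card: int, alone: int) -> tuple[int, float]:
    """Return (fps difference, percent difference) relative to the single card."""
    diff = with_physx_card - alone
    return diff, diff / alone * 100


for game, (a, b) in results.items():
    diff, pct = physx_delta(a, b)
    print(f"{game}: {diff:+d} fps ({pct:+.1f}%)")
```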

NukeDukem

2[H]4U

- Joined

- Feb 15, 2011

- Messages

- 2,659

The Strix O11G is a beast! I love the looks of this thing. It reminds me of something created by Cyberdyne Systems:

Furious_Styles

Supreme [H]ardness

- Joined

- Jan 16, 2013

- Messages

- 4,534

You're not a dunce, SLI is generally inferior these days to a single card. A single card means far fewer headaches trying to get profiles and games working properly.

By the way, I just got BF5 at half price and couldn't wait to try out ray tracing. Just like to share my findings on my i7-4770K @ 4.2GHz/16 GB RAM/GTX 2080Ti/Win10 version 1809 setup:

Framerates tested at the beginning of Story mode's "Under no flag" first "Follow Mason" level

DX11 (DXR disabled) : 110 fps

DX12 (DXR enabled @ Ultra) : 52 fps

DX12 (DXR enabled @ High) : 52 fps

DX12 (DXR enabled @ Medium) : 80 fps

This is with all graphics settings at Ultra except TAA at Low running at ultra-wide 3440x1440 resolution.

Medium DXR seems to be the sweet spot with everything at Ultra, but I'm sure it can run at DXR High/Ultra if some of the graphics settings are lowered correspondingly.

I'll settle for higher framerates at DX11 with ray-tracing off.

I play BF5 to engage in battle, not to admire my own reflection off a glossy metallic car/tank/barrel of my rifle.

That would really be neat on a slower paced platform or adventure RPG game (Tomb Raider, Bioshock, Spiderman, Witcher) but not a fast paced war FPS.
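The DXR numbers above are easier to weigh as a relative cost. This is a rough sketch built only on the four frame rates posted. Note the baseline is DX11 and the DXR runs are DX12, so part of the gap may come from the API switch rather than ray tracing itself. By this arithmetic, Ultra/High cost roughly 53% of the frame rate and Medium roughly 27%.

```python
BASELINE_FPS = 110  # DX11, DXR disabled (as posted)
DXR_FPS = {"Ultra": 52, "High": 52, "Medium": 80}  # DX12, DXR enabled (as posted)


def dxr_cost(baseline_fps: float, dxr_fps: float) -> tuple[float, float]:
    """Return (percent of frame rate lost, extra milliseconds per frame)."""
    pct_lost = (baseline_fps - dxr_fps) / baseline_fps * 100
    extra_ms = 1000 / dxr_fps - 1000 / baseline_fps
    return pct_lost, extra_ms


for setting, fps in DXR_FPS.items():
    pct, ms = dxr_cost(BASELINE_FPS, fps)
    print(f"DXR {setting}: {pct:.0f}% fewer frames, +{ms:.1f} ms per frame")
```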

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

A bit to be expected with BFV; I might leave it on for the single-player campaign stuff, but multiplayer will be multiplayer.

Using the GTX 1080 as a dedicated PhysX card was simply not worth it: the gain was a marginal 5 to 6 fps in two of the games, and strangely the other two ran 2 fps slower with it installed! Not only that, it also showed that the GTX 2080Ti certainly has no problem running PhysX effects maxed out on its own, without the need for a dedicated GPU, as the frame rates were all above 85 fps.

I came to the same conclusion (traded my single GTX 1080 for a single RTX 2080 Ti) -- have you considered using one of your 1080s as an NVENC card instead?

As I understand it, the RTX cards are supposed to have a more efficient NVENC chip onboard, but I'm wondering if maybe it makes sense to use the 1080 instead for local recording (at extremely high bitrates) without consuming bandwidth on the main rendering card.

Legendary Gamer

[H]ard|Gawd

- Joined

- Jan 14, 2012

- Messages

- 1,590

What is NVENC? Anyways I've already sold both my GTX 1080s.

Only thing I can think of is dedicating it as a PhysX board... which I believe you still can do. Otherwise, I'm as lost as you are.

CAD4466HK

2[H]4U

- Joined

- Jul 24, 2008

- Messages

- 2,736

NVENC is the video encoding hardware that has been on every Nvidia GPU since Kepler.

It allows the GPU to encode video in hardware using its own codec support, thus offloading the work from the CPU. Every new gen of NVENC adds more codec support or increases FPS thresholds, allowing less and less reliance on the CPU and software. Turing is 6th generation NVENC.

Every time you use OBS to capture or stream, for example, you are using this engine. Watching a movie uses its decode counterpart, NVDEC.

The next time you watch a movie, have GPU-Z running in the background and pay attention to the Video Engine Load sensor; that shows you how busy the video engine is.
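For the second-card recording idea raised earlier in the thread, here is a hedged sketch of how an NVENC encode could be pointed at a specific GPU using ffmpeg's `h264_nvenc` encoder and its `-gpu` option. The file names, the GPU index of 1 for the spare card, and the 50M bitrate are all assumptions for illustration, and it only builds the command line rather than running it, since that needs an ffmpeg build with NVENC support.

```python
import shlex


def nvenc_record_cmd(src: str, dst: str, gpu_index: int = 1, bitrate: str = "50M") -> list[str]:
    """Build (but do not run) an ffmpeg command that encodes video with NVENC."""
    return [
        "ffmpeg",
        "-i", src,
        "-c:v", "h264_nvenc",    # ffmpeg's NVENC H.264 encoder
        "-gpu", str(gpu_index),  # encoder option: which NVENC-capable GPU to use (0 = first)
        "-b:v", bitrate,         # target video bitrate
        "-c:a", "copy",          # pass audio through untouched
        dst,
    ]


# Hypothetical file names; index 1 assumes the spare card is the second GPU.
cmd = nvenc_record_cmd("gameplay_raw.mkv", "gameplay_nvenc.mkv")
print(shlex.join(cmd))
```

Whether the spare card really ends up as index 1 depends on how the driver enumerates the GPUs, so treat that as something to verify rather than a given.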

Legendary Gamer

[H]ard|Gawd

- Joined

- Jan 14, 2012

- Messages

- 1,590

Thanks. Learnt something new. It's good to know that the GPU can offer hardware acceleration for playing movies as well. Especially useful for 4K movies, although I don't have the software to play 4K movies.

I knew video cards accelerated video but I had completely spaced the fact Nvidia had a dedicated rendering engine. As for 4k playback, it will get here in online streaming in some compressed as hell, upscaled nightmare that our cards will accelerate. I think there's only like 1-3 PC DVD drives that can handle playback (Pioneer, LG maybe); it's not like playing HD DVD or standard Blu-ray on PC, the latter of which got really cheap to do over time. I gave up and bought a decent LG 4K player for 120 bucks after spending too much time looking at how I had to use an Intel iGPU to push it (no thanks).

Legendary Gamer

[H]ard|Gawd

- Joined

- Jan 14, 2012

- Messages

- 1,590

Ty for taking the time for the info. It's amazing what I take for granted with tech sometimes.

IdiotInCharge

NVIDIA SHILL

- Joined

- Jun 13, 2003

- Messages

- 14,675

As for 4k playback

This is less the resolution and more the codec used. HEVC, being the common one, is very resource-intensive to decode and even more resource-intensive to encode, but is also very efficient in terms of bandwidth. Personally, I feel that Netflix and Amazon do a pretty good job with 4k streaming. It's not UHD Blu-ray, but it gets the job done. I buy the discs of stuff that I want all the detail and the Dolby Atmos/Vision tracks for.

[one community that's pretty well informed on whose encoders/decoders are doing well with which codecs is the Plex streaming community- right now, NVENC is the 'gold standard', while Intel's IGPs are actually pretty good, and AMD's aren't worth using- and for those looking to support a large number of streams, cheap Quadros work best!]

Legendary Gamer

[H]ard|Gawd

- Joined

- Jan 14, 2012

- Messages

- 1,590

Dude! Loving the information today! You guys rock! I'm totally checking that out. I was considering running Plex in my home once I convert like 100+ DVDs to digital media.

What should I rip the DVDs with? I used to use things like MakeMKV but you seem to know a lot more about this stuff than I do. Is there a decent program out there that I can rip my HD DVD collection with? The only things I want to hang on to these days are my Blu-rays.

If you don't have data caps just download them. It's legal because you own a copy.

Legendary Gamer

[H]ard|Gawd

- Joined

- Jan 14, 2012

- Messages

- 1,590

I'm fairly certain that torrenting them off of YTS or another pirate outlet is still illegal. I would have a lot of fun telling a judge that "I own a physical copy of the movie right here, so I went to a pirate site and downloaded an illegally ripped copy for myself...". Lol.

Some films come with access to the digital files. Ripping them to my local server keeps my ass and my IP address the hell away from copyright trolls.

Yes, I know I could use a VPN but even those services aren't bulletproof.

If you're going to nitpick, even ripping it is illegal.

https://lifehacker.com/5978326/is-it-legal-to-rip-a-dvd-that-i-own

Don't torrent. Use Usenet (which is SSL encrypted) and then use a VPN to hide your DNS, with a kill switch to cut off internet if it fails. *cough* A friend of mine does this, and has for years, and has never received a single DMCA request.

Legendary Gamer

[H]ard|Gawd

- Joined

- Jan 14, 2012

- Messages

- 1,590

I know. Not nitpicking, just saying what's safer and uses no data. I'm an old IT guy that never touched Usenet. Amazingly.

I have a standard Comcast data cap... Which is amazing on Gig internet.

To each their own, my friend.

Solhokuten

[H]ard|Gawd

- Joined

- Dec 9, 2009

- Messages

- 1,541

I got the EVGA Hydro Copper block for my FTW3 Friday. It is a very nice piece and I am pretty happy with the results. The temps shown are after 30+ minutes of Heaven.

View attachment 126380

Did you reuse the thermal pads or did it come with a sheet?

Dayaks

[H]F Junkie

- Joined

- Feb 22, 2012

- Messages

- 9,774

spintroniX

Gawd

- Joined

- Apr 7, 2009

- Messages

- 974

In for two Founders Edition cards.

Spent a couple hours with some deep learning last night, and no evidence of failure yet. Fingers crossed.

https://imgur.com/Vdlcz9a

Armenius

Extremely [H]

- Joined

- Jan 28, 2014

- Messages

- 42,122

So apparently EVGA released the hybrid kit a couple weeks before Christmas while I wasn't paying attention. Hopefully more will come in stock and I can grab one. Anyone get one and install it on theirs? People on the forum have been complaining about malfunctioning pumps and failed solder joints on the fan/RGB cable, so hopefully that was only an issue with the first batch.

https://www.evga.com/products/product.aspx?pn=400-HY-1384-B1

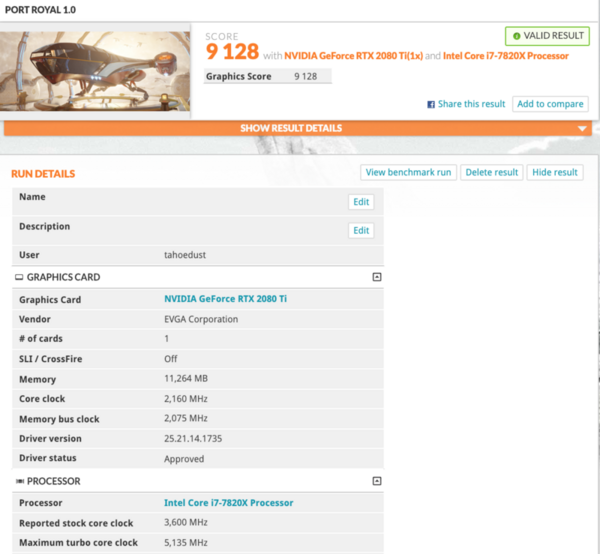

TahoeDust

Limp Gawd

- Joined

- Dec 3, 2011

- Messages

- 502

Did you reuse the thermal pads or did it come with a sheet?

It came with new pads installed on the block.

TahoeDust

Limp Gawd

- Joined

- Dec 3, 2011

- Messages

- 502

Dayaks

[H]F Junkie

- Joined

- Feb 22, 2012

- Messages

- 9,774

I had 3x 1080ti hybrids die from EVGA after a month. I think they just suck balls.

I went full water block with my 2080ti... really not much more in the scheme of things.

The kicker with EVGA is that they wanted me to pay shipping cross country for three cards with a known issue. Fuck them. I liked Legendary Gamer's thread where after four RMAs they would refund with a 15% restocking fee for his 2080ti.
