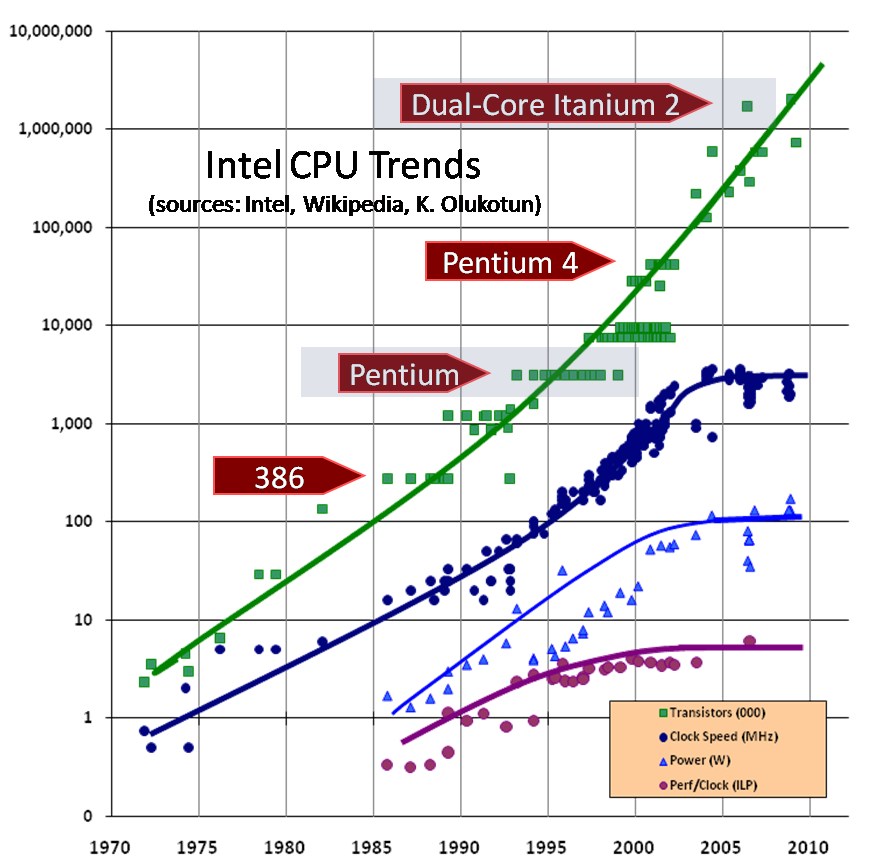

I built my first computer in 1991: a 486DX/25, which really blew the doors off the 386/33s the other computer nerds in the dorm had at the time. I've built countless systems for myself since then. Back then, it was assumed that clock speed would just keep increasing, just like transistor count, but of course Intel eventually hit the GHz wall with the Pentium 4. Multiple cores then took over and kept the performance increases going, albeit at a reduced rate.

Then, about a year ago, Intel announced it was moving from shrinking feature sizes every two years to every three years, because it is nearing the quantum limits of silicon. I assumed that meant we were approaching the end of the golden age of computing. But now that I'm building another system, I'm starting to wonder if it actually ended some years ago, and I'm not sure why.

I paid $315 for an i7-2600K almost six years ago. Today, the highest-performing CPU for about the same money ($324) is the Ryzen 1700. The PassMark scores for the 2600K and Ryzen 1700 are 8486 and 13797, respectively: only a 63% improvement in SIX YEARS. That works out to a whopping 8.4% annualized increase in performance at roughly the same price (about 7.9% per year in performance per dollar, since the Ryzen costs $9 more). It's even worse for Intel. For roughly that money, the best you can get is either an i7-6700K or an i7-7700, both of which score around 11,000: only a 30% improvement, or 4.4% annualized. These days I think muscle car horsepower is increasing faster than that.
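For anyone who wants to check the math or plug in other chips, here's a quick sketch of the compound annual growth calculation: (new / old)^(1 / years) - 1. The scores and prices are the ones quoted above; the 11,000 figure for the i7-6700K/i7-7700 is the approximate "around 11,000" number, and `annualized_growth` is just a helper name I made up for illustration.

```python
def annualized_growth(old: float, new: float, years: float) -> float:
    """Compound annual growth rate: (new / old) ** (1 / years) - 1."""
    return (new / old) ** (1.0 / years) - 1.0

# PassMark scores quoted in the post
i7_2600k = 8486        # bought ~6 years ago for $315
ryzen_1700 = 13797     # $324 today
intel_current = 11000  # approximate score for i7-6700K / i7-7700
years = 6

for name, score in [("Ryzen 1700", ryzen_1700), ("i7-6700K/7700", intel_current)]:
    total = score / i7_2600k - 1  # total improvement over six years
    cagr = annualized_growth(i7_2600k, score, years)
    print(f"{name}: {total:.0%} total, {cagr:.1%} per year")
# Ryzen 1700: 63% total, 8.4% per year
# i7-6700K/7700: 30% total, 4.4% per year

# Performance per dollar, using $315 then vs. $324 now
ppd = annualized_growth(i7_2600k / 315, ryzen_1700 / 324, years)
print(f"Ryzen perf/$: {ppd:.1%} per year")
# Ryzen perf/$: 7.9% per year
```

Swap in whatever scores and prices you like; the compounding is what makes six years of "only" 63% come out to single digits per year.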

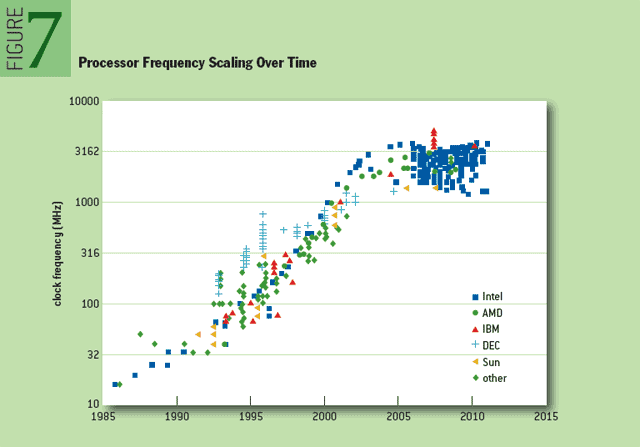

A few months ago I was talking to a former Intel employee who was at retirement age, and I mentioned that I'd heard the boom years were the '70s and '80s. She said the '90s were good as well, but that things changed after 2000. By an interesting coincidence, that's when the Pentium 4 came out. Does anyone have a chart of desktop processor performance vs. year? I have to think the rate slowed after 2000, and then more recently slowed to a crawl.

![[H]ard|Forum](/styles/hardforum/xenforo/logo_dark.png)