elvn

I've read some interesting info here:

https://www.reddit.com/r/Overwatch/comments/3u5kfg/everything_you_need_to_know_about_tick_rate/

So there is tuning going on...

So if I'm reading it right you'd have to see

....first, the frame you'd be reacting to, delivered at your local frame rate (though that's being altered/resolved throughout by the true server state overwriting your local one, based on the server's latency compensation code tied to its tick rate)

....then add your reaction time, e.g. 150ms, but more like 180ms - 250ms with some variance (this is a huge number frames-wise: a .180 second reaction spans ~21 frames at 117fps!)

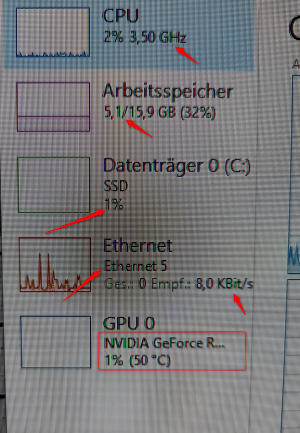

....then add the input lag of your mouse, keyboard, and PC hardware. According to the nvidia charts posted earlier, system lag can be 33ms to 77ms across the three games listed. That's another 4 to 9 frames at 117fps (.033 to .077 seconds).

.. and then add the 4ms (a gaming monitor, .004 seconds) or 13ms (LG CX, .013 seconds) input lag of your display? That's a .009 second difference.
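
A quick sketch to sanity-check the frame counts in the steps above. It assumes a perfectly steady 117fps, and `ms_to_frames` is just an illustrative helper name, not anything from the linked article.

```python
def ms_to_frames(delay_ms: float, fps: float = 117.0) -> float:
    """Number of rendered frames that go by during delay_ms at a steady fps."""
    return delay_ms / 1000.0 * fps


# Delays taken from the post: reaction time, system lag range, display input lag.
delays_ms = [
    ("reaction time", 180),
    ("system lag (low)", 33),
    ("system lag (high)", 77),
    ("gaming monitor", 4),
    ("LG CX", 13),
]

for label, ms in delays_ms:
    print(f"{label:>18}: {ms:>3} ms = {ms_to_frames(ms):4.1f} frames at 117fps")
```

The two display figures both come out at under two frames, while the reaction time alone is around twenty.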

So is this quasi-accurate?

--------------------------------------

... ~8.5ms local frame time (if at a SOLID 117fps), but throughout "overwritten" by things resolving on the server after being compared against rolled back/compensated latencies (pings), a 15ms tick-rate interpolation, and the return trip time.. that result shows you a registered server game state update, it hits your eyeballs, and you decide to react to it...

... + 180ms human reaction time (if being generous, and it varies throughout a game) + 27 to 51ms system latency in games that support nvidia reflex + 4ms or 13ms from a gaming monitor or LG CX display.
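
To put rough numbers on that chain, here is a minimal sketch that just adds the local pieces together. It leaves out the ping/tick part entirely, treats every stage as a fixed number (which it isn't), and `reaction_chain_ms` is a made-up helper name.

```python
def reaction_chain_ms(frame_ms: float, reaction_ms: float,
                      system_ms: float, display_ms: float) -> float:
    """Sum of the local delays between a frame being rendered and your input landing."""
    return frame_ms + reaction_ms + system_ms + display_ms


frame_ms = 1000.0 / 117.0  # one frame at 117fps, about 8.5 ms

# Same reaction time and system latency, only the display differs.
total_monitor = reaction_chain_ms(frame_ms, 180, 27, 4)   # 4 ms gaming monitor
total_lg_cx = reaction_chain_ms(frame_ms, 180, 27, 13)    # 13 ms LG CX

extra_ms = total_lg_cx - total_monitor
share = extra_ms / total_monitor

print(f"gaming monitor chain: {total_monitor:.1f} ms")
print(f"LG CX chain:          {total_lg_cx:.1f} ms")
print(f"display difference:   {extra_ms:.1f} ms ({share:.1%} of the chain)")
```

With these inputs the 9ms display difference is only a few percent of the whole chain, before ping and tick rate are even counted.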

...

I don't think the online game cares when you see the result on your screen: screen input lag plays no part in how the server resolves actions. But in the overall flow, again, you usually can't react well to what you haven't seen yet (e.g. is your crosshair visibly on the target yet to your eyes?).... though some might compensate for even high input lag screens with best guesses on the fly in their heads and get by.

It seems to me that your system latency (~30 - 77ms), the comparatively very large and variable reaction times (~180ms +/-), and 20 - 40ms of variable ping + tick interval (15ms, 33ms or worse), along with the resulting compensation formula being shuffled into your frame state stream, would dwarf the difference between 4ms (.004 seconds) and 13ms (an additional .009 seconds) input lag screens.

Tick interval ~.0156 sec on the best servers (64 tick), most games are multiples worse (~.031 sec on 32 tick, ~.045 sec on 22 tick)
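
The tick interval is just one second divided by the tick rate, so it's easy to check. A small sketch (the function name is mine, and the rates are simply the ones mentioned in this post and the linked article):

```python
def tick_interval_ms(tick_rate_hz: float) -> float:
    """Time between server updates, in milliseconds, for a given tick rate."""
    return 1000.0 / tick_rate_hz


for rate in (64, 22, 20):
    print(f"{rate:>3} tick -> {tick_interval_ms(rate):.1f} ms between updates")
```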

Ping times .020 seconds to .040 seconds typically.

Latency as related to action delivery: after its delivery time relative to the tick, 1/2 of the latency is compensated for, to resolve when the action registered on the server (rewind time). The other 1/2 of the latency = return trip time (.010 sec to .020 sec at 20ms to 40ms ping). So everything on the server is really happening in the past.

Human reaction time .180 seconds +/-

4ms gaming monitor vs 13ms OLED = .009 seconds added difference

https://www.reddit.com/r/Overwatch/comments/3u5kfg/everything_you_need_to_know_about_tick_rate/

..Without lag compensation (or with poor lag compensation), you would have to lead your target in order to hit them, since your client computer is seeing a delayed version of the game world. Essentially what lag compensation is doing, is interpreting the actions it receives from the client, such as firing a shot, as if the action had occurred in the past.

The difference between the server game state and the client game state or "Client Delay" as we will call it can be summarized as: ClientDelay = (1/2*Latency)+InterpolationDelay
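
As a worked example of that formula, here is a tiny sketch. The 40ms ping is from the range discussed in this post; the interpolation delay of two ticks at 64 tick is purely my assumption for illustration, since real games set it differently.

```python
def client_delay_ms(latency_ms: float, interpolation_delay_ms: float) -> float:
    """ClientDelay = (1/2 * Latency) + InterpolationDelay, all in milliseconds."""
    return 0.5 * latency_ms + interpolation_delay_ms


latency_ms = 40.0                        # round-trip ping
interp_delay_ms = 2 * (1000.0 / 64.0)    # ASSUMED: two ticks of interpolation at 64 tick

delay = client_delay_ms(latency_ms, interp_delay_ms)
print(f"client sees the world about {delay:.2f} ms behind the server")
```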

...An example of lag compensation in action:

Note: In an example where two players shoot each other, and both shots are hits, the game may behave differently. In some games, e.g. CSGO, if the first shot arriving at the server kills the target, any subsequent shots by that player that arrive at the server later will be ignored. In this case, there cannot be any "mutual kills", where both players shoot within 1 tick and both die. In Overwatch, mutual kills are possible. There is a tradeoff here.

- Player A sees player B approaching a corner.

- Player A fires a shot, the client sends the action to the server.

- Server receives the action Xms later, where X is half of Player A's latency.

- The server then looks into the past (into a memory buffer), of where player B was at the time player A took the shot. In a basic example, the server would go back (Xms+Player A's interpolation delay) to match what Player A was seeing at the time, but other values are possible depending on how the programmer wants the lag compensation to behave.

- The server decides whether the shot was a hit. For a shot to be considered a hit, it must align with a hitbox on the player model. In this example, the server considers it a hit, even though on Player B's screen it might look like he's already behind the wall. The time difference between what Player B sees and the time at which the server considers the shot to have taken place is equal to: (1/2 * PlayerALatency + 1/2 * PlayerBLatency + TimeSinceLastTick)

- In the next "Tick" the server updates both clients as to the outcome. Player A sees the hit indicator (X) on their crosshair, Player B sees their life decrease, or they die.
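
The steps above can be sketched in a few lines. This is a toy model under heavy assumptions, not how any particular game does it: positions are one-dimensional, the server looks up the latest stored snapshot instead of interpolating, and `PositionHistory`, `shot_hits`, and all the numbers are made up for illustration.

```python
from bisect import bisect_right
from dataclasses import dataclass, field


@dataclass
class PositionHistory:
    """Server-side buffer of (time_ms, x) snapshots for one player, oldest first."""
    times: list = field(default_factory=list)
    xs: list = field(default_factory=list)

    def record(self, time_ms: float, x: float) -> None:
        self.times.append(time_ms)
        self.xs.append(x)

    def position_at(self, time_ms: float) -> float:
        """Latest recorded position at or before time_ms (no interpolation)."""
        i = bisect_right(self.times, time_ms) - 1
        return self.xs[max(i, 0)]


def shot_hits(history: PositionHistory, server_now_ms: float,
              shooter_latency_ms: float, shooter_interp_ms: float,
              aim_x: float, hitbox_half_width: float = 0.5) -> bool:
    """Rewind the target by (half latency + interpolation delay), then test the hitbox."""
    rewind_ms = 0.5 * shooter_latency_ms + shooter_interp_ms
    target_x = history.position_at(server_now_ms - rewind_ms)
    return abs(aim_x - target_x) <= hitbox_half_width


# Player B moves one unit per snapshot, recorded every 16 ms.
history = PositionHistory()
for step in range(6):
    history.record(step * 16, float(step))

# Player A aimed at x = 2.0, which is where B appeared on A's screen.
with_rewind = shot_hits(history, server_now_ms=80, shooter_latency_ms=40,
                        shooter_interp_ms=16, aim_x=2.0)
without_rewind = shot_hits(history, server_now_ms=80, shooter_latency_ms=0,
                           shooter_interp_ms=0, aim_x=2.0)
print("hit with lag compensation:   ", with_rewind)
print("hit without lag compensation:", without_rewind)
```

With the rewind, the server checks the shot against where B was on A's screen and counts it as a hit; without it, B has already moved on and A would have had to lead the target.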

So there is tuning going on...

- If you use the CSGO model, people with better latency have a significant advantage, and it may seem like "Oh I shot that guy before I died, but he didn't die!" in some cases. You may even hear your gun go "bang" before you die, and still not do any damage.

- If you use the current Overwatch model, tiny differences in reaction time matter less. I.e. if the server tick rate is 64 for example, if Player A shoots 15ms faster than player B, but they both do so within the same 15.6ms tick, they will both die.

- If lag compensation is overtuned, it will result in "I shot behind the target and still hit him"

- If it is undertuned, it results in "I need to lead the target to hit them".
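
The CSGO vs Overwatch difference in the list above can be shown with a very simplified sketch. It assumes both shots are kill shots, ignores lag compensation entirely, and only looks at when each shot arrives at the server; `resolve_duel` and its parameters are hypothetical names, not either game's real logic.

```python
import math


def resolve_duel(arrival_a_ms: float, arrival_b_ms: float,
                 tick_rate_hz: float = 64.0,
                 allow_mutual_kills: bool = True) -> set:
    """Return the set of players who die, given when each kill shot reaches the server."""
    interval_ms = 1000.0 / tick_rate_hz
    tick_a = math.floor(arrival_a_ms / interval_ms)
    tick_b = math.floor(arrival_b_ms / interval_ms)

    # Overwatch-style model: shots landing in the same tick both count.
    if allow_mutual_kills and tick_a == tick_b:
        return {"A", "B"}

    # Otherwise the earlier shot kills first and the later one is ignored
    # (an exact tie is arbitrarily given to A in this sketch).
    return {"B"} if arrival_a_ms <= arrival_b_ms else {"A"}


# Player A's shot arrives 15 ms before Player B's, inside the same 15.6 ms tick.
print(resolve_duel(94, 109, allow_mutual_kills=True))
print(resolve_duel(94, 109, allow_mutual_kills=False))
```

In the first call both players die; in the second only B does, because A's shot arrived first.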

...Generally, a higher tick-rate server will yield a smoother, more accurate interaction between players, but it is important to consider other factors here. If we compare a tick rate of 64 (CSGO matchmaking), with a tick rate of 20 (alleged tick rate of Overwatch Beta servers), the largest delay due to the difference in tick rate that you could possibly perceive is 35ms. The average would be 17.5ms. For most people this isn't perceivable, but experienced gamers who have played on servers of different tick rates, can usually tell the difference between a 10 or 20 tick server and a 64 tick one.
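
The 35ms and 17.5ms figures can be reproduced roughly from the tick intervals. A small sketch, assuming the worst case is simply the difference between the two intervals and the average is half of that (helper name is mine):

```python
def tick_interval_ms(tick_rate_hz: float) -> float:
    """Time between server updates, in milliseconds."""
    return 1000.0 / tick_rate_hz


worst_case_ms = tick_interval_ms(20) - tick_interval_ms(64)  # 50.0 - 15.625
average_ms = worst_case_ms / 2

print(f"worst-case extra delay, 20 tick vs 64 tick: {worst_case_ms:.1f} ms")
print(f"average extra delay:                        {average_ms:.1f} ms")
```

That gives about 34.4ms and 17.2ms, which lines up with the rounded numbers quoted above.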

Keep in mind that a higher tickrate server will not change how lag compensation behaves, so you will still experience times where you ran around the corner and died. 64 Tick servers will not fix that.
