Cool, I'll be interested to hear how that cable works out for you off of an HDMI 2.1 GPU later. Did you get the 20' one?

Yes. Never paid that much for a cable but from what I have been reading 6-8' is the limit for HDMI 2.1 via copper.

Yeah TBH I'd rather experiment with 3 $20 cables off Amazon and return the 2 that don't work vs dropping $120 on one.

Planning to (hopefully) get an RTX 3080 later this week and also a CX 48". The card I’m looking at only has 1 HDMI out port, which would run to the CX for Gsync. If I want to run audio out to my AVR, can I use a separate HDMI cable from my motherboard to the AVR?

Wait for others to reply to this, but I'm fairly sure you can audio out from the HDMI-ARC on the CX.

I use HDMI-eARC on my second computer from the CX to a Denon AVR. I do have to change the Windows audio format from 24-bit to 16-bit and then back to 24-bit every time I boot up, though.

I’ve heard of issues including this one with eARC, which is why I’m hoping to bypass it entirely by using a separate cable from my MB.

Don't know about Win10, but on Win7 I used a cheapo GPU (GT710) as a sound card by running it into my AVR. That was when nVidia had the "never clock down on multiple displays" issue.

You can also use a DisplayPort-to-HDMI cable. I'm doing this on my 2080 Ti right now.

Transmits Up To 100 Feet: SlimRun™ AV can perfectly transmit 4K@60Hz HDR video to distances up to 100 feet without extenders by using optical fiber to replace copper wire as the high-speed signal transmission medium.

4K 60; the Quest is 75 Hz (supposedly 90 Hz capable internally, but not supported). I don't know what the Quest 2's connection will be. I'm guessing it would work since the Quest uses compression in the signal.

Does this have any limitations on what it supports? E.g. does it pass through uncompressed 5.1 audio or support stuff like Dolby Atmos?

Doing the same thing with mine. Sadly the receiver I have it on is not Atmos capable. If I schlep my PC downstairs and connect it to that receiver I will update the forum.

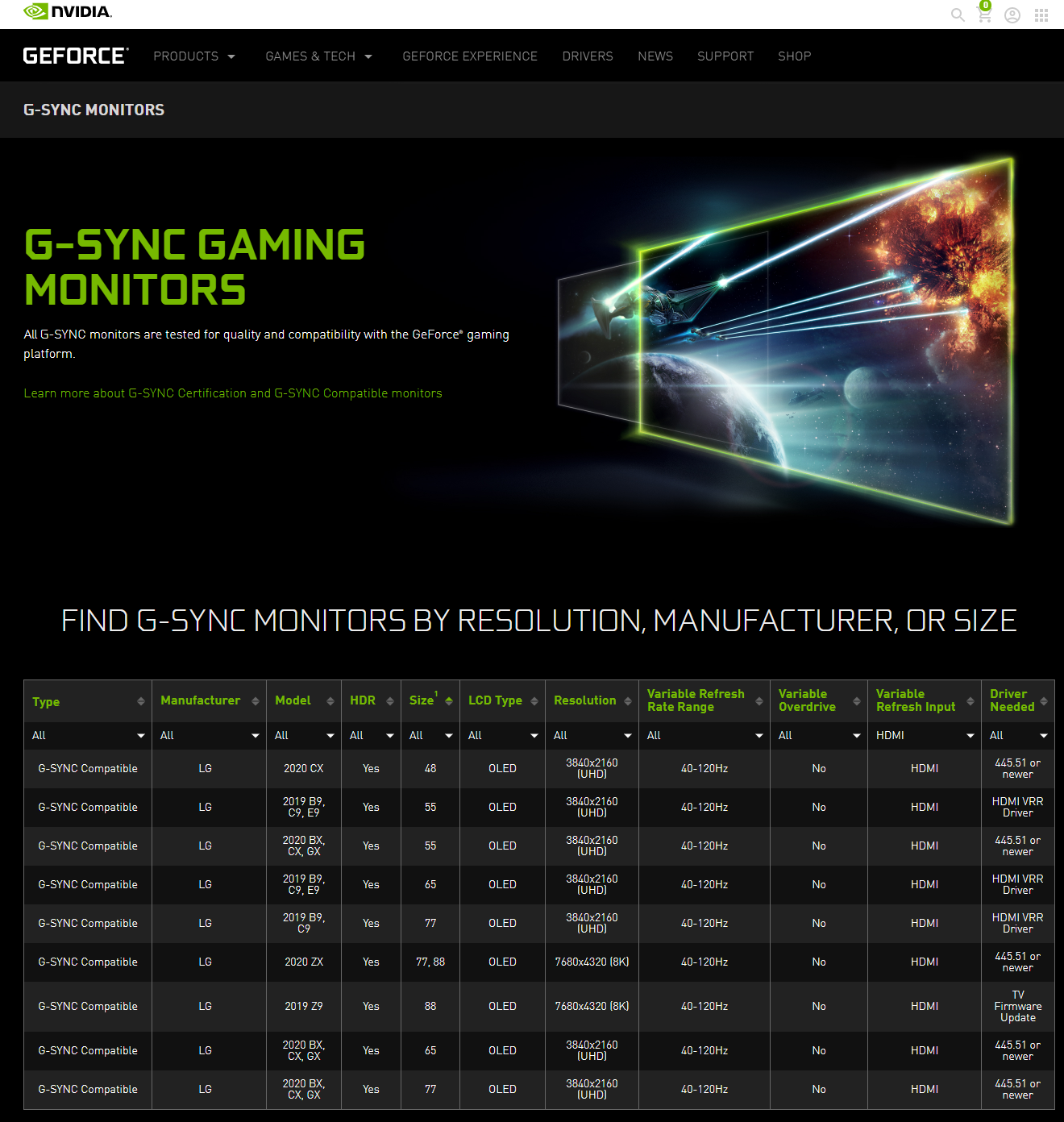

So we have confirmation that, on launch, there's a good chance HDMI 2.1 with VRR won't even work.

No wonder no one's covering it. Nvidia probably told shills to downplay it.

If it makes you feel any better, and it may, I turned off gsync on my C9. Guess what? I cannot tell one difference whatsoever.

But maybe it's my hardware: I'm on a 2080 Ti / 10900K @ 5.3 GHz with 32GB of DDR4 at 4400 MHz.

I wonder if nVidia is purposely blocking VRR from working on the LGs within its drivers. Is that possible? Forcing people to buy gsync branded products.

So the 3000 series can provide 4:4:4 at 120 Hz 4K, am I correct?

Looks like it!
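For anyone wondering why 4K 120 Hz 4:4:4 needs an HDMI 2.1 port at all, here is a rough back-of-the-envelope sketch in Python. It only counts active pixels and ignores blanking, so the results are a lower bound rather than exact link requirements. The function name and the payload constants are my own for illustration: 18 Gbps with 8b/10b coding for HDMI 2.0 and 48 Gbps with 16b/18b coding for HDMI 2.1.

```python
def active_video_gbps(width, height, refresh_hz, bits_per_component, components=3):
    """Lower-bound data rate in Gbps for uncompressed 4:4:4 video.

    Counts active pixels only (blanking is ignored), so a real link
    needs somewhat more than this figure.
    """
    bits_per_second = width * height * refresh_hz * bits_per_component * components
    return bits_per_second / 1e9


# Assumed usable payload after line coding overhead (illustrative constants).
HDMI_2_0_PAYLOAD_GBPS = 18.0 * 8 / 10    # 8b/10b TMDS coding -> 14.4
HDMI_2_1_PAYLOAD_GBPS = 48.0 * 16 / 18   # 16b/18b FRL coding -> about 42.67

if __name__ == "__main__":
    for bpc in (8, 10):
        rate = active_video_gbps(3840, 2160, 120, bpc)
        print(
            f"4K120 4:4:4 at {bpc} bpc needs at least {rate:.2f} Gbps; "
            f"exceeds HDMI 2.0 payload: {rate > HDMI_2_0_PAYLOAD_GBPS}; "
            f"within HDMI 2.1 payload: {rate < HDMI_2_1_PAYLOAD_GBPS}"
        )
```

Even the 8-bit lower bound is well above what an HDMI 2.0 link can carry, which is why the older cards fall back to chroma subsampling or a lower refresh rate at 4K.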

3080 reviews are up and... ~30% better than a 2080 Ti at 4K while having only a measly 10GB of VRAM is definitely lackluster. Can't wait for the big boy 3090.

Cat's out of the bag. Nvidia is pulling the wool over rubes' eyes.

They wanted to trick people into thinking that they lowered prices, but they actually raised them.

The 3090 is the "real" 3080. The 3080 is the real 3070, etc.

Last gen was $1,200 for a good high end part. Now it's $1,500 for a good high end part.

More snake oil. More deceptive dog shit.

Even though AMD is so incompetent, and these companies are so corrupt, with so much collusion going on that nothing would surprise me, I hope that AMD shocks everyone and actually blows Nvidia away this time. It needs to happen for the good of the market.

Personally I considered the 2000 series a "tick" and the 3000 series a big "TOCK". I skipped the 2000 series as I found it incremental and mediocre from my position (although I have 1080ti SLI not single card). The fact that the 2000 series was incremental compared to the 3000 series was even admitted to by Jensen Huang in his speech and his charts showing 1000, 2000, and 3000 series' performance.

"Every couple of generations, the stars align as it did with Pascal and we get a giant generational leap"

"Pascal was known as the perfect 10. Pascal was a huge success and set a very high bar."

"It took the "Super Family" of Turing to meaningfully exceed Pascal on game performances without ray tracing"

"With ray tracing turned on, Pascal, using programmable shaders to compute ray-triangle intersections, fell far behind Turing's RT core."

(However)

"Turing with ray tracing ON reached the same performance as pascal with raytracing OFF."

"So we doubled down on everything. Twice the shaders, twice the ray-tracing, and twice the Tensor Core. The triple double. Ampere knocks the daylights out of Pascal on ray tracing. And even with ray tracing on, crushes Pascal on frame rate. To all my Pascal gamer friends, it is safe to upgrade now".

I don't expect something faster than the 3090 because there really isn't room in the die, and I don't expect them to have a whole new die, so I think the 3090 is the Titan or the **80ti... but I do expect something with 20GB and possibly slightly higher clocks to come out between the 3080 and 3090. Whether that's called a "3080 Super" or 3080Ti, that doesn't mean "a 3080 is actually a 3070" if you start from a 3090 being a Titan (because there's not really room on the die above it for a Titan). You're forgetting about the Titan last gen that was $2500.

I'm not ruling out a 7nm TSMC variant Titan card.

Then there is the 5 nm rumor.

Well to be fair, $700 will still get you a good high end part in the 3080. It's just not going to be that massive of an upgrade for 2080Ti owners...hence the 3090. But for those like myself with a 1080Ti that skipped the 2080Ti, in some games the 3080 provides 2x the framerates. That's huge.

I hope they bring it for the sake of competition as well, but given their history of disappointment there I'm just not holding my breath. I miss when they were neck and neck, like in the X800XT/6800GT days. Now it seems as though they can only compete in the midrange. But, I'd be happy to see that change for everyone's sake.

If AMD's recent high performance units are any indication, even if they can make it performance competitive, it's going to be non-competitive in power draw and heat. Considering these new 3000 series GPUs are using 350W, that's a scary proposition.

And there it is. What a glorious year for PC gaming!

That was a C9 though.