erek · [H]F Junkie · Joined: Dec 19, 2005 · Messages: 10,875

opinion?


I don't care about looks... I care about performance/features...

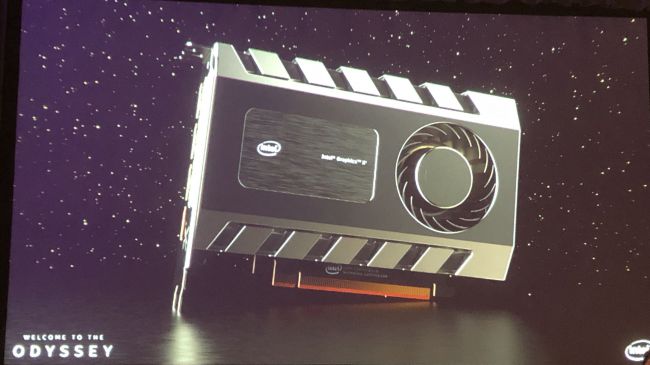

what about this?

Looks like the stunts you pull when you have no performance numbers

I'm excited to see them pushing it and not just leaving us with rumours in the shadows. It makes me feel like this might actually happen.

I'm not too worried about card size; we don't know anything about the design/price/performance. Even if it only competes in the $150-$200 segment, it's still a start. My memory could be failing, but wasn't their last attempt some super-scalable bundle of tiny cores? If the new design works that way, scaling up to a larger, better-performing PCB should be relatively simple.

Larrabee was a bunch of modified x86 Pentium (P54C) cores, AFAIR.

here's my Larrabee, heh

No drivers though, right?


Intel integrated GPUs aren't bad at all for what they are: an addition to CPUs that have only a tiny bit of die space and TDP allocated to them and no dedicated memory (in most processors).

Don't get your hopes up. Intel has been subsidizing its GPU architecture development through its CPU business for 10 years (now at the point of >2:1 die area in favor of) and, even with this massive default presence, it has been extremely unimpressive for anything but media acceleration.


Broadwell's iGPU was actually faster than AMD's APUs of the time.

If Intel continues its open-source friendliness, Intel Xe might actually be Linux's new best friend.

Been gaming on them for years; currently I'm experimenting with one on Linux through Wine.

It likely uses HBM, thus the size.

Based on the render, the card looks like $200 MSRP tops.


I dunno, I would like them to add 4" of extra blank PCB to make me feel like I'm getting value, right? If it's little, it has to be slow!!!

But on a more serious note, this is an actual problem for Intel; they need to let more marketing guys have their say.

The customer doesn't make logical decisions; he just knows that big card = high end = desirable, because that's the way it has been. After all, big mansions and yachts are yet to go out of style.

It's the same as when you sell a customer a digital code: it helps to give them a 0.0013-cent green plastic shell to go with it, which increases the perceived value of the item.

No, not big card = high end = desirable.

Heavy = high end = desirable!

I see Intel using HBM2 or something faster.

I also predict them adding a high-end option with an Optane add-on for memory in rendering, like the AMD Radeon Pro SSG, which offers 2 PCIe slots for an additional 1TB. If Intel can find a way to market more uses for Optane drives, they will.

Intel doesn't "just" want gamers, they want the whole enchilada.

They want render farms, professional workstations and gamers.

kind of reminds me of the R9 Nano

I recently saw an RTX 2070 FE IRL; the backplate was the sturdiest-looking backplate I've seen on any modern card. Very impressive; it looked like a really expensive product to me.

That perceived value thing really fucks with your mind. I was happy with the card before I plugged it in.

They do the same thing with how car doors close. Premium trim will have a distinctive sound and feel sturdy, while cheaper trim will sound echoey and feel like you're lucky it latched properly lol.

Marketing stunt at its best.

Much like the RGB fad... Some people (normal people, EDIT: "if I may say") are drawn to that eye candy since they don't understand the rest of the specifications anyway.


In order to install Ubuntu (and not get a black screen), I needed to swap in an old Nvidia card (750 Ti) to do the OS install and then load the closed-source Nvidia drivers before swapping the GTX 1080 back in.

I don't want to get too sidetracked; the point was that there is room for Intel to release a solid open-source driver that would be better than what's out there now from Nvidia (or, to a lesser extent, AMD).