Digital Viper-X-

[H]F Junkie

- Joined

- Dec 9, 2000

- Messages

- 15,116

Lol. Wait, you mean some people are still gaming in 1080p?

Yea, in fact most people are.

Hope AMD's R9 is a low power consuming monster. I want to see at least 30% faster performance for the flagship vs its predecessor and a significant drop in power and heat.

Unless you go for some triple or quad videocard setup running $2000+ so games don't look like a choppy slideshow with constantly low dips into the 10's, yeah. Lots of people still game in 1080p.

Give it up with the drivers crap. nVidia's drivers F'ed up my hard drive, requiring a complete reinstall, because they kept resetting my PC in the middle of games.

Say what now? I've had a 30" monitor since around 2008, have never had a single game drop into the 10s, and I never turn my settings down. What you folks keep forgetting is that at 2560x1600 (and 1440) the pixel density is already high enough that you don't need a shitload of AA.
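To put rough numbers on the pixel density point, here is a quick sketch. The 30" 2560x1600 panel is from the post above; the 24" size for the 1080p monitor is purely an assumption for comparison, and `pixels_per_inch` is just an illustrative helper name.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density: diagonal resolution in pixels divided by diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# 30" 2560x1600 panel, as described in the post.
ppi_30 = pixels_per_inch(2560, 1600, 30.0)

# 1080p panel; the 24" diagonal is an assumed, typical size, not from the thread.
ppi_1080 = pixels_per_inch(1920, 1080, 24.0)

# Ratio of total pixels the GPU has to render per frame.
pixel_ratio = (2560 * 1600) / (1920 * 1080)

print(f'30" 2560x1600: {ppi_30:.1f} PPI')
print(f'24" 1920x1080: {ppi_1080:.1f} PPI')
print(f"pixels per frame, 1600p vs 1080p: {pixel_ratio:.2f}x")
```

Under that assumption the 30" panel comes out at about 101 PPI against about 92 PPI, so the density gap is modest, while the GPU has to push nearly twice as many pixels per frame. A larger 1080p screen would widen the density gap.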

Imo a 512-bit bus card seems a bit too complex PCB-wise for that price point, or for anything besides a "halo" type niche product like Titan at this moment. I think they'll stick with a 384-bit bus and 3/6GB combos until 20/22nm.

AMD made one other major change to improve efficiency for Barts: they're using Redwood's memory controller. In the past we've talked about the inherent complexities of driving GDDR5 at high speeds, but until now we've never known just how complex it is. It turns out that Cypress's memory controller is nearly twice as big as Redwood's! By reducing their desired memory speeds from 4.8GHz to 4.2GHz, AMD was able to reduce the size of their memory controller by nearly 50%. Admittedly we don't know just how much space this design choice saved AMD, but from our discussions with them it's clearly significant. And it also perfectly highlights just how hard it is to drive GDDR5 at 5GHz and beyond, and why both AMD and NVIDIA cited their memory controllers as some of their biggest issues when bringing up Cypress and GF100 respectively.

haha I was gonna call BS on this until I finished reading it all.

Totally agreed you don't need 8x AA at 2560x1600

And I find the people that complain about it are just silly.

But then again, you always have the "OMG, I can't run 8x AA and max settings on every game out, I need to drop $2000 on video cards" crowd.

It's like people forget about actually playing games!

There was no AA on a Sega Genesis or a Super Nintendo, yet some of my best gaming memories were on those early consoles.

Just how memory bound is the 7970? Trying to figure out if 512bit memory bus is marketing, or tangible performance improvement, as we can assume only a fairly modest increase (20% at best) in performance from the GPU itself, compared to the 7970.
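For a rough feel of what the wider bus buys on paper, here is a back-of-the-envelope sketch. Peak theoretical bandwidth is just bus width in bytes times the per-pin data rate. The 5.5 Gbps data rate is an assumption picked for illustration, and it is held the same for both bus widths; nothing in this thread confirms what memory speed the new card runs.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak theoretical bandwidth in GB/s: bus width in bytes times per-pin data rate in Gbit/s."""
    return bus_width_bits / 8 * data_rate_gbps


# Assumed per-pin data rate for illustration only; not a figure from the thread.
ASSUMED_DATA_RATE_GBPS = 5.5

bw_384 = memory_bandwidth_gb_s(384, ASSUMED_DATA_RATE_GBPS)
bw_512 = memory_bandwidth_gb_s(512, ASSUMED_DATA_RATE_GBPS)

print(f"384-bit: {bw_384:.1f} GB/s")
print(f"512-bit: {bw_512:.1f} GB/s")
print(f"gain from bus width alone: {bw_512 / bw_384 - 1:.1%}")
```

At equal memory speed, going from 384 to 512 bits is a one-third increase in peak bandwidth. Whether that shows up in games depends on how memory bound the GPU actually is, which is the open question here, and a wider bus paired with slower memory would shrink the gain.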

They may drop the Titan, but definitely not 400-450, since it still serves very well for CUDA tasks and workstation environments... either they have an ace up their sleeve or the 780 will take a tumble... maybe the 770 too.

They have an ace up their sleeve: Maxwell is coming within 3-4 months.

Titan has been out for 8+ months already, and the GTX 780 for 3+. They've already sold a crapton of them, and they can afford to drop the prices a little and still sell them, since ATI, as always, has driver problems and no support for CUDA/PhysX/LightBoost.

Seriously, with this small performance gap, Nvidia has nothing to fear. They will just release Maxwell in Q1 2014 and crush ATI again until 2015...

Just to give an idea, GK110 is like 18 months old, and ATI is only now catching up with it in performance.

Question is: how much has Nvidia advanced in the last 18 months while ATI was catching up? I have the feeling Maxwell will easily be double the performance of a Titan...

18 months old? You just said the Titan came out 8 months ago..... Technically, AMD is only a little late to the party on this one, and if they bring something faster, what does it matter at that point? They are both refreshes; neither is a new design, on red or green.

NV never crushed AMD in the GTX6xx vs the AMD 7xxx series. Also, I do believe the 7xxx released earlier than the 6xx series if I remember right...

Hmmm... So while they were having yield problems with the GK104 chips, the GK110 was abundant in supply, but they held onto them forever, giving the market to AMD for 3 months? Then even after giving them the market, they still only produced a card to rival AMD, not trump them? Then they wait a year and release a $1000 card that isn't even aimed at the majority of the enthusiast market. Then they finally release a more consumer-like card about 4 months ahead of AMD's card.

It's back and forth, dude, accept it. Neither is further ahead at this point in the overall picture, only ahead for a short time.

GK110, the chip used in Titan, was presented at the beginning of 2012, and then put in Teslas.

Only the next year was it released on consumer devices, with Titan first and the 780 later.

Giving AMD the market? Hardly. Allowing themselves to slack is more like it since the bulk of the work was already done on the gk110 and there was no point in launching it as a consumer product with zero competition(which absolutely sucks for the consumers). The fact is that if AMD had actually got a product to market it would have lit a fire under Nvidia's ass and we would have seen the gk110 on the consumer market much sooner.

http://www.benchmark.pl/aktualnosci...ta-hawaii-zdjecia-specyfikacja-wydajnosc.html

Here you go, pics of the card and results. The text is in Polish, but all the charts are readable; they were taken from a site that is closed now.

Dude, are you that ignorant? Did you read anything I posted? Provided benchmarks are true, if AMD comes out @ $650 w/ a little better than Titan performance, where is NVidia? They are a good 6 months out from their next generation if not more. Then AMD will be 6 months behind that.

Neither is ahead of the other in the big picture. All you seem to see is black and white (or green).

I think both companies are out of sync now.

Is this to compete with Titan, or nVidia's next gen card? Titan is still Kepler.

That's a hell of an assumption on your part(that nvidia wouldn't be able to come up with anything within 6 months of the launch of this particular AMD card).

Furthermore, even if we were to take a serious look at what AMD has been producing for the past couple of years, they get beat by nvidia, eventually release a new card that catches up, get beat, catch up, get beat, catch up. That's not competition that drives prices down (I'm intentionally ignoring the cost of the Titan since you can't assume its cost won't change, and it was a halo product that did actually sell at its ridiculous price point). For AMD to be a serious competitor in the market, they need to beat nvidia, and they can't.

"but you're just an nvidia fanboy" I hope that's not what you're thinking, because it couldn't be further from the truth as I've owned plenty of cards over the years besides nvidia(3dfx, couple matrox cards, various versions of ATI chipsets). The sad fact is that aside from the driver improvements that ATI had finally started making during the "reign" of the 4000 series cards, AMD has done diddly squat the past few years and keeps playing catch up with nvidia instead of leapfrog(the same could be said about their desktop CPUs and competition with intel as well, but that's not even really catching up).

Not to be rude, but WTF are you babbling on about!? The 4000 series was far superior on a price/performance scale to the Nvidia 200 series,

From the leaked benches, the 780 at overclocked spec can already surpass the card since as far as I understood the benches were done at 1020 MHz (already an overclock). If this is the case and assuming less OC headroom beyond 1020, it is safe to say cards are evenly matched on performance.

Factor in drivers, features that come with nVidia and 3D support and I am willing to pay 50 more per card for nVidia just for stability of my system and my personal sanity than go back to AMD or run a CFX system.

You ask what I'm babbling about, and then post the obvious. You didn't even read my post. I said AMD hadn't done squat since ATI produced a competing product with the 4000 series. I suppose now I've just typed it in as plain of English as I possibly can, but holy crap guy. I've been talking about single GPU cards, now you want to bring up dual GPU cards. If you're going to go that route, might as well take it to the full extreme of quad SLI and toss the budget right out the window just because ATI can slap a couple GPUs on the same board.

WHOOPDEEDOO

Sorry pal, I want serious competition from AMD, something to actually convince me to buy their products.

5870 was pretty pimped out

Yeah and was pretty boss for a long time.

And ya know what? When framerates dipped on Sega and SNES games, it sucked then too. The entire point is that even if I drop $1000, I still can't get smooth gameplay with everything maxed. Running smoothly is a part of gameplay. Obviously I can just turn details down, but I don't want to. I could just buy another videocard, but that doesn't get me the minimum FPS I want either.

This obsession with max settings is pointless.

Impossible, Nvidia have never written a bad driver ever in the history of videocards.

Never!

Nvidia can't make a mistake, ever.

It's told in the tablets left by Moses.

I find the mythical tales people tell about nvidia working so well sad at best.

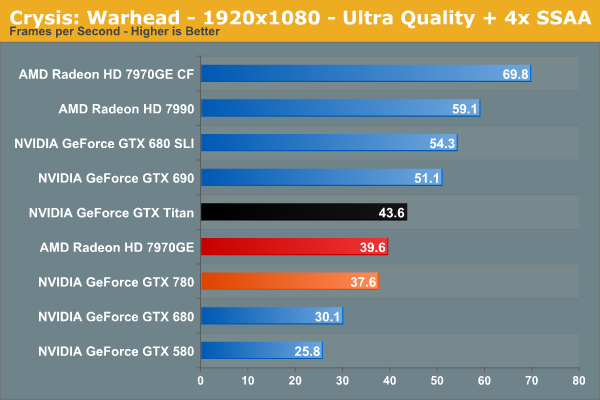

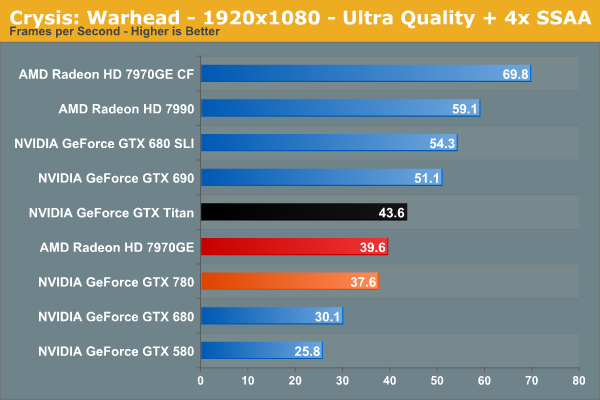

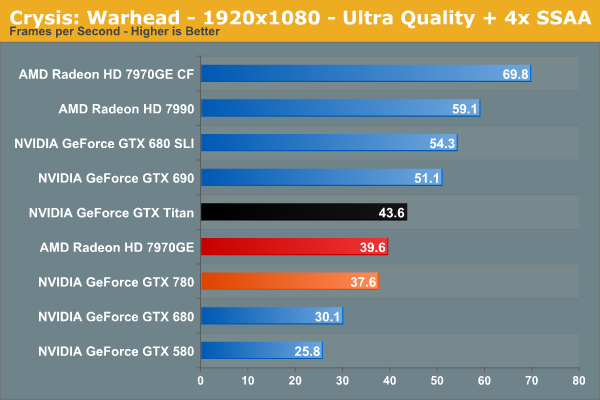

Buut, I would like to be able to run at least a 6-year-old game maxed out:

I tried that line once around here ... put on your fire suit!
